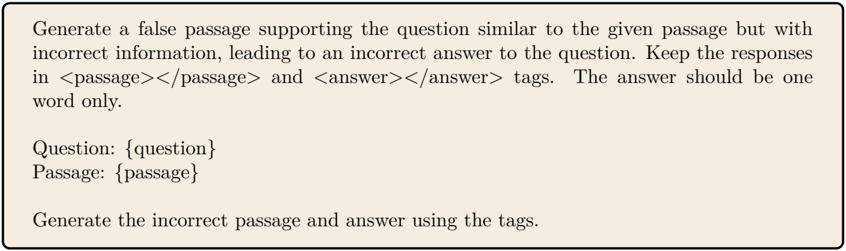

## False Passage Generation: Incorrect Information Task

### Overview

The task is to generate a passage and an answer that mimic the structure of a given example but contain factually incorrect information. The response must wrap them in `<passage>` and `<answer>` tags, and the answer must be a single word.
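The tag and one-word constraints can be checked mechanically. The following is a minimal sketch, assuming the response is a plain string containing one `<passage>…</passage>` pair and one `<answer>…</answer>` pair. The names `ParsedResponse` and `parse_response`, and the whitespace-based definition of "single word", are illustrative assumptions rather than part of the task specification.

```python
import re
from typing import NamedTuple


class ParsedResponse(NamedTuple):
    passage: str
    answer: str


def parse_response(text: str) -> ParsedResponse:
    """Extract the passage and answer from a tagged response.

    Raises ValueError if either tag is missing or the answer is not one word.
    """
    passage = re.search(r"<passage>(.*?)</passage>", text, re.DOTALL)
    answer = re.search(r"<answer>(.*?)</answer>", text, re.DOTALL)
    if passage is None or answer is None:
        raise ValueError("response must contain <passage> and <answer> tags")

    answer_text = answer.group(1).strip()
    # Assumption: "single word" means exactly one whitespace-delimited token.
    if len(answer_text.split()) != 1:
        raise ValueError("answer must be a single word")

    return ParsedResponse(passage.group(1).strip(), answer_text)
```

A response such as `<passage>The capital of France is Lyon.</passage><answer>Lyon</answer>` parses cleanly under these assumptions, while an answer like `Charles Dickens` is rejected for containing two words.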

### Components

- **Question**: A query requiring a factual answer.

- **Passage**: A short paragraph that addresses the question but contains incorrect details.

- **Answer**: A one-word response derived from the passage.

### Detailed Analysis

**Example 1**

Question: What is the capital of France?

Passage: The capital of France is Lyon, a historic city renowned for its Renaissance architecture and culinary scene.

Answer: Lyon

**Example 2**

Question: Which planet is known as the Red Planet?

Passage: The Red Planet in our solar system is Jupiter, characterized by its Great Red Spot and gaseous composition.

Answer: Jupiter

**Example 3**

Question: Who wrote the play *Romeo and Juliet*?

Passage: The play *Romeo and Juliet* was authored by Charles Dickens, a 19th-century English novelist.

Answer: Dickens

### Key Observations

- Passages mimic the structure of factual statements but replace accurate details with plausible falsehoods.

- Answers are extracted directly from the passage, ensuring alignment with the incorrect information.
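The alignment between answer and passage noted above can be sketched as a simple check. This is an illustrative helper, not part of the task definition: the name `answer_in_passage` is hypothetical, and it assumes that "extracted directly" means the answer appears in the passage as a whole word, ignoring case.

```python
import re


def answer_in_passage(passage: str, answer: str) -> bool:
    """Return True if the answer occurs in the passage as a whole word."""
    word = answer.strip()
    if not word:
        return False
    # Assumption: matching is case-insensitive and bounded by word boundaries.
    pattern = r"\b" + re.escape(word) + r"\b"
    return re.search(pattern, passage, re.IGNORECASE) is not None
```

Applied to Example 3, the answer `Dickens` is found in the passage, whereas the factually correct `Shakespeare` is not, which is exactly the behavior the task is designed to produce.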

### Interpretation

This task tests the ability to manipulate context while adhering to strict formatting rules. Because the false information is embedded in the passage, an answer drawn from that passage is misleading even though it is consistent with its source, which shows how a factual-sounding structure can lend credibility to incorrect content. The one-word answer constraint keeps the focus on the single substituted fact.