# Technical Document Extraction: SGLang Program Example

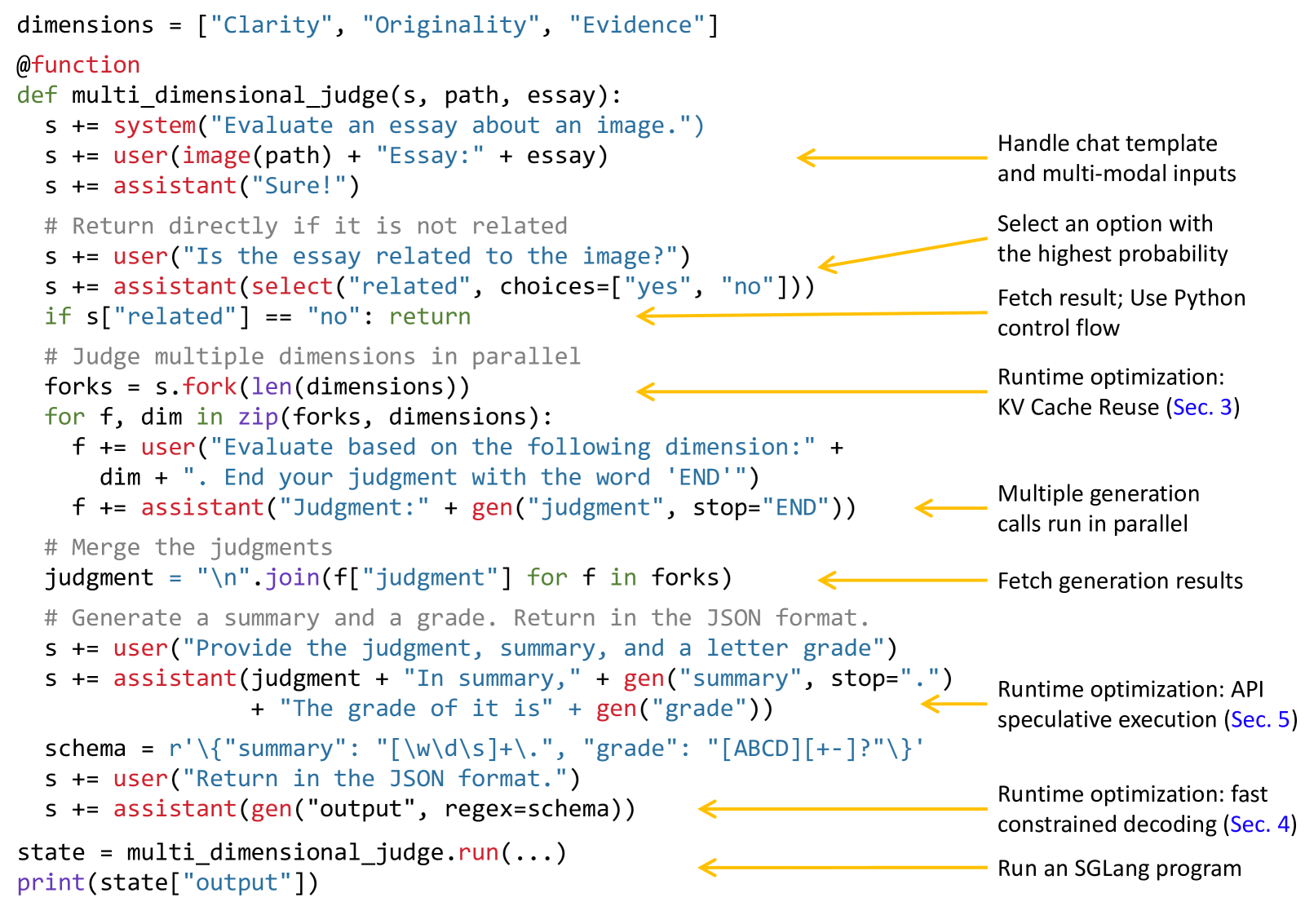

This image shows a code snippet written in SGLang, a domain-specific language embedded in Python for orchestrating Large Language Model (LLM) workflows, accompanied by explanatory annotations.

## 1. Code Transcription

```python
dimensions = ["Clarity", "Originality", "Evidence"]

@function
def multi_dimensional_judge(s, path, essay):
    s += system("Evaluate an essay about an image.")
    s += user(image(path) + "Essay: " + essay)
    s += assistant("Sure!")

    # Return directly if it is not related
    s += user("Is the essay related to the image?")
    s += assistant(select("related", choices=["yes", "no"]))
    if s["related"] == "no": return

    # Judge multiple dimensions in parallel
    forks = s.fork(len(dimensions))
    for f, dim in zip(forks, dimensions):
        f += user("Evaluate based on the following dimension: " +
                  dim + ". End your judgment with the word 'END'")
        f += assistant("Judgment: " + gen("judgment", stop="END"))

    # Merge the judgments
    judgment = "\n".join(f["judgment"] for f in forks)

    # Generate a summary and a grade. Return in the JSON format.
    s += user("Provide the judgment, summary, and a letter grade")
    s += assistant(judgment + "In summary," + gen("summary", stop=".")
                   + "The grade of it is" + gen("grade"))

    schema = r'\{"summary": "[\w\d\s]+\.", "grade": "[ABCD][+-]?"\}'
    s += user("Return in the JSON format.")
    s += assistant(gen("output", regex=schema))

state = multi_dimensional_judge.run(...)
print(state["output"])
```
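To make the `schema` pattern more concrete, the sketch below checks a few strings against it with Python's standard `re` module. The helper `matches_schema` and the sample strings are hypothetical and are not part of the image; they only illustrate which shapes the regex admits.

```python
import re

# Same pattern as `schema` in the transcription above.
schema = r'\{"summary": "[\w\d\s]+\.", "grade": "[ABCD][+-]?"\}'

def matches_schema(text):
    """Return True if the whole string conforms to the schema regex."""
    return re.fullmatch(schema, text) is not None

# Hypothetical model outputs, for illustration only.
ok = '{"summary": "The essay is clear and well supported.", "grade": "B+"}'
has_comma = '{"summary": "Clear, but thin on evidence.", "grade": "B"}'
bad_grade = '{"summary": "Clear and original.", "grade": "E"}'

results = {s: matches_schema(s) for s in (ok, has_comma, bad_grade)}
```

Only the first string matches. The summary character class allows word characters and whitespace but no commas, and the grade must be one of `A` to `D` with an optional `+` or `-`.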

## 2. Component Analysis and Annotations

The image uses yellow arrows to point from specific code blocks to descriptive text on the right side.

| Code Segment / Location | Annotation Text | Technical Significance |
| :--- | :--- | :--- |
| Initial `system`, `user`, and `assistant` calls | Handle chat template and multi-modal inputs | Demonstrates native support for images and structured chat roles. |
| `assistant(select("related", ...))` | Select an option with the highest probability | Shows constrained selection (classification) among fixed choices instead of free-form generation. |
| `if s["related"] == "no": return` | Fetch result; Use Python control flow | Highlights that LLM outputs can be read back and used in ordinary Python logic. |
| `forks = s.fork(...)` | Runtime optimization: KV Cache Reuse (Sec. 3) | Indicates that the forked states share the KV cache of their common prompt prefix. |
| `gen("judgment", ...)` inside loop | Multiple generation calls run in parallel | The independent generation calls on the forks execute in parallel. |
| `"\n".join(f["judgment"] for f in forks)` | Fetch generation results | Reads the result of each parallel fork and aggregates them for the main state. |
| `gen("summary")` and `gen("grade")` | Runtime optimization: API speculative execution (Sec. 5) | Suggests that, for API-based models, consecutive `gen` calls can be served speculatively so that fewer API requests are needed. |
| `gen("output", regex=schema)` | Runtime optimization: fast constrained decoding (Sec. 4) | A regular expression constrains decoding so that the output matches the JSON schema. |
| `multi_dimensional_judge.run(...)` | Run an SGLang program | The entry point for executing the defined workflow. |
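
The "Select an option with the highest probability" annotation can be pictured as an argmax over per-choice scores. The following is a simplified sketch under that assumption: `select_choice` and the score table are hypothetical stand-ins for model log-probabilities, and the actual SGLang runtime may score choices differently (for example, by normalizing over token length).

```python
import math

def select_choice(choice_logprobs):
    """Return the choice whose log-probability is highest."""
    return max(choice_logprobs, key=choice_logprobs.get)

# Hypothetical log-probabilities a model might assign to each choice.
scores = {"yes": math.log(0.8), "no": math.log(0.2)}

related = select_choice(scores)
if related == "no":
    print("Essay is unrelated; stop early.")
```

Because the result is an ordinary Python string, it can drive normal control flow, which is what the `if s["related"] == "no": return` line in the transcription relies on.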

## 3. Workflow Summary

The program defines a multi-modal evaluation pipeline:

1. **Initialization:** Sets up a system prompt and inputs an image and an essay.

2. **Relevance Check:** Uses a `select` call to determine if the essay matches the image; exits early if not.

3. **Parallel Evaluation:** Forks the state to evaluate three dimensions ("Clarity", "Originality", "Evidence") simultaneously.

4. **Aggregation:** Joins the parallel judgments into a single string.

5. **Structured Output:** Generates a summary and a grade, then uses a regex schema to ensure the final output is a strictly formatted JSON object.
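
The control flow of steps 2 to 4 can be sketched in plain Python without SGLang. This is an illustrative analogy only: `fake_llm` is a hypothetical stub that returns canned text, and a thread pool stands in for the fork mechanism, so it shows the fork-and-join shape of the program but none of the runtime optimizations.

```python
from concurrent.futures import ThreadPoolExecutor

DIMENSIONS = ["Clarity", "Originality", "Evidence"]

def fake_llm(prompt):
    """Hypothetical stand-in for a model call; returns canned text."""
    if "related to the image" in prompt:
        return "yes"
    # Echo the last line of the prompt so each judgment is distinguishable.
    return "Judgment for: " + prompt.splitlines()[-1]

def judge(essay, llm=fake_llm):
    prefix = "Essay: " + essay + "\n"

    # Relevance check with early exit.
    if llm(prefix + "Is the essay related to the image?") == "no":
        return None

    # Evaluate every dimension concurrently, one task per dimension.
    prompts = [
        prefix + "Evaluate based on the following dimension: " + dim
        for dim in DIMENSIONS
    ]
    with ThreadPoolExecutor(max_workers=len(prompts)) as pool:
        judgments = list(pool.map(llm, prompts))  # map preserves input order

    # Join the parallel judgments into a single string.
    return "\n".join(judgments)

result = judge("An essay about a photo.")
```

Passing a different `llm` callable, such as `lambda prompt: "no"`, exercises the early-exit path, in which `judge` returns `None` before any dimension is evaluated.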