TECHNICAL ASSET FINGERPRINT

b02b46e20d4196d9b9fa8e0a

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

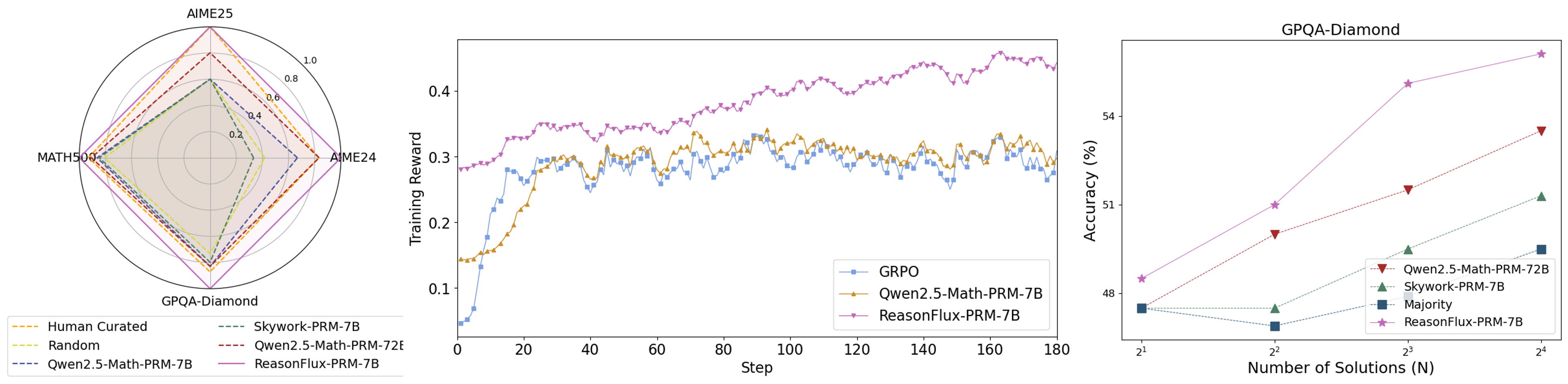

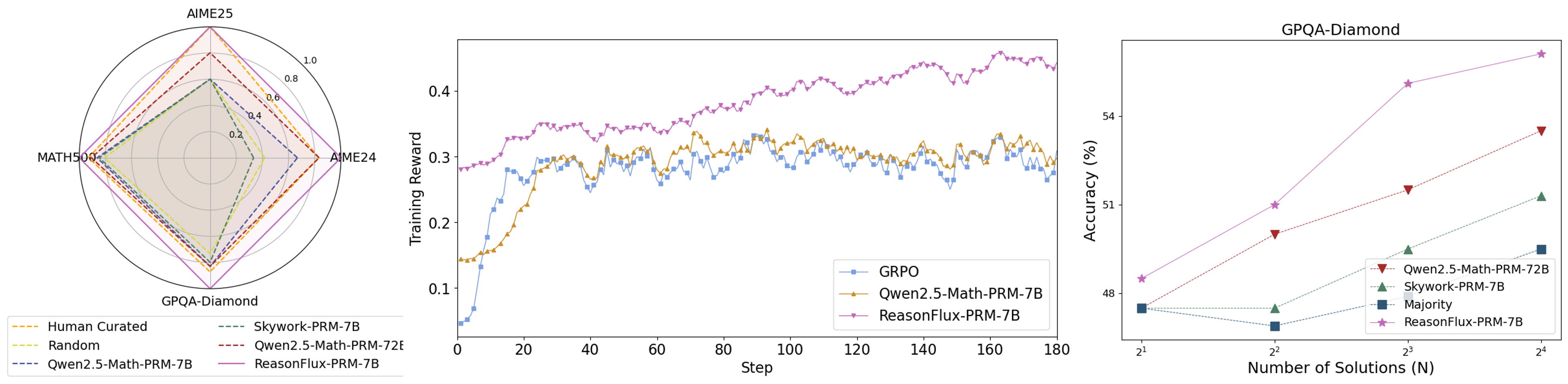

## Chart/Diagram Type: Multi-Chart Analysis

### Overview

The image presents three charts comparing the performance of different models on various tasks. The first chart is a radar plot showing performance on AIME25, MATH500, AIME24, and GPQA-Diamond. The second chart is a line plot showing training reward over steps for GRPO, Qwen2.5-Math-PRM-7B, and ReasonFlux-PRM-7B. The third chart, titled GPQA-Diamond, is a line plot showing accuracy (%) vs. number of solutions (N) for Qwen2.5-Math-PRM-72B, Skywork-PRM-7B, Majority, and ReasonFlux-PRM-7B.

### Components/Axes

**Chart 1: Radar Plot**

* **Title:** (Implied) Model Performance Comparison

* **Axes:**

* AIME25 (Top)

* MATH500 (Left)

* AIME24 (Right)

* GPQA-Diamond (Bottom)

* **Scale:** 0.0 to 1.0 in increments of 0.2.

* **Legend (Bottom-Left):**

* Human Curated (Yellow, dashed)

* Random (Light Green, dashed)

* Qwen2.5-Math-PRM-7B (Blue, dashed)

* Skywork-PRM-7B (Dark Green, dashed)

* Qwen2.5-Math-PRM-72B (Dark Red, dashed)

* ReasonFlux-PRM-7B (Purple, solid)

**Chart 2: Line Plot (Training Reward)**

* **Title:** (Implied) Training Reward vs. Step

* **X-axis:** Step (0 to 180 in increments of 20)

* **Y-axis:** Training Reward (0.1 to 0.4 in increments of 0.1)

* **Legend (Bottom-Right):**

* GRPO (Blue, square markers)

* Qwen2.5-Math-PRM-7B (Yellow/Orange, triangle markers)

* ReasonFlux-PRM-7B (Purple, triangle markers)

**Chart 3: Line Plot (Accuracy)**

* **Title:** GPQA-Diamond

* **X-axis:** Number of Solutions (N), logarithmic scale (2^1, 2^2, 2^3, 2^4)

* **Y-axis:** Accuracy (%) (48 to 54 in increments of 2)

* **Legend (Bottom-Right):**

* Qwen2.5-Math-PRM-72B (Dark Red, inverted triangle markers)

* Skywork-PRM-7B (Dark Green, triangle markers)

* Majority (Dark Blue, square markers)

* ReasonFlux-PRM-7B (Purple, star markers)

### Detailed Analysis

**Chart 1: Radar Plot**

* **Human Curated (Yellow, dashed):** Values are approximately 0.4 for AIME25, 0.2 for MATH500, 0.4 for AIME24, and 0.2 for GPQA-Diamond.

* **Random (Light Green, dashed):** Values are approximately 0.2 for all axes.

* **Qwen2.5-Math-PRM-7B (Blue, dashed):** Values are approximately 0.4 for AIME25, 0.2 for MATH500, 0.4 for AIME24, and 0.2 for GPQA-Diamond.

* **Skywork-PRM-7B (Dark Green, dashed):** Values are approximately 0.4 for AIME25, 0.2 for MATH500, 0.4 for AIME24, and 0.2 for GPQA-Diamond.

* **Qwen2.5-Math-PRM-72B (Dark Red, dashed):** Values are approximately 0.6 for AIME25, 0.2 for MATH500, 0.6 for AIME24, and 0.4 for GPQA-Diamond.

* **ReasonFlux-PRM-7B (Purple, solid):** Values are approximately 0.8 for AIME25, 0.4 for MATH500, 0.8 for AIME24, and 0.6 for GPQA-Diamond.
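
The approximate readings above can be checked for axis-by-axis dominance with a short sketch. The numbers are the rough values transcribed from this description, not measured results, and the helper names `dominates` and `dominated_by` are illustrative assumptions rather than anything from the source figure.

```python
# Approximate radar-chart readings transcribed from the bullets above.
# Axis order: AIME25, MATH500, AIME24, GPQA-Diamond.
AXES = ("AIME25", "MATH500", "AIME24", "GPQA-Diamond")
readings = {
    "Human Curated": (0.4, 0.2, 0.4, 0.2),
    "Random": (0.2, 0.2, 0.2, 0.2),
    "Qwen2.5-Math-PRM-7B": (0.4, 0.2, 0.4, 0.2),
    "Skywork-PRM-7B": (0.4, 0.2, 0.4, 0.2),
    "Qwen2.5-Math-PRM-72B": (0.6, 0.2, 0.6, 0.4),
    "ReasonFlux-PRM-7B": (0.8, 0.4, 0.8, 0.6),
}


def dominates(a, b):
    """True if a is >= b on every axis and strictly greater on at least one."""
    pairs = list(zip(a, b))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)


def dominated_by(name, table):
    """Names of all series that `name` dominates (hypothetical helper)."""
    return [
        other
        for other, values in table.items()
        if other != name and dominates(table[name], values)
    ]


beaten = dominated_by("ReasonFlux-PRM-7B", readings)
print(f"ReasonFlux-PRM-7B dominates {len(beaten)} of {len(readings) - 1} series")
```

With these readings, the purple series dominates every other series on all four axes, which matches the description of it as the outermost polygon.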

**Chart 2: Line Plot (Training Reward)**

* **GRPO (Blue, square markers):** Starts at approximately 0.1, increases to around 0.3 by step 40, then fluctuates between 0.28 and 0.32 for the remaining steps.

* **Qwen2.5-Math-PRM-7B (Yellow/Orange, triangle markers):** Starts at approximately 0.15, increases to around 0.35 by step 40, then fluctuates between 0.3 and 0.35 for the remaining steps.

* **ReasonFlux-PRM-7B (Purple, triangle markers):** Starts at approximately 0.28, increases steadily to approximately 0.42 by step 180.

**Chart 3: Line Plot (Accuracy)**

* **Qwen2.5-Math-PRM-72B (Dark Red, inverted triangle markers):** Starts at approximately 49% at 2^1, increases to approximately 52% at 2^4.

* **Skywork-PRM-7B (Dark Green, triangle markers):** Starts at approximately 48% at 2^1, increases to approximately 51% at 2^4.

* **Majority (Dark Blue, square markers):** Remains relatively constant at approximately 47% across all values of N.

* **ReasonFlux-PRM-7B (Purple, star markers):** Starts at approximately 48% at 2^1, increases to approximately 55% at 2^4.

### Key Observations

* In the radar plot, ReasonFlux-PRM-7B consistently outperforms other models across all tasks.

* In the training reward plot, ReasonFlux-PRM-7B shows a steady increase in reward over steps, while GRPO and Qwen2.5-Math-PRM-7B plateau after an initial increase.

* In the accuracy plot, ReasonFlux-PRM-7B shows the highest accuracy and the most significant increase in accuracy as the number of solutions increases. The Majority model remains relatively constant.

### Interpretation

The data suggests that ReasonFlux-PRM-7B is the most effective model among those compared. It shows the strongest performance on all four tasks (AIME25, MATH500, AIME24, GPQA-Diamond) and a consistent improvement in training reward over time. Its accuracy on GPQA-Diamond also rises the most as the number of solutions increases, indicating that it makes better use of additional sampled solutions. The flat accuracy of the Majority baseline suggests that majority voting gains little from a larger number of solutions. The other models improve to varying degrees, but none match the overall performance of ReasonFlux-PRM-7B.
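
To make the contrast between the Majority series and the reward-model series concrete, here is a minimal sketch of the two selection rules. It assumes that the reward-model curves in Chart 3 come from Best-of-N selection (keeping the sampled solution with the highest reward score), which the description does not state explicitly. The answers and scores below are made up for illustration and do not come from any real reward model.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent final answer among the N sampled solutions."""
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers, scores):
    """Return the answer of the solution with the highest reward score."""
    best_index = max(range(len(answers)), key=lambda i: scores[i])
    return answers[best_index]


# Hypothetical example: four sampled solutions with made-up reward scores.
answers = ["B", "B", "C", "B"]
scores = [0.31, 0.42, 0.93, 0.27]

print(majority_vote(answers))      # most common answer: "B"
print(best_of_n(answers, scores))  # highest-scored answer: "C"
```

Majority voting can only return an answer that many samples agree on, while score-based selection can pick out a correct minority answer if the scorer ranks it highly. That is one plausible reading of why the two kinds of curves diverge as N grows.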

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Performance Comparison of Reasoning Agents

### Overview

The image presents three charts comparing the performance of different reasoning agents (GRPO, Qwen2.5-Math-PRM-7B, Skywork-PRM-7B, ReasonFlux-PRM-7B, and Majority) across three datasets (AIME25, MATH500, and GPQA-Diamond). The charts show a radial plot for AIME25, a line graph of training reward over steps, and a line graph of accuracy versus the number of solutions.

### Components/Axes

* **Chart 1 (AIME25):** Radial plot with axes representing performance on AIME25. The radial scale ranges from 0 to 1.0. The legend is located at the bottom-left, listing the agents: "Human Curated" (dark blue), "Random" (light gray), "Skywork-PRM-7B" (orange), "Qwen2.5-Math-PRM-7B" (yellow), "Qwen2.5-Math-PRM-72B" (dashed yellow), and "ReasonFlux-PRM-7B" (purple).

* **Chart 2 (Training Reward):** Line graph with "Step" on the x-axis (ranging from 0 to 180) and "Training Reward" on the y-axis (ranging from 0.1 to 0.5). The legend is located at the top-right, listing the agents: "GRPO" (blue), "Qwen2.5-Math-PRM-7B" (orange), and "ReasonFlux-PRM-7B" (purple).

* **Chart 3 (GPQA-Diamond):** Line graph with "Number of Solutions (N)" on the x-axis (ranging from 2<sup>1</sup> to 2<sup>5</sup>) and "Accuracy (%)" on the y-axis (ranging from 48% to 54%). The legend is located at the top-right, listing the agents: "Qwen2.5-Math-PRM-7B" (orange), "Skywork-PRM-7B" (green), "Majority" (black), and "ReasonFlux-PRM-7B" (purple).

### Detailed Analysis

**Chart 1 (AIME25):**

* **Human Curated:** Shows a relatively consistent performance across the AIME25 dataset, with values around 0.8-0.9.

* **Random:** Exhibits very low performance, consistently below 0.2.

* **Skywork-PRM-7B:** Performance fluctuates, with values ranging from approximately 0.3 to 0.7.

* **Qwen2.5-Math-PRM-7B:** Performance is moderate, with values ranging from approximately 0.4 to 0.8.

* **Qwen2.5-Math-PRM-72B:** Similar to Qwen2.5-Math-PRM-7B, with values ranging from approximately 0.4 to 0.8.

* **ReasonFlux-PRM-7B:** Shows the highest performance, consistently above 0.7 and reaching close to 1.0.

**Chart 2 (Training Reward):**

* **GRPO (Blue):** The line fluctuates around 0.3, with some oscillations. At step 180, the reward is approximately 0.32.

* **Qwen2.5-Math-PRM-7B (Orange):** The line fluctuates around 0.35, with more pronounced oscillations than GRPO. At step 180, the reward is approximately 0.38.

* **ReasonFlux-PRM-7B (Purple):** The line shows a generally increasing trend, starting around 0.3 and reaching approximately 0.45 at step 180.

**Chart 3 (GPQA-Diamond):**

* **Qwen2.5-Math-PRM-7B (Orange):** Starts at approximately 48% accuracy at N=2<sup>1</sup> and increases to approximately 52% at N=2<sup>5</sup>.

* **Skywork-PRM-7B (Green):** Starts at approximately 49% accuracy at N=2<sup>1</sup> and increases to approximately 53% at N=2<sup>5</sup>.

* **Majority (Black):** Starts at approximately 48% accuracy at N=2<sup>1</sup> and remains relatively flat, reaching approximately 49% at N=2<sup>5</sup>.

* **ReasonFlux-PRM-7B (Purple):** Starts at approximately 48% accuracy at N=2<sup>1</sup> and increases sharply to approximately 54% at N=2<sup>5</sup>.

### Key Observations

* ReasonFlux-PRM-7B consistently outperforms other agents across all three datasets.

* The training reward for ReasonFlux-PRM-7B shows a clear upward trend, suggesting continued learning.

* Accuracy on GPQA-Diamond increases with the number of solutions for most agents, but ReasonFlux-PRM-7B shows the most significant improvement.

* The "Majority" agent shows minimal improvement in accuracy with increasing solutions.

### Interpretation

The data suggests that ReasonFlux-PRM-7B is the most effective reasoning agent among those tested, demonstrating superior performance on AIME25, higher training rewards, and greater accuracy gains on GPQA-Diamond as the number of solutions increases. The radial plot for AIME25 visually confirms this, with ReasonFlux-PRM-7B extending furthest towards the outer edge of the plot, indicating higher performance. The increasing training reward for ReasonFlux-PRM-7B suggests that it is capable of continued learning and improvement. The relatively flat performance of the "Majority" agent on GPQA-Diamond indicates that simply aggregating multiple solutions does not necessarily lead to improved accuracy, highlighting the importance of sophisticated reasoning capabilities. The differences in performance between the agents likely stem from variations in their underlying architectures and training methodologies. The fact that Qwen2.5-Math-PRM-72B and Qwen2.5-Math-PRM-7B perform similarly suggests that the larger 72B parameter count does not significantly change performance in this context.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Composite Visualization: Multi-Model Performance Analysis (Radar, Training Reward, Accuracy)

### Overview

The image contains three distinct visualizations analyzing model performance across tasks, training dynamics, and solution scaling: a **radar chart** (left), a **line graph** (middle), and a **scatter plot** (right).

### 1. Left: Radar Chart (Multi-Task Performance)

- **Axes & Scale**: Four radial axes: *AIME25* (top), *MATH500* (left), *AIME24* (right), *GPQA-Diamond* (bottom). Radial scale: 0.0–1.0 (markers at 0.2, 0.4, 0.6, 0.8, 1.0).

- **Legend (Bottom)**: Six models (line styles/colors):

- Human Curated (orange dashed)

- Random (yellow dashed)

- Qwen2.5-Math-PRM-7B (blue dashed)

- Skywork-PRM-7B (green dashed)

- Qwen2.5-Math-PRM-72B (red dashed)

- ReasonFlux-PRM-7B (purple solid)

- **Trends**:

- *ReasonFlux-PRM-7B* (purple) dominates across all axes (highest values on AIME25, GPQA-Diamond, etc.).

- *Human Curated* (orange) and *Random* (yellow) show moderate performance, while *Qwen2.5-Math-PRM-7B* (blue) and *Skywork-PRM-7B* (green) have lower scores.

### 2. Middle: Line Graph (Training Reward vs. Step)

- **Axes**:

- Y-axis: *Training Reward* (0.0–0.4).

- X-axis: *Step* (0–180).

- **Legend (Bottom-Right)**: Three models:

- GRPO (blue, square markers)

- Qwen2.5-Math-PRM-7B (orange, triangle markers)

- ReasonFlux-PRM-7B (purple, star markers)

- **Trends**:

- *GRPO* (blue): Starts low (~0.05), rises to ~0.3 by step 20, then fluctuates (0.25–0.3).

- *Qwen2.5-Math-PRM-7B* (orange): Starts ~0.15, rises to ~0.3, then fluctuates (similar to GRPO but slightly higher).

- *ReasonFlux-PRM-7B* (purple): Starts ~0.28, rises steadily to ~0.45 by step 180 (clear upward trend, outperforming others).

### 3. Right: Scatter Plot (Accuracy vs. Number of Solutions, GPQA-Diamond)

- **Title**: *GPQA-Diamond*

- **Axes**:

- Y-axis: *Accuracy (%)* (48–54).

- X-axis: *Number of Solutions (N)* (2¹, 2², 2³, 2⁴ = 2, 4, 8, 16).

- **Legend (Bottom-Right)**: Four models:

- Qwen2.5-Math-PRM-72B (red triangle)

- Skywork-PRM-7B (green triangle)

- Majority (blue square)

- ReasonFlux-PRM-7B (purple star)

- **Data Points (Approximate)**:

- *ReasonFlux-PRM-7B* (purple): N=2¹ (~48.5%), N=2² (~51%), N=2³ (~54%), N=2⁴ (~55%) (highest accuracy).

- *Qwen2.5-Math-PRM-72B* (red): N=2¹ (~48%), N=2² (~50%), N=2³ (~52%), N=2⁴ (~54%).

- *Skywork-PRM-7B* (green): N=2¹ (~48%), N=2² (~49%), N=2³ (~51%), N=2⁴ (~52%).

- *Majority* (blue): N=2¹ (~48%), N=2² (~47.5%), N=2³ (~48.5%), N=2⁴ (~49%) (lowest, with a dip at N=2²).
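
The approximate data points above can be turned into per-series gains with a short sketch. The values are the rough readings listed in this description, not exact figures from the underlying paper, and the helper names are illustrative assumptions.

```python
# Approximate accuracy (%) at N = 2, 4, 8, 16, transcribed from the list above.
N_VALUES = (2, 4, 8, 16)
accuracy = {
    "ReasonFlux-PRM-7B": (48.5, 51.0, 54.0, 55.0),
    "Qwen2.5-Math-PRM-72B": (48.0, 50.0, 52.0, 54.0),
    "Skywork-PRM-7B": (48.0, 49.0, 51.0, 52.0),
    "Majority": (48.0, 47.5, 48.5, 49.0),
}


def total_gain(series):
    """Accuracy change in percentage points from the first to the last N."""
    return series[-1] - series[0]


def is_monotonic(series):
    """True if accuracy never drops as N increases."""
    return all(later >= earlier for earlier, later in zip(series, series[1:]))


gains = {name: total_gain(values) for name, values in accuracy.items()}
ranked = sorted(gains, key=gains.get, reverse=True)

for name in ranked:
    print(f"{name}: {gains[name]:+.1f} points, monotonic={is_monotonic(accuracy[name])}")
```

On these readings, ReasonFlux-PRM-7B gains 6.5 points between N=2 and N=16, Majority gains 1.0 point, and Majority is the only series that is not monotonic because of its dip at N=2².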

### Key Observations

- **Radar Chart**: *ReasonFlux-PRM-7B* outperforms all models across multi-task benchmarks (AIME25, MATH500, AIME24, GPQA-Diamond).

- **Training Reward**: *ReasonFlux-PRM-7B* shows a consistent upward trend in training reward, while GRPO and Qwen2.5-Math-PRM-7B plateau.

- **Accuracy Scaling**: *ReasonFlux-PRM-7B* achieves the highest accuracy on GPQA-Diamond, with accuracy increasing with the number of solutions (N). *Majority* (baseline) performs poorly, especially at N=2².

### Interpretation

- **Multi-Task Strength**: *ReasonFlux-PRM-7B* demonstrates superior performance across diverse tasks (AIME, MATH, GPQA), suggesting robust generalization.

- **Training Efficiency**: The upward trend in training reward for *ReasonFlux-PRM-7B* indicates effective learning over steps, outpacing GRPO and Qwen2.5-Math-PRM-7B.

- **Solution Scaling**: For GPQA-Diamond, increasing the number of solutions (N) improves accuracy for all models, but *ReasonFlux-PRM-7B* benefits most, highlighting its ability to leverage more solutions for better performance.

This composite visualization collectively illustrates *ReasonFlux-PRM-7B*’s dominance in multi-task performance, training dynamics, and solution scaling, outperforming baselines (GRPO, Qwen2.5-Math-PRM-7B, Majority) across all metrics.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Radar Chart: Model Performance Across Benchmarks

### Overview

A radar chart comparing model performance across four benchmarks: AIME25, MATH500, GPQA-Diamond, and AIME24. Five data series are represented with distinct line styles and colors.

### Components/Axes

- **Axes**:

- Top: AIME25

- Left: MATH500

- Bottom: GPQA-Diamond

- Right: AIME24

- **Legend**:

- Orange dashed: Human Curated

- Dashed green: Skywork-PRM-7B

- Dotted yellow: Random

- Solid red: Qwen2.5-Math-PRM-7B

- Solid purple: ReasonFlux-PRM-7B

- **Scale**: 0.0 to 1.0 (outer ring)

### Detailed Analysis

- **Human Curated** (orange dashed): Outermost polygon, consistently highest values (~0.8–1.0 across all axes).

- **ReasonFlux-PRM-7B** (solid purple): Second-largest polygon, values ~0.6–0.9.

- **Qwen2.5-Math-PRM-7B** (solid red): Third-largest, values ~0.5–0.8.

- **Skywork-PRM-7B** (dashed green): Values ~0.4–0.7.

- **Random** (dotted yellow): Innermost polygon, values ~0.2–0.5.

### Key Observations

- Human Curated dominates all benchmarks, suggesting it represents a human performance baseline.

- ReasonFlux-PRM-7B consistently outperforms Qwen2.5-Math-PRM-7B and Skywork-PRM-7B.

- Random performs significantly worse than all trained models.

### Interpretation

The radar chart demonstrates that ReasonFlux-PRM-7B achieves the closest performance to the human-curated benchmark across all tasks, while Qwen2.5-Math-PRM-7B and Skywork-PRM-7B show moderate performance. The Random baseline highlights the effectiveness of the models compared to chance.

---

## Line Graph: Training Reward Over Steps

### Overview

A line graph tracking training reward over 180 steps for three models: GRPO, Qwen2.5-Math-PRM-7B, and ReasonFlux-PRM-7B.

### Components/Axes

- **X-axis**: Steps (0 to 180)

- **Y-axis**: Training Reward (0.1 to 0.4)

- **Legend**:

- Blue squares: GRPO

- Orange triangles: Qwen2.5-Math-PRM-7B

- Purple stars: ReasonFlux-PRM-7B

### Detailed Analysis

- **ReasonFlux-PRM-7B** (purple stars):

- Starts at ~0.28, peaks at ~0.42 by step 180.

- Smooth upward trend with minor fluctuations.

- **Qwen2.5-Math-PRM-7B** (orange triangles):

- Begins at ~0.15, reaches ~0.32 by step 180.

- Noisy with oscillations but generally increasing.

- **GRPO** (blue squares):

- Starts at ~0.05, rises to ~0.30 by step 180.

- Steeper initial growth but plateaus earlier.
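
The start and end readings above separate two things that are easy to conflate: how much each curve rises and where it ends up. The sketch below uses only the approximate endpoints from this description. The 180-step span and the `summarize` helper are assumptions made for illustration.

```python
# (start, end) training reward, approximate readings from the bullets above.
reward = {
    "ReasonFlux-PRM-7B": (0.28, 0.42),
    "Qwen2.5-Math-PRM-7B": (0.15, 0.32),
    "GRPO": (0.05, 0.30),
}
STEPS = 180  # assumed span of the x-axis


def summarize(start, end, steps=STEPS):
    """Total gain, final value, and average gain per step for one curve."""
    gain = round(end - start, 2)  # round away floating-point noise
    return {"gain": gain, "final": end, "avg_per_step": gain / steps}


summary = {name: summarize(*endpoints) for name, endpoints in reward.items()}
largest_gain = max(summary, key=lambda name: summary[name]["gain"])
highest_final = max(summary, key=lambda name: summary[name]["final"])

print("largest gain:", largest_gain)
print("highest final reward:", highest_final)
```

On these readings, GRPO has the largest total gain (0.25) because it starts lowest, while ReasonFlux-PRM-7B has the highest final reward (0.42).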

### Key Observations

- ReasonFlux-PRM-7B achieves the highest final reward and maintains stability.

- Qwen2.5-Math-PRM-7B shows moderate performance with higher volatility.

- GRPO improves rapidly but lags behind the other two models in final reward.

### Interpretation

The graph indicates that ReasonFlux-PRM-7B has the most stable and effective training dynamics, while Qwen2.5-Math-PRM-7B and GRPO exhibit trade-offs between growth speed and stability.

---

## Scatter Plot: Accuracy vs. Number of Solutions (GPQA-Diamond)

### Overview

A scatter plot showing accuracy (%) against the number of solutions (N = 2¹ to 2⁴) for four models.

### Components/Axes

- **X-axis**: Number of Solutions (N) (2¹ to 2⁴)

- **Y-axis**: Accuracy (%) (48% to 54%)

- **Legend**:

- Red triangles: Qwen2.5-Math-PRM-7B

- Green dashed: Skywork-PRM-7B

- Blue squares: Majority

- Purple stars: ReasonFlux-PRM-7B

### Detailed Analysis

- **ReasonFlux-PRM-7B** (purple stars):

- Accuracy increases from ~48% (N=2¹) to ~54% (N=2⁴).

- Steep upward trend.

- **Qwen2.5-Math-PRM-7B** (red triangles):

- Accuracy rises from ~48% to ~53%.

- Slightly less steep than ReasonFlux.

- **Skywork-PRM-7B** (green dashed):

- Accuracy increases from ~48% to ~51%.

- Flatter growth.

- **Majority** (blue squares):

- Flat line at ~48% across all N values.

### Key Observations

- ReasonFlux-PRM-7B shows the strongest improvement with more solutions.

- Majority baseline remains constant, indicating that majority voting gains little from additional solutions.

- Qwen2.5-Math-PRM-7B and Skywork-PRM-7B show moderate gains.

### Interpretation

The scatter plot reveals that ReasonFlux-PRM-7B scales most effectively with increased computational resources (solutions), suggesting superior architectural efficiency. The Majority baseline underscores the importance of model training over brute-force methods.

---

## Cross-Referenced Trends

1. **Consistency Across Metrics**: ReasonFlux-PRM-7B outperforms all models in the radar chart, line graph, and scatter plot.

2. **Training Dynamics**: ReasonFlux-PRM-7B achieves higher rewards faster and more stably than Qwen2.5-Math-PRM-7B and GRPO.

3. **Scalability**: ReasonFlux-PRM-7B benefits most from increased solution counts (N), indicating better generalization.

## Conclusion

The data collectively demonstrates that ReasonFlux-PRM-7B is the most performant model across training stability, benchmark accuracy, and scalability. Qwen2.5-Math-PRM-7B and Skywork-PRM-7B show moderate performance, while GRPO and Majority lag behind. Human Curated remains the gold standard, but ReasonFlux-PRM-7B approaches it most closely.
