## Diagram: Attention Mechanism

### Overview

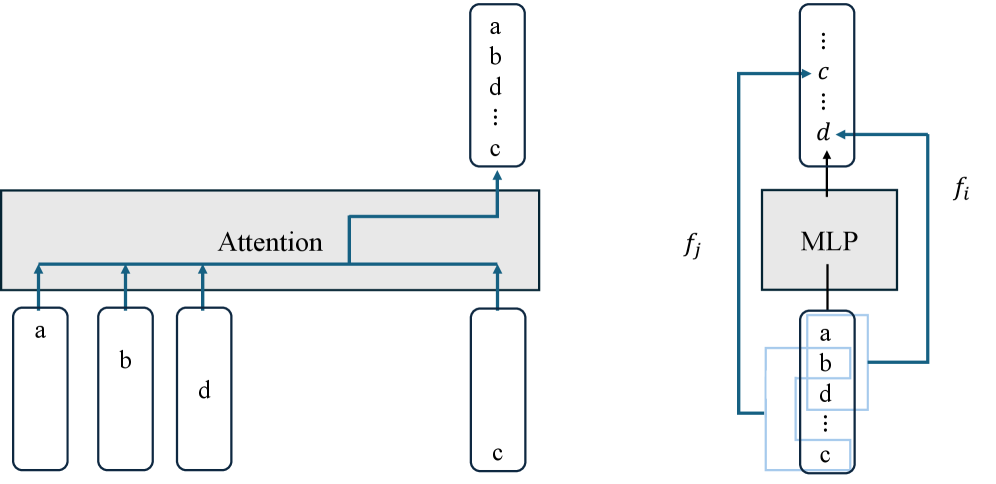

The image has two panels. The left panel shows four input tokens labeled 'a', 'b', 'd', and 'c' feeding into an "Attention" module. The right panel shows a separate "MLP" (multi-layer perceptron) module that takes a token sequence as input and produces an output sequence.

### Components/Axes

* **Left Side:**

    * Input tokens: Four rounded rectangles labeled 'a', 'b', 'd', and 'c' at the bottom.

    * Attention Module: A large gray rectangle labeled "Attention" at the top-center.

    * Output tokens: A rounded rectangle at the top labeled 'a', 'b', 'd', '...', 'c'.

    * Arrows: Blue arrows indicate the flow of information.

* **Right Side:**

    * Input tokens: A rounded rectangle at the bottom labeled 'a', 'b', 'd', '...', 'c'.

    * MLP Module: A gray rectangle labeled "MLP" above the input tokens.

    * Output tokens: A rounded rectangle at the top labeled '...', 'c', '...', 'd'.

    * Arrows: Blue arrows indicate the flow of information, including a feedback loop.

    * Labels: 'f\_j' and 'f\_i' are placed beside the right-side diagram.

### Detailed Analysis

* **Left Side (Attention):**

    * Each of the input tokens 'a', 'b', 'd', and 'c' has a blue arrow pointing upwards to the "Attention" module.

    * The "Attention" module outputs a sequence of tokens 'a', 'b', 'd', '...', 'c'.

    * There is a blue arrow from the "Attention" module to the output token 'c'.

* **Right Side (MLP):**

    * The input tokens 'a', 'b', 'd', '...', 'c' feed into the "MLP" module.

    * The "MLP" module outputs a sequence of tokens '...', 'c', '...', 'd'.

    * There is a feedback loop from the output token 'd' back to the input tokens 'a', 'b', 'd', '...', 'c'.

    * The output token 'c' also receives input from the sequence of tokens 'a', 'b', 'd', '...', 'c'.
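
The left-side flow, in which the tokens 'a', 'b', 'd', and 'c' all feed the "Attention" module and an arrow leads to the output token 'c', can be sketched as scaled dot-product attention for a single query. This is a minimal illustration rather than a reading of the diagram's actual computation: the 2-dimensional embeddings are hypothetical, and using the raw embeddings as keys and values (no learned projections) is a simplifying assumption.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(u, v))


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Returns the attention weights over the keys and the weighted
    average of the value vectors.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Hypothetical embeddings, listed in the diagram's left-to-right order.
order = ["a", "b", "d", "c"]
embeddings = {
    "a": [1.0, 0.0],
    "b": [0.0, 1.0],
    "d": [1.0, 1.0],
    "c": [0.5, 0.5],
}

keys = [embeddings[token] for token in order]
values = keys  # assumption: identity projections, no learned weights

# The position of 'c' acts as the query and reads from all four tokens.
weights, output = attend(embeddings["c"], keys, values)
```

With these made-up vectors, the query for 'c' has the largest dot product with 'd', so 'd' receives the largest attention weight. In a trained model the weights would depend on learned query, key, and value projections.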

### Key Observations

* The "Attention" module takes the four input tokens and produces an output sequence, with an arrow leading to the output token 'c'.

* The "MLP" module processes a sequence of tokens and has a feedback loop, which suggests a recurrent or iterative process.

* The '...' notation suggests that the sequences contain more tokens than are explicitly shown.

### Interpretation

This diagram likely represents a component of an attention-based neural network, possibly a transformer model. The "Attention" module lets a token draw on relevant parts of the input sequence, while the "MLP" module performs further processing on the resulting token representations. The feedback loop on the "MLP" side suggests that its output is fed back into the token sequence, as in an iterative or recurrent computation. The labels 'f\_j' and 'f\_i' might represent feature vectors or intermediate representations at different stages of the process; the diagram itself does not define them.
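
To make the MLP step concrete, the following is a minimal sketch of a two-layer MLP applied to one token vector. It reads the feedback loop as a residual connection, where the MLP output is added back onto its input. That reading is an assumption and is not confirmed by the diagram. The function name `mlp_block`, the weight matrices, and the input vector are all hypothetical.

```python
def relu(vector):
    """Element-wise ReLU."""
    return [max(0.0, v) for v in vector]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def mlp_block(x, w_in, w_out):
    """Two-layer MLP with a residual connection (assumed, see lead-in).

    w_in maps the token vector to a hidden layer, w_out maps the
    hidden layer back to the token dimension, and the result is
    added to the original input.
    """
    hidden = relu(matvec(w_in, x))
    update = matvec(w_out, hidden)
    return [xi + ui for xi, ui in zip(x, update)]


# Hypothetical weights: 2-d token vector, 3 hidden units.
w_in = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
]
w_out = [
    [0.5, 0.0, 0.0],
    [0.0, 0.5, 1.0],
]

x = [1.0, -2.0]
y = mlp_block(x, w_in, w_out)  # [1.5, -2.0]
```

Only the first hidden unit is active for this input, so the block changes the first coordinate and leaves the second untouched. A hidden unit that fires for some inputs and not others is one way to picture the MLP as a lookup from an input feature to an output feature.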