
## Diagram: Attention and MLP Block

### Overview

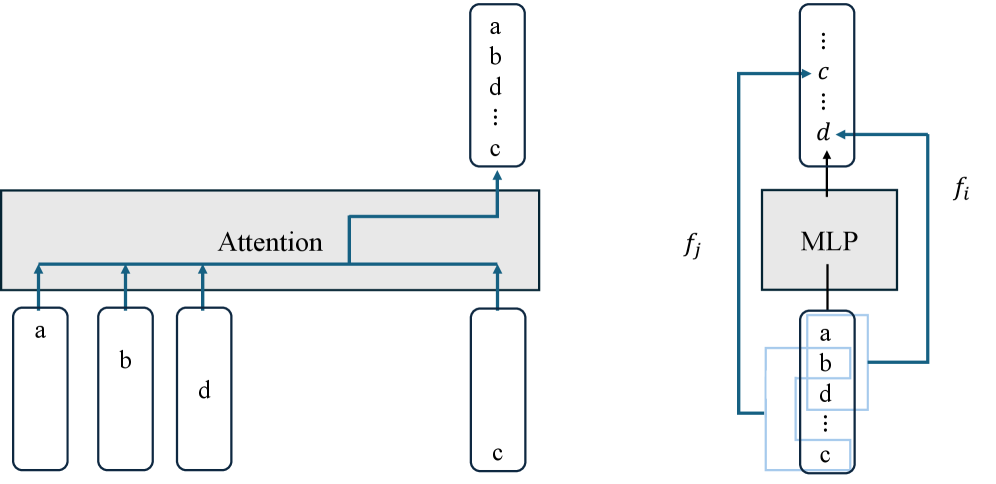

The diagram shows how an Attention mechanism and a Multi-Layer Perceptron (MLP) block interact within a neural network architecture. Labeled inputs and outputs are connected by arrows that mark the flow of data through these components. It appears to be a simplified representation of a single block (attention followed by a feedforward MLP) within a transformer-like model.

### Components/Axes

The diagram consists of the following components:

* **Input Blocks:** Four rectangular blocks labeled 'a', 'b', 'd', and 'c'. These represent input vectors or features.

* **Attention Block:** A large, horizontally oriented rectangle labeled "Attention". This block receives input from the four input blocks.

* **MLP Block:** A rectangular block labeled "MLP". This block receives input from the Attention block and outputs to itself in a feedback loop.

* **Output Blocks:** Two sets of vertically stacked rectangular blocks, each labeled 'a', 'b', 'd', and 'c' and followed by an ellipsis indicating further elements. These represent the output vectors or features.

* **Labels:** 'f<sub>j</sub>' marks the input to the MLP block and 'f<sub>i</sub>' marks its output.

* **Arrows:** Arrows indicate the direction of data flow between the components.

### Detailed Analysis

The diagram shows the following data flow:

1. **Inputs to Attention:** The four input blocks 'a', 'b', 'd', and 'c' are connected to the Attention block via arrows.

2. **Attention Output:** The Attention block outputs to a vertically stacked block containing 'a', 'b', 'd', and 'c' with ellipsis.

3. **MLP Input:** The output of the Attention block is fed as input to the MLP block, labeled 'f<sub>j</sub>'.

4. **MLP Output:** The MLP block outputs to another vertically stacked block containing 'a', 'b', 'd', and 'c' with ellipsis, labeled 'f<sub>i</sub>'.

5. **MLP Feedback Loop:** The output of the MLP block is also fed back as input to itself, creating a feedback loop.

The vertical blocks containing 'a', 'b', 'd', 'c' and ellipsis suggest a vector or a sequence of features. The ellipsis indicates that the vector/sequence has more elements than those explicitly listed.
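The data flow described above can be sketched in a few lines of plain Python. This is only a minimal illustration under stated assumptions, not the model the diagram was drawn from: the two-dimensional values assigned to 'a', 'b', 'd', and 'c', the weight matrices `w1` and `w2`, the use of identity query/key/value projections, and the ReLU activation are all hypothetical choices made to keep the example small. The MLP feedback loop is left out here.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(vectors):
    """Scaled dot-product self-attention.

    Assumption: queries, keys, and values are the input vectors themselves
    (identity projections), which a real model would replace with learned maps.
    """
    dim = len(vectors[0])
    out = []
    for q in vectors:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
                  for k in vectors]
        weights = softmax(scores)
        # Each output is a weighted average of all input vectors.
        out.append([sum(w * v[d] for w, v in zip(weights, vectors))
                    for d in range(dim)])
    return out

def mlp(vec, w1, w2):
    """Two-layer perceptron with a ReLU non-linearity (biases omitted)."""
    hidden = [max(0.0, sum(w * x for w, x in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]

def block(vectors, w1, w2):
    """Attention first, then the MLP applied to each attended vector."""
    attended = attention(vectors)          # plays the role of f_j (MLP input)
    return [mlp(v, w1, w2) for v in attended]  # plays the role of f_i (MLP output)

# Hypothetical 2-dimensional inputs, in the order shown in the diagram.
inputs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "d": [1.0, 1.0], "c": [0.5, -0.5]}
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]   # hypothetical 2 -> 3 weights
w2 = [[1.0, 0.0, 0.5], [0.0, 1.0, -0.5]]     # hypothetical 3 -> 2 weights

outputs = block(list(inputs.values()), w1, w2)
for name, vec in zip(inputs, outputs):
    print(name, vec)
```

Each of the four inputs yields one output vector of the same dimension, which mirrors the stacked 'a', 'b', 'd', 'c' blocks on both sides of the MLP in the diagram.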

### Key Observations

* The diagram highlights the interaction between Attention and MLP layers, which is a common pattern in modern neural network architectures like Transformers.

* The feedback loop within the MLP block suggests a recurrent or iterative processing of the data.

* The labels 'f<sub>j</sub>' (input) and 'f<sub>i</sub>' (output) sit on either side of the MLP block, suggesting that it maps one feature representation to another.

### Interpretation

The diagram illustrates a key building block in a neural network architecture, likely a transformer. The Attention mechanism processes the input features ('a', 'b', 'd', 'c') to capture relationships between them. Its output is then fed into the MLP block, which performs a non-linear transformation.

The feedback loop on the MLP block suggests that the transformation is applied iteratively, potentially refining the output over multiple steps. The labels 'f<sub>j</sub>' and 'f<sub>i</sub>' likely denote the feature representations entering and leaving the MLP at different stages of the processing pipeline.

The labels 'a', 'b', 'd', and 'c' on both the inputs and outputs suggest these are feature vectors or embeddings, and the ellipsis indicates that the stacks contain more than the four elements shown. Overall, the diagram points to a modular design in which Attention and MLP blocks are combined.
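If the feedback loop is read as iterative application, which is an interpretation rather than something the diagram states, it could look like the sketch below. The function name `iterate_mlp`, the weight values, the ReLU activation, and the step count are all hypothetical; the only requirement the loop imposes is that the MLP's output has the same dimension as its input.

```python
def mlp(vec, w1, w2):
    """Two-layer perceptron with a ReLU non-linearity (biases omitted)."""
    hidden = [max(0.0, sum(w * x for w, x in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]

def iterate_mlp(vec, w1, w2, steps):
    """Feed the MLP's output back in as its next input, recording each state."""
    states = [vec]
    for _ in range(steps):
        vec = mlp(vec, w1, w2)
        states.append(vec)
    return states

# Hypothetical weights: halve each component, then pass it through unchanged.
w1 = [[0.5, 0.0], [0.0, 0.5]]
w2 = [[1.0, 0.0], [0.0, 1.0]]

states = iterate_mlp([1.0, 2.0], w1, w2, steps=3)
print(states[-1])  # [0.125, 0.25]
```

With these particular weights each pass halves the vector, so the effect of the loop is easy to follow by hand; a trained MLP would of course behave differently.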