## Diagram: Multi-Agent Policy Architecture with Centralized Coordination

### Overview

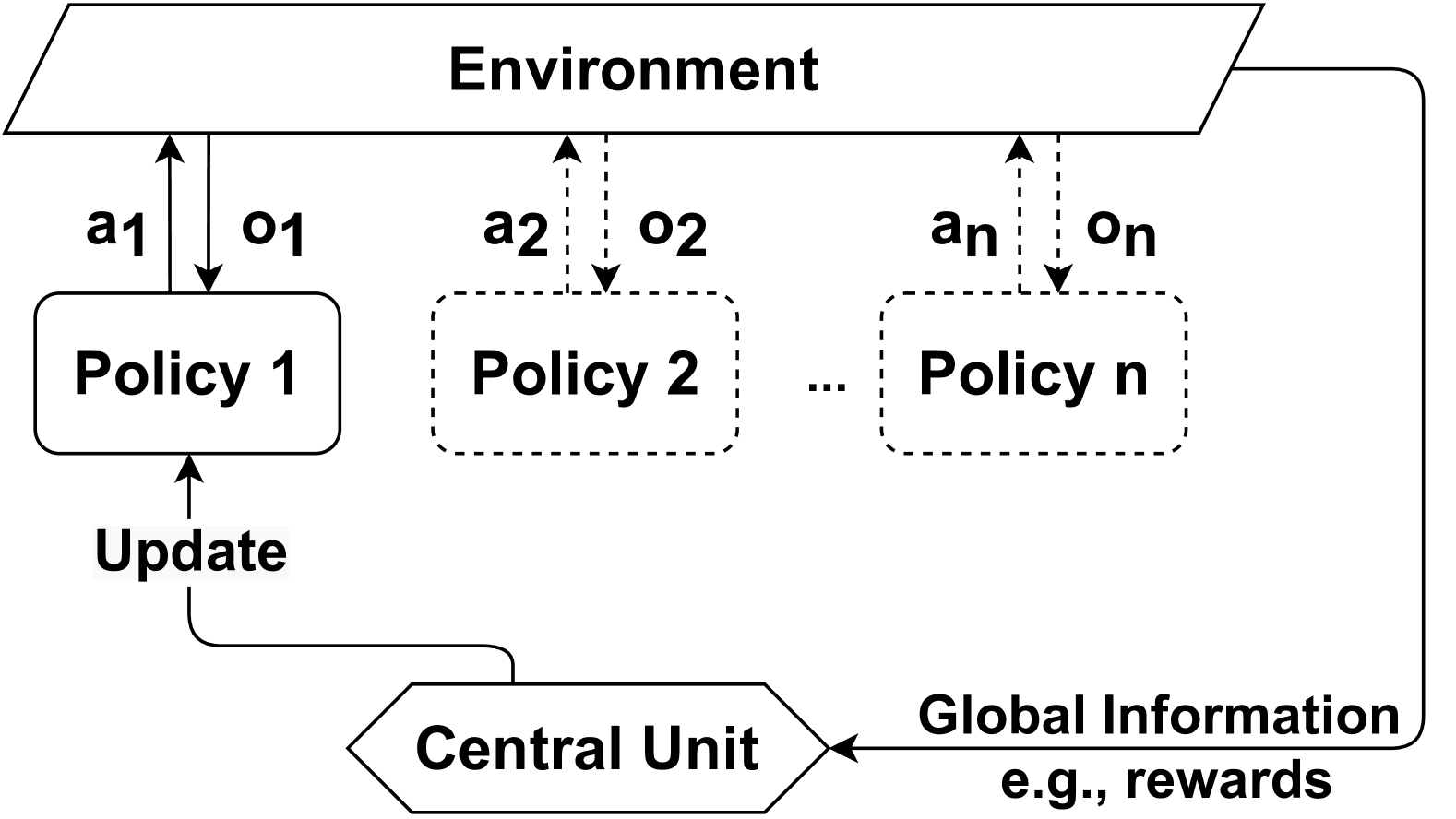

The image is a system architecture diagram of a multi-agent (multi-policy) control system interacting with a shared environment. Multiple policies operate in parallel, and a central unit issues updates based on global information it receives from the environment. Shapes distinguish the component types, and labeled directional arrows denote information flow and control relationships.

### Components

The diagram is composed of the following labeled components and connections, arranged in a hierarchical flow:

1. **Environment** (Top, Center): Represented by a parallelogram. This is the external system or context with which the policies interact.

2. **Policy Blocks** (Middle Row):

    * **Policy 1**: A solid-lined, rounded rectangle on the left.

    * **Policy 2**: A dashed-lined, rounded rectangle in the center.

    * **Policy n**: A dashed-lined, rounded rectangle on the right.

    * An ellipsis (`...`) between Policy 2 and Policy n stands for the omitted policies 3 through n-1.

3. **Central Unit** (Bottom, Center): Represented by a hexagon. This is the coordinating entity.

4. **Interaction Arrows (Environment ↔ Policies):**

    * **From Environment to Policy 1**: A solid downward arrow labeled `o1` (observation 1).

    * **From Policy 1 to Environment**: A solid upward arrow labeled `a1` (action 1).

    * **From Environment to Policy 2**: A dashed downward arrow labeled `o2` (observation 2).

    * **From Policy 2 to Environment**: A dashed upward arrow labeled `a2` (action 2).

    * **From Environment to Policy n**: A dashed downward arrow labeled `on` (observation n).

    * **From Policy n to Environment**: A dashed upward arrow labeled `an` (action n).

5. **Control & Information Flow Arrows:**

    * **Update**: A solid arrow originates from the Central Unit, curves left, and points upward to **Policy 1**. The label `Update` is placed on the vertical segment of this arrow.

    * **Global Information**: A solid arrow originates from the right side of the **Environment** block, curves down, and points left into the **Central Unit**. The label `Global Information` is placed above this arrow, with the sub-label `e.g., rewards` below it.

### Detailed Analysis

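* **Spatial Layout**: The diagram is organized in three tiers. The Environment sits at the top, the Policies are arranged horizontally in the middle tier (suggesting parallel operation), and the Central Unit sits at the bottom. Observations flow downward from the Environment to the Policies, actions flow back upward, and the Global Information arrow bypasses the policy tier to reach the Central Unit directly.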

* **Line Style Semantics**: The solid lines for Policy 1 and its connections contrast with the dashed lines for Policy 2 through Policy n. This visually distinguishes Policy 1, possibly indicating it is the primary, active, or currently highlighted policy in the sequence, while the others represent a generalized set.

* **Data Flow**:

    1. Each policy `i` receives an observation `oi` from the Environment.

    2. Each policy `i` outputs an action `ai` to the Environment.

    3. The Environment provides `Global Information` (e.g., rewards) to the Central Unit.

    4. The Central Unit processes this global information and sends an `Update` signal specifically to Policy 1. The diagram implies this update mechanism could apply to all policies, but only the connection to Policy 1 is explicitly drawn.
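The four data-flow steps above can be sketched as a minimal loop in Python. This is only an illustration under stated assumptions: the class names, the `offset` and `updates` fields, the toy reward, and the placeholder update are all hypothetical, since the diagram specifies the arrows but not what flows along them.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Policy:
    """One policy block. `offset` and `updates` are hypothetical fields."""
    offset: float = 0.0
    updates: int = 0

    def act(self, observation: float) -> float:
        # Step 2: the action a_i depends only on the local observation o_i.
        return observation + self.offset


class Environment:
    """Toy shared environment; its dynamics are invented for illustration."""

    def __init__(self, n: int, seed: int = 0) -> None:
        self.n = n
        self.rng = random.Random(seed)

    def observe(self) -> list[float]:
        # Step 1: one local observation o_i per policy.
        return [self.rng.uniform(-1.0, 1.0) for _ in range(self.n)]

    def step(self, actions: list[float]) -> float:
        # Step 3: global information, here a single shared reward.
        return -sum(a * a for a in actions)


class CentralUnit:
    """Collects global information and issues updates."""

    def __init__(self) -> None:
        self.rewards: list[float] = []

    def receive(self, reward: float) -> None:
        self.rewards.append(reward)

    def update(self, policy: Policy) -> None:
        # Step 4: placeholder update that only counts how often it was applied.
        policy.updates += 1


def run(n: int = 3, steps: int = 5) -> tuple[list[Policy], CentralUnit]:
    env = Environment(n)
    policies = [Policy() for _ in range(n)]
    central = CentralUnit()
    for _ in range(steps):
        observations = env.observe()
        actions = [p.act(o) for p, o in zip(policies, observations)]
        central.receive(env.step(actions))
        # Only the Update arrow to Policy 1 is drawn in the diagram.
        central.update(policies[0])
    return policies, central
```

Calling `run(n=3, steps=5)` returns three policies and a central unit holding five recorded rewards. Only the first policy is updated, mirroring the single `Update` arrow; extending the call to every policy would be one way to read the dashed lines.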

### Key Observations

* **Centralized Training, Decentralized Execution (CTDE) Pattern**: The architecture strongly suggests a CTDE paradigm common in multi-agent reinforcement learning. Policies act independently (decentralized execution) based on local observations (`oi`), but are trained or updated (centralized training) using global information (`Global Information`) processed by a Central Unit.

* **Asymmetric Representation**: Policy 1 is visually emphasized with solid lines and a direct update link, while Policies 2..n are generalized with dashed lines. This is a common diagrammatic technique to show one instance of a repeated component.

* **Closed-Loop System**: The diagram forms a closed loop: Environment → Policies → Environment → Central Unit → Policies. This represents a continuous cycle of interaction, evaluation, and adaptation.
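The closed-loop observation can be checked mechanically by encoding the drawn arrows as a directed graph. The sketch below is an assumption-laden simplification: Policy 1 stands in for all policies, and the `EDGES` dictionary and `find_path` helper are hypothetical names introduced here.

```python
from collections import deque

# Arrows as drawn in the diagram; Policy 1 stands in for policies 1..n.
EDGES = {
    "Environment": ["Policy 1", "Central Unit"],  # o1, Global Information
    "Policy 1": ["Environment"],                  # a1
    "Central Unit": ["Policy 1"],                 # Update
}


def find_path(graph, start, goal):
    """Breadth-first search; returns one shortest path from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        for nxt in graph.get(path[-1], []):
            if nxt == goal:
                return path + [nxt]
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


# Environment reaches the Central Unit, and the Central Unit reaches the
# Environment again through Policy 1, so the arrows form a cycle.
forward = find_path(EDGES, "Environment", "Central Unit")
back = find_path(EDGES, "Central Unit", "Environment")
```

Here `forward` is the direct Global Information arrow, and `back` passes through the Update arrow and then the action arrow, which together close the loop.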

### Interpretation

This diagram models a control or learning system for scenarios involving multiple agents or decision-making modules. The key insight is the separation of **execution** (handled by individual policies interacting directly with the environment) from **coordination and learning** (handled by the Central Unit using global feedback).

The `Global Information` (e.g., rewards) is critical. It allows the Central Unit to assess the overall system performance, not just the performance of individual policies. The `Update` signal likely contains optimized parameters, gradients, or instructions derived from this global assessment, which are then used to improve the policies.
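The diagram does not say what the `Update` actually carries. As one possible reading, the sketch below assumes a score-function (REINFORCE-style) gradient step in which every policy is a Gaussian with a learnable mean and a fixed standard deviation, and the Central Unit weights each step by the same global reward. The function name, parameters, and the choice of this technique are assumptions for illustration, not something the figure specifies.

```python
def central_update(thetas, actions, reward, baseline, sigma=1.0, lr=0.1):
    """Return new policy means after one score-function gradient step.

    Assumes policy i samples its action from a Gaussian with mean thetas[i]
    and fixed standard deviation sigma, so that
    d/d(theta) log pi(a) = (a - theta) / sigma**2.
    """
    # The same global signal is shared by every policy.
    advantage = reward - baseline
    return [
        theta + lr * advantage * (a - theta) / sigma ** 2
        for theta, a in zip(thetas, actions)
    ]
```

With a reward above the baseline, each mean moves toward the action that was just taken; with a reward below the baseline, it moves away. Unlike the figure, which draws the `Update` arrow only to Policy 1, this sketch updates all policies at once.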

The index `n` indicates that the architecture is defined for an arbitrary number of agents or sub-processes rather than a fixed count. The dashed lines for policies 2 through n abstract away repetitive detail, focusing the viewer on the architectural pattern rather than each individual component.

**In essence, the diagram answers the question: "How can multiple independent agents learn to cooperate or optimize a shared goal?"** Its answer is a central coordinator (the Central Unit) that uses system-wide data to guide the learning of the individual actors (Policies). This pattern is widely used in multi-agent systems, swarm robotics, and distributed AI.