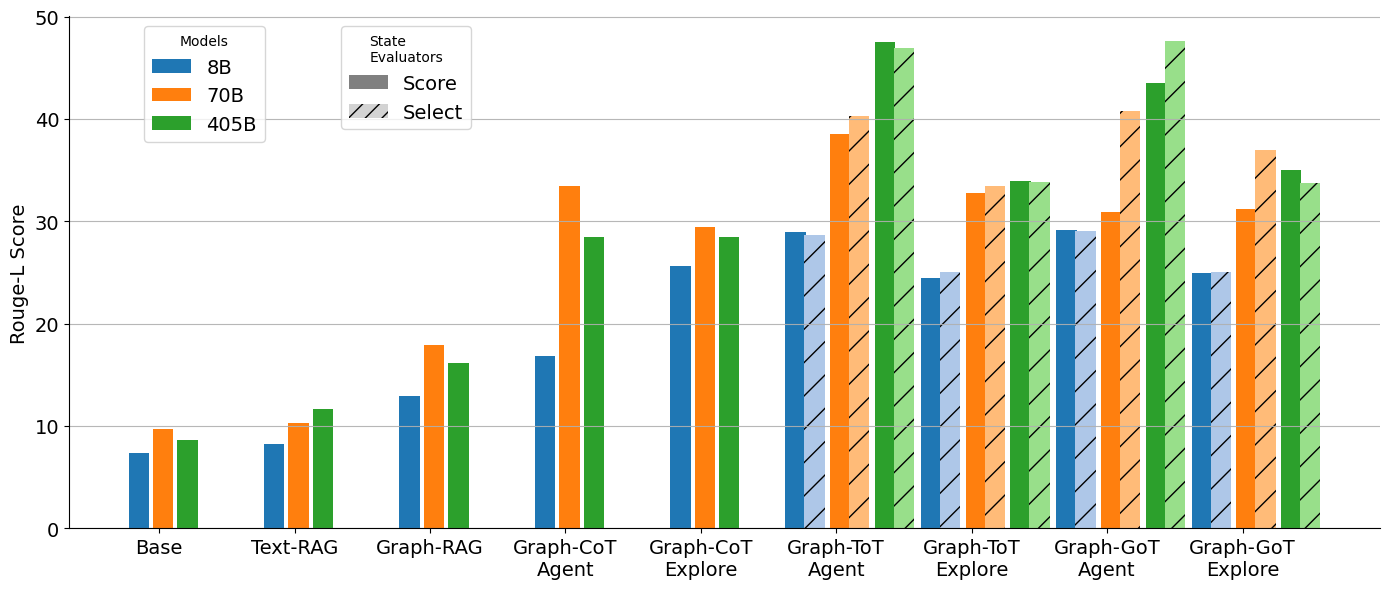

## Grouped Bar Chart: Rouge-L Score Comparison Across Models and Methods

### Overview

This image is a grouped bar chart comparing the performance of different AI models (8B, 70B, 405B parameters) across various reasoning methods, as measured by the Rouge-L Score. The chart evaluates two types of state evaluators ("Score" and "Select") for each method.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:** Labeled "Rouge-L Score". Scale ranges from 0 to 50, with major gridlines at intervals of 10.

* **X-Axis:** Lists 9 distinct reasoning methods. From left to right:
    1. Base
    2. Text-RAG
    3. Graph-RAG
    4. Graph-CoT Agent
    5. Graph-CoT Explore
    6. Graph-ToT Agent
    7. Graph-ToT Explore
    8. Graph-GoT Agent
    9. Graph-GoT Explore

* **Legend 1 (Top-Left):** Titled "Models". Defines color coding for model size:
    * Blue: 8B
    * Orange: 70B
    * Green: 405B

* **Legend 2 (Top-Center):** Titled "State Evaluators". Defines bar fill pattern:
    * Solid Fill: "Score"
    * Hatched Fill (diagonal lines): "Select"

* **Spatial Layout:** The two legends are positioned in the top-left and top-center of the chart area. The bars are grouped by method, with each group containing up to six bars (three models, each with two evaluator types).

### Detailed Analysis

Each method group on the x-axis contains bars for the 8B (blue), 70B (orange), and 405B (green) models. Within each model color, the left bar is solid ("Score" evaluator) and the right bar is hatched ("Select" evaluator).

**Trend Verification & Data Extraction (Approximate Values):**

1. **Base:**
    * 8B (Blue): ~7.5 (Score), ~8 (Select)
    * 70B (Orange): ~10 (Score), ~10 (Select)
    * 405B (Green): ~9 (Score), ~9 (Select)
    * *Trend:* Scores are low (about 10 or below). Larger models show only a small edge over 8B, and 405B does not beat 70B.

2. **Text-RAG:**
    * 8B (Blue): ~8.5 (Score), ~8.5 (Select)
    * 70B (Orange): ~10.5 (Score), ~10.5 (Select)
    * 405B (Green): ~11.5 (Score), ~11.5 (Select)
    * *Trend:* Slight improvement over Base, with a small, consistent increase with model size.

3. **Graph-RAG:**
    * 8B (Blue): ~13 (Score), ~13 (Select)
    * 70B (Orange): ~18 (Score), ~18 (Select)
    * 405B (Green): ~16 (Score), ~16 (Select)
    * *Trend:* Notable jump from Text-RAG. The 70B model performs best here.

4. **Graph-CoT Agent:**
    * 8B (Blue): ~17 (Score), ~17 (Select)
    * 70B (Orange): ~33.5 (Score), ~33.5 (Select)
    * 405B (Green): ~28.5 (Score), ~28.5 (Select)
    * *Trend:* Large increase, especially for 70B, which outperforms 405B.

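5. **Graph-CoT Explore:**
    * 8B (Blue): ~25.5 (Score), ~25.5 (Select)
    * 70B (Orange): ~29.5 (Score), ~29.5 (Select)
    * 405B (Green): ~28.5 (Score), ~28.5 (Select)
    * *Trend:* Scores are tightly clustered (~25.5 to ~29.5), and 70B and 405B are nearly tied. Compared with Graph-CoT Agent, the 8B model improves (~17 to ~25.5) while the 70B model drops (~33.5 to ~29.5).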

6. **Graph-ToT Agent:**
    * 8B (Blue): ~29 (Score), ~29 (Select)
    * 70B (Orange): ~38.5 (Score), ~40.5 (Select)
    * 405B (Green): ~47.5 (Score), ~46.5 (Select)
    * *Trend:* Highest scores observed so far, with a clear advantage for larger models. The 405B "Score" bar (~47.5) ties for the highest value on the chart.

7. **Graph-ToT Explore:**
    * 8B (Blue): ~24.5 (Score), ~25 (Select)
    * 70B (Orange): ~32.5 (Score), ~33.5 (Select)
    * 405B (Green): ~34 (Score), ~34 (Select)
    * *Trend:* Scores drop compared to the "Agent" variant. 405B and 70B are close.

8. **Graph-GoT Agent:**
    * 8B (Blue): ~29.5 (Score), ~29.5 (Select)
    * 70B (Orange): ~31 (Score), ~40.5 (Select)
    * 405B (Green): ~43.5 (Score), ~47.5 (Select)
    * *Trend:* Very high scores. A large discrepancy appears for the 70B model between the "Score" (~31) and "Select" (~40.5) evaluators. The 405B "Select" bar is very high (~47.5).

9. **Graph-GoT Explore:**
    * 8B (Blue): ~25 (Score), ~25 (Select)
    * 70B (Orange): ~31 (Score), ~37 (Select)
    * 405B (Green): ~35 (Score), ~34 (Select)
    * *Trend:* As with Graph-ToT, the "Explore" variant scores below the "Agent" variant, except for the 70B "Score" bar, which is tied at ~31. The 70B model again shows a notable gap between evaluators (~31 vs. ~37).
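To make the comparisons in this section easy to recheck, the approximate readings can be collected into a small lookup table. The sketch below is a hypothetical helper, not part of the original study: the values are the eyeballed estimates listed above (so differences of about 1 point are within reading error), and the names `READINGS`, `evaluator_gaps`, `peak_bars`, and `agent_minus_explore` are illustrative.

```python
# Hypothetical helper: approximate Rouge-L readings eyeballed from the chart.
# These are NOT the authors' raw numbers; treat ~1-point differences as noise.
# Layout: method -> model -> (Score evaluator, Select evaluator)
READINGS = {
    "Base":              {"8B": (7.5, 8.0),   "70B": (10.0, 10.0), "405B": (9.0, 9.0)},
    "Text-RAG":          {"8B": (8.5, 8.5),   "70B": (10.5, 10.5), "405B": (11.5, 11.5)},
    "Graph-RAG":         {"8B": (13.0, 13.0), "70B": (18.0, 18.0), "405B": (16.0, 16.0)},
    "Graph-CoT Agent":   {"8B": (17.0, 17.0), "70B": (33.5, 33.5), "405B": (28.5, 28.5)},
    "Graph-CoT Explore": {"8B": (25.5, 25.5), "70B": (29.5, 29.5), "405B": (28.5, 28.5)},
    "Graph-ToT Agent":   {"8B": (29.0, 29.0), "70B": (38.5, 40.5), "405B": (47.5, 46.5)},
    "Graph-ToT Explore": {"8B": (24.5, 25.0), "70B": (32.5, 33.5), "405B": (34.0, 34.0)},
    "Graph-GoT Agent":   {"8B": (29.5, 29.5), "70B": (31.0, 40.5), "405B": (43.5, 47.5)},
    "Graph-GoT Explore": {"8B": (25.0, 25.0), "70B": (31.0, 37.0), "405B": (35.0, 34.0)},
}


def evaluator_gaps(readings):
    """Return (method, model, |Select - Score|) tuples, largest gap first."""
    gaps = [
        (method, model, abs(select - score))
        for method, by_model in readings.items()
        for model, (score, select) in by_model.items()
    ]
    return sorted(gaps, key=lambda g: g[2], reverse=True)


def peak_bars(readings):
    """Return the highest reading and every (method, model, evaluator) reaching it."""
    bars = [
        (value, method, model, evaluator)
        for method, by_model in readings.items()
        for model, pair in by_model.items()
        for evaluator, value in zip(("Score", "Select"), pair)
    ]
    top = max(bar[0] for bar in bars)
    return top, [bar[1:] for bar in bars if bar[0] == top]


def agent_minus_explore(readings, family):
    """For "ToT" or "GoT", return model -> (Score margin, Select margin) of Agent over Explore."""
    agent = readings[f"Graph-{family} Agent"]
    explore = readings[f"Graph-{family} Explore"]
    return {
        model: tuple(a - e for a, e in zip(agent[model], explore[model]))
        for model in agent
    }


if __name__ == "__main__":
    for method, model, gap in evaluator_gaps(READINGS)[:3]:
        print(f"{method} / {model}: Score-Select gap ~{gap}")
    print("peak:", peak_bars(READINGS))
    for family in ("ToT", "GoT"):
        print(family, agent_minus_explore(READINGS, family))
```

With these readings, the largest evaluator gap is Graph-GoT Agent / 70B (~9.5), followed by Graph-GoT Explore / 70B (~6); the ~47.5 peak is shared by two 405B bars; and every Agent-minus-Explore margin is non-negative, with a single zero (GoT, 70B, Score).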

### Key Observations

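1. **Method Progression:** Rouge-L scores broadly rise as methods move from Base -> Text-RAG -> Graph-RAG -> CoT -> ToT/GoT, and the "Agent" variants of graph-based Tree-of-Thought (ToT) and Graph-of-Thought (GoT) achieve the highest performance. The progression is not strict for every model: the 70B model scores ~33.5 with Graph-CoT Agent but ~32.5 ("Score") with Graph-ToT Explore.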

2. **Model Size Impact:** Larger models (70B, 405B) outperform the 8B model on every method. However, the gain is not monotonic in size: on Base, Graph-RAG, and both Graph-CoT variants, the 70B bars are taller than the 405B bars (by ~1 to ~5 points).

3. **Agent vs. Explore:** For ToT and GoT methods, the "Agent" variant scores higher than the "Explore" variant for the same model size and evaluator, with one tie: the 70B model with the "Score" evaluator on GoT (~31 for both).

4. **Evaluator Discrepancy:** For most method/model combinations, the "Score" and "Select" evaluators produce nearly identical results (bars of equal height). The most significant exception is **Graph-GoT Agent with the 70B model**, where the "Select" bar is substantially taller (~40.5) than the "Score" bar (~31). The 70B model on Graph-GoT Explore (~37 vs. ~31) shows the next-largest gap.

5. **Peak Performance:** The highest approximate score on the chart is ~47.5, reached by two 405B bars: **Graph-GoT Agent with the "Select" evaluator** and **Graph-ToT Agent with the "Score" evaluator**. At this reading precision the two are indistinguishable.

### Interpretation

This chart suggests that graph-augmented, tree- and graph-structured reasoning frameworks (ToT, GoT) outperform simpler RAG or chain-of-thought approaches on the task measured by Rouge-L. Within the ToT and GoT families, the "Agent" variant scores higher than (or ties) the "Explore" variant, so the choice of variant matters in addition to the reasoning structure.

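The relationship between model size and performance is not monotonic. Scaling from 8B to 70B improves every method, sometimes substantially (Graph-CoT Agent rises from ~17 to ~33.5), but further scaling to 405B gives inconsistent returns: it adds the most on the "Agent" variants of ToT and GoT, yet trails 70B on Base, Graph-RAG, and both Graph-CoT variants. Methodological changes can matter as much as parameter count; for example, the 8B model with Graph-ToT Agent (~29) outscores the 405B model with Graph-RAG (~16).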
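The scaling pattern can be checked by differencing the readings across model sizes. The sketch below is hypothetical: it uses only the solid "Score" bars from the approximate readings in the Detailed Analysis, and `SCORE_BARS` and `scaling_deltas` are illustrative names, not anything from the original study.

```python
# Hypothetical helper: approximate "Score"-evaluator readings eyeballed from the chart.
# Layout: method -> (8B, 70B, 405B)
SCORE_BARS = {
    "Base": (7.5, 10.0, 9.0),
    "Text-RAG": (8.5, 10.5, 11.5),
    "Graph-RAG": (13.0, 18.0, 16.0),
    "Graph-CoT Agent": (17.0, 33.5, 28.5),
    "Graph-CoT Explore": (25.5, 29.5, 28.5),
    "Graph-ToT Agent": (29.0, 38.5, 47.5),
    "Graph-ToT Explore": (24.5, 32.5, 34.0),
    "Graph-GoT Agent": (29.5, 31.0, 43.5),
    "Graph-GoT Explore": (25.0, 31.0, 35.0),
}


def scaling_deltas(bars):
    """Per method, return (70B - 8B, 405B - 70B) differences in Rouge-L."""
    return {m: (b70 - b8, b405 - b70) for m, (b8, b70, b405) in bars.items()}


if __name__ == "__main__":
    for method, (first, second) in scaling_deltas(SCORE_BARS).items():
        print(f"{method:18s} 8B->70B {first:+5.1f}   70B->405B {second:+5.1f}")
```

With these readings, every 8B->70B difference is positive, while the 70B->405B difference is negative for Base, Graph-RAG, and both Graph-CoT variants and largest for Graph-GoT Agent (+12.5). Note that on Graph-GoT Agent the "Score" bars gain only ~1.5 from 8B to 70B, so most of that method's size benefit arrives at 405B.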

The Graph-GoT Agent (70B) outlier, where the two evaluators disagree by ~9.5 points, may indicate instability in that specific configuration, or that the "Select" evaluator better captures the benefits of the GoT method at that model size. Overall, the results favor sophisticated graph-based reasoning architectures, particularly when paired with sufficiently large language models.