EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

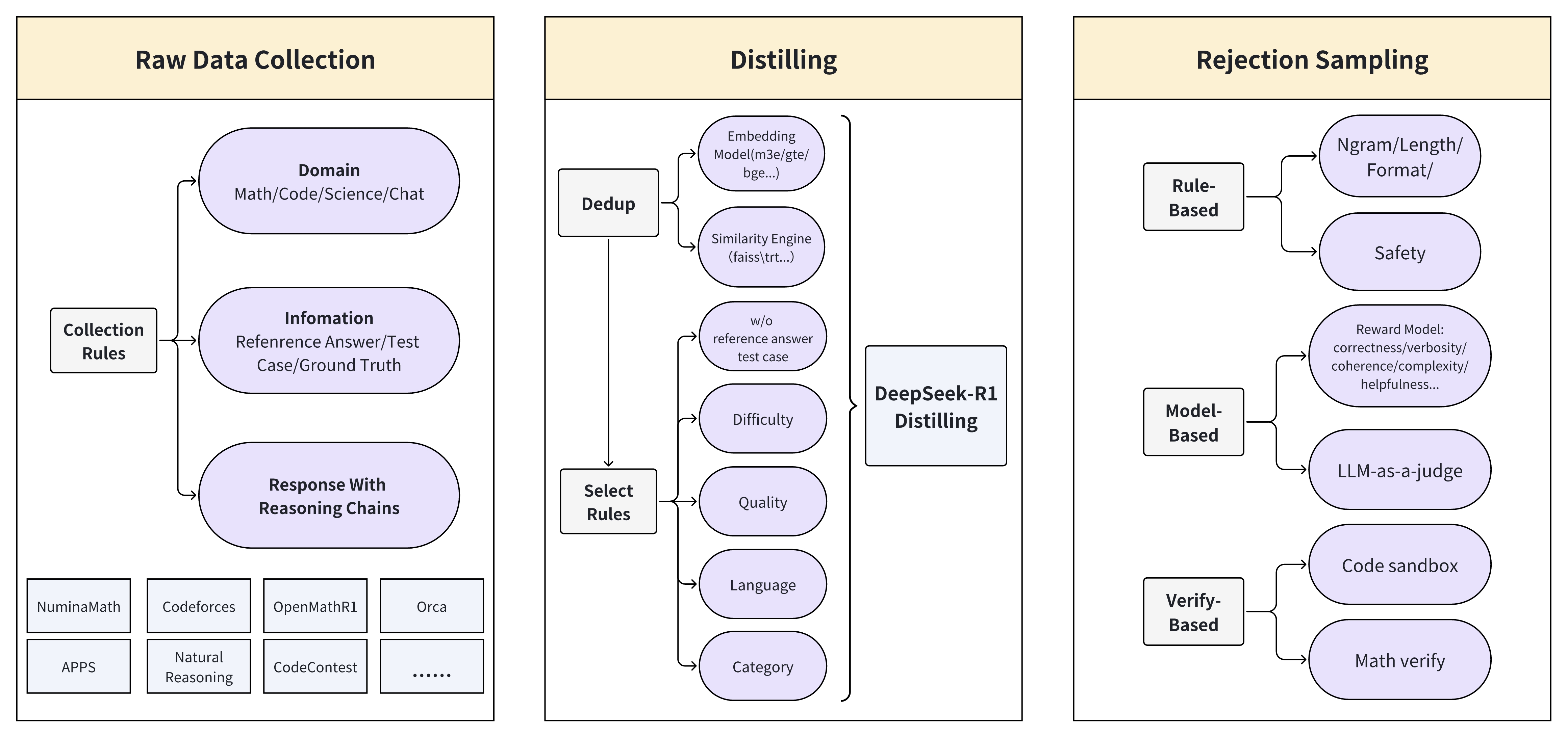

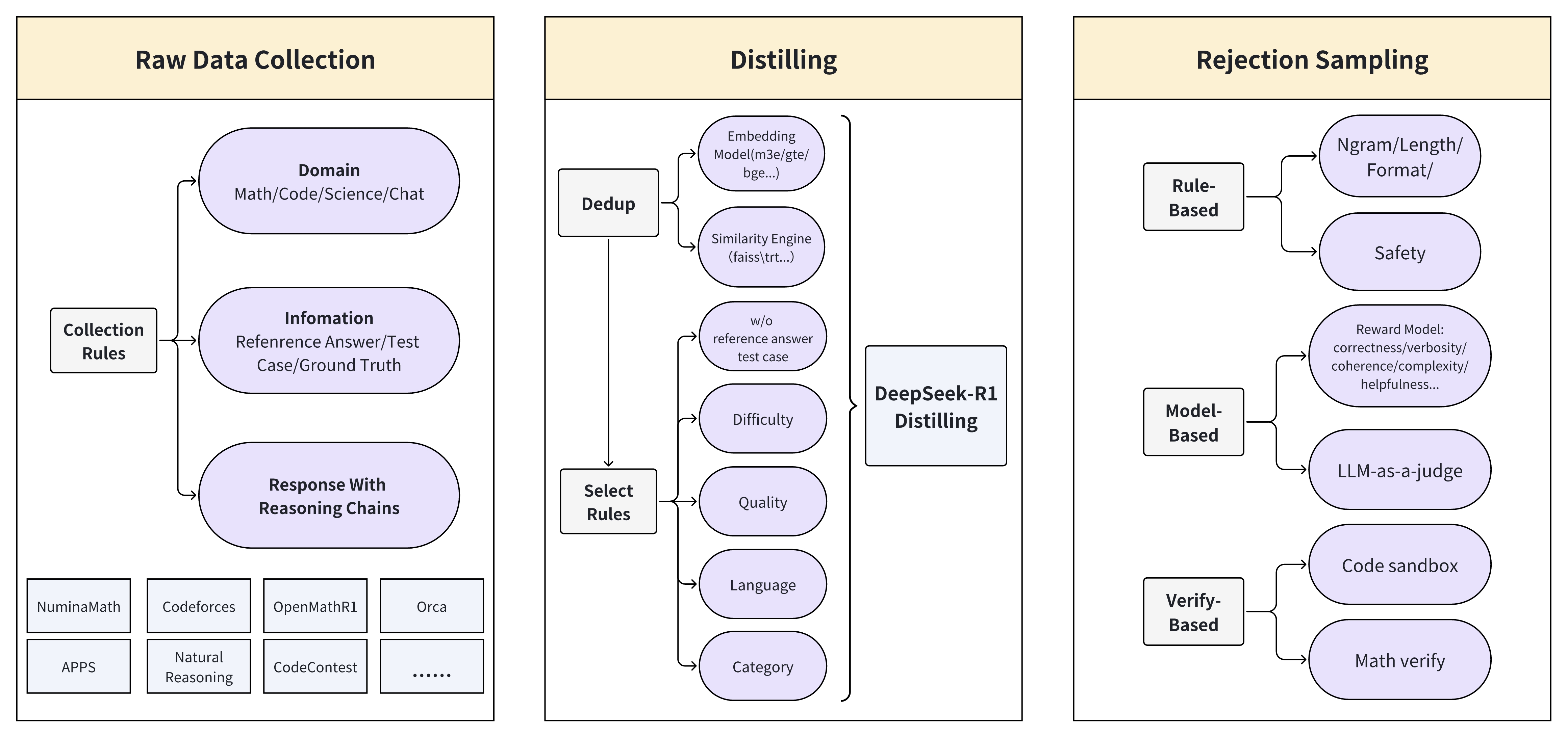

## Data Processing Pipeline: Raw Data Collection, Distilling, and Rejection Sampling

### Overview

The image presents a diagram illustrating a data processing pipeline, divided into three main stages: Raw Data Collection, Distilling, and Rejection Sampling. Each stage involves specific processes and rules to refine and filter data.

### Components/Axes

**1. Raw Data Collection:**

* **Title:** Raw Data Collection (located at the top of the section)

* **Elements:**

* Collection Rules (rectangular box on the left)

* Domain (rounded rectangle): Math/Code/Science/Chat

* Infomation (rounded rectangle): Reference Answer/Test Case/Ground Truth

* Response With Reasoning Chains (rounded rectangle)

* Datasets (rectangular boxes): NuminaMath, Codeforces, OpenMathR1, Orca, APPS, Natural Reasoning, CodeContest, and "..." indicating more elements.

**2. Distilling:**

* **Title:** Distilling (located at the top of the section)

* **Elements:**

* Dedup (rectangular box on the left)

* Embedding Model (m3e/gte/bge...) (rounded rectangle)

* Similarity Engine (faiss\trt...) (rounded rectangle)

* w/o reference answer test case (rounded rectangle)

* Select Rules (rectangular box on the left)

* Difficulty (rounded rectangle)

* Quality (rounded rectangle)

* Language (rounded rectangle)

* Category (rounded rectangle)

* DeepSeek-R1 Distilling (rectangular box on the right)

**3. Rejection Sampling:**

* **Title:** Rejection Sampling (located at the top of the section)

* **Elements:**

* Rule-Based (rectangular box on the left)

* Ngram/Length/Format/ (rounded rectangle)

* Safety (rounded rectangle)

* Model-Based (rectangular box on the left)

* Reward Model: correctness/verbosity/coherence/complexity/helpfulness... (rounded rectangle)

* LLM-as-a-judge (rounded rectangle)

* Verify-Based (rectangular box on the left)

* Code sandbox (rounded rectangle)

* Math verify (rounded rectangle)

### Detailed Analysis

**1. Raw Data Collection:**

* Collection Rules feeds into Domain, Infomation, and Response With Reasoning Chains.

* The bottom row lists the specific datasets used in raw data collection; APPS is one of these datasets rather than a container for the others.

**2. Distilling:**

* Dedup feeds into Embedding Model and Similarity Engine.

* Select Rules feeds into Difficulty, Quality, Language, and Category.

* All elements feed into DeepSeek-R1 Distilling.

**3. Rejection Sampling:**

* Rule-Based feeds into Ngram/Length/Format/ and Safety.

* Model-Based feeds into Reward Model and LLM-as-a-judge.

* Verify-Based feeds into Code sandbox and Math verify.

### Key Observations

* The diagram outlines a multi-stage process for data refinement.

* Each stage has specific rules and processes to filter and improve data quality.

* The flow of data is generally from left to right, with specific rules feeding into various data characteristics or models.

### Interpretation

The diagram illustrates a comprehensive data processing pipeline designed to collect, refine, and filter data for specific applications, likely related to machine learning or AI. The Raw Data Collection stage gathers data from various sources and domains. The Distilling stage focuses on refining the data by removing duplicates, assessing quality, and categorizing it. Finally, the Rejection Sampling stage uses rule-based, model-based, and verification-based methods to filter out undesirable data points, ensuring the final dataset is of high quality and suitable for its intended purpose. The pipeline emphasizes the importance of data quality and relevance in AI and machine learning applications.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Data Pipeline for Model Training

### Overview

This diagram illustrates a data pipeline for training a model, encompassing raw data collection, distilling, and rejection sampling stages. The pipeline appears to be designed for generating high-quality training data, particularly for tasks involving math, code, science, and chat.

### Components/Axes

The diagram is structured into three main sections: "Raw Data Collection" (left), "Distilling" (center), and "Rejection Sampling" (right). Arrows indicate the flow of data between these stages. Within each section, several components are present, represented as boxes.

* **Raw Data Collection:**

* "Domain": Math/Code/Science/Chat

* "Collection Rules"

* "Information": Reference Answer/Test Case/Ground Truth

* "Response With Reasoning Chains"

* Datasets: "NuminaMath", "Codeforces", "OpenMathR1", "Orca", "APPS", "Natural Reasoning", "CodeContest"

* **Distilling:**

* "Dedup": Input from "Raw Data Collection"

* "Similarity Engine": (faiss(trt...)) Input from "Dedup"

* "w/o reference answer test case" Input from "Similarity Engine"

* "DeepSeek-R1 Distilling" Input from "w/o reference answer test case", "Difficulty", "Quality", "Language", "Category"

* "Difficulty" Input from "Select Rules"

* "Quality" Input from "Select Rules"

* "Language" Input from "Select Rules"

* "Category" Input from "Select Rules"

* "Select Rules" Input from "Raw Data Collection"

* "Embedding Model(m3e/gte/bge...)" Input from "Dedup"

* **Rejection Sampling:**

* "Rule-Based": Input from "Distilling"

* "Model-Based": Input from "Distilling"

* "Verify-Based": Input from "Distilling"

* "Safety"

* "Reward Model": correctness/verbosity/coherence/complexity/helpfulness...

* "LLM-as-a-judge"

* "Code sandbox"

* "Math verify"

* "Ngram/Length/Format/"

### Detailed Analysis

The data flow begins with "Raw Data Collection," where data from various sources (listed datasets) is gathered based on "Collection Rules" and "Information" (reference answers, test cases, ground truth). Responses with reasoning chains are also collected. This data is then fed into the "Distilling" stage.

Within "Distilling," the data undergoes deduplication ("Dedup") and similarity analysis using a "Similarity Engine" (faiss(trt...)). The "Dedup" process also utilizes an "Embedding Model" (m3e/gte/bge...). A key component is "DeepSeek-R1 Distilling," which receives input from the similarity engine and is influenced by factors like "Difficulty," "Quality," "Language," and "Category," which are selected by "Select Rules".
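The deduplication step described above can be sketched as follows. This is a minimal illustration, not the pipeline's implementation: the vectors are hand-written stand-ins for the output of an embedding model, the brute-force cosine comparison stands in for a similarity engine such as faiss, and the `threshold` value and function names are assumptions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def dedup_by_embedding(items, embeddings, threshold=0.95):
    """Greedy near-duplicate removal: keep an item only if it is not too
    similar to any item already kept. O(n^2); a real similarity engine
    would replace the inner loop with an index lookup."""
    kept_items, kept_vecs = [], []
    for item, vec in zip(items, embeddings):
        if all(cosine(vec, other) < threshold for other in kept_vecs):
            kept_items.append(item)
            kept_vecs.append(vec)
    return kept_items


# Hypothetical prompts with hand-made "embeddings" (assumption: a real
# pipeline would obtain these from an embedding model).
prompts = ["Solve x + 1 = 2", "Solve x+1=2", "Sort a list in Python"]
vectors = [[1.0, 0.0, 0.1], [0.99, 0.01, 0.1], [0.0, 1.0, 0.2]]
unique_prompts = dedup_by_embedding(prompts, vectors)
```

The second prompt is dropped because its vector is nearly parallel to the first; the third survives because it points in a different direction.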

The output of "Distilling" is then passed to "Rejection Sampling," which employs three approaches: "Rule-Based," "Model-Based," and "Verify-Based." "Rule-Based" sampling applies "Ngram/Length/Format/" checks and a "Safety" check. "Model-Based" sampling uses a "Reward Model" that scores correctness, verbosity, coherence, complexity, and helpfulness, together with an "LLM-as-a-judge." "Verify-Based" sampling utilizes a "Code sandbox" and "Math verify."
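As a rough sketch of how the three rejection approaches might be combined, the snippet below accepts a candidate response only if it passes a rule check, a model-style score, and a verification step. All three functions are hypothetical stand-ins: `reward_score` is a toy heuristic in place of a real reward model or LLM judge, and the thresholds are assumptions.

```python
def rule_check(response, max_chars=2000):
    """Rule-based stand-in: non-empty and within a length budget."""
    return 0 < len(response.strip()) <= max_chars


def reward_score(response):
    """Model-based stand-in (assumption): a toy heuristic that favors
    responses containing an explanation. A real pipeline would call a
    reward model or an LLM judge here."""
    return 1.0 if "because" in response.lower() else 0.3


def verify(response, reference):
    """Verify-based stand-in: the last non-empty line must equal the
    reference answer."""
    lines = [ln.strip() for ln in response.strip().splitlines() if ln.strip()]
    return bool(lines) and lines[-1] == reference


def rejection_sample(candidates, reference, min_reward=0.5):
    """Keep only the candidates that pass all three checks."""
    return [
        c for c in candidates
        if rule_check(c) and reward_score(c) >= min_reward and verify(c, reference)
    ]


candidates = [
    "x = 1 because 2 - 1 = 1\n1",   # passes all checks
    "1",                            # correct but scored too low
    "x = 3 because I guessed\n3",   # fails verification
    "   ",                          # fails the rule check
]
accepted = rejection_sample(candidates, reference="1")
```

Cheap rule checks run first so that the more expensive model and verification calls are only spent on candidates that survive them.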

### Key Observations

The pipeline emphasizes data quality and relevance. The "Distilling" stage focuses on removing redundancy and selecting data based on specific criteria (difficulty, quality, language, category). The "Rejection Sampling" stage employs multiple methods to filter out undesirable data, ensuring the final training set is safe, accurate, and aligned with desired characteristics. The use of "faiss(trt...)" suggests a focus on efficient similarity search. The inclusion of "Code sandbox" and "Math verify" indicates a strong emphasis on correctness for code and mathematical tasks.

### Interpretation

This diagram represents a sophisticated data engineering pipeline designed to create a high-quality dataset for training large language models, particularly those focused on complex reasoning tasks like math and code. The pipeline's multi-stage approach—collection, distillation, and rejection sampling—reflects a commitment to data quality and safety. The use of multiple filtering mechanisms (rule-based, model-based, verify-based) suggests a robust approach to identifying and removing potentially harmful or inaccurate data. The pipeline is likely intended to address challenges associated with generating synthetic data or curating existing datasets for specialized applications. The emphasis on reasoning chains suggests the model is intended to not only provide answers but also explain its thought process. The pipeline is designed to create a dataset that is not only large but also carefully curated to maximize the performance and reliability of the resulting model.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Data Processing Pipeline for DeepSeek-R1 Distilling

### Overview

The image is a technical flowchart illustrating a three-stage data processing pipeline for training or refining a model named "DeepSeek-R1." The pipeline flows from left to right, beginning with raw data collection, moving through a distillation/filtering phase, and concluding with a rejection sampling phase. The diagram uses a consistent visual language: beige header boxes for stage titles, light purple ovals for processes or criteria, and gray rectangles for input sources or rule categories. Arrows indicate the flow of data and decision logic.

### Components/Axes

The diagram is segmented into three primary vertical panels, each with a header:

1. **Left Panel: Raw Data Collection**

* **Header:** "Raw Data Collection"

* **Central Process:** "Collection Rules" (gray rectangle) which feeds into three data type categories (purple ovals).

* **Data Type Categories:**

* "Domain" (with sub-label: "Math/Code/Science/Chat")

* "Infomation" (Note: Likely a typo for "Information"; sub-label: "Refenrence Answer/Test Case/Ground Truth")

* "Response With Reasoning Chains"

* **Input Sources (Bottom Row):** A series of gray rectangles listing specific datasets or sources:

* "NuminaMath"

* "Codeforces"

* "OpenMathR1"

* "Orca"

* "APPS"

* "Natural Reasoning"

* "CodeContest"

* "......" (indicating additional, unspecified sources)

2. **Center Panel: Distilling**

* **Header:** "Distilling"

* **Primary Process Flow:** Two gray rectangles ("Dedup" and "Select Rules") feed into a central block labeled "DeepSeek-R1 Distilling."

* **Dedup Sub-processes (Purple Ovals):**

* "Embedding Model(m3e/gte/bge...)"

* "Similarity Engine (faiss\trt...)"

* **Select Rules Sub-processes (Purple Ovals):**

* "w/o reference answer test case"

* "Difficulty"

* "Quality"

* "Language"

* "Category"

3. **Right Panel: Rejection Sampling**

* **Header:** "Rejection Sampling"

* **Primary Method Categories (Gray Rectangles):** Three distinct approaches, each with sub-criteria (purple ovals).

* **Rule-Based:**

* "Ngram/Length/Format/"

* "Safety"

* **Model-Based:**

* "Reward Model: correctness/verbosity/coherence/complexity/helpfulness..."

* "LLM-as-a-judge"

* **Verify-Based:**

* "Code sandbox"

* "Math verify"

### Detailed Analysis

The diagram details a sequential and branching workflow:

1. **Stage 1 - Collection:** Raw data is gathered according to "Collection Rules." The data is categorized by domain (e.g., Math, Code), by the presence of verified information (reference answers, ground truth), and by the inclusion of reasoning chains. The sources for this data are diverse, including competitive programming platforms (Codeforces, CodeContest), math datasets (NuminaMath, OpenMathR1), and general reasoning corpora (Orca, Natural Reasoning).

2. **Stage 2 - Distilling:** The collected data undergoes two parallel filtering processes before being used for "DeepSeek-R1 Distilling."

* **Dedup (Deduplication):** Uses embedding models (like m3e, gte, bge) and similarity engines (like faiss, trt) to identify and remove duplicate or near-duplicate entries.

* **Select Rules:** Applies multiple filters to curate the dataset. This includes removing test cases without reference answers, and selecting based on difficulty, quality, language, and category.

3. **Stage 3 - Rejection Sampling:** The distilled dataset is further refined using three evaluation methods to reject low-quality samples.

* **Rule-Based:** Applies heuristic rules concerning n-grams, length, format, and safety.

* **Model-Based:** Uses a Reward Model to score responses on dimensions like correctness, verbosity, coherence, complexity, and helpfulness. It also employs an "LLM-as-a-judge" for evaluation.

* **Verify-Based:** Uses executable environments for verification: a "Code sandbox" for programming tasks and "Math verify" for mathematical problems.
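The rule-based filters in Stage 3 (n-gram, length, format) could look roughly like the sketch below. The diagram does not specify any thresholds or formats, so the token limits, the repetition ratio, and the `<think>...</think>` format requirement are all illustrative assumptions.

```python
from collections import Counter


def max_ngram_repeat_ratio(text, n=3):
    """Share of all n-grams taken up by the single most frequent n-gram."""
    tokens = text.split()
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    most_common_count = Counter(ngrams).most_common(1)[0][1]
    return most_common_count / len(ngrams)


def has_reasoning_format(text):
    """Hypothetical format rule: exactly one <think>...</think> block,
    with the opening tag before the closing tag."""
    return (
        text.count("<think>") == 1
        and text.count("</think>") == 1
        and text.index("<think>") < text.index("</think>")
    )


def passes_rules(response, min_tokens=5, max_tokens=4096, max_repeat=0.3):
    """Apply length, n-gram repetition, and format rules in order."""
    n_tokens = len(response.split())
    if not (min_tokens <= n_tokens <= max_tokens):
        return False
    if max_ngram_repeat_ratio(response) > max_repeat:
        return False
    return has_reasoning_format(response)


good = "<think> 2 plus 2 equals 4 </think> The answer is 4"
looping = "<think> wait wait wait wait wait wait wait wait </think> 4"
unformatted = "The answer is 4 and nothing else"
```

Here `good` passes, `looping` is rejected for repetition, and `unformatted` is rejected for missing the assumed reasoning tags.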

### Key Observations

* **Process-Oriented:** The diagram is purely a process flowchart. It contains no numerical data, charts, or quantitative metrics. Its purpose is to outline the methodology, not present results.

* **Typographical Error:** The label "Infomation" in the first panel is a clear misspelling of "Information."

* **Technical Specificity:** The diagram names specific tools and models (e.g., faiss, m3e, gte, bge), indicating a concrete technical implementation rather than a conceptual overview.

* **Comprehensive Filtering:** The pipeline employs a multi-faceted approach to data quality, moving from broad collection rules to specific deduplication, attribute-based selection, and finally, multi-method rejection sampling.

### Interpretation

This diagram outlines a sophisticated data curation pipeline designed to create a high-quality training dataset for the "DeepSeek-R1" model. The process emphasizes **quality over quantity**.

* **The "Why":** The multi-stage filtering (Distilling + Rejection Sampling) suggests that the initial raw data is noisy or varied. The goal is to distill it down to a core set of high-value examples that are diverse (by domain/category), challenging (by difficulty), correct (via reference answers and verification), and well-formed (via rule and model-based checks).

* **Relationship Between Stages:** The stages are interdependent. "Raw Data Collection" defines the input universe. "Distilling" performs coarse-grained filtering to manage scale and remove obvious duplicates and low-relevance items. "Rejection Sampling" performs fine-grained, often computationally expensive, quality assessment on the remaining data to ensure only the best examples are used for model training.

* **Notable Methodology:** The inclusion of "Response With Reasoning Chains" as a primary data type and "LLM-as-a-judge" as an evaluation method indicates a focus on training the model for **chain-of-thought reasoning** and complex problem-solving, not just final answer accuracy. The "Verify-Based" methods (Code sandbox, Math verify) add a layer of objective, ground-truth validation that is crucial for technical domains like math and coding.

In essence, this pipeline is engineered to transform large, heterogeneous raw data into a refined, high-signal dataset capable of teaching a model robust reasoning and problem-solving skills.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Data Processing Pipeline for LLM-Based Systems

### Overview

The flowchart illustrates a three-stage pipeline for processing data in LLM-based systems: **Raw Data Collection**, **Distilling**, and **Rejection Sampling**. Each stage includes specific components and rules for data handling, quality control, and filtering.

---

### Components/Axes

#### 1. Raw Data Collection

- **Domain**: Math, Code, Science, Chat

- **Collection Rules**:

- **Information**: Reference Answer, Test Case, Ground Truth

- **Response With Reasoning Chains**:

- Sources: NuminaMath, Codeforces, OpenMathR1, Orca, APPS, Natural Reasoning, CodeContest

#### 2. Distilling

- **Dedup**:

- Embedding Model (m3e/gte/bge...)

- Similarity Engine (faiss/tr...)

- w/o reference answer test case

- **DeepSeek-R1 Distilling**:

- Select Rules: Difficulty, Quality, Language, Category

#### 3. Rejection Sampling

- **Rule-Based**:

- Ngram/Length/Format

- Safety

- **Model-Based**:

- Reward Model: correctness/verbosity/coherence/complexity/helpfulness

- LLM-as-a-judge

- **Verify-Based**:

- Code sandbox

- Math verify

---

### Detailed Analysis

#### Raw Data Collection

- **Domain**: Broad categorization of data sources (Math, Code, Science, Chat).

- **Information**: Focuses on structured data (Reference Answer, Test Case, Ground Truth).

- **Response With Reasoning Chains**: Aggregates outputs from diverse LLM benchmarks (e.g., NuminaMath for math, Codeforces for coding).

#### Distilling

- **Dedup**:

- Uses embeddings (e.g., m3e/gte/bge) and similarity engines (faiss/tr) to remove duplicates.

- Excludes test cases without reference answers.

- **DeepSeek-R1 Distilling**:

- Applies **Select Rules** to refine data based on difficulty, quality, language, and category.
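A minimal sketch of the Select Rules step, assuming each sample already carries difficulty, quality, language, and category annotations. The `Sample` fields, their scales, and the default thresholds are hypothetical; the diagram names the four criteria but not how they are measured.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    prompt: str
    difficulty: int   # assumed scale: 1 (easy) to 5 (hard)
    quality: float    # assumed scale: 0.0 to 1.0
    language: str     # e.g. "en"
    category: str     # e.g. "math", "code"


def select(samples, min_difficulty=3, min_quality=0.7,
           languages=("en",), categories=("math", "code")):
    """Keep samples that satisfy every selection criterion."""
    return [
        s for s in samples
        if s.difficulty >= min_difficulty
        and s.quality >= min_quality
        and s.language in languages
        and s.category in categories
    ]


pool = [
    Sample("Prove the triangle inequality", 4, 0.9, "en", "math"),
    Sample("What is 1 + 1?", 1, 0.9, "en", "math"),        # too easy
    Sample("Implement quicksort", 3, 0.5, "en", "code"),   # low quality
    Sample("Tell me a joke", 3, 0.8, "en", "chat"),        # category excluded
]
selected = select(pool)
```

Only the first sample survives with the default thresholds; loosening any one argument shows how each criterion narrows the pool independently.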

#### Rejection Sampling

- **Rule-Based**:

- Filters data using syntactic rules (Ngram/Length/Format) and safety checks.

- **Model-Based**:

- Uses a **Reward Model** to evaluate data on correctness, verbosity, coherence, complexity, and helpfulness.

- Employs LLM-as-a-judge for qualitative assessment.

- **Verify-Based**:

- Verifies code and math solutions via sandboxing and automated checks.
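The math side of the verification step above can be illustrated with a small answer checker. This is only a sketch under the assumption that final answers are plain numbers or fractions; it compares values exactly with `fractions.Fraction` and falls back to string comparison otherwise. Symbolic or LaTeX equivalence, which a production verifier would need, is out of scope here.

```python
from fractions import Fraction


def parse_number(text):
    """Parse '0.5', '1/2', or '1,000' into an exact Fraction, else None."""
    cleaned = text.strip().replace(",", "")
    try:
        return Fraction(cleaned)
    except (ValueError, ZeroDivisionError):
        return None


def math_verify(candidate, reference):
    """Return True when the candidate answer equals the reference.

    Numeric answers are compared by exact value, so '0.5' matches '1/2'.
    Non-numeric answers fall back to a whitespace-trimmed string match.
    """
    got = parse_number(candidate)
    want = parse_number(reference)
    if got is None or want is None:
        return candidate.strip() == reference.strip()
    return got == want


results = [
    math_verify("0.5", "1/2"),      # same value, different notation
    math_verify("1,000", "1000"),   # thousands separator ignored
    math_verify("0.33", "1/3"),     # rounded answer is not exact
]
```

Exact rational comparison avoids the false mismatches that floating-point equality would produce for answers such as `0.1` versus `1/10`.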

---

### Key Observations

1. **Data Flow**: Raw data is collected, distilled to remove redundancy and improve quality, then filtered using hybrid rule/model-based methods.

2. **Hybrid Approach**: Combines rule-based (e.g., safety checks) and model-based (e.g., reward model) rejection criteria.

3. **Domain-Specific Sources**: Sources like Codeforces and OpenMathR1 suggest domain-specific data collection.

4. **DeepSeek-R1 Integration**: Indicates a focus on iterative refinement using specialized distillation techniques.

---

### Interpretation

This pipeline emphasizes **quality assurance** at every stage:

- **Raw Data Collection** ensures diverse, domain-specific inputs.

- **Distilling** refines data by removing duplicates and applying domain-specific rules.

- **Rejection Sampling** acts as a final gatekeeper, using both rigid rules (e.g., format constraints) and nuanced model evaluations (e.g., helpfulness).

The use of **DeepSeek-R1** in the Distilling stage suggests an emphasis on iterative improvement, while the **Reward Model** in Rejection Sampling highlights a focus on multi-dimensional data quality metrics. The pipeline likely aims to balance efficiency (via rule-based filtering) and accuracy (via model-based evaluation) in LLM training or inference workflows.
