## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

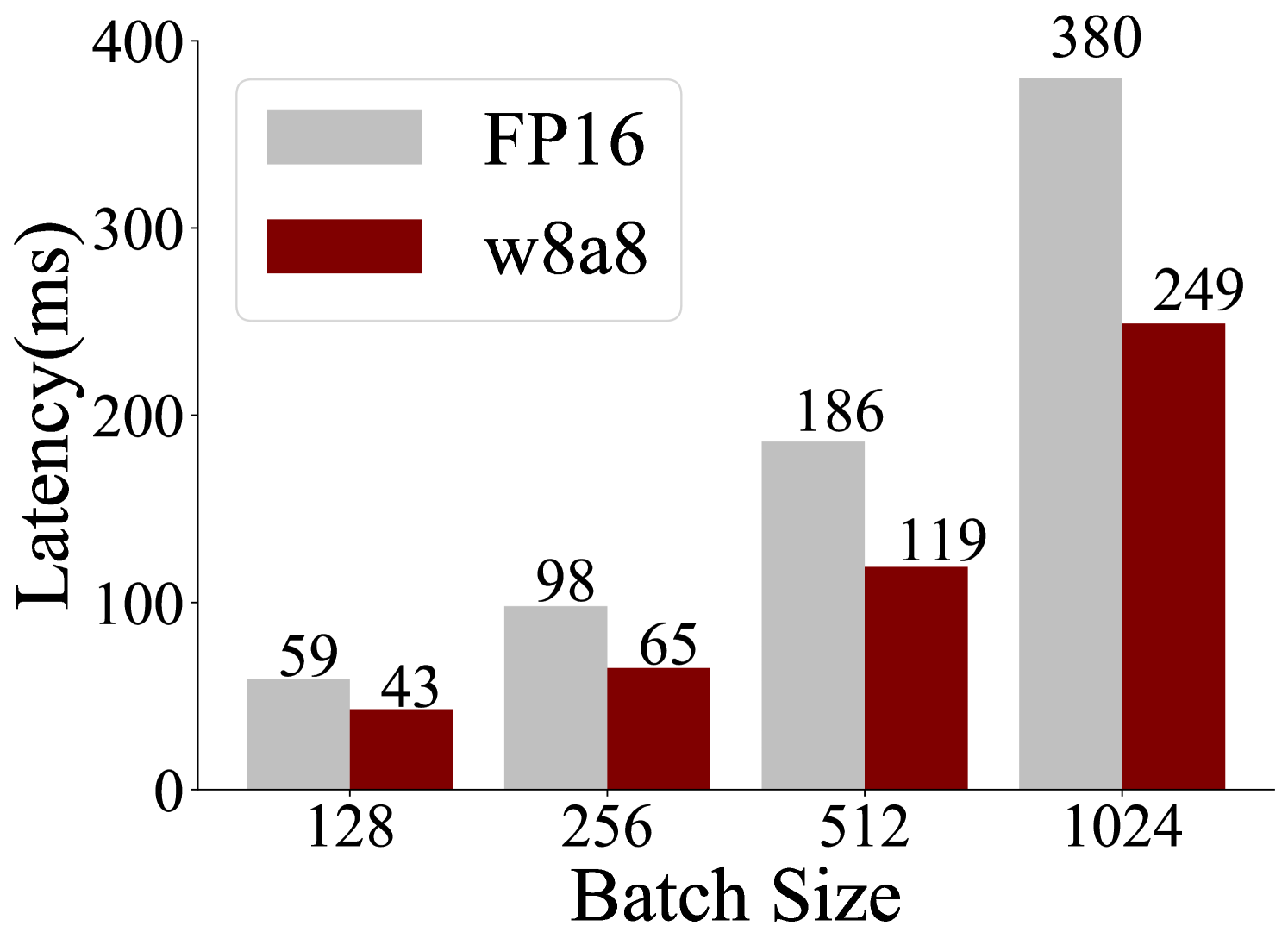

This bar chart shows latency in milliseconds (ms) for two configurations, FP16 and w8a8, at batch sizes of 128, 256, 512, and 1024.

### Components/Axes

* **Y-axis Title:** "Latency(ms)"

    * **Scale:** Linear, ranging from 0 to 400, with major tick marks at 0, 100, 200, 300, and 400.

* **X-axis Title:** "Batch Size"

    * **Categories:** 128, 256, 512, 1024.

* **Legend:** Located in the top-left quadrant of the chart.

    * **FP16:** Represented by a light gray rectangle.

    * **w8a8:** Represented by a dark red rectangle.

### Detailed Analysis

The chart presents paired bars for each batch size, with the left bar representing FP16 and the right bar representing w8a8.

* **Batch Size 128:**

    * FP16 (light gray bar): 59 ms.

    * w8a8 (dark red bar): 43 ms.

* **Batch Size 256:**

    * FP16 (light gray bar): 98 ms.

    * w8a8 (dark red bar): 65 ms.

* **Batch Size 512:**

    * FP16 (light gray bar): 186 ms.

    * w8a8 (dark red bar): 119 ms.

* **Batch Size 1024:**

    * FP16 (light gray bar): 380 ms.

    * w8a8 (dark red bar): 249 ms.

### Key Observations

* **Trend:** For both FP16 and w8a8, latency increases with every step up in batch size. From batch size 256 upward, each doubling of the batch size raises latency by roughly 1.8x to 2.1x.

* **Comparison:** The w8a8 configuration consistently shows lower latency than the FP16 configuration across all tested batch sizes.

* **Magnitude of Difference:** The absolute latency difference between FP16 and w8a8 grows with batch size: 16 ms at batch size 128 (59 - 43), 33 ms at 256 (98 - 65), 67 ms at 512 (186 - 119), and 131 ms at 1024 (380 - 249).

* **Steepest Increase:** The most significant jump in latency for FP16 occurs between batch sizes 512 (186 ms) and 1024 (380 ms), an increase of 194 ms. For w8a8, the largest increase is between batch sizes 512 (119 ms) and 1024 (249 ms), an increase of 130 ms.
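The arithmetic behind these observations can be checked with a short script. This is a minimal sketch that assumes the bar labels were transcribed correctly; the helper names (`gaps`, `speedups`, `step_increases`) are illustrative and do not come from the chart or its source.

```python
# Latency readings (ms) transcribed from the bar labels in the chart.
batch_sizes = [128, 256, 512, 1024]
fp16_ms = [59, 98, 186, 380]
w8a8_ms = [43, 65, 119, 249]


def gaps(a, b):
    """Absolute latency difference a - b at each batch size."""
    return [x - y for x, y in zip(a, b)]


def speedups(a, b):
    """Ratio a / b at each batch size (values above 1 mean b is faster)."""
    return [x / y for x, y in zip(a, b)]


def step_increases(values):
    """Latency increase between consecutive batch sizes."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


# Per-batch-size gap and relative speedup of w8a8 over FP16.
for bs, gap, ratio in zip(batch_sizes,
                          gaps(fp16_ms, w8a8_ms),
                          speedups(fp16_ms, w8a8_ms)):
    print(f"batch {bs:>4}: gap {gap:>3} ms, FP16/w8a8 = {ratio:.2f}x")

# Step-to-step increases for each configuration.
print("FP16 step increases (ms):", step_increases(fp16_ms))
print("w8a8 step increases (ms):", step_increases(w8a8_ms))
```

The ratio column puts the absolute gap in context: the gap grows at every step, while the relative speedup stays within a much narrower band.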

### Interpretation

This chart demonstrates the performance characteristics of two different data precision/quantization schemes (FP16 and w8a8) in terms of latency as a function of batch size.

The data suggests that the w8a8 configuration is more efficient, exhibiting lower latency across all batch sizes. This is likely due to its reduced precision (8-bit weights and 8-bit activations) compared to FP16 (16-bit floating-point), which can lead to faster computations and reduced memory bandwidth requirements.

Increasing latency with larger batch sizes is expected, since each batch carries more work; factors such as increased memory usage, cache contention, and parallel processing overhead can add to it. The absolute gap between FP16 and w8a8 widens from 16 ms at batch size 128 to 131 ms at batch size 1024, while the relative speedup (FP16 latency divided by w8a8 latency) rises from about 1.37x at batch size 128 to roughly 1.5x to 1.6x at batch sizes 256 and above. For applications that process large batches, w8a8 therefore saves the most wall-clock time per batch, although its proportional advantage levels off rather than continuing to grow. Between batch sizes 512 and 1024, latency roughly doubles for both configurations (about 2.04x for FP16 and 2.09x for w8a8), so the chart by itself does not show FP16 reaching a distinct saturation point that w8a8 avoids.
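To relate latency to throughput, one can divide each batch size by its latency. The sketch below assumes that the chart's latency is the time to process one full batch and that throughput can be expressed as batch items per millisecond; the chart does not state what a batch item is, and the function names are hypothetical.

```python
# Latency readings (ms) transcribed from the chart.
batch_sizes = [128, 256, 512, 1024]
latency_ms = {
    "FP16": [59, 98, 186, 380],
    "w8a8": [43, 65, 119, 249],
}


def throughput_per_ms(sizes, latencies):
    """Batch items processed per millisecond (assumes latency is per batch)."""
    return [size / latency for size, latency in zip(sizes, latencies)]


def growth_factors(values):
    """Multiplicative change between consecutive batch sizes."""
    return [later / earlier for earlier, later in zip(values, values[1:])]


for name, latencies in latency_ms.items():
    tput = throughput_per_ms(batch_sizes, latencies)
    growth = growth_factors(latencies)
    print(name)
    print("  items/ms:      ", [round(t, 2) for t in tput])
    print("  latency growth:", [round(g, 2) for g in growth])
```

Under these assumptions, throughput for both configurations is highest at batch size 512 and slightly lower at 1024, which is consistent with latency growing a little faster than the batch size over that last step.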