EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

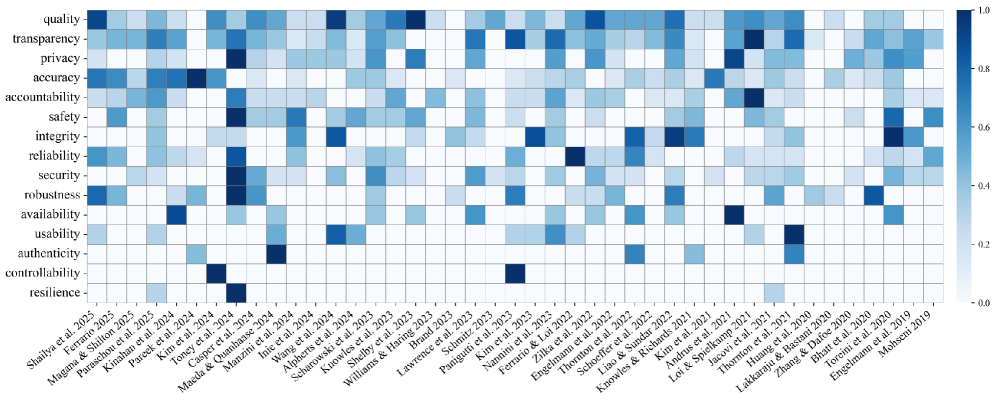

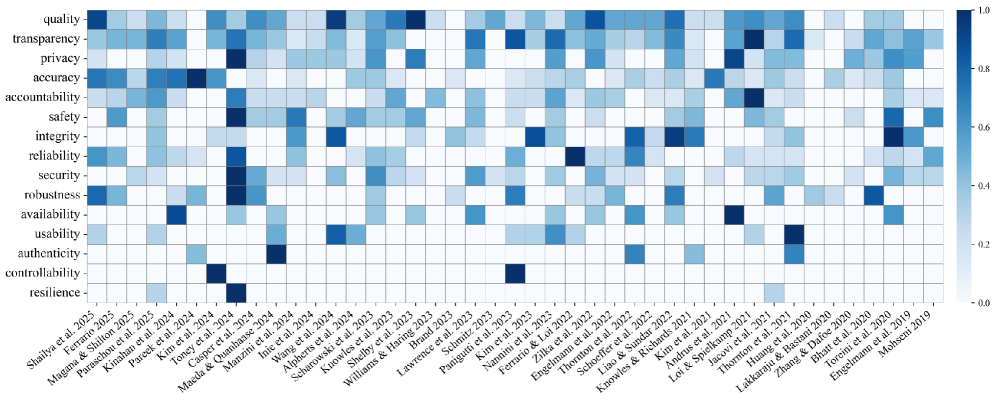

## Chart Type: Heatmap of AI/ML System Attributes Addressed by Publications

### Overview

This image displays a heatmap showing how strongly a collection of academic publications addresses various attributes of AI/ML systems. The Y-axis lists 15 attributes, and the X-axis lists 41 publications, each identified by author name(s) and publication year. The intensity of blue in each cell indicates the degree to which a publication addresses an attribute, from 0.0 (white/very light blue) to 1.0 (darkest blue), as shown by the color bar on the right.

### Components/Axes

**Main Chart Area:** A grid of 15 rows by 41 columns, matching the axis lists below, with each cell colored on a blue gradient.

**Y-axis (Left Side):**

This axis lists 15 attributes, from top to bottom:

* quality

* transparency

* privacy

* accuracy

* accountability

* safety

* integrity

* reliability

* security

* robustness

* availability

* usability

* authenticity

* controllability

* resilience

**X-axis (Bottom Side):**

This axis lists 41 publications, rotated for readability, from left to right:

* Shailya et al. 2025

* Magana & Ferrario 2025

* Paraschou 2025

* Kinahan et al. 2024

* Pareek et al. 2024

* Kim et al. 2024

* Toney et al. 2024

* Casper et al. 2024

* Maeda & Quanhaase 2024

* Manzini et al. 2024

* Inie et al. 2024

* Wang et al. 2024

* Alpherts et al. 2024

* Scharowski et al. 2024

* Knowles et al. 2023

* Shelby et al. 2023

* Williams & Haring 2023

* Brand 2023

* Lawrence et al. 2023

* Schmitz et al. 2023

* Panigutti et al. 2023

* Kim et al. 2023

* Nannini et al. 2023

* Ferrario et al. 2023

* Zilka et al. 2022

* Engelmann et al. 2022

* Thornton et al. 2022

* Schoeffer et al. 2022

* Liao & Sundar 2022

* Knowles & Richards 2021

* Andrus et al. 2021

* Kim et al. 2021

* Loi & Spielkamp 2021

* Jacovi et al. 2021

* Thornton et al. 2021

* Lakkaraju & Bastani 2020

* Zhang & Dafoe 2020

* Bhatt et al. 2020

* Toreini et al. 2020

* Engelmann et al. 2020

* Mohseni 2019
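
The label list above can be checked mechanically. The following is a minimal sketch, not part of the original figure, that copies the labels as transcribed here, counts them, and tallies publications per year. The names `LABELS`, `year_of`, and `per_year` are hypothetical and introduced only for illustration.

```python
import re
from collections import Counter

# Column labels copied from the x-axis list above, left to right.
LABELS = [
    "Shailya et al. 2025", "Magana & Ferrario 2025", "Paraschou 2025",
    "Kinahan et al. 2024", "Pareek et al. 2024", "Kim et al. 2024",
    "Toney et al. 2024", "Casper et al. 2024", "Maeda & Quanhaase 2024",
    "Manzini et al. 2024", "Inie et al. 2024", "Wang et al. 2024",
    "Alpherts et al. 2024", "Scharowski et al. 2024", "Knowles et al. 2023",
    "Shelby et al. 2023", "Williams & Haring 2023", "Brand 2023",
    "Lawrence et al. 2023", "Schmitz et al. 2023", "Panigutti et al. 2023",
    "Kim et al. 2023", "Nannini et al. 2023", "Ferrario et al. 2023",
    "Zilka et al. 2022", "Engelmann et al. 2022", "Thornton et al. 2022",
    "Schoeffer et al. 2022", "Liao & Sundar 2022", "Knowles & Richards 2021",
    "Andrus et al. 2021", "Kim et al. 2021", "Loi & Spielkamp 2021",
    "Jacovi et al. 2021", "Thornton et al. 2021", "Lakkaraju & Bastani 2020",
    "Zhang & Dafoe 2020", "Bhatt et al. 2020", "Toreini et al. 2020",
    "Engelmann et al. 2020", "Mohseni 2019",
]


def year_of(label: str) -> int:
    """Return the trailing four-digit year of a label such as 'Brand 2023'."""
    match = re.search(r"(\d{4})$", label)
    if match is None:
        raise ValueError(f"no trailing year in {label!r}")
    return int(match.group(1))


# Number of publications per year, keyed by year.
per_year = Counter(year_of(label) for label in LABELS)

if __name__ == "__main__":
    print(len(LABELS), "labels")
    for year in sorted(per_year, reverse=True):
        print(year, per_year[year])
```

Because the labels are sorted newest first, the per-year tally also gives a quick view of how the sample is spread across 2019 to 2025.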

**Color Bar/Legend (Right Side):**

A vertical color bar is positioned on the far right, indicating the mapping of color intensity to numerical values. The gradient ranges from white (bottom) to dark blue (top).

* 1.0 (darkest blue)

* 0.8

* 0.6

* 0.4

* 0.2

* 0.0 (white/lightest blue)
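
To make the color-bar mapping concrete, here is a small sketch of how a value in [0.0, 1.0] could be turned into a cell color. It assumes a plain linear blend between white and one dark blue endpoint. The chart's actual colormap is not stated in the description, so the endpoint `DARK_BLUE` and the function names are assumptions for illustration only.

```python
# Assumed endpoints of the gradient; the real chart may use a different,
# possibly non-linear, colormap.
WHITE = (255, 255, 255)
DARK_BLUE = (8, 48, 107)


def lerp(start: float, end: float, t: float) -> float:
    """Linear interpolation between start (t=0) and end (t=1)."""
    return start + (end - start) * t


def value_to_rgb(value: float, low=WHITE, high=DARK_BLUE) -> tuple:
    """Map a value to an RGB triple, clamping inputs outside [0, 1]."""
    t = min(1.0, max(0.0, value))
    return tuple(round(lerp(lo, hi, t)) for lo, hi in zip(low, high))


def to_hex(rgb: tuple) -> str:
    """Format an RGB triple as a CSS-style hex string."""
    return "#{:02x}{:02x}{:02x}".format(*rgb)


if __name__ == "__main__":
    # Print the color for each tick shown on the color bar.
    for tick in (0.0, 0.2, 0.4, 0.6, 0.8, 1.0):
        print(tick, to_hex(value_to_rgb(tick)))
```

Reading values back from a rendered image works in the opposite direction and is only approximate, which is why the figures in the analysis below carry a `~` prefix.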

### Detailed Analysis

The heatmap shows how focus on different AI/ML attributes is distributed across publications dated 2019 to 2025. Darker blue cells indicate a higher degree of focus (closer to 1.0), while lighter blue or white cells indicate little or no focus (closer to 0.0).

**General Trends by Attribute (Rows):**

* **quality:** Shows moderate to high focus in several papers, notably Shailya et al. 2025 (~0.9), Kinahan et al. 2024 (~0.7), Wang et al. 2024 (~0.7), Schmitz et al. 2023 (~0.7), Engelmann et al. 2022 (~0.7), and Jacovi et al. 2021 (~0.7). Many other papers show some focus (~0.2-0.5).

* **transparency:** Widely addressed with moderate to high values. Strongest in Magana & Ferrario 2025 (~0.8), with ~0.7 in Pareek et al. 2024, Manzini et al. 2024, Alpherts et al. 2024, Shelby et al. 2023, Panigutti et al. 2023, Nannini et al. 2023, Ferrario et al. 2023, Zilka et al. 2022, Schoeffer et al. 2022, Liao & Sundar 2022, Knowles & Richards 2021, Andrus et al. 2021, Kim et al. 2021, Loi & Spielkamp 2021, Zhang & Dafoe 2020, Bhatt et al. 2020, Toreini et al. 2020, Engelmann et al. 2020, and Mohseni 2019.

* **privacy:** Shows high focus in Paraschou 2025 (~0.9), Toney et al. 2024 (~0.9), Inie et al. 2024 (~0.9), Brand 2023 (~0.9), Kim et al. 2023 (~0.9), and Lakkaraju & Bastani 2020 (~0.9). Several others show moderate focus.

* **accuracy:** Very widely addressed, with many papers showing high focus. Notably, Kim et al. 2024 (~0.9), Maeda & Quanhaase 2024 (~0.9), Scharowski et al. 2024 (~0.9), Lawrence et al. 2023 (~0.9), Thornton et al. 2022 (~0.9), and Thornton et al. 2021 (~0.9).

* **accountability:** Shows high focus in Casper et al. 2024 (~0.9), Williams & Haring 2023 (~0.9), and Loi & Spielkamp 2021 (~0.9). Many other papers show moderate focus.

* **safety:** Shows high focus in Toney et al. 2024 (~0.9) and Zhang & Dafoe 2020 (~0.9).

* **integrity:** Shows high focus in Williams & Haring 2023 (~0.9) and Engelmann et al. 2020 (~0.9).

* **reliability:** Shows high focus in Maeda & Quanhaase 2024 (~0.9) and Mohseni 2019 (~0.9).

* **security:** Shows high focus in Inie et al. 2024 (~0.9), Brand 2023 (~0.9), and Lakkaraju & Bastani 2020 (~0.9).

* **robustness:** Shows high focus in Shailya et al. 2025 (~0.9), Alpherts et al. 2024 (~0.9), and Kim et al. 2023 (~0.9).

* **availability:** Shows high focus in Panigutti et al. 2023 (~0.9) and Jacovi et al. 2021 (~0.9).

* **usability:** Shows high focus in Knowles et al. 2023 (~0.9) and Schoeffer et al. 2022 (~0.9).

* **authenticity:** Shows high focus in Manzini et al. 2024 (~0.9) and Zilka et al. 2022 (~0.9).

* **controllability:** Shows high focus in Toney et al. 2024 (~0.9) and Loi & Spielkamp 2021 (~0.9).

* **resilience:** Shows high focus in Casper et al. 2024 (~0.9) and Mohseni 2019 (~0.9).
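
The row-wise readings above can be tallied to see how many cells the description actually names for each attribute. The sketch below records only the approximate values quoted in the list; cells that are not named are unknown rather than zero, so the counts are a lower bound on coverage. The helper `at`, the dictionary `HIGH_FOCUS`, and the constant `N_PUBLICATIONS` (the length of the x-axis list) are hypothetical names used for illustration.

```python
def at(value, *papers):
    """Build {paper: value} for papers that share one approximate reading."""
    return {paper: value for paper in papers}


# Approximate readings named in the row-wise list above (not the full grid).
HIGH_FOCUS = {
    "quality": {
        **at(0.9, "Shailya et al. 2025"),
        **at(0.7, "Kinahan et al. 2024", "Wang et al. 2024",
             "Schmitz et al. 2023", "Engelmann et al. 2022",
             "Jacovi et al. 2021"),
    },
    "transparency": {
        **at(0.8, "Magana & Ferrario 2025"),
        **at(0.7, "Pareek et al. 2024", "Manzini et al. 2024",
             "Alpherts et al. 2024", "Shelby et al. 2023",
             "Panigutti et al. 2023", "Nannini et al. 2023",
             "Ferrario et al. 2023", "Zilka et al. 2022",
             "Schoeffer et al. 2022", "Liao & Sundar 2022",
             "Knowles & Richards 2021", "Andrus et al. 2021",
             "Kim et al. 2021", "Loi & Spielkamp 2021",
             "Zhang & Dafoe 2020", "Bhatt et al. 2020",
             "Toreini et al. 2020", "Engelmann et al. 2020",
             "Mohseni 2019"),
    },
    "privacy": at(0.9, "Paraschou 2025", "Toney et al. 2024",
                  "Inie et al. 2024", "Brand 2023", "Kim et al. 2023",
                  "Lakkaraju & Bastani 2020"),
    "accuracy": at(0.9, "Kim et al. 2024", "Maeda & Quanhaase 2024",
                   "Scharowski et al. 2024", "Lawrence et al. 2023",
                   "Thornton et al. 2022", "Thornton et al. 2021"),
    "accountability": at(0.9, "Casper et al. 2024", "Williams & Haring 2023",
                         "Loi & Spielkamp 2021"),
    "safety": at(0.9, "Toney et al. 2024", "Zhang & Dafoe 2020"),
    "integrity": at(0.9, "Williams & Haring 2023", "Engelmann et al. 2020"),
    "reliability": at(0.9, "Maeda & Quanhaase 2024", "Mohseni 2019"),
    "security": at(0.9, "Inie et al. 2024", "Brand 2023",
                   "Lakkaraju & Bastani 2020"),
    "robustness": at(0.9, "Shailya et al. 2025", "Alpherts et al. 2024",
                     "Kim et al. 2023"),
    "availability": at(0.9, "Panigutti et al. 2023", "Jacovi et al. 2021"),
    "usability": at(0.9, "Knowles et al. 2023", "Schoeffer et al. 2022"),
    "authenticity": at(0.9, "Manzini et al. 2024", "Zilka et al. 2022"),
    "controllability": at(0.9, "Toney et al. 2024", "Loi & Spielkamp 2021"),
    "resilience": at(0.9, "Casper et al. 2024", "Mohseni 2019"),
}

N_PUBLICATIONS = 41  # number of labels in the x-axis list

# Named cells per attribute, and the share of the whole grid they cover.
counts = {attr: len(cells) for attr, cells in HIGH_FOCUS.items()}
ranking = sorted(counts.items(), key=lambda kv: kv[1], reverse=True)
listed_cells = sum(counts.values())
total_cells = len(HIGH_FOCUS) * N_PUBLICATIONS

if __name__ == "__main__":
    for attr, n in ranking:
        print(f"{attr:16s} {n}")
    print(f"{listed_cells} of {total_cells} cells named")
```

On this transcription, transparency has 20 named cells, quality, privacy, and accuracy have 6 each, and 63 of 615 cells are named overall. Statements about breadth, such as accuracy being very widely addressed, therefore rest on lighter cells that the list does not enumerate.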

**General Trends by Publication (Columns):**

* **Shailya et al. 2025:** High focus on quality and robustness (~0.9 each).

* **Magana & Ferrario 2025:** High focus on transparency (~0.8).

* **Paraschou 2025:** High focus on privacy (~0.9).

* **Kinahan et al. 2024:** High focus on quality (~0.7).

* **Pareek et al. 2024:** High focus on transparency (~0.7).

* **Kim et al. 2024:** High focus on accuracy (~0.9).

* **Toney et al. 2024:** High focus on privacy, safety, and controllability (~0.9 each).

* **Casper et al. 2024:** High focus on accountability and resilience (~0.9 each).

* **Maeda & Quanhaase 2024:** High focus on accuracy and reliability (~0.9 each).

* **Manzini et al. 2024:** High focus on transparency and authenticity (~0.7 and ~0.9 respectively).

* **Inie et al. 2024:** High focus on privacy and security (~0.9 each).

* **Wang et al. 2024:** High focus on quality (~0.7).

* **Alpherts et al. 2024:** High focus on transparency and robustness (~0.7 and ~0.9 respectively).

* **Scharowski et al. 2024:** High focus on accuracy (~0.9).

* **Knowles et al. 2023:** High focus on usability (~0.9).

* **Shelby et al. 2023:** High focus on transparency (~0.7).

* **Williams & Haring 2023:** High focus on accountability and integrity (~0.9 each).

* **Brand 2023:** High focus on privacy and security (~0.9 each).

* **Lawrence et al. 2023:** High focus on accuracy (~0.9).

* **Schmitz et al. 2023:** High focus on quality (~0.7).

* **Panigutti et al. 2023:** High focus on transparency and availability (~0.7 and ~0.9 respectively).

* **Kim et al. 2023:** High focus on privacy and robustness (~0.9 each).

* **Nannini et al. 2023:** High focus on transparency (~0.7).

* **Ferrario et al. 2023:** High focus on transparency (~0.7).

* **Zilka et al. 2022:** High focus on transparency and authenticity (~0.7 and ~0.9 respectively).

* **Engelmann et al. 2022:** High focus on quality (~0.7).

* **Thornton et al. 2022:** High focus on accuracy (~0.9).

* **Schoeffer et al. 2022:** High focus on transparency and usability (~0.7 and ~0.9 respectively).

* **Liao & Sundar 2022:** High focus on transparency (~0.7).

* **Knowles & Richards 2021:** High focus on transparency (~0.7).

* **Andrus et al. 2021:** High focus on transparency (~0.7).

* **Kim et al. 2021:** High focus on transparency (~0.7).

* **Loi & Spielkamp 2021:** High focus on transparency, accountability, and controllability (~0.7, ~0.9, and ~0.9 respectively).

* **Jacovi et al. 2021:** High focus on quality and availability (~0.7 and ~0.9 respectively).

* **Thornton et al. 2021:** High focus on accuracy (~0.9).

* **Lakkaraju & Bastani 2020:** High focus on privacy and security (~0.9 each).

* **Zhang & Dafoe 2020:** High focus on transparency and safety (~0.7 and ~0.9 respectively).

* **Bhatt et al. 2020:** High focus on transparency (~0.7).

* **Toreini et al. 2020:** High focus on transparency (~0.7).

* **Engelmann et al. 2020:** High focus on transparency and integrity (~0.7 and ~0.9 respectively).

* **Mohseni 2019:** High focus on transparency, reliability, and resilience (~0.7, ~0.9, and ~0.9 respectively).
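
The column-wise list above is the row-wise list regrouped by publication. As a rough sketch of that regrouping, the code below pivots a handful of `(attribute, publication, value)` readings taken from the text into a per-publication view. Only four publications are included to keep the example short, and `CELLS`, `by_publication`, and `summarize` are hypothetical names.

```python
from collections import defaultdict

# A subset of the approximate readings quoted above, one tuple per cell.
CELLS = [
    ("privacy", "Toney et al. 2024", 0.9),
    ("safety", "Toney et al. 2024", 0.9),
    ("controllability", "Toney et al. 2024", 0.9),
    ("transparency", "Loi & Spielkamp 2021", 0.7),
    ("accountability", "Loi & Spielkamp 2021", 0.9),
    ("controllability", "Loi & Spielkamp 2021", 0.9),
    ("accountability", "Casper et al. 2024", 0.9),
    ("resilience", "Casper et al. 2024", 0.9),
    ("transparency", "Mohseni 2019", 0.7),
    ("reliability", "Mohseni 2019", 0.9),
    ("resilience", "Mohseni 2019", 0.9),
]


def by_publication(cells):
    """Pivot (attribute, publication, value) tuples into {publication: {attribute: value}}."""
    columns = defaultdict(dict)
    for attribute, paper, value in cells:
        columns[paper][attribute] = value
    return dict(columns)


def summarize(paper, attrs):
    """One line per publication, strongest readings first, ties in alphabetical order."""
    ordered = sorted(attrs.items(), key=lambda kv: (-kv[1], kv[0]))
    return f"{paper}: " + ", ".join(f"{a} (~{v})" for a, v in ordered)


if __name__ == "__main__":
    for paper, attrs in by_publication(CELLS).items():
        print(summarize(paper, attrs))
```

Running the same pivot over every named cell and comparing it with the list above is one way to confirm that the row-wise and column-wise summaries agree.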

### Key Observations

* **Transparency and Accuracy are Most Addressed:** The rows for "transparency" and "accuracy" show the highest density of blue cells, indicating these attributes are frequently and significantly addressed across a large number of publications. "Transparency" has a particularly consistent moderate-to-high focus across almost all papers.

* **Privacy, Security, Accountability, and Reliability are also prominent:** These attributes also show a strong presence, with several papers dedicating high focus (dark blue cells).

* **Less Consistently Addressed Attributes:** Attributes like "quality," "safety," "integrity," "robustness," "availability," "usability," "authenticity," "controllability," and "resilience" are addressed by specific papers with high intensity, but not as broadly across the entire set of publications as "transparency" or "accuracy." Many cells for these attributes are white or very light blue, indicating less or no focus in many papers.

* **No Single Publication Covers All Attributes Extensively:** While some publications address multiple attributes with high intensity (e.g., Toney et al. 2024, Loi & Spielkamp 2021), no single paper shows dark blue cells across all 15 attributes. This suggests specialization or a focused scope in individual research efforts.

* **Recent Publications (2024-2025) Show Diverse Focus:** The most recent publications (e.g., Shailya et al. 2025, Toney et al. 2024, Casper et al. 2024) address a wide range of attributes, including less broadly covered ones such as robustness, safety, controllability, and resilience, as well as accountability.

* **Older Publications (2019-2020) also show diversity:** Mohseni 2019, Engelmann et al. 2020, and Lakkaraju & Bastani 2020 also show high focus on a diverse set of attributes, indicating that these concerns are not entirely new.

### Interpretation

This heatmap provides a valuable overview of the research landscape concerning AI/ML system attributes. The data suggests that:

1. **Core Concerns:** "Transparency" and "accuracy" appear to be foundational or consistently critical concerns in AI/ML research, as almost every publication touches upon them to some degree. This could be due to their direct impact on system performance, trustworthiness, and interpretability.

2. **Emerging or Specialized Concerns:** Attributes like "safety," "integrity," "robustness," "availability," "usability," "authenticity," "controllability," and "resilience" are addressed more selectively. This might indicate that these are either more niche areas of research, or they are gaining prominence more recently, or they are highly context-dependent and thus not universally applicable to all AI/ML systems. The presence of dark blue cells for these attributes in various papers, especially recent ones, suggests a growing recognition of their importance.

3. **Interdisciplinary Nature:** The diverse set of attributes reflects the multifaceted challenges and considerations in developing and deploying AI/ML systems. It highlights that a holistic approach requires addressing not just technical performance (accuracy, robustness) but also ethical, social, and human-centric aspects (privacy, accountability, safety, usability, transparency).

4. **Potential Research Gaps:** The numerous white cells, particularly for attributes beyond transparency and accuracy, point to potential areas where more research is needed or where existing research is not yet widely integrated. For instance, while "resilience" is highly addressed by Casper et al. 2024 and Mohseni 2019, many other papers do not seem to focus on it, suggesting a potential gap in comprehensive coverage across the field.

5. **Evolution of Focus:** The heatmap doesn't explicitly show trends over time for *all* attributes. Still, high values for diverse attributes appear in both older (e.g., Mohseni 2019) and newer (e.g., Shailya et al. 2025) papers, which suggests that some concerns are perennial while the specific emphasis and combinations of attributes addressed by researchers evolve. The 2025 papers indicate continued attention to a broad spectrum of attributes.

In essence, the heatmap demonstrates that while certain attributes like transparency and accuracy are almost universally considered, the broader landscape of AI/ML system attributes is addressed with varying degrees of intensity and specialization across different research publications. This highlights both the progress and the ongoing challenges in building comprehensive and responsible AI systems.