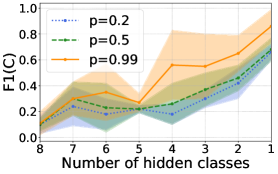

## Line Chart: F1(C) Score vs. Number of Hidden Classes

### Overview

The image shows a line chart with shaded bands, plotting the F1(C) score against the number of hidden classes for three values of a parameter `p` (0.2, 0.5, and 0.99). The x-axis runs in descending order, so moving left to right the number of hidden classes decreases. Over that range the F1(C) score rises for all three series, apart from a shared dip at 5 hidden classes, and the highest `p` value (0.99) reaches the highest final score (about 0.80).

### Components/Axes

- **Chart Type:** Line chart with shaded confidence intervals.

- **X-Axis:**

  - **Label:** "Number of hidden classes"

  - **Scale:** Descending linear scale from 8 to 1. The markers are at integer intervals: 8, 7, 6, 5, 4, 3, 2, 1.

- **Y-Axis:**

  - **Label:** "F1(C)"

  - **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

- **Legend:**

  - **Position:** Top-left corner of the plot area.

  - **Entries:**

    1. Blue dotted line: `p=0.2`

    2. Green dashed line: `p=0.5`

    3. Orange solid line: `p=0.99`

- **Data Series & Shading:** Each line is accompanied by a semi-transparent shaded area of the same color, representing the confidence interval or variance around the mean trend line.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **Series: p=0.2 (Blue, Dotted Line)**

   - **Trend:** Starts low, shows a general upward trend with a notable dip at 5 hidden classes, and ends at its highest point.

   - **Data Points (Hidden Classes, F1(C)):**

     - (8, ~0.10)

     - (7, ~0.08) - Slight dip.

     - (6, ~0.30) - Sharp increase.

     - (5, ~0.20) - Significant dip.

     - (4, ~0.30) - Recovers.

     - (3, ~0.40)

     - (2, ~0.50)

     - (1, ~0.60)

   - **Confidence Interval:** Widest between 5 and 3 hidden classes, suggesting higher variance in results in that region.

2. **Series: p=0.5 (Green, Dashed Line)**

   - **Trend:** Follows a similar pattern to p=0.2. At the resolution of the values read off below, the two series coincide everywhere except at 7 hidden classes, where p=0.5 is higher (~0.20 vs. ~0.08). Also dips at 5 classes.

   - **Data Points (Hidden Classes, F1(C)):**

     - (8, ~0.10)

     - (7, ~0.20)

     - (6, ~0.30)

     - (5, ~0.20) - Dip.

     - (4, ~0.30)

     - (3, ~0.40)

     - (2, ~0.50)

     - (1, ~0.60)

   - **Confidence Interval:** Narrower than the p=0.2 series, indicating more consistent results.

3. **Series: p=0.99 (Orange, Solid Line)**

   - **Trend:** Shows the strongest upward trajectory. While it shares the dip at 5 classes, its recovery and final ascent are the most pronounced.

   - **Data Points (Hidden Classes, F1(C)):**

     - (8, ~0.10)

     - (7, ~0.20)

     - (6, ~0.30)

     - (5, ~0.20) - Dip.

     - (4, ~0.40) - Stronger recovery.

     - (3, ~0.50)

     - (2, ~0.60)

     - (1, ~0.80) - Highest point on the chart.

   - **Confidence Interval:** The widest of all three series, particularly from 4 to 1 hidden classes. This indicates that while the mean performance is highest, the results are also the most variable.

### Key Observations

1. **Universal Dip at 5 Hidden Classes:** All three series show a distinct performance drop when the number of hidden classes is 5. This is a consistent anomaly across different `p` values.

2. **Performance Hierarchy:** Across the chart the F1(C) score follows the order `p=0.99` ≥ `p=0.5` ≥ `p=0.2`. At 8, 6, and 5 hidden classes the three series are approximately equal, and at 7 only `p=0.2` falls behind (~0.08 vs. ~0.20). From 4 hidden classes downward `p=0.99` pulls clear of the other two, leading by roughly 0.1 at 4, 3, and 2 hidden classes and by roughly 0.2 at 1.

3. **Inverse Relationship:** The F1(C) score rises as the number of hidden classes falls, from ~0.10 at 8 hidden classes to between ~0.60 and ~0.80 at 1. The relationship is not strictly monotonic because of the dip at 5 (and the slight dip at 7 for `p=0.2`), but fewer hidden classes are consistently associated with higher scores.

4. **Variance Correlation:** The shaded band appears to widen as the F1(C) score increases, most visibly for the `p=0.99` series, so the highest mean scores are also the least certain.
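The first two observations can be checked against the approximate values listed under Detailed Analysis. The snippet below is a minimal sketch: the numbers are visual estimates read off the chart, not source data, and the names `HIDDEN`, `F1_BY_P`, `local_dips`, and `gap` are illustrative choices.

```python
# Approximate mean F1(C) values read off the chart, ordered by the
# number of hidden classes from 8 down to 1. These are estimates only.
HIDDEN = [8, 7, 6, 5, 4, 3, 2, 1]
F1_BY_P = {
    0.2:  [0.10, 0.08, 0.30, 0.20, 0.30, 0.40, 0.50, 0.60],
    0.5:  [0.10, 0.20, 0.30, 0.20, 0.30, 0.40, 0.50, 0.60],
    0.99: [0.10, 0.20, 0.30, 0.20, 0.40, 0.50, 0.60, 0.80],
}


def local_dips(values):
    """Return indices whose value is strictly below both neighbours."""
    return [
        i
        for i in range(1, len(values) - 1)
        if values[i] < values[i - 1] and values[i] < values[i + 1]
    ]


def gap(p_high, p_low):
    """Per-point difference between two series, rounded to 2 decimals."""
    return [round(a - b, 2) for a, b in zip(F1_BY_P[p_high], F1_BY_P[p_low])]


# Hidden-class counts at which each series has a local dip.
dips = {p: [HIDDEN[i] for i in local_dips(v)] for p, v in F1_BY_P.items()}

# Dips shared by every series (observation 1).
shared_dips = set.intersection(*(set(d) for d in dips.values()))

# Lead of p=0.99 over p=0.5 at each hidden-class count (observation 2).
lead_099_over_05 = gap(0.99, 0.5)

print("dips per series:", dips)
print("shared dips:", shared_dips)
print("lead of p=0.99 over p=0.5:", dict(zip(HIDDEN, lead_099_over_05)))
```

Because the inputs are rounded readings, differences smaller than about 0.05 should not be treated as meaningful.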

### Interpretation

This chart likely evaluates the performance of a machine learning model (possibly in unsupervised or semi-supervised learning, given the "hidden classes" terminology) under different values of a parameter `p`, possibly a constraint or regularization setting. The F1 score is the harmonic mean of precision and recall for classification; the chart does not say what the "(C)" qualifier denotes.
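For reference, the standard F1 definition can be sketched as follows. This assumes F1(C) is an ordinary F1 score computed over some set C, which the chart does not confirm; the function name `f1_score` and the zero-division convention are illustrative choices.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall.

    Returns 0.0 when both inputs are zero, a common convention that
    avoids dividing by zero.
    """
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# The harmonic mean is pulled toward the smaller of the two inputs.
balanced = f1_score(0.5, 0.5)
lopsided = f1_score(0.9, 0.6)
print(balanced, lopsided)
```

Because the harmonic mean penalizes imbalance, a score near 0.10 (as at 8 hidden classes) means that at least one of precision or recall is very low.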

The data suggest that **the F1(C) score improves as the number of hidden classes falls**. One possible reading is that fewer hidden classes make the problem easier for the model, although the chart alone does not establish the cause. A higher `p` is associated with higher scores, most clearly at 4 or fewer hidden classes, and `p=0.99` yields the best results at the cost of wider shaded bands. The dip at 5 hidden classes appears in all three series, which points to a specific configuration or data characteristic rather than noise and makes it a natural target for further investigation. The widening bands at higher scores suggest that the best-performing settings may also be the most sensitive to initial conditions or data sampling, a possible trade-off between peak performance and robustness.