TECHNICAL ASSET FINGERPRINT

b28bf51280dec7e8a58013b2

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Chart: Qwen3-8B and Qwen3-32B Performance

### Overview

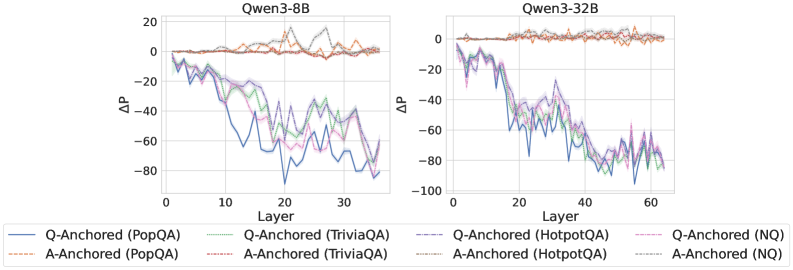

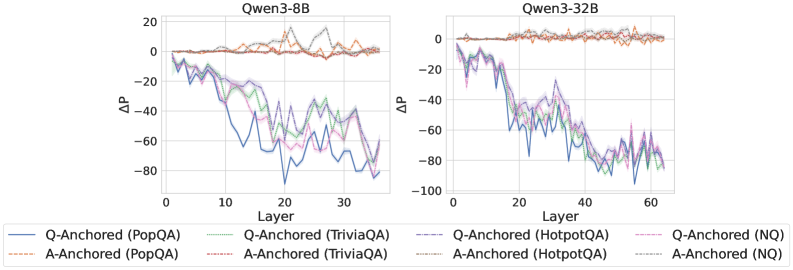

The image presents two line charts comparing the performance of Qwen3-8B and Qwen3-32B models across different layers, measured by ΔP (Delta P). Each chart displays multiple data series, representing different question-answering datasets (PopQA, TriviaQA, HotpotQA, and NQ) anchored by either the question (Q-Anchored) or the answer (A-Anchored).

### Components/Axes

* **Titles:**

* Left Chart: Qwen3-8B

* Right Chart: Qwen3-32B

* **Y-Axis:**

* Label: ΔP

* Scale: approximately -100 to 20, with tick labels every 20 units

* **X-Axis:**

* Label: Layer

* Left Chart Scale: 0 to 30, with increments of 10 (0, 10, 20, 30)

* Right Chart Scale: 0 to 60, with increments of 20 (0, 20, 40, 60)

* **Legend:** Located at the bottom of the image, describing the data series:

* Blue solid line: Q-Anchored (PopQA)

* Brown dashed line: A-Anchored (PopQA)

* Green dotted line: Q-Anchored (TriviaQA)

* Pink dashed-dotted line: A-Anchored (TriviaQA)

* Dark Blue dashed line: Q-Anchored (HotpotQA)

* Orange dotted line: A-Anchored (HotpotQA)

* Purple dashed-dotted line: Q-Anchored (NQ)

* Gray dotted line: A-Anchored (NQ)

### Detailed Analysis

#### Qwen3-8B (Left Chart)

* **Q-Anchored (PopQA) (Blue solid line):** Starts at approximately -5 and decreases to around -65 at layer 20, then fluctuates between -40 and -60 until layer 30.

* **A-Anchored (PopQA) (Brown dashed line):** Remains relatively stable around 0 to 5 across all layers.

* **Q-Anchored (TriviaQA) (Green dotted line):** Starts around -5 and decreases to approximately -40 at layer 15, then fluctuates between -30 and -40 until layer 30.

* **A-Anchored (TriviaQA) (Pink dashed-dotted line):** Starts around -5 and decreases to approximately -40 at layer 15, then fluctuates between -30 and -40 until layer 30.

* **Q-Anchored (HotpotQA) (Dark Blue dashed line):** Starts around -5 and decreases to approximately -50 at layer 15, then fluctuates between -40 and -50 until layer 30.

* **A-Anchored (HotpotQA) (Orange dotted line):** Remains relatively stable around 0 to 5 across all layers.

* **Q-Anchored (NQ) (Purple dashed-dotted line):** Starts around -5 and decreases to approximately -50 at layer 15, then fluctuates between -40 and -50 until layer 30.

* **A-Anchored (NQ) (Gray dotted line):** Remains relatively stable around 0 to 5 across all layers.

#### Qwen3-32B (Right Chart)

* **Q-Anchored (PopQA) (Blue solid line):** Starts at approximately -5 and decreases to around -85 at layer 40, then fluctuates between -70 and -80 until layer 60.

* **A-Anchored (PopQA) (Brown dashed line):** Remains relatively stable around 0 to 5 across all layers.

* **Q-Anchored (TriviaQA) (Green dotted line):** Starts around -5 and decreases to approximately -70 at layer 40, then fluctuates between -60 and -70 until layer 60.

* **A-Anchored (TriviaQA) (Pink dashed-dotted line):** Starts around -5 and decreases to approximately -70 at layer 40, then fluctuates between -60 and -70 until layer 60.

* **Q-Anchored (HotpotQA) (Dark Blue dashed line):** Starts around -5 and decreases to approximately -80 at layer 40, then fluctuates between -70 and -80 until layer 60.

* **A-Anchored (HotpotQA) (Orange dotted line):** Remains relatively stable around 0 to 5 across all layers.

* **Q-Anchored (NQ) (Purple dashed-dotted line):** Starts around -5 and decreases to approximately -80 at layer 40, then fluctuates between -70 and -80 until layer 60.

* **A-Anchored (NQ) (Gray dotted line):** Remains relatively stable around 0 to 5 across all layers.

### Key Observations

* **Performance Difference:** The Qwen3-32B model generally shows a greater decrease in ΔP compared to Qwen3-8B for Q-Anchored datasets.

* **Anchoring Impact:** A-Anchored datasets consistently maintain a ΔP close to 0 across all layers for both models.

* **Dataset Similarity:** The Q-Anchored datasets (TriviaQA, HotpotQA, NQ) exhibit similar trends within each model.

* **Layer Impact:** Most of the decrease in ΔP for Q-Anchored datasets occurs in the early-to-middle layers (up to about layer 20 for Qwen3-8B and layer 40 for Qwen3-32B), after which the curves fluctuate rather than continue to fall.
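The "Layer Impact" observation can be checked numerically once the underlying series is available. The following is a minimal sketch, assuming each curve is a plain list of ΔP values indexed by layer; the function name `summarize_delta_p` and the example values are hypothetical and are not read from the figure.

```python
from typing import Sequence


def summarize_delta_p(delta_p: Sequence[float]) -> dict:
    """Summarize a layer-wise ΔP series, where the list index is the layer number."""
    if len(delta_p) < 2:
        raise ValueError("need at least two layers")
    # Layer at which ΔP reaches its lowest value.
    min_layer = min(range(len(delta_p)), key=lambda i: delta_p[i])
    # Change between consecutive layers; the most negative entry
    # is the steepest single-layer drop.
    steps = [delta_p[i + 1] - delta_p[i] for i in range(len(delta_p) - 1)]
    steepest = min(range(len(steps)), key=lambda i: steps[i])
    return {
        "min_layer": min_layer,
        "min_value": delta_p[min_layer],
        "steepest_drop_from_layer": steepest,
        "steepest_drop": steps[steepest],
    }


# Hypothetical values for illustration only; not taken from the charts.
example = [-5.0, -10.0, -30.0, -45.0, -40.0, -50.0]
summary = summarize_delta_p(example)
print(summary)
```

Running the same summary on each Q-Anchored and A-Anchored series would make it possible to compare the layer of the minimum across datasets and models instead of estimating it by eye.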

### Interpretation

The data suggests that anchoring by the question (Q-Anchored) leads to a performance decrease (as measured by ΔP) as the model processes deeper layers, especially for the larger Qwen3-32B model. This could indicate that the model's ability to utilize question-related information diminishes with increasing layer depth. Conversely, anchoring by the answer (A-Anchored) results in stable performance, suggesting that the model effectively retains answer-related information throughout its layers. The similarity in trends among different Q-Anchored datasets implies a consistent pattern in how the model processes question-based information across various question-answering tasks. The more significant performance drop in Qwen3-32B compared to Qwen3-8B for Q-Anchored datasets might indicate that the larger model is more sensitive to the way questions are processed across layers.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: ΔP vs. Layer for Qwen Models

### Overview

The image presents two line charts comparing the change in performance (ΔP) across different layers of two Qwen language models: Qwen3-8B and Qwen3-32B. The charts display performance differences for various question-answering datasets, distinguished by anchoring methods (Q-Anchored and A-Anchored).

### Components/Axes

* **X-axis:** Layer (ranging from 0 to approximately 30 for Qwen3-8B and 0 to 60 for Qwen3-32B).

* **Y-axis:** ΔP (ranging from approximately -100 to 20).

* **Models:** Qwen3-8B (left chart), Qwen3-32B (right chart).

* **Datasets/Anchoring:**

* PopQA (Q-Anchored and A-Anchored)

* TriviaQA (Q-Anchored and A-Anchored)

* HotpotQA (Q-Anchored and A-Anchored)

* NQ (Q-Anchored and A-Anchored)

* **Legend:** Located at the bottom of the image, associating colors with specific datasets and anchoring methods.

### Detailed Analysis

**Qwen3-8B (Left Chart)**

* **Q-Anchored (PopQA):** (Blue line) Starts at approximately 0 ΔP at Layer 0, declines steadily to approximately -60 ΔP at Layer 20, then fluctuates between -60 and -80 ΔP until Layer 30.

* **A-Anchored (PopQA):** (Brown dashed line) Remains relatively stable around 0-10 ΔP until Layer 15, then declines to approximately -20 ΔP at Layer 30.

* **Q-Anchored (TriviaQA):** (Green line) Starts at approximately 0 ΔP, declines to approximately -40 ΔP at Layer 10, then fluctuates between -40 and -70 ΔP until Layer 30.

* **A-Anchored (TriviaQA):** (Purple dashed line) Starts at approximately 0 ΔP, declines to approximately -30 ΔP at Layer 10, then fluctuates between -30 and -60 ΔP until Layer 30.

* **Q-Anchored (HotpotQA):** (Orange line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -50 ΔP until Layer 30.

* **A-Anchored (HotpotQA):** (Red dashed line) Starts at approximately 0 ΔP, declines to approximately -10 ΔP at Layer 10, then fluctuates between -10 and -40 ΔP until Layer 30.

* **Q-Anchored (NQ):** (Light Blue line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -50 ΔP until Layer 30.

* **A-Anchored (NQ):** (Gray dashed line) Starts at approximately 0 ΔP, declines to approximately -10 ΔP at Layer 10, then fluctuates between -10 and -40 ΔP until Layer 30.

**Qwen3-32B (Right Chart)**

* **Q-Anchored (PopQA):** (Blue line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -60 ΔP until Layer 60.

* **A-Anchored (PopQA):** (Brown dashed line) Remains relatively stable around 0-10 ΔP until Layer 20, then declines to approximately -20 ΔP at Layer 60.

* **Q-Anchored (TriviaQA):** (Green line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -80 ΔP until Layer 60.

* **A-Anchored (TriviaQA):** (Purple dashed line) Starts at approximately 0 ΔP, declines to approximately -10 ΔP at Layer 10, then fluctuates between -10 and -60 ΔP until Layer 60.

* **Q-Anchored (HotpotQA):** (Orange line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -80 ΔP until Layer 60.

* **A-Anchored (HotpotQA):** (Red dashed line) Starts at approximately 0 ΔP, declines to approximately -10 ΔP at Layer 10, then fluctuates between -10 and -50 ΔP until Layer 60.

* **Q-Anchored (NQ):** (Light Blue line) Starts at approximately 0 ΔP, declines to approximately -20 ΔP at Layer 10, then fluctuates between -20 and -80 ΔP until Layer 60.

* **A-Anchored (NQ):** (Gray dashed line) Starts at approximately 0 ΔP, declines to approximately -10 ΔP at Layer 10, then fluctuates between -10 and -50 ΔP until Layer 60.

### Key Observations

* All datasets exhibit a negative trend in ΔP as the layer number increases, indicating a performance decrease with depth in both models.

* The Q-Anchored lines generally show a more significant decline in ΔP compared to the A-Anchored lines.

* The Qwen3-32B model shows a more pronounced decline in ΔP across all datasets compared to the Qwen3-8B model.

* The PopQA and NQ datasets consistently show the most significant declines in ΔP.

### Interpretation

The charts demonstrate that as the model depth (layer number) increases, performance on question-answering tasks tends to decrease. This suggests that deeper layers may not always contribute positively to performance and could potentially introduce noise or hinder the model's ability to generalize. The difference between Q-Anchored and A-Anchored lines suggests that the anchoring method impacts performance, with Q-Anchored generally performing worse. The larger decline in ΔP for Qwen3-32B compared to Qwen3-8B could indicate that the larger model is more susceptible to performance degradation with depth, or that the optimal depth for the larger model is different. The consistent performance decline on PopQA and NQ datasets suggests these datasets may be more sensitive to the effects of model depth. These findings could inform strategies for model pruning, layer selection, or architectural modifications to improve performance and efficiency.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Qwen3-8B and Qwen3-32B Layer-wise ΔP Analysis

### Overview

The image displays two side-by-side line charts comparing the performance metric "ΔP" across neural network layers for two different model sizes: Qwen3-8B (left) and Qwen3-32B (right). Each chart plots multiple data series representing two anchoring methods ("Q-Anchored" and "A-Anchored") evaluated on four distinct question-answering datasets.

### Components/Axes

* **Titles:**

* Left Chart: `Qwen3-8B`

* Right Chart: `Qwen3-32B`

* **Axes:**

* **X-axis (both charts):** Label is `Layer`. The scale represents the layer number within the model.

* Qwen3-8B chart: Ticks at 0, 10, 20, 30. The data spans approximately layers 0 to 35.

* Qwen3-32B chart: Ticks at 0, 20, 40, 60. The data spans approximately layers 0 to 65.

* **Y-axis (both charts):** Label is `ΔP`. This likely represents a change in probability or performance metric.

* Qwen3-8B chart: Ticks at -80, -60, -40, -20, 0, 20.

* Qwen3-32B chart: Ticks at -100, -80, -60, -40, -20, 0.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, differentiating lines by color and style (solid vs. dashed).

* **Q-Anchored Series (Solid Lines):**

* `Q-Anchored (PopQA)`: Solid blue line.

* `Q-Anchored (TriviaQA)`: Solid green line.

* `Q-Anchored (HotpotQA)`: Solid purple line.

* `Q-Anchored (NQ)`: Solid pink/magenta line.

* **A-Anchored Series (Dashed Lines):**

* `A-Anchored (PopQA)`: Dashed orange line.

* `A-Anchored (TriviaQA)`: Dashed red line.

* `A-Anchored (HotpotQA)`: Dashed brown line.

* `A-Anchored (NQ)`: Dashed gray line.

### Detailed Analysis

**Qwen3-8B Chart (Left):**

* **Q-Anchored Lines (Solid):** All four lines (blue, green, purple, pink) exhibit a strong, noisy downward trend. They start near ΔP = 0 at layer 0 and decline to values between approximately -60 and -80 by layer 35. The decline is not monotonic; there are significant local peaks and troughs. The blue line (PopQA) often reaches the lowest points.

* **A-Anchored Lines (Dashed):** All four lines (orange, red, brown, gray) remain relatively stable and close to ΔP = 0 throughout all layers. They show minor fluctuations but no significant upward or downward trend, staying within a narrow band roughly between -5 and +5.

**Qwen3-32B Chart (Right):**

* **Q-Anchored Lines (Solid):** Similar to the 8B model, these lines show a pronounced downward trend. The decline appears steeper and reaches lower absolute values, dropping to between approximately -80 and -100 by layer 65. The noise/fluctuation is also very high. The separation between the different dataset lines is less distinct than in the 8B chart.

* **A-Anchored Lines (Dashed):** Consistent with the 8B model, these lines remain stable near ΔP = 0 across all layers, with minor fluctuations.

### Key Observations

1. **Anchoring Method Dichotomy:** There is a stark and consistent contrast between the two anchoring methods across both model sizes. Q-Anchored performance (ΔP) degrades dramatically with increasing layer depth, while A-Anchored performance remains stable.

2. **Model Size Effect:** The larger model (Qwen3-32B) shows a more severe degradation for Q-Anchored methods, reaching lower ΔP values (-80 to -100) compared to the smaller model (-60 to -80). The layer range is also extended.

3. **Dataset Consistency:** Within each anchoring method, the trend is highly consistent across all four datasets (PopQA, TriviaQA, HotpotQA, NQ). The lines for Q-Anchored datasets are tightly clustered in their downward trajectory, as are the lines for A-Anchored datasets in their stability.

4. **High Variance:** The Q-Anchored lines are characterized by high-frequency noise or variance from layer to layer, superimposed on the clear downward trend.
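The distinction drawn above between a downward trend and layer-to-layer noise can be made quantitative. The sketch below is one possible approach, not the method used to produce the figure: it fits an ordinary least-squares line to a ΔP series and reports the slope (trend) and the standard deviation of the residuals (noise). The function name `trend_and_noise` and both example series are hypothetical.

```python
from statistics import mean, pstdev
from typing import Sequence, Tuple


def trend_and_noise(delta_p: Sequence[float]) -> Tuple[float, float]:
    """Return (slope per layer, residual std) for a layer-indexed ΔP series."""
    n = len(delta_p)
    if n < 2:
        raise ValueError("need at least two layers")
    layers = range(n)
    x_bar = mean(layers)
    y_bar = mean(delta_p)
    # Ordinary least-squares fit of ΔP against layer index.
    sxx = sum((x - x_bar) ** 2 for x in layers)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(layers, delta_p))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Whatever the straight line does not explain is treated as noise.
    residuals = [y - (intercept + slope * x) for x, y in zip(layers, delta_p)]
    return slope, pstdev(residuals)


# Hypothetical series for illustration only; not taken from the charts.
clean_slope, clean_noise = trend_and_noise([0.0, -2.0, -4.0, -6.0])
noisy_slope, noisy_noise = trend_and_noise([0.0, -8.0, -3.0, -12.0, -6.0])
print(clean_slope, clean_noise, noisy_slope, noisy_noise)
```

A strongly negative slope with a large residual spread would match the description of the Q-Anchored lines, while a slope and spread both near zero would match the A-Anchored lines. A straight line is a crude trend model for curves that drop and then plateau, so the residual spread should be read as a rough indicator only.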

### Interpretation

The data suggests a fundamental difference in how "Q-Anchored" and "A-Anchored" processing pathways utilize information across the layers of these language models.

* **Q-Anchored Pathway:** The consistent, layer-wise decline in ΔP indicates that this method's effectiveness or signal strength diminishes as information is processed deeper into the network. This could imply that the "Q" (likely Question) representation becomes less informative or is progressively overwritten by other information as it passes through the layers. The high variance suggests instability in this process.

* **A-Anchored Pathway:** The stability of ΔP near zero across all layers suggests this method maintains a consistent level of performance or signal integrity throughout the network depth. The "A" (likely Answer or context) representation appears to be robustly preserved or utilized in a layer-invariant manner.

* **Model Scaling Impact:** The more severe decline in the larger model (32B) for the Q-Anchored method is intriguing. It may indicate that the increased depth and capacity of the larger model exacerbates the degradation of the question-centric signal, or that its processing dynamics are fundamentally different.

* **Overall Implication:** For tasks measured by ΔP, the A-Anchored approach appears far more robust to network depth than the Q-Anchored approach. This finding could be critical for understanding model internals and designing more effective prompting or fine-tuning strategies that leverage stable internal representations. The consistency across diverse QA datasets strengthens the generalizability of this observation.


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: ΔP vs Layer for Qwen3-8B and Qwen3-32B Models

### Overview

The image contains two line graphs comparing the performance metric ΔP (delta-P) across transformer layers for two sizes of the Qwen3 architecture: 8B (left) and 32B (right). Each graph shows eight data series: two anchoring strategies (Q-Anchored and A-Anchored) for each of four datasets (PopQA, TriviaQA, HotpotQA, NQ). The graphs reveal layer-wise performance variations, with the Q-Anchored series generally exhibiting more pronounced fluctuations than their A-Anchored counterparts.

### Components/Axes

- **X-axis (Layer)**:

- Qwen3-8B: 0–30 (discrete increments)

- Qwen3-32B: 0–60 (discrete increments)

- **Y-axis (ΔP)**:

- Range: -100 to +20 (linear scale)

- Units: ΔP (delta-P, unspecified metric)

- **Legends**:

- **Q-Anchored**: Solid lines (blue, green, purple, pink)

- **A-Anchored**: Dashed lines (orange, brown, gray, black)

- **Datasets**:

- PopQA: Blue (solid) / Orange (dashed)

- TriviaQA: Green (solid) / Brown (dashed)

- HotpotQA: Purple (solid) / Gray (dashed)

- NQ: Pink (solid) / Black (dashed)

### Detailed Analysis

#### Qwen3-8B Graph

- **Q-Anchored (PopQA)**: Starts near 0, drops sharply to ~-80 at Layer 10, fluctuates between -60 and -20 until Layer 30.

- **A-Anchored (PopQA)**: Remains near 0 with minor oscillations (±5) throughout.

- **Q-Anchored (TriviaQA)**: Begins at 0, dips to ~-60 at Layer 15, stabilizes between -40 and -20.

- **A-Anchored (TriviaQA)**: Stays near 0 with slight dips to -5.

- **Q-Anchored (HotpotQA)**: Sharp drop to ~-70 at Layer 5, recovers to -30 by Layer 30.

- **A-Anchored (HotpotQA)**: Mild fluctuations between -5 and +5.

- **Q-Anchored (NQ)**: Oscillates between -50 and -10, peaking at -30 at Layer 20.

- **A-Anchored (NQ)**: Stable near 0 with minor ±3 variations.

#### Qwen3-32B Graph

- **Q-Anchored (PopQA)**: Starts at 0, plunges to ~-90 at Layer 10, recovers to -40 by Layer 60.

- **A-Anchored (PopQA)**: Remains near 0 with ±3 fluctuations.

- **Q-Anchored (TriviaQA)**: Drops to ~-70 at Layer 20, stabilizes between -50 and -30.

- **A-Anchored (TriviaQA)**: Stays near 0 with ±2 variations.

- **Q-Anchored (HotpotQA)**: Sharp decline to ~-85 at Layer 15, recovers to -50 by Layer 60.

- **A-Anchored (HotpotQA)**: Stable near 0 with ±1 fluctuations.

- **Q-Anchored (NQ)**: Oscillates between -80 and -20, peaking at -60 at Layer 40.

- **A-Anchored (NQ)**: Stays near 0 with ±2 variations.

### Key Observations

1. **Model Size Impact**: Qwen3-32B shows more extreme ΔP fluctuations than Qwen3-8B, particularly for Q-Anchored models.

2. **Anchoring Strategy**: A-Anchored models maintain near-zero ΔP across all layers and datasets, while Q-Anchored models exhibit significant layer-dependent variations.

3. **Dataset Sensitivity**:

- HotpotQA causes the most drastic ΔP drops in Q-Anchored models.

- NQ shows the largest amplitude fluctuations in Q-Anchored models.

4. **Layer Dynamics**:

- Early layers (0–10) show the most dramatic ΔP changes.

- Later layers (roughly 20–30 in Qwen3-8B and 20–60 in Qwen3-32B) exhibit stabilization or partial recovery.

### Interpretation

The data suggests that anchoring strategy (Q vs A) critically influences model stability, with A-Anchored models demonstrating consistent performance across layers. Q-Anchored models, while potentially more expressive, suffer from layer-specific instability that worsens with model size. The dataset-specific patterns indicate varying sensitivity to anchoring methods, with complex reasoning tasks (HotpotQA, NQ) amplifying instability in Q-Anchored configurations. These findings highlight trade-offs between model expressiveness and stability in transformer architectures, with practical implications for model design and deployment.
