## Flowchart: LLM Question-Answering Process

### Overview

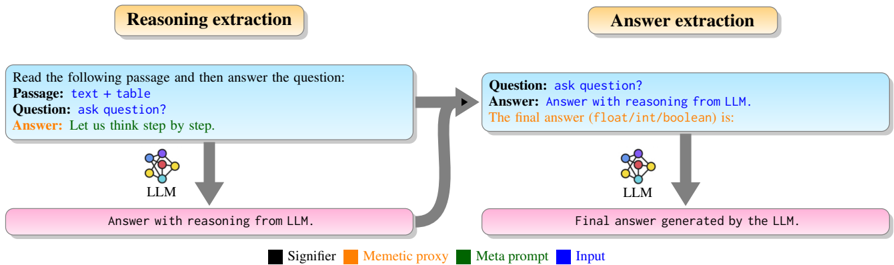

The image depicts a two-stage flowchart illustrating how a Large Language Model (LLM) processes a question and passage to generate an answer. The process involves "Reasoning extraction" followed by "Answer extraction," with explicit labels for components and a color-coded legend.

---

### Components/Axes

1. **Legend** (bottom center):

   - **Black**: Signifier

   - **Orange**: Memetic proxy

   - **Green**: Meta prompt

   - **Blue**: Input

2. **Reasoning Extraction Block** (top-left):

   - **Input**: "Read the following passage and then answer the question: Passage: text + table. Question: ask question? Answer: Let us think step by step."

   - **Output**: "Answer with reasoning from LLM."

3. **Answer Extraction Block** (top-right):

   - **Input**: "Question: ask question? Answer: Answer with reasoning from LLM."

   - **Output**: "Final answer generated by the LLM."

4. **Arrows**:

   - Gray arrows connect the two blocks, indicating sequential processing.

   - A bidirectional arrow links the reasoning output to the answer input.

5. **LLM Symbol**:

   - A network diagram (blue, red, yellow nodes) appears below both blocks, representing the LLM.
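
The two blocks described above can be sketched as a pair of prompt templates chained through a single model call each. This is a minimal illustration, not the diagram authors' implementation: the template wording is copied from the block labels, while the function names and the `llm` callable (any function mapping a prompt string to a completion string) are hypothetical.

```python
from typing import Callable

# Stage 1 template: wording taken from the "Reasoning extraction" block label.
REASONING_TEMPLATE = (
    "Read the following passage and then answer the question:\n"
    "Passage: {passage}\n"
    "Question: {question}\n"
    "Answer: Let us think step by step."
)

# Stage 2 template: wording taken from the "Answer extraction" block label.
ANSWER_TEMPLATE = (
    "Question: {question}\n"
    "Answer: {reasoning}"
)


def build_reasoning_prompt(passage: str, question: str) -> str:
    """Fill the stage 1 prompt with the passage (text + table) and question."""
    return REASONING_TEMPLATE.format(passage=passage, question=question)


def build_answer_prompt(question: str, reasoning: str) -> str:
    """Fill the stage 2 prompt with the question and the stage 1 output."""
    return ANSWER_TEMPLATE.format(question=question, reasoning=reasoning)


def two_stage_answer(llm: Callable[[str], str], passage: str, question: str) -> str:
    """Run both stages in order; `llm` is a hypothetical prompt-to-text callable."""
    reasoning = llm(build_reasoning_prompt(passage, question))
    return llm(build_answer_prompt(question, reasoning))
```

Passing the model as a plain callable keeps the sketch independent of any particular LLM client, so a stub function can stand in for the model when checking the prompt flow.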

---

### Detailed Analysis

- **Reasoning Extraction**:

  - Inputs include a passage (text + table) and a question.

  - The prompt ends with the trigger phrase "Let us think step by step," which elicits intermediate reasoning from the LLM.

  - Color coding: Blue (Input) dominates this block.

- **Answer Extraction**:

  - Takes the reasoning output as input.

  - Finalizes the answer in a structured format (e.g., float/int/boolean).

  - Color coding: Blue (Input) and black (Signifier) are prominent.

- **Legend Placement**:

  - Positioned at the bottom center, spanning the width of the flowchart.

  - Colors correspond to labels but are not explicitly mapped to visual elements in the diagram.
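
The diagram does not show how the final answer is converted into a float, int, or boolean. One possible post-processing step is sketched below; the function name `parse_final_answer` and its parsing rules (yes/no and true/false map to booleans, the first number found becomes an int or float) are assumptions made for illustration.

```python
import re
from typing import Union


def parse_final_answer(text: str) -> Union[bool, int, float, str]:
    """Coerce a free-text final answer into bool, int, or float when possible.

    Hypothetical helper: the rules below are illustrative assumptions,
    not something specified by the flowchart.
    """
    # Drop surrounding whitespace and a trailing sentence period.
    cleaned = text.strip().rstrip(".").strip()
    lowered = cleaned.lower()

    # Boolean answers.
    if lowered in {"true", "yes"}:
        return True
    if lowered in {"false", "no"}:
        return False

    # First number in the text, allowing thousands separators and a decimal part.
    match = re.search(r"-?\d[\d,]*(?:\.\d+)?", cleaned)
    if match:
        number = match.group(0).replace(",", "")
        return float(number) if "." in number else int(number)

    # Nothing recognizable: return the cleaned text unchanged.
    return cleaned
```

Returning the cleaned string as a fallback keeps unparseable answers visible to the caller instead of silently dropping them.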

---

### Key Observations

1. **Sequential Workflow**: The process is sequential, with reasoning extraction preceding answer extraction.

2. **LLM Centrality**: The LLM is depicted as the core component in both stages.

3. **Legend Ambiguity**: While the legend defines four categories, only "Input" and "Signifier" are visually represented (blue and black). The roles of "Memetic proxy" (orange) and "Meta prompt" (green) are not visually evident.

4. **Textual Focus**: The flowchart emphasizes textual inputs/outputs over numerical or categorical data.

---

### Interpretation

This flowchart represents a conceptual pipeline for LLM-based question answering, emphasizing:

- **Modularity**: Separation of reasoning and answer generation stages.

- **LLM Dependency**: The model is responsible for both intermediate reasoning and final output.

- **Input-Output Clarity**: Explicit labeling of inputs (passage, question) and outputs (reasoning, final answer).

The absence of numerical data and of visual mappings for the legend suggests the diagram prioritizes process explanation over quantitative analysis. The bidirectional arrow between the blocks may indicate iterative refinement, though the diagram does not state this explicitly. Because the LLM produces both the intermediate reasoning and the final answer, the pipeline relies on a single model for both stages.