TECHNICAL ASSET FINGERPRINT

b302a4b7415bbcbe12788213

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## System Diagram: Megatron and vLLM Sidecar Architecture

### Overview

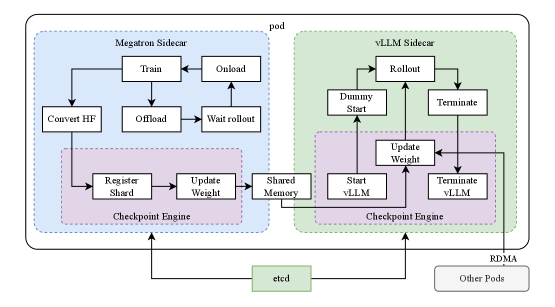

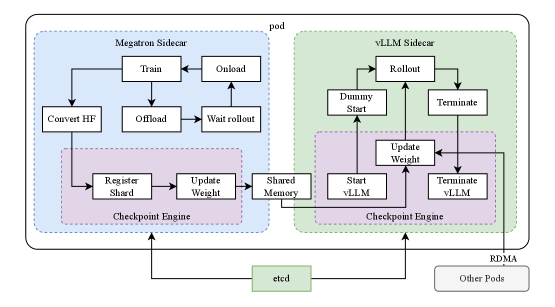

The image is a system diagram illustrating the architecture of a pod containing a Megatron Sidecar and a vLLM Sidecar, along with their interactions and dependencies. The diagram shows the flow of data and control between the components within the pod, as well as external communication with etcd and, via RDMA, with other pods.

### Components/Axes

* **Pod:** The outermost container, encompassing both the Megatron Sidecar and the vLLM Sidecar.

* **Megatron Sidecar:** A component within the pod, responsible for training and managing large language models. It is enclosed in a light blue dashed box and contains:
  * **Train:** A process.
  * **Onload:** A process.
  * **Convert HF:** A process.
  * **Offload:** A process.
  * **Wait rollout:** A process.
  * **Checkpoint Engine (Megatron):** Responsible for managing checkpoints. It is enclosed in a light purple dashed box and contains two processes:
    * **Register Shard**
    * **Update Weight**

* **Shared Memory:** A shared memory component that connects the Megatron and vLLM sidecars.

* **vLLM Sidecar:** A component within the pod, responsible for serving large language models. It is enclosed in a light green dashed box and contains:
  * **Rollout:** A process.
  * **Dummy Start:** A process.
  * **Terminate:** A process.
  * **Checkpoint Engine (vLLM):** Responsible for managing checkpoints. It is enclosed in a light purple dashed box and contains three processes:
    * **Update Weight**
    * **Start vLLM**
    * **Terminate vLLM**

* **etcd:** An external distributed key-value store. It is enclosed in a light green box.

* **RDMA:** Remote Direct Memory Access, used for communication with other pods.

* **Other Pods:** Other pods in the system.

### Detailed Analysis

* **Megatron Sidecar Flow:**
  * The "Train" process connects to the "Onload" process.
  * The "Train" process connects to the "Offload" process.
  * The "Convert HF" process connects to the "Register Shard" process in the Checkpoint Engine.
  * The "Offload" process connects to the "Wait rollout" process.
  * The "Wait rollout" process connects to the "Onload" process.
  * The "Register Shard" process connects to the "Update Weight" process.
  * The "Update Weight" process connects to "Shared Memory".

* **vLLM Sidecar Flow:**
  * The "Rollout" process connects to the "Dummy Start" process.
  * The "Rollout" process connects to the "Terminate" process.
  * "Shared Memory" connects to the "Update Weight" process in the Checkpoint Engine.
  * The "Dummy Start" process connects to the "Rollout" process.
  * The "Terminate" process connects to the "Rollout" process.
  * The "Start vLLM" process connects to the "Update Weight" process.
  * The "Terminate vLLM" process connects to the "Update Weight" process.
  * The "Update Weight" process connects to the "Start vLLM" process.
  * The "Update Weight" process connects to the "Terminate vLLM" process.

* **External Communication:**
  * Both Checkpoint Engines (Megatron and vLLM) communicate with "etcd".
  * The vLLM Sidecar communicates with "Other Pods" via "RDMA".
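The Megatron-side connections above fit a loop of Train → Offload → Wait rollout → Onload → Train, with the vLLM side running one rollout per training step. The sketch below models that reading as a two-thread handshake. The loop order is an interpretation of the arrows, not something the diagram states. The event names and step labels are hypothetical and do not come from Megatron or vLLM.

```python
from __future__ import annotations

import threading


def run_iterations(steps: int) -> list[str]:
    """Simulate a trainer/rollout handshake for `steps` training steps (hypothetical model)."""
    log: list[str] = []
    weights_ready = threading.Event()  # set by the trainer once new weights are available
    rollout_done = threading.Event()   # set by the rollout side when generation finishes

    def trainer() -> None:
        for _ in range(steps):
            log.append("train")
            log.append("offload")        # release accelerator memory for the rollout engine
            weights_ready.set()          # signal that fresh weights can be pulled
            rollout_done.wait()          # the "Wait rollout" step
            rollout_done.clear()
            log.append("onload")         # restore training state before the next step

    def rollout() -> None:
        for _ in range(steps):
            weights_ready.wait()
            weights_ready.clear()
            log.append("update_weight")  # pull the newly published weights
            log.append("rollout")
            rollout_done.set()

    threads = [threading.Thread(target=trainer), threading.Thread(target=rollout)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return log
```

The two events make the interleaving deterministic. Each rollout can only start after the trainer has offloaded, and the trainer can only onload after the rollout has finished.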

### Key Observations

* The diagram illustrates a clear separation of concerns between the Megatron Sidecar (training) and the vLLM Sidecar (serving).

* The Checkpoint Engines (one in each sidecar) manage model checkpoints and carry weight updates between the two sidecars.

* The use of "Shared Memory" facilitates data transfer between the Megatron and vLLM sidecars.

* External communication with "etcd" and "Other Pods" highlights the distributed nature of the system.
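The diagram does not say how the "Shared Memory" block is implemented. The sketch below uses Python's `multiprocessing.shared_memory` to illustrate the hand-off. It assumes that the weights are flattened into a float32 buffer and that both sidecars share a host shared-memory namespace inside the pod. Both assumptions are illustrative, and `publish_weights` and `read_weights` are hypothetical names.

```python
from __future__ import annotations

import struct
from multiprocessing import shared_memory


def publish_weights(weights: list[float]) -> shared_memory.SharedMemory:
    """Trainer side (hypothetical): copy a flat float32 weight vector into a new shared block."""
    if not weights:
        raise ValueError("weights must be non-empty")
    shm = shared_memory.SharedMemory(create=True, size=4 * len(weights))
    # Pack the floats as native float32 values starting at offset 0.
    struct.pack_into(f"{len(weights)}f", shm.buf, 0, *weights)
    return shm


def read_weights(name: str, count: int) -> list[float]:
    """Rollout side (hypothetical): attach to the block by name and copy `count` floats out."""
    shm = shared_memory.SharedMemory(name=name)
    try:
        return list(struct.unpack_from(f"{count}f", shm.buf, 0))
    finally:
        shm.close()  # detach only; the creator is responsible for unlink()
```

The reader needs only the block's name and the element count, which the trainer would have to communicate separately.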

### Interpretation

The diagram depicts an architecture for training and serving large language models within one pod. Separating training and serving into distinct sidecars allows each to be scaled and optimized independently. The Checkpoint Engine and Shared Memory components carry model updates from the trainer to the serving engine and support efficient resource utilization. The external links to "etcd" and "Other Pods" indicate a distributed system intended for large workloads. The architecture is designed for continuous training, deployment, and serving of large language models in a dynamic environment.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: System Architecture for Model Training and Deployment

### Overview

The image depicts a system architecture diagram illustrating the interaction between two sidecars, Megatron and vLLM, within a pod, using shared memory and a checkpoint engine. The diagram outlines the process of model training (Megatron) and subsequent rollout/deployment (vLLM). The system also interacts with `etcd` and communicates with other pods via RDMA.

### Components/Axes

The diagram consists of the following components:

* **Megatron Sidecar:** Represented by a light blue box.

* **vLLM Sidecar:** Represented by a light green box.

* **Checkpoint Engine:** Represented by a purple box, present in both sidecars.

* **Shared Memory:** A central block connecting the two sidecars.

* **etcd:** A key-value store, positioned at the bottom center.

* **Other Pods:** Represented by a gray box, positioned at the bottom right.

* **RDMA:** Indicates Remote Direct Memory Access, connecting "Other Pods" to the vLLM sidecar.

* **Pod:** A dashed gray rectangle encompassing both sidecars.

The diagram uses arrows to indicate the flow of data and control between these components. The following processes are depicted:

* **Megatron Sidecar Processes:** Convert HF, Train, Onload, Offload, Wait Rollout, Register Shard, Update Weight.

* **vLLM Sidecar Processes:** Rollout, Dummy Start, Update Weight, Start vLLM, Terminate vLLM, Terminate.

### Detailed Analysis / Content Details

The diagram illustrates a workflow as follows:

1. **Megatron Sidecar:**
   * The process begins with "Convert HF".
   * "Train" initiates the model training process.
   * "Onload" and "Offload" represent data transfer operations.
   * "Wait Rollout" waits for the vLLM sidecar to be ready.
   * The "Checkpoint Engine" within the Megatron sidecar handles "Register Shard" and "Update Weight".

2. **Shared Memory:**
   * Data is transferred between the Megatron and vLLM sidecars via "Shared Memory".

3. **vLLM Sidecar:**
   * "Rollout" initiates the deployment process.
   * "Dummy Start" is a placeholder or initialization step.
   * "Update Weight" updates the model weights.
   * "Start vLLM" starts the vLLM service.
   * "Terminate vLLM" terminates the vLLM service.
   * The "Checkpoint Engine" within the vLLM sidecar is involved in updating weights and managing the vLLM lifecycle.

4. **External Interactions:**
   * The "Checkpoint Engine" in both sidecars interacts with `etcd`.
   * "Other Pods" communicate with the vLLM sidecar via RDMA.
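The vLLM sidecar steps listed above can be read as a small state machine. The sketch below assumes one plausible ordering: "Dummy Start" brings the engine up with placeholder weights, "Update Weight" loads real weights, "Rollout" generates, and "Terminate" stops the engine. The state names, action strings, and allowed transitions are illustrative and are not taken from vLLM.

```python
from __future__ import annotations

from enum import Enum, auto


class EngineState(Enum):
    STOPPED = auto()
    DUMMY_STARTED = auto()  # engine running, but weights are placeholders
    READY = auto()          # real weights loaded
    ROLLING_OUT = auto()


# Allowed transitions, keyed by (current state, action). Hypothetical.
TRANSITIONS = {
    (EngineState.STOPPED, "dummy_start"): EngineState.DUMMY_STARTED,
    (EngineState.DUMMY_STARTED, "update_weight"): EngineState.READY,
    (EngineState.READY, "update_weight"): EngineState.READY,
    (EngineState.READY, "rollout"): EngineState.ROLLING_OUT,
    (EngineState.ROLLING_OUT, "rollout_done"): EngineState.READY,
    (EngineState.DUMMY_STARTED, "terminate"): EngineState.STOPPED,
    (EngineState.READY, "terminate"): EngineState.STOPPED,
}


def step(state: EngineState, action: str) -> EngineState:
    """Apply one action, rejecting anything the table does not allow."""
    try:
        return TRANSITIONS[(state, action)]
    except KeyError:
        raise ValueError(f"{action!r} is not allowed in state {state.name}") from None


def run(actions: list[str]) -> EngineState:
    """Replay a sequence of actions starting from a stopped engine."""
    state = EngineState.STOPPED
    for action in actions:
        state = step(state, action)
    return state
```

Under this reading, the point of a dummy start is that the engine can be launched before trained weights exist. The table therefore refuses `rollout` until `update_weight` has run.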

### Key Observations

* The diagram highlights a clear separation of concerns between model training (Megatron) and model deployment (vLLM).

* The use of "Shared Memory" suggests a high-performance data exchange mechanism.

* The "Checkpoint Engine" plays a crucial role in both training and deployment, likely managing model versions and updates.

* The interaction with `etcd` indicates a distributed coordination system.

* RDMA is used for efficient communication with other pods.

### Interpretation

This diagram represents a sophisticated system for training and deploying large language models. The architecture leverages sidecars to isolate the training and deployment processes, promoting modularity and scalability. The use of shared memory suggests an attempt to minimize data transfer overhead, crucial for large models. The checkpoint engine and `etcd` integration provide robust model management and coordination capabilities. The RDMA connection to other pods indicates a distributed deployment strategy.

The diagram suggests a workflow where the Megatron sidecar trains the model, periodically updating weights in shared memory. The vLLM sidecar then rolls out the updated model, utilizing the shared weights for inference. The `etcd` store likely maintains metadata about the model versions and their availability. The overall design emphasizes efficiency, scalability, and reliability in the context of large language model serving. The "Dummy Start" process in the vLLM sidecar is a curious element, potentially representing a pre-initialization step or a placeholder for future functionality.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## System Architecture Diagram: Distributed LLM Training and Inference Pod

### Overview

The image is a technical system architecture diagram illustrating the components and data flow within a single computational "pod" designed for distributed large language model (LLM) training and inference. The diagram is divided into two primary subsystems, the Megatron Sidecar and the vLLM Sidecar, which coordinate through shared memory and external services.

### Components/Axes

The diagram is structured within a large, rounded rectangle labeled **"pod"** at the top center. Inside, two major colored regions define the subsystems:

1. **Megatron Sidecar (Left, Light Blue Background):**
   * **Components (Boxes):** `Train`, `Onload`, `Offload`, `Wait rollout`, `Convert HF`, `Register Shard`, `Update Weight`, `Checkpoint Engine`.
   * **Flow:** Arrows indicate a cyclical process between `Train`, `Onload`, and `Offload`. `Offload` connects to `Wait rollout`. `Convert HF` feeds into `Register Shard`, which connects to `Update Weight`. Both `Register Shard` and `Update Weight` are contained within a dashed purple box labeled `Checkpoint Engine`.

2. **vLLM Sidecar (Right, Light Green Background):**
   * **Components (Boxes):** `Rollout`, `Dummy Start`, `Terminate`, `Update Weight`, `Start vLLM`, `Terminate vLLM`, `Checkpoint Engine`.
   * **Flow:** `Rollout` connects to both `Dummy Start` and `Terminate`. `Dummy Start` and `Start vLLM` both feed into `Update Weight`. `Update Weight` also receives input from `Terminate vLLM`. `Terminate` connects to `Terminate vLLM`. The components `Start vLLM`, `Update Weight`, and `Terminate vLLM` are contained within a dashed purple box labeled `Checkpoint Engine`.

3. **Shared Components & External Interfaces:**
   * **Shared Memory (Center, Purple Background):** A central box labeled `Shared Memory` sits between the two sidecars. It receives an arrow from the Megatron Sidecar's `Update Weight` and sends an arrow to the vLLM Sidecar's `Update Weight`.
   * **etcd (Bottom Center, Light Green Box):** An external service labeled `etcd` has bidirectional arrows connecting to both the Megatron and vLLM `Checkpoint Engine` components.
   * **Other Pods (Bottom Right, Gray Box):** A component labeled `Other Pods` is connected via a line labeled **"RDMA"** to the vLLM Sidecar's `Checkpoint Engine`.
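Suppose `Register Shard` publishes each training rank's slice of a weight. The receiving `Update Weight` step must then reassemble those slices in rank order. The sketch below assumes row-wise (dimension-0) tensor-parallel sharding and uses plain nested lists in place of GPU tensors. The sharding scheme is an assumption, since the diagram does not specify one, and `shard_rows` and `merge_rows` are hypothetical helpers.

```python
from typing import Dict, List

Matrix = List[List[float]]


def shard_rows(weight: Matrix, world_size: int) -> Dict[int, Matrix]:
    """Split a weight matrix into `world_size` contiguous row blocks keyed by rank."""
    rows = len(weight)
    if world_size <= 0 or rows % world_size != 0:
        raise ValueError("row count must be divisible by a positive world_size")
    per_rank = rows // world_size
    return {rank: weight[rank * per_rank:(rank + 1) * per_rank] for rank in range(world_size)}


def merge_rows(shards: Dict[int, Matrix]) -> Matrix:
    """Reassemble shards in rank order, regardless of the order they arrived in."""
    merged: Matrix = []
    for rank in sorted(shards):
        merged.extend(shards[rank])
    return merged
```

Sorting by rank in `merge_rows` matters if shards arrive out of order, for example when several ranks register concurrently.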

### Detailed Analysis

**Spatial Layout & Connections:**

* The **Megatron Sidecar** occupies the left ~45% of the pod. Its internal `Checkpoint Engine` (purple dashed box) is positioned at the bottom of its region.

* The **vLLM Sidecar** occupies the right ~45% of the pod. Its internal `Checkpoint Engine` is also at the bottom of its region.

* The **Shared Memory** component is centrally located, acting as a bridge between the two sidecars' `Update Weight` processes.

* **etcd** is positioned centrally below the pod, indicating its role as a shared coordination service for both checkpoint engines.

* **Other Pods** are external, connected to the vLLM side via a high-speed **RDMA** (Remote Direct Memory Access) link.

**Process Flow (Inferred from Arrows):**

1. **Megatron Sidecar (Training Focus):** The core loop appears to be `Train` -> `Onload` -> `Offload` -> `Wait rollout` -> back to `Train`. Parallel to this, model conversion (`Convert HF`) leads to sharding (`Register Shard`) and weight updates (`Update Weight`) within its checkpoint engine.

2. **vLLM Sidecar (Inference/Rollout Focus):** The process involves initiating rollouts (`Rollout`), which can start via a `Dummy Start` or a full `Start vLLM`. Weight updates (`Update Weight`) are a central hub, receiving inputs from start processes and termination signals (`Terminate vLLM`). The process can be cleanly stopped via `Terminate`.

3. **Coordination:** Model weights are synchronized from the Megatron training side to the vLLM inference side via **Shared Memory**. Both sides persist state and coordinate with the external **etcd** service. The vLLM side can also communicate with other pods via **RDMA**.
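The coordination role attributed to etcd can be illustrated with a versioned key layout. This sketch uses an in-memory dictionary in place of etcd so that it runs without a server. The key scheme (`/weights/<version>/shard/<rank>`), the `latest` pointer, the location strings, and all function names are hypothetical. With a real etcd deployment, the same pattern would use put, get, and prefix reads. Ideally it would also use a transaction so that the pointer only advances once every shard key has been written.

```python
from __future__ import annotations

from typing import Dict, Optional


class FakeKV:
    """In-memory stand-in for a key-value store such as etcd (illustration only)."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def get_prefix(self, prefix: str) -> Dict[str, str]:
        return {k: v for k, v in self._data.items() if k.startswith(prefix)}


def register_shards(kv: FakeKV, version: int, locations: Dict[int, str]) -> None:
    """Trainer side: record where each rank's shard lives, then advance the version pointer last."""
    for rank, location in locations.items():
        kv.put(f"/weights/{version}/shard/{rank}", location)
    kv.put("/weights/latest", str(version))


def shards_to_load(kv: FakeKV, loaded_version: int) -> Optional[Dict[int, str]]:
    """Rollout side: return shard locations if a newer version exists, else None."""
    latest = kv.get("/weights/latest")
    if latest is None or int(latest) <= loaded_version:
        return None
    prefix = f"/weights/{latest}/shard/"
    return {int(k[len(prefix):]): v for k, v in kv.get_prefix(prefix).items()}
```

Writing the `latest` pointer last means a reader never sees a version whose shard keys are still missing.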

### Key Observations

* **Asymmetric Design:** The two sidecars have distinct, specialized component sets. Megatron is oriented around a training loop and model sharding, while vLLM is oriented around managing inference instances (`vLLM`) and rollout processes.

* **Centralized Weight Update:** The `Update Weight` component is a critical junction in both sidecars, suggesting that synchronizing model parameters is a key operation.

* **Dual Checkpoint Engines:** Each sidecar has its own `Checkpoint Engine`, implying independent state management for training and inference processes, coordinated via `etcd`.

* **Explicit External Links:** The diagram explicitly shows integration points with external systems (`etcd` for coordination, `Other Pods` via `RDMA` for distributed communication).

### Interpretation

This diagram depicts a sophisticated architecture for decoupling LLM training from online inference/rollout within a single pod. The **Megatron Sidecar** likely handles the heavy computation of model training, while the **vLLM Sidecar** manages low-latency inference, possibly for reinforcement learning from human feedback (RLHF) or online serving.

The **Shared Memory** bridge is crucial for efficiently transferring updated model weights from the training engine to the inference engine without going through slower storage or network layers. The use of **etcd** suggests a need for strong consistency in managing distributed state (like checkpoint metadata) across the two subsystems. The **RDMA** link to **Other Pods** indicates this pod is part of a larger cluster, where high-speed, low-latency communication between inference instances on different nodes is required.

The architecture solves a key challenge in modern AI systems: how to continuously improve a model (training) while simultaneously serving it or using it to generate new data (inference/rollout) with minimal latency and data transfer overhead. The separation into "sidecars" within a pod allows for independent scaling and lifecycle management of these two workloads.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Distributed Machine Learning Workflow Architecture

### Overview

The diagram illustrates a distributed machine learning workflow architecture with two primary components: **Megatron Sidecar** (blue) and **vLLM Sidecar** (green), connected via a **Checkpoint Engine** (purple). Processes flow between these components, with infrastructure elements like **etcd** (green) and **RDMA** (gray) at the bottom. Arrows indicate directional relationships between steps.

### Components/Axes

#### Key Labels and Elements:

1. **Megatron Sidecar (Blue):**
   - `Convert HF` → `Train` → `Onload`
   - `Offload` → `Wait rollout`
   - `Register Shard` → `Update Weight`
   - `Checkpoint Engine` (central hub for shard/weight management).

2. **vLLM Sidecar (Green):**
   - `Rollout` → `Dummy Start`
   - `Update Weight` → `Start vLLM` → `Terminate vLLM`
   - Feedback loop: `Terminate vLLM` → `Update Weight`.

3. **Checkpoint Engine (Purple):**
   - Connects `Register Shard` (Megatron) and `Update Weight` (vLLM).
   - Shares the `Shared Memory` region with the vLLM Sidecar.

4. **Infrastructure:**
   - `etcd` (green): Centralized key-value store for coordination.
   - `RDMA` (gray): High-speed network interface.
   - `Other Pods`: External components interacting via RDMA.

#### Flow Direction:

- **Megatron → Checkpoint Engine**: `Register Shard` and `Update Weight` propagate to the Checkpoint Engine.

- **Checkpoint Engine → vLLM**: `Update Weight` and `Shared Memory` are shared with the vLLM Sidecar.

- **vLLM → Checkpoint Engine**: `Update Weight` feedback loop from `Terminate vLLM`.

### Detailed Analysis

- **Megatron Sidecar:**
  - `Convert HF`: Converts Hugging Face models for training.
  - `Train` → `Onload`: Training process followed by data loading.
  - `Offload` → `Wait rollout`: Model weights offloaded to disk, awaiting rollout completion.
  - `Register Shard`: Shard registration for distributed training.
  - `Update Weight`: Weight updates synchronized via the Checkpoint Engine.

- **vLLM Sidecar:**
  - `Rollout`: Model deployment for inference.
  - `Dummy Start`: Placeholder for model initialization.
  - `Update Weight`: Weight updates from Megatron or feedback loops.
  - `Start vLLM` → `Terminate vLLM`: Lifecycle management of inference instances.

- **Checkpoint Engine:**
  - Acts as a central coordinator for shard/weight synchronization.
  - `Shared Memory`: Enables low-latency communication between sidecars.

- **Infrastructure:**
  - `etcd`: Likely manages distributed state (e.g., pod status, configuration).
  - `RDMA`: Facilitates high-throughput, low-latency data transfer between pods.

### Key Observations

1. **Feedback Loops:**
   - `Terminate vLLM` → `Update Weight` suggests iterative model refinement.
   - `Offload` → `Wait rollout` indicates staged model deployment.

2. **Component Coupling:**
   - The Checkpoint Engine bridges Megatron (training) and vLLM (inference), enabling real-time weight updates.
   - `Shared Memory` reduces latency in cross-sidecar communication.

3. **Infrastructure Role:**
   - `etcd` and `RDMA` support scalability and performance in distributed environments.

### Interpretation

This architecture represents a **model-as-a-service (MaaS)** system where:

- **Megatron Sidecar** handles training and weight management.

- **vLLM Sidecar** manages inference rollouts and lifecycle.

- The **Checkpoint Engine** ensures consistency between training and inference by synchronizing weights and shards.

- **RDMA** and **etcd** optimize performance and coordination in a distributed setup.

The diagram emphasizes **modularity** (separate training/inference pipelines) and **efficiency** (low-latency updates via shared memory and RDMA). The feedback loops suggest a dynamic system where inference results may inform training adjustments.
