## Diagram: Processing Unit and Memory Interaction - Conventional vs. Computational

### Overview

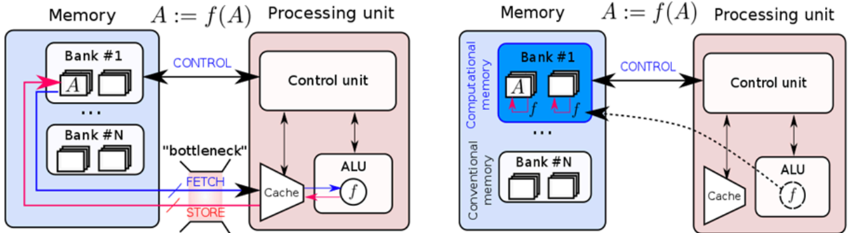

The image presents a comparative diagram illustrating two architectures for processing unit and memory interaction. The left side depicts a conventional architecture, while the right side shows a computational memory architecture. Both diagrams represent the flow of data and control signals during a computation defined as A := f(A), where A is a variable and f is a function.

### Components/Axes

The diagram consists of two main sections, each representing an architecture. Each section contains:

* **Memory:** Divided into banks labeled "Bank #1" through "Bank #N".

* **Processing Unit:** Comprising a Control Unit, Arithmetic Logic Unit (ALU), and Cache.

* **Connections:** Arrows indicating data and control flow. Labels on these arrows include "CONTROL", "FETCH", "STORE", and "bottleneck".

* **Mathematical Notation:** "A := f(A)" appears above each diagram, representing the computation being performed.

* **Data Representation:** The variable 'A' is shown within the memory banks. In the computational memory architecture, the function 'f' is also stored within the memory bank alongside 'A'.

### Detailed Analysis

**Left Diagram (Conventional Architecture):**

* **Memory:** The memory is divided into 'N' banks. The variable 'A' is located in Bank #1. Data flow between the memory and the processing unit is represented by pink arrows for 'FETCH' and 'STORE', and a black arrow for 'CONTROL'.

* **Processing Unit:** The 'FETCH' arrow moves data from memory into the Cache. The Cache passes the data to the ALU, which applies the function 'f' to it. The result is then written back to memory along the 'STORE' arrow. The 'bottleneck' label sits next to the 'FETCH' and 'STORE' arrows, marking the memory-to-processor transfer as the performance limitation.

* **ALU:** The output of the ALU is labeled 'f'.
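The fetch, compute, store sequence described above can be sketched in a few lines of Python. This is a minimal illustrative model, not something taken from the diagram: the class `ConventionalMemory`, the function `conventional_update`, the bank sizes, and the `words_transferred` counter are all hypothetical names and values, and `f` is assumed to be applied element by element.

```python
from typing import Callable, List


class ConventionalMemory:
    """Hypothetical model of N memory banks that counts words crossing the bus."""

    def __init__(self, num_banks: int, bank_size: int) -> None:
        self.banks: List[List[int]] = [[0] * bank_size for _ in range(num_banks)]
        self.words_transferred = 0  # traffic on the FETCH/STORE path

    def fetch(self, bank: int) -> List[int]:
        """FETCH: copy a bank's contents out of memory."""
        data = list(self.banks[bank])
        self.words_transferred += len(data)
        return data

    def store(self, bank: int, data: List[int]) -> None:
        """STORE: write data back into a bank."""
        self.banks[bank] = list(data)
        self.words_transferred += len(data)


def conventional_update(mem: ConventionalMemory, bank: int,
                        f: Callable[[int], int]) -> None:
    """Perform A := f(A) with A held in the given bank."""
    cache = mem.fetch(bank)            # memory -> cache
    result = [f(x) for x in cache]     # ALU applies f (assumed elementwise)
    mem.store(bank, result)            # processing unit -> memory


mem = ConventionalMemory(num_banks=4, bank_size=8)
mem.banks[0] = list(range(8))          # A lives in Bank #1 (index 0)
conventional_update(mem, 0, lambda x: x + 1)
print(mem.banks[0])                    # [1, 2, 3, 4, 5, 6, 7, 8]
print(mem.words_transferred)           # 16
```

In this model every word of `A` crosses the bus twice per update, which is the traffic the 'bottleneck' label points at.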

**Right Diagram (Computational Memory Architecture):**

* **Memory:** The memory is divided into 'N' banks. Bank #1 contains both the variable 'A' and the function 'f'. The memory is further labeled as "Computational memory" and "Conventional memory".

* **Processing Unit:** The 'CONTROL' signal is sent from the Control Unit to the memory. The ALU receives the function 'f' directly from the memory (Bank #1) via a dotted arrow, bypassing the Cache.

* **ALU:** The output of the ALU is labeled 'f' with a circular arrow around it, suggesting an iterative process or feedback loop.
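One possible reading of the right-hand diagram can be sketched as follows, assuming that `f` is executed inside the bank that holds `A` and that only a control command leaves the processing unit. The names `ComputationalBank`, `ControlUnit`, `apply_in_place`, and both counters are hypothetical, introduced only for illustration.

```python
from typing import Callable, List


class ComputationalBank:
    """Hypothetical bank that can apply a function to its own contents."""

    def __init__(self, size: int) -> None:
        self.cells: List[int] = [0] * size

    def apply_in_place(self, f: Callable[[int], int]) -> None:
        # The update happens where A is stored; nothing is copied out.
        for i, value in enumerate(self.cells):
            self.cells[i] = f(value)


class ControlUnit:
    """Hypothetical control unit that issues commands instead of moving data."""

    def __init__(self, banks: List[ComputationalBank]) -> None:
        self.banks = banks
        self.control_messages = 0
        self.data_words_on_bus = 0  # never incremented in this model

    def update(self, bank_index: int, f: Callable[[int], int]) -> None:
        """Perform A := f(A) by sending one CONTROL command to the bank."""
        self.control_messages += 1
        self.banks[bank_index].apply_in_place(f)


banks = [ComputationalBank(8) for _ in range(4)]
banks[0].cells = list(range(8))        # A lives in Bank #1 (index 0)
cu = ControlUnit(banks)
cu.update(0, lambda x: x + 1)
print(banks[0].cells)                  # [1, 2, 3, 4, 5, 6, 7, 8]
print(cu.control_messages, cu.data_words_on_bus)  # 1 0
```

The design choice here is that the processing unit keeps its control role while the operands never leave the bank, which matches the absence of 'FETCH' and 'STORE' labels in the description of the right diagram.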

### Key Observations

* The computational memory architecture aims to reduce the "bottleneck" associated with data transfer between memory and the processing unit by storing the function 'f' directly in memory alongside the data 'A'.

* The dotted arrow in the computational memory architecture indicates a direct connection between the memory and the ALU for the function 'f', bypassing the Cache.

* The conventional architecture relies heavily on the Cache for intermediate data and function storage, potentially leading to performance limitations.

### Interpretation

The diagram contrasts a traditional von Neumann architecture (conventional) with a computational memory architecture. The conventional architecture suffers from the "memory wall" problem, also known as the von Neumann bottleneck, where the speed of data transfer between the processor and memory limits overall performance. The computational memory architecture attempts to address this by bringing computation closer to the data, reducing the need for frequent data transfers.

The presence of 'f' within the memory bank in the computational architecture suggests that the processing is partially or fully offloaded to the memory itself. This is a key characteristic of in-memory computing and near-data processing. The dotted line represents a more efficient pathway for the function 'f' to reach the ALU, potentially improving performance.

The diagram points to the potential benefits of integrating computation and memory: lower latency, less pressure on memory bandwidth, and improved energy efficiency, since less data has to move. The 'A := f(A)' notation describes an in-place update, in which the result of 'f' overwrites its own operand. Many computations repeat such updates, and the computational memory architecture is designed to perform them without moving 'A' out of memory.
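To make the data-movement argument concrete, the sketch below counts the words that cross the memory bus when `A := f(A)` is repeated. It is a back-of-the-envelope model under stated assumptions, not a measurement: the conventional case is assumed to fetch and store all of `A` on every iteration with no cache reuse, and the computational case is assumed to send only control commands. The function names and the size of 1024 words are hypothetical.

```python
def conventional_bus_words(words_in_a: int, iterations: int) -> int:
    """Words moved when each iteration fetches and stores all of A.

    Assumes no cache reuse between iterations (a pessimistic simplification).
    """
    return 2 * words_in_a * iterations


def computational_bus_words(words_in_a: int, iterations: int) -> int:
    """Words moved when f is applied inside the memory bank.

    Assumes only control commands cross the bus, so no data words move.
    """
    return 0


for k in (1, 10, 100):
    print(k, conventional_bus_words(1024, k), computational_bus_words(1024, k))
```

Under these assumptions the conventional traffic grows linearly with both the size of `A` and the number of iterations, while the computational case stays flat. Real systems fall somewhere in between, since caches absorb part of the conventional traffic.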