TECHNICAL ASSET FINGERPRINT

b38d38598538bce83220bd41

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

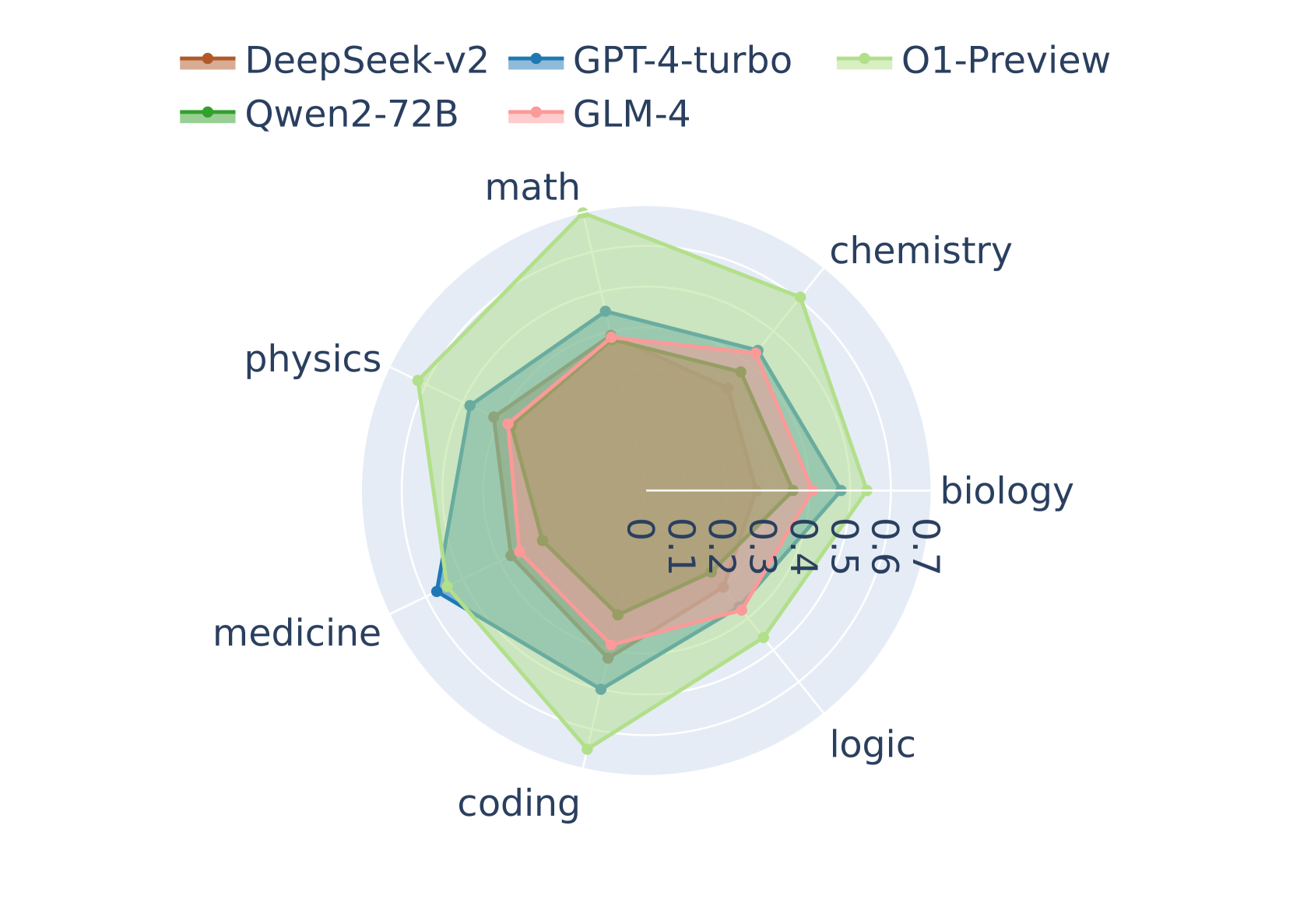

## Radar Chart: Model Performance Across Disciplines

### Overview

The image is a radar chart comparing the performance of five different models (DeepSeek-v2, GPT-4-turbo, O1-Preview, Qwen2-72B, and GLM-4) across seven disciplines: math, chemistry, biology, logic, coding, medicine, and physics. The chart visualizes the relative strengths and weaknesses of each model in these areas.

### Components/Axes

* **Axes:** The chart has seven radial axes, each representing a different discipline: math, chemistry, biology, logic, coding, medicine, and physics.

* **Scale:** The radial scale ranges from 0 to 0.7, with increments of 0.1.

* **Models (Legend):**
  * DeepSeek-v2 (brown)
  * GPT-4-turbo (blue)
  * O1-Preview (light green)
  * Qwen2-72B (green)
  * GLM-4 (light red)

### Detailed Analysis

Here's a breakdown of each model's performance in each discipline:

* **DeepSeek-v2 (brown):**
  * math: ~0.45
  * chemistry: ~0.45
  * biology: ~0.45
  * logic: ~0.45
  * coding: ~0.45
  * medicine: ~0.45
  * physics: ~0.45
  * Trend: Relatively consistent performance across all disciplines.
* **GPT-4-turbo (blue):**
  * math: ~0.3
  * chemistry: ~0.3
  * biology: ~0.3
  * logic: ~0.3
  * coding: ~0.1
  * medicine: ~0.2
  * physics: ~0.3
  * Trend: Relatively consistent performance across most disciplines, with a dip in coding and medicine.
* **O1-Preview (light green):**
  * math: ~0.65
  * chemistry: ~0.7
  * biology: ~0.7
  * logic: ~0.7
  * coding: ~0.7
  * medicine: ~0.7
  * physics: ~0.7
  * Trend: Consistently high performance across all disciplines.
* **Qwen2-72B (green):**
  * math: ~0.35
  * chemistry: ~0.4
  * biology: ~0.4
  * logic: ~0.4
  * coding: ~0.5
  * medicine: ~0.4
  * physics: ~0.4
  * Trend: Relatively consistent performance across most disciplines, with a slight increase in coding.
* **GLM-4 (light red):**
  * math: ~0.3
  * chemistry: ~0.3
  * biology: ~0.3
  * logic: ~0.3
  * coding: ~0.3
  * medicine: ~0.3
  * physics: ~0.3
  * Trend: Consistent performance across all disciplines.
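The per-model readings above can be condensed into a mean and a range per model. The following is a minimal sketch that assumes only the approximate values listed in this description; the names `READINGS` and `summarize` are illustrative and do not come from the source.

```python
from statistics import mean

# Axis order used for every list below.
DISCIPLINES = ["math", "chemistry", "biology", "logic", "coding", "medicine", "physics"]

# Approximate readings copied from the list above (visual estimates, not exact scores).
READINGS = {
    "DeepSeek-v2": [0.45, 0.45, 0.45, 0.45, 0.45, 0.45, 0.45],
    "GPT-4-turbo": [0.3, 0.3, 0.3, 0.3, 0.1, 0.2, 0.3],
    "O1-Preview": [0.65, 0.7, 0.7, 0.7, 0.7, 0.7, 0.7],
    "Qwen2-72B": [0.35, 0.4, 0.4, 0.4, 0.5, 0.4, 0.4],
    "GLM-4": [0.3, 0.3, 0.3, 0.3, 0.3, 0.3, 0.3],
}


def summarize(readings):
    """Return {model: (mean rounded to 2 decimals, minimum, maximum)}."""
    return {
        model: (round(mean(values), 2), min(values), max(values))
        for model, values in readings.items()
    }


summary = summarize(READINGS)
for model, (avg, low, high) in summary.items():
    print(f"{model}: mean={avg}, range={low}-{high}")
```

A flat profile shows up as identical minimum and maximum, while a dip such as the GPT-4-turbo coding value widens the range.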

### Key Observations

* O1-Preview (light green) consistently outperforms the other models across all disciplines.

* DeepSeek-v2 (brown) shows relatively consistent performance across all disciplines, but at a lower level than O1-Preview.

* GPT-4-turbo (blue) has a noticeable dip in performance in coding compared to other disciplines.

* GLM-4 (light red) shows consistent performance across all disciplines.

* Qwen2-72B (green) shows a slight increase in coding performance compared to other disciplines.

### Interpretation

The radar chart provides a visual comparison of the strengths and weaknesses of different models across various academic disciplines. O1-Preview appears to be the most versatile and high-performing model, while the other models exhibit varying degrees of specialization or consistent performance at lower levels. The chart highlights the importance of considering specific domain requirements when selecting a model, as some models may excel in certain areas while underperforming in others. The consistent performance of DeepSeek-v2 and GLM-4 suggests a balanced approach, while the dip in GPT-4-turbo's coding performance indicates a potential area for improvement or a trade-off in its design.

EXPERT: gemini-2.5-flash-lite-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

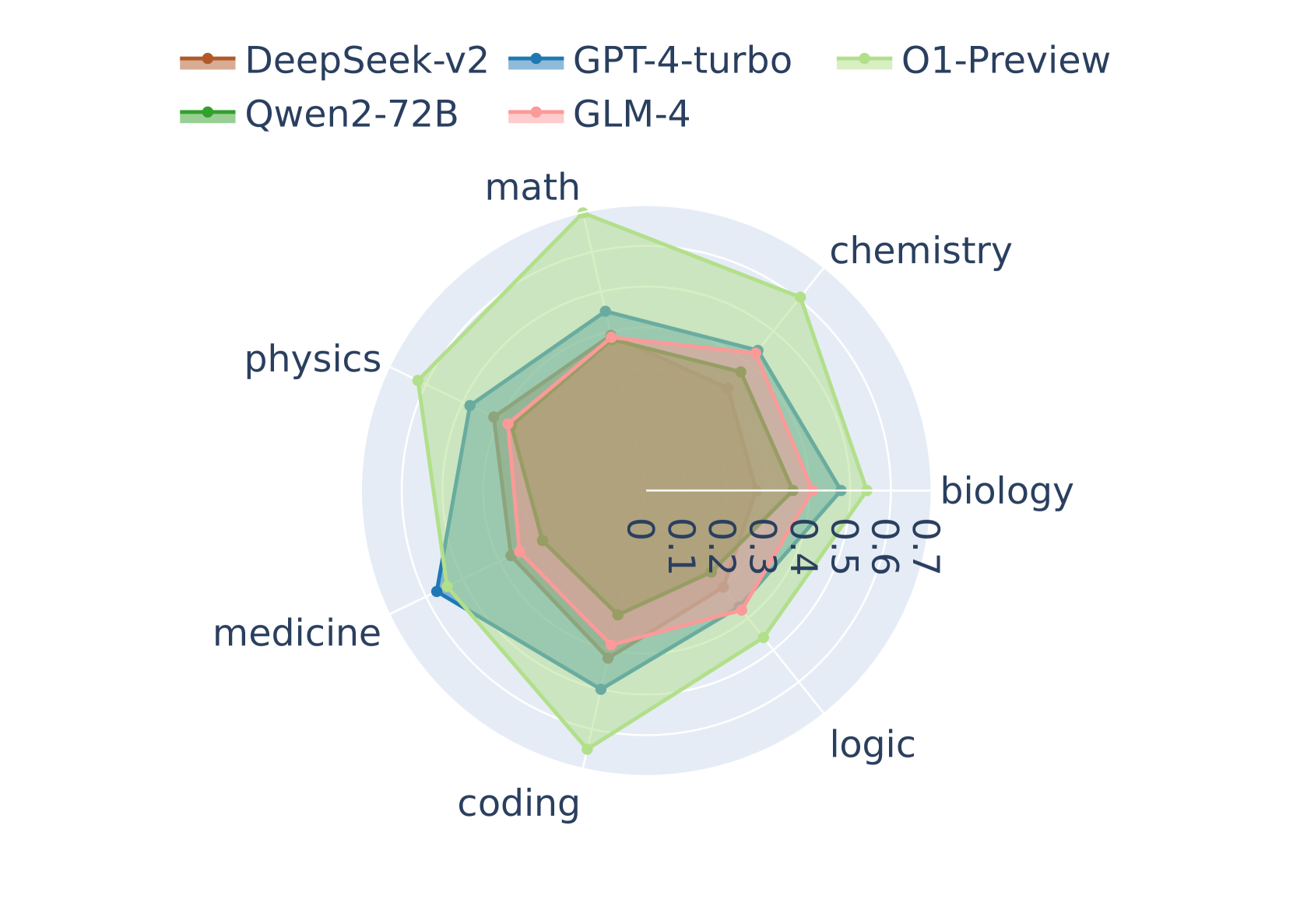

## Radar Chart: Model Performance Across Domains

### Overview

This image displays a radar chart comparing the performance of five different models across seven distinct domains: math, chemistry, biology, logic, coding, medicine, and physics. Each model is represented by a colored line and shaded area, with a data point plotted on each domain axis at the model's score. The chart uses a radial scale to indicate performance values.

### Components/Axes

**Legend:**

The legend is located in the top-left quadrant of the image and identifies the following models with their corresponding colors:

* **DeepSeek-v2**: Brown line with circular markers.

* **Qwen2-72B**: Green line with circular markers.

* **GPT-4-turbo**: Blue line with circular markers.

* **GLM-4**: Pink line with circular markers.

* **O1-Preview**: Light green line with circular markers.

**Radial Axes (Domains):**

The chart has seven radial axes, each representing a domain:

* **math**: Located at the top.

* **chemistry**: Located to the top-right.

* **biology**: Located to the right.

* **logic**: Located to the bottom-right.

* **coding**: Located at the bottom.

* **medicine**: Located to the bottom-left.

* **physics**: Located to the left.

**Radial Scale:**

The radial scale is displayed along the right side of the chart, indicating performance values from 0.0 to 0.7, with increments of 0.1. The scale is marked as follows: 0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7.

### Detailed Analysis

The following data points are extracted by visually inspecting the chart and cross-referencing the colored markers with the legend and radial scale. Approximate values are provided with an estimated uncertainty of +/- 0.02 due to visual interpretation.

**DeepSeek-v2 (Brown):**

* **Trend:** The brown line shows a generally high and relatively consistent performance across most domains, peaking in math and chemistry, and dipping slightly in medicine and coding.
* **Data Points:**
  * math: ~0.68
  * chemistry: ~0.65
  * biology: ~0.55
  * logic: ~0.50
  * coding: ~0.45
  * medicine: ~0.48
  * physics: ~0.60

**Qwen2-72B (Green):**

* **Trend:** The green line exhibits the highest performance across all domains, forming the outermost boundary of the chart.
* **Data Points:**
  * math: ~0.70
  * chemistry: ~0.68
  * biology: ~0.65
  * logic: ~0.62
  * coding: ~0.60
  * medicine: ~0.63
  * physics: ~0.67

**GPT-4-turbo (Blue):**

* **Trend:** The blue line shows moderate performance, with a notable dip in coding and medicine, and a peak in math.
* **Data Points:**
  * math: ~0.45
  * chemistry: ~0.35
  * biology: ~0.30
  * logic: ~0.32
  * coding: ~0.15
  * medicine: ~0.20
  * physics: ~0.40

**GLM-4 (Pink):**

* **Trend:** The pink line indicates performance generally higher than GPT-4-turbo but lower than DeepSeek-v2 and Qwen2-72B, with a relatively even distribution across domains.
* **Data Points:**
  * math: ~0.55
  * chemistry: ~0.50
  * biology: ~0.45
  * logic: ~0.48
  * coding: ~0.40
  * medicine: ~0.42
  * physics: ~0.52

**O1-Preview (Light Green):**

* **Trend:** The light green line shows performance that is lower than DeepSeek-v2 in every domain and lower than GLM-4 in logic, coding, and medicine, with a significant dip in medicine and coding, and a peak in math and chemistry.
* **Data Points:**
  * math: ~0.60
  * chemistry: ~0.58
  * biology: ~0.48
  * logic: ~0.45
  * coding: ~0.35
  * medicine: ~0.38
  * physics: ~0.55
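The trend statements in this description (for example, that Qwen2-72B forms the outermost boundary) can be checked against the extracted data points. The sketch below assumes only the approximate values listed here; `SCORES` and `extreme_per_domain` are illustrative names, not part of any tool used to produce the chart.

```python
DOMAINS = ["math", "chemistry", "biology", "logic", "coding", "medicine", "physics"]

# Approximate values as listed above (each is a visual estimate, roughly +/- 0.02).
SCORES = {
    "DeepSeek-v2": [0.68, 0.65, 0.55, 0.50, 0.45, 0.48, 0.60],
    "Qwen2-72B": [0.70, 0.68, 0.65, 0.62, 0.60, 0.63, 0.67],
    "GPT-4-turbo": [0.45, 0.35, 0.30, 0.32, 0.15, 0.20, 0.40],
    "GLM-4": [0.55, 0.50, 0.45, 0.48, 0.40, 0.42, 0.52],
    "O1-Preview": [0.60, 0.58, 0.48, 0.45, 0.35, 0.38, 0.55],
}


def extreme_per_domain(scores, domains, pick=max):
    """Map each domain to the model with the highest (pick=max) or lowest (pick=min) score."""
    return {
        domain: pick(scores, key=lambda model: scores[model][i])
        for i, domain in enumerate(domains)
    }


leaders = extreme_per_domain(SCORES, DOMAINS, pick=max)
trailers = extreme_per_domain(SCORES, DOMAINS, pick=min)
print("highest per domain:", leaders)
print("lowest per domain:", trailers)
```

With these readings, a single model name in `leaders` across all seven domains corresponds to a polygon that encloses all the others.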

### Key Observations

* **Qwen2-72B** demonstrates superior performance across all measured domains, consistently achieving the highest scores.

* **O1-Preview** and **DeepSeek-v2** show strong performance in STEM-related fields (math, chemistry, physics, biology) but tend to perform less well in logic, coding, and medicine compared to their scores in other areas.

* **GPT-4-turbo** exhibits the lowest overall performance among the models, with a particularly pronounced weakness in coding and medicine.

* **GLM-4** offers a balanced performance across all domains, falling in the middle tier of the models.

* There is a clear clustering of performance for most models in the STEM domains, with some models showing specific areas of weakness, most notably GPT-4-turbo in coding.

### Interpretation

This radar chart visually represents a comparative performance evaluation of several AI models across different subject areas. The data suggests that:

1. **Model Specialization vs. Generalization:** Qwen2-72B appears to be a highly generalized and performant model across the board. Other models, like O1-Preview and DeepSeek-v2, show strengths in certain areas (STEM) but may require further development in others (coding, medicine). GPT-4-turbo's performance profile indicates potential limitations or areas where it is less optimized compared to the others.

2. **Competitive Landscape:** The chart highlights the competitive nature of AI model development. Qwen2-72B is currently leading in this specific evaluation. The relative positioning of the other models (DeepSeek-v2, GLM-4, O1-Preview, GPT-4-turbo) provides insights into their current capabilities and potential areas for improvement.

3. **Domain-Specific Challenges:** The varying performance across domains suggests that different AI models may be better suited for specific tasks. For instance, models excelling in math and chemistry might be more suitable for scientific research or data analysis in those fields, while models with strong coding capabilities would be preferred for software development tasks. The significant drop in performance for some models in coding and medicine could indicate that these domains present unique challenges for current AI architectures or training methodologies.

4. **Peircean Investigative Reading:** From a semiotic perspective, the chart acts as a signifier of model capabilities. The visual representation allows for a quick, albeit simplified, interpretation of complex performance data. The "iconic" nature of the radar chart, where the shape and extent of the polygons visually represent the performance, facilitates immediate understanding. However, a deeper "indexical" reading would require understanding the specific metrics used to generate these scores and the context of the evaluation. The "symbolic" aspect is present in the labels and the established conventions of radar charts. The data suggests a hierarchy of performance, with Qwen2-72B as the apex, and GPT-4-turbo at the nadir, in this particular assessment. The relative distances between the lines and the center, and between the lines themselves, are crucial indices of comparative strength and weakness. The clustering of points for some models in specific domains might indicate underlying architectural biases or training data limitations.

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Radar Chart: AI Model Performance Across Scientific and Technical Domains

### Overview

This image is a radar chart (also known as a spider chart) comparing the performance of five different Large Language Models (LLMs) across seven distinct academic and technical subject areas. The chart uses concentric heptagonal grid lines to represent a numerical scoring scale, with the models' scores plotted as colored polygons. All text in the image is in English.

### Components/Axes

**1. Header Region (Legend)**

Located at the top center of the image, the legend identifies the five data series (AI models) by color and line style. All lines feature circular markers at the data points.

* **DeepSeek-v2:** Brown/Orange line

* **GPT-4-turbo:** Blue line

* **O1-Preview:** Light Green line

* **Qwen2-72B:** Dark Green line

* **GLM-4:** Pink/Light Red line

**2. Main Chart Region (Axes and Scale)**

* **Categories (Axes):** Seven axes radiate from the center, dividing the chart into equal segments. Reading clockwise from the top (12 o'clock position), the categories are:
  * `math` (Top)
  * `chemistry` (Top-Right)
  * `biology` (Right)
  * `logic` (Bottom-Right)
  * `coding` (Bottom)
  * `medicine` (Bottom-Left)
  * `physics` (Top-Left)
* **Scale:** The numerical scale is explicitly printed along the horizontal axis pointing towards `biology`. It starts at the center and moves outward.
  * Markers: `0`, `0.1`, `0.2`, `0.3`, `0.4`, `0.5`, `0.6`, `0.7`.
  * The grid consists of seven concentric white heptagons set against a light blue circular background. Each heptagon corresponds to an increment of 0.1.
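Because the seven axes divide the circle into equal segments, neighboring axes are 360/7 ≈ 51.4 degrees apart. The sketch below converts a score on a given axis into Cartesian coordinates. It assumes the axes are numbered clockwise from the 12 o'clock position in the order listed above; the helper names `axis_angle_deg` and `vertex` are hypothetical.

```python
import math

CATEGORIES = ["math", "chemistry", "biology", "logic", "coding", "medicine", "physics"]


def axis_angle_deg(index, n_axes=len(CATEGORIES)):
    """Clockwise angle of axis `index` measured from 12 o'clock, in degrees."""
    return 360.0 * index / n_axes


def vertex(category, score):
    """Cartesian (x, y) of `score` on the axis for `category`; the chart center is (0, 0)."""
    theta = math.radians(axis_angle_deg(CATEGORIES.index(category)))
    # Measuring clockwise from the vertical: x follows sin(theta), y follows cos(theta).
    return (score * math.sin(theta), score * math.cos(theta))


for i, name in enumerate(CATEGORIES):
    x, y = vertex(name, 0.7)  # a point on the outermost grid ring
    print(f"{name:>9}: angle={axis_angle_deg(i):6.1f} deg  x={x:+.3f}  y={y:+.3f}")
```

Connecting one model's seven vertices in order gives that model's polygon, and connecting the points at a fixed score gives one heptagonal grid ring.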

### Detailed Analysis

**Trend Verification & Visual Hierarchy:**

Visually, the polygons form a distinct nested hierarchy.

* The **Light Green polygon (O1-Preview)** is the outermost shape, encompassing almost all other lines, indicating the highest overall performance. It spikes significantly outward on the `math` and `coding` axes.

* The **Blue polygon (GPT-4-turbo)** is generally the second-largest shape, sitting just inside O1-Preview, except on the `medicine` axis where it slightly overtakes O1-Preview.

* The **Pink polygon (GLM-4)** forms the third layer, closely tracking just inside GPT-4-turbo.

* The **Dark Green polygon (Qwen2-72B)** forms the fourth layer.

* The **Brown polygon (DeepSeek-v2)** is the innermost shape, indicating the lowest relative scores among the group across all categories.

**Estimated Data Points:**

*Note: Values are approximate (±0.02) based on visual interpolation between the 0.1 grid lines.*

| Category | O1-Preview (Light Green) | GPT-4-turbo (Blue) | GLM-4 (Pink) | Qwen2-72B (Dark Green) | DeepSeek-v2 (Brown) |
| :--- | :--- | :--- | :--- | :--- | :--- |
| **math** | ~0.70 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |
| **chemistry** | ~0.60 | ~0.48 | ~0.50 | ~0.40 | ~0.38 |
| **biology** | ~0.60 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |
| **logic** | ~0.50 | ~0.38 | ~0.40 | ~0.38 | ~0.30 |
| **coding** | ~0.70 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |
| **medicine** | ~0.58 | ~0.60 | ~0.50 | ~0.40 | ~0.38 |
| **physics** | ~0.60 | ~0.50 | ~0.48 | ~0.40 | ~0.38 |
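The table can be reduced to a leader and a lead margin per category. This is a minimal sketch that assumes the approximate table values above; `TABLE` and `leader_and_margin` are illustrative names.

```python
# Column order of the table above.
MODELS = ["O1-Preview", "GPT-4-turbo", "GLM-4", "Qwen2-72B", "DeepSeek-v2"]

# One row per category, values in MODELS order (visual estimates).
TABLE = {
    "math": [0.70, 0.50, 0.48, 0.40, 0.38],
    "chemistry": [0.60, 0.48, 0.50, 0.40, 0.38],
    "biology": [0.60, 0.50, 0.48, 0.40, 0.38],
    "logic": [0.50, 0.38, 0.40, 0.38, 0.30],
    "coding": [0.70, 0.50, 0.48, 0.40, 0.38],
    "medicine": [0.58, 0.60, 0.50, 0.40, 0.38],
    "physics": [0.60, 0.50, 0.48, 0.40, 0.38],
}


def leader_and_margin(row, models):
    """Return (leading model, lead over the runner-up rounded to 2 decimals)."""
    ranked = sorted(zip(row, models), reverse=True)
    (top, name), (second, _) = ranked[0], ranked[1]
    return name, round(top - second, 2)


results = {category: leader_and_margin(row, MODELS) for category, row in TABLE.items()}
o1_leads = sum(1 for name, _ in results.values() if name == "O1-Preview")

for category, (name, margin) in results.items():
    print(f"{category}: {name} leads by {margin}")
print("categories led by O1-Preview:", o1_leads)
```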

### Key Observations

1. **O1-Preview's Dominance:** O1-Preview shows exceptional performance peaks in `math` and `coding`, reaching the maximum chart value of 0.7. It leads in 6 out of 7 categories.

2. **The Medicine Anomaly:** `medicine` is the only category where O1-Preview does not hold the top score. GPT-4-turbo peaks at ~0.60 here, slightly edging out O1-Preview (~0.58).

3. **Logic as a Weak Point:** All models show a relative dip in performance on the `logic` axis compared to their scores in other scientific domains. Even the leading model (O1-Preview) drops to ~0.50 here.

4. **Tight Clustering of Inner Models:** DeepSeek-v2 and Qwen2-72B exhibit very similar performance profiles, forming a tight inner cluster. Their shapes are nearly identical, with Qwen2-72B maintaining a consistent, slight lead over DeepSeek-v2 across all axes.
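Given the stated ±0.02 reading uncertainty, it is worth checking which of the differences noted above exceed that error. The sketch below treats each estimate as an interval of half-width 0.02 and calls two readings distinguishable only when their intervals do not overlap. That criterion and the helper name `distinguishable` are assumptions, not something stated in the source.

```python
TOLERANCE = 0.02  # half-width of the stated reading uncertainty


def distinguishable(a, b, tol=TOLERANCE):
    """True when the intervals [a - tol, a + tol] and [b - tol, b + tol] do not overlap."""
    return abs(a - b) > 2 * tol


medicine_gap = distinguishable(0.60, 0.58)  # GPT-4-turbo vs O1-Preview on medicine
math_gap = distinguishable(0.70, 0.50)      # O1-Preview vs GPT-4-turbo on math
inner_gap = distinguishable(0.40, 0.38)     # Qwen2-72B vs DeepSeek-v2 on most axes

print("medicine lead distinguishable:", medicine_gap)
print("math lead distinguishable:", math_gap)
print("inner-cluster lead distinguishable:", inner_gap)
```

Under this criterion, the 0.02 gaps on `medicine` and within the inner cluster fall inside the reading error, while the 0.20 gap on `math` does not.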

### Interpretation

This radar chart illustrates a comparative benchmark of advanced AI models on complex, reasoning-heavy STEM (Science, Technology, Engineering, and Mathematics) and logic tasks.

The data suggests a clear stratification in model capabilities. **O1-Preview** represents a significant leap in quantitative and algorithmic reasoning, evidenced by its massive advantage in math and coding. This aligns with the industry understanding of "O1" class models being specifically optimized for chain-of-thought reasoning and mathematical problem-solving.

**GPT-4-turbo** remains a highly capable generalist, holding a strong second place and demonstrating superior domain-specific knowledge in medicine.

The models **GLM-4**, **Qwen2-72B**, and **DeepSeek-v2** (which are notably prominent models originating from Chinese AI labs) show respectable, balanced performance but lag behind the top-tier proprietary models (O1 and GPT-4) in these specific, rigorous benchmarks. Their highly uniform, concentric shapes suggest they share similar architectural limitations or were trained on similar distributions of scientific data, resulting in a proportional scaling of capabilities rather than domain-specific spikes.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Radar Chart: Model Performance Across Disciplines

### Overview

This image presents a radar chart comparing the performance of five different models (DeepSeek-v2, GPT-4-turbo, O1-Preview, Qwen2-72B, and GLM-4) across six disciplines: math, chemistry, biology, logic, coding, and medicine. The performance is represented on a scale from 0 to 7.

### Components/Axes

* **Axes:** Six radial axes, each representing a discipline: math, chemistry, biology, logic, coding, and medicine. The axes are labeled clockwise starting from the top.

* **Scale:** The scale ranges from 0 to 7, marked at intervals of 1 along each axis.

* **Legend:** Located at the top-right of the chart, the legend maps colors to models:
  * DeepSeek-v2 (Orange)
  * GPT-4-turbo (Red)
  * O1-Preview (Light Green)
  * Qwen2-72B (Dark Green)
  * GLM-4 (Pink)

### Detailed Analysis

The chart displays a polygon for each model, representing its performance across the six disciplines. The further a polygon extends along an axis, the higher the model's performance in that discipline.

* **DeepSeek-v2 (Orange):** The polygon for DeepSeek-v2 shows moderate performance across all disciplines. It peaks at approximately 6.5 in math and chemistry, and dips to around 3 in medicine.

* **GPT-4-turbo (Red):** GPT-4-turbo exhibits a relatively consistent performance across all disciplines, ranging from approximately 4 to 6. It shows a slight peak in math and chemistry, around 6.

* **O1-Preview (Light Green):** O1-Preview demonstrates the highest overall performance, with values consistently above 6. It peaks at approximately 7 in math and chemistry, and maintains a value of around 6 in other disciplines.

* **Qwen2-72B (Dark Green):** Qwen2-72B shows a similar pattern to DeepSeek-v2, with moderate performance across all disciplines. It peaks at approximately 6 in math and chemistry, and dips to around 3 in medicine.

* **GLM-4 (Pink):** GLM-4 shows a relatively consistent performance across all disciplines, ranging from approximately 3 to 5. It shows a slight peak in math and chemistry, around 5.

* **Math:** O1-Preview and DeepSeek-v2 are the highest performers, both around 7. GPT-4-turbo and Qwen2-72B are around 6, and GLM-4 is around 5.

* **Chemistry:** O1-Preview and DeepSeek-v2 are the highest performers, both around 7. GPT-4-turbo and Qwen2-72B are around 6, and GLM-4 is around 5.

* **Biology:** O1-Preview is the highest performer, around 6.5. GPT-4-turbo and Qwen2-72B are around 5.5, and DeepSeek-v2 and GLM-4 are around 4.

* **Logic:** O1-Preview is the highest performer, around 6.5. GPT-4-turbo and Qwen2-72B are around 5.5, and DeepSeek-v2 and GLM-4 are around 4.

* **Coding:** O1-Preview is the highest performer, around 6.5. GPT-4-turbo and Qwen2-72B are around 5.5, and DeepSeek-v2 and GLM-4 are around 4.

* **Medicine:** O1-Preview is the highest performer, around 6. GPT-4-turbo and Qwen2-72B are around 5, and DeepSeek-v2 and GLM-4 are around 3.

### Key Observations

* O1-Preview consistently outperforms other models across all disciplines.

* DeepSeek-v2 and Qwen2-72B exhibit similar performance profiles.

* GLM-4 generally shows the lowest performance across all disciplines.

* All models perform best in math and chemistry, and worst in medicine.

### Interpretation

The radar chart effectively visualizes the relative strengths and weaknesses of each model across a diverse set of disciplines. O1-Preview emerges as the most versatile model, demonstrating strong performance in all areas. The consistent performance of GPT-4-turbo suggests a balanced skillset. The similarities between DeepSeek-v2 and Qwen2-72B indicate they may share underlying architectural or training characteristics. The lower scores of GLM-4 suggest it may require further development or specialization to compete with the other models. The consistent trend of higher scores in math and chemistry, and lower scores in medicine, could indicate a bias in the training data or a greater inherent difficulty in the medicine domain. This chart provides a valuable comparative analysis for selecting the most appropriate model for a given task or application.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Radar Chart: AI Model Performance Across Academic Subjects

### Overview

The image displays a radar chart (also known as a spider chart) comparing the performance of five different large language models (LLMs) across seven academic subject domains. The chart uses a radial layout where each axis represents a subject, and the distance from the center indicates a performance score, likely normalized between 0.0 and 0.7.

### Components/Axes

* **Chart Type:** Radar Chart.

* **Legend:** Positioned at the top-left of the chart. It contains five entries, each with a colored line and marker symbol:
  * **DeepSeek-v2:** Brown line with a circular marker.
  * **GPT-4-turbo:** Blue line with a circular marker.
  * **O1-Preview:** Light green line with a circular marker.
  * **Qwen2-72B:** Green line with a circular marker.
  * **GLM-4:** Pink line with a circular marker.
* **Axes (Subjects):** Seven axes radiate from the center, labeled clockwise from the top:
  1. math
  2. chemistry
  3. biology
  4. logic
  5. coding
  6. medicine
  7. physics
* **Scale:** Concentric circles represent the scoring scale. The innermost circle is labeled `0.0`. Moving outward, the circles are labeled `0.1`, `0.2`, `0.3`, `0.4`, `0.5`, `0.6`, and the outermost visible circle is `0.7`. The labels are rotated and placed along the "biology" axis.

### Detailed Analysis

Performance scores are approximate, estimated by the radial distance of each model's data point from the center along each subject axis.

**1. O1-Preview (Light Green):**

* **Trend:** This model forms the outermost polygon, indicating the highest overall performance. It shows a pronounced peak in `math` and strong performance in `physics` and `chemistry`.
* **Approximate Scores:**
  * math: ~0.68
  * chemistry: ~0.62
  * biology: ~0.55
  * logic: ~0.58
  * coding: ~0.60
  * medicine: ~0.52
  * physics: ~0.65

**2. GPT-4-turbo (Blue):**

* **Trend:** Generally the second-highest performer, forming a polygon just inside O1-Preview. It shows relatively balanced performance, with a slight dip in `medicine`.
* **Approximate Scores:**
  * math: ~0.58
  * chemistry: ~0.55
  * biology: ~0.52
  * logic: ~0.53
  * coding: ~0.57
  * medicine: ~0.48
  * physics: ~0.56

**3. Qwen2-72B (Green):**

* **Trend:** Performance is clustered in the middle range, often overlapping with or slightly inside GPT-4-turbo. It appears strongest in `coding` and `logic`.
* **Approximate Scores:**
  * math: ~0.52
  * chemistry: ~0.50
  * biology: ~0.48
  * logic: ~0.51
  * coding: ~0.54
  * medicine: ~0.47
  * physics: ~0.50

**4. DeepSeek-v2 (Brown):**

* **Trend:** Forms a polygon similar in size to Qwen2-72B but with a different shape. It shows a notable relative strength in `medicine` compared to its other scores.
* **Approximate Scores:**
  * math: ~0.50
  * chemistry: ~0.48
  * biology: ~0.47
  * logic: ~0.49
  * coding: ~0.51
  * medicine: ~0.52
  * physics: ~0.49

**5. GLM-4 (Pink):**

* **Trend:** This model forms the innermost polygon, indicating the lowest overall performance across all subjects in this comparison. Its scores are the most tightly clustered.
* **Approximate Scores:**
  * math: ~0.45
  * chemistry: ~0.44
  * biology: ~0.43
  * logic: ~0.44
  * coding: ~0.46
  * medicine: ~0.42
  * physics: ~0.44

### Key Observations

1. **Clear Performance Hierarchy:** There is a distinct layering of models, with O1-Preview consistently outermost, followed by GPT-4-turbo, then Qwen2-72B and DeepSeek-v2 in a middle tier, and GLM-4 innermost.

2. **Subject-Specific Strengths:** O1-Preview shows a significant advantage in `math` and `physics`. DeepSeek-v2's performance in `medicine` (~0.52) is an outlier relative to its own profile, matching O1-Preview and slightly exceeding GPT-4-turbo (~0.48) in that specific domain.

3. **Tight Clustering in Medicine:** The spread between the highest and lowest scores appears smallest on the `medicine` axis, suggesting more comparable performance among these models in that field.

4. **Balanced vs. Specialized Profiles:** GPT-4-turbo and GLM-4 show relatively balanced polygons. O1-Preview and DeepSeek-v2 show more pronounced peaks, indicating potential specialization.
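The clustering observation can be checked by computing, for each subject, the gap between the highest and lowest score. The following sketch assumes the approximate scores listed in this description; `SCORES` and `spread_per_subject` are illustrative names.

```python
SUBJECTS = ["math", "chemistry", "biology", "logic", "coding", "medicine", "physics"]

# Approximate scores from this description, in SUBJECTS order (visual estimates).
SCORES = {
    "O1-Preview": [0.68, 0.62, 0.55, 0.58, 0.60, 0.52, 0.65],
    "GPT-4-turbo": [0.58, 0.55, 0.52, 0.53, 0.57, 0.48, 0.56],
    "Qwen2-72B": [0.52, 0.50, 0.48, 0.51, 0.54, 0.47, 0.50],
    "DeepSeek-v2": [0.50, 0.48, 0.47, 0.49, 0.51, 0.52, 0.49],
    "GLM-4": [0.45, 0.44, 0.43, 0.44, 0.46, 0.42, 0.44],
}


def spread_per_subject(scores, subjects):
    """Return {subject: max score minus min score across models, rounded to 2 decimals}."""
    spreads = {}
    for i, subject in enumerate(subjects):
        column = [values[i] for values in scores.values()]
        spreads[subject] = round(max(column) - min(column), 2)
    return spreads


spreads = spread_per_subject(SCORES, SUBJECTS)
tightest = min(spreads, key=spreads.get)
widest = max(spreads, key=spreads.get)
print(spreads)
print("tightest:", tightest, "widest:", widest)
```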

### Interpretation

This radar chart provides a comparative snapshot of LLM capabilities across STEM and medical domains. The data suggests that **O1-Preview is the leading model in this evaluation**, demonstrating superior performance, particularly in quantitative and physical sciences. **GPT-4-turbo maintains a strong, consistent second-place position.**

The middle-tier competition between **Qwen2-72B** and **DeepSeek-v2** is nuanced; while Qwen2-72B may have a slight edge in logic and coding, DeepSeek-v2 appears more capable in medicine. This could indicate different training data emphases or architectural strengths.

**GLM-4**, while scoring lower across the board, shows a very consistent performance profile, which might indicate a different optimization strategy focused on breadth rather than peak performance in specific areas.

The chart effectively communicates that model selection should be task-dependent. For a math or physics-intensive application, O1-Preview is the clear choice. For medical applications, the gap between models narrows, and factors like efficiency or cost might become more decisive. The visualization underscores that no single model dominates every category by an equal margin, highlighting the importance of benchmarking for specific use cases.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Radar Chart: AI Model Performance Across Academic Disciplines

### Overview

The image is a radar chart comparing the performance of five AI models (DeepSeek-v2, GPT-4-turbo, O1-Preview, Qwen2-72B, GLM-4) across seven academic disciplines: math, chemistry, biology, logic, coding, medicine, and physics. The chart uses a circular layout with axes radiating from the center, each labeled with a subject. Data points are plotted for each model, with shaded areas indicating performance ranges.

### Components/Axes

- **Axes**:
  - **Subjects**: math (top), chemistry (top-right), biology (right), logic (bottom-right), coding (bottom), medicine (bottom-left), physics (left).
  - **Scale**: 0 to 0.7, with increments of 0.1.
- **Legend**:
  - **Colors**:
    - DeepSeek-v2: brown
    - GPT-4-turbo: blue
    - O1-Preview: light green
    - Qwen2-72B: dark green
    - GLM-4: pink
  - **Position**: Top-left of the chart.

### Detailed Analysis

- **Math**:
  - O1-Preview (0.7), Qwen2-72B (0.6), GPT-4-turbo (0.5), GLM-4 (0.4), DeepSeek-v2 (0.3).
- **Chemistry**:
  - O1-Preview (0.65), Qwen2-72B (0.55), GPT-4-turbo (0.5), GLM-4 (0.45), DeepSeek-v2 (0.35).
- **Biology**:
  - O1-Preview (0.6), Qwen2-72B (0.55), GPT-4-turbo (0.5), GLM-4 (0.5), DeepSeek-v2 (0.4).
- **Logic**:
  - O1-Preview (0.55), Qwen2-72B (0.5), GPT-4-turbo (0.45), GLM-4 (0.4), DeepSeek-v2 (0.35).
- **Coding**:
  - O1-Preview (0.7), Qwen2-72B (0.65), GPT-4-turbo (0.6), GLM-4 (0.55), DeepSeek-v2 (0.45).
- **Medicine**:
  - GPT-4-turbo (0.7), O1-Preview (0.6), Qwen2-72B (0.55), GLM-4 (0.5), DeepSeek-v2 (0.4).
- **Physics**:
  - O1-Preview (0.6), Qwen2-72B (0.6), GPT-4-turbo (0.55), GLM-4 (0.5), DeepSeek-v2 (0.45).
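The per-subject listing above lends itself to a simple lookup of the top-scoring model for a given discipline. This is a minimal sketch under the assumption that the values listed in this description are used as-is; `SCORES_BY_SUBJECT` and `top_models` are hypothetical names, and ties are returned together rather than broken arbitrarily.

```python
# Values as listed above, keyed by subject and then by model.
SCORES_BY_SUBJECT = {
    "math": {"O1-Preview": 0.7, "Qwen2-72B": 0.6, "GPT-4-turbo": 0.5, "GLM-4": 0.4, "DeepSeek-v2": 0.3},
    "chemistry": {"O1-Preview": 0.65, "Qwen2-72B": 0.55, "GPT-4-turbo": 0.5, "GLM-4": 0.45, "DeepSeek-v2": 0.35},
    "biology": {"O1-Preview": 0.6, "Qwen2-72B": 0.55, "GPT-4-turbo": 0.5, "GLM-4": 0.5, "DeepSeek-v2": 0.4},
    "logic": {"O1-Preview": 0.55, "Qwen2-72B": 0.5, "GPT-4-turbo": 0.45, "GLM-4": 0.4, "DeepSeek-v2": 0.35},
    "coding": {"O1-Preview": 0.7, "Qwen2-72B": 0.65, "GPT-4-turbo": 0.6, "GLM-4": 0.55, "DeepSeek-v2": 0.45},
    "medicine": {"GPT-4-turbo": 0.7, "O1-Preview": 0.6, "Qwen2-72B": 0.55, "GLM-4": 0.5, "DeepSeek-v2": 0.4},
    "physics": {"O1-Preview": 0.6, "Qwen2-72B": 0.6, "GPT-4-turbo": 0.55, "GLM-4": 0.5, "DeepSeek-v2": 0.45},
}


def top_models(subject, table=SCORES_BY_SUBJECT):
    """Return every model tied for the highest score in `subject`, sorted by name."""
    row = table[subject]
    best = max(row.values())
    return sorted(model for model, score in row.items() if score == best)


for subject in SCORES_BY_SUBJECT:
    print(f"{subject}: {', '.join(top_models(subject))}")
```

Returning a list keeps ties visible, such as the shared top score on `physics`.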

### Key Observations

1. **O1-Preview** dominates in **math** (0.7) and **coding** (0.7), with strong performance in **physics** (0.6) and **chemistry** (0.65).

2. **GPT-4-turbo** excels in **medicine** (0.7) and has balanced performance across other subjects.

3. **Qwen2-72B** performs well in **coding** (0.65) and **physics** (0.6), with moderate scores in other areas.

4. **GLM-4** ranks fourth in most subjects, including **math** (0.4) and **coding** (0.55), but matches GPT-4-turbo in **biology** (0.5).

5. **DeepSeek-v2** is the weakest across all subjects, with the lowest score in **math** (0.3).

### Interpretation

The chart highlights the **specialization** of AI models in specific disciplines. O1-Preview demonstrates the broadest competence, particularly in math and coding, suggesting it may be optimized for general-purpose problem-solving. GPT-4-turbo’s peak in medicine indicates a focus on biomedical or clinical applications. Qwen2-72B’s strength in coding and physics aligns with technical or computational tasks. GLM-4’s lower scores across most subjects suggest limitations in general knowledge, though it performs adequately in biology. DeepSeek-v2’s consistently low performance raises questions about its training data or architecture.

The data implies that **model selection** should depend on the target discipline: O1-Preview for math/coding, GPT-4-turbo for medicine, and Qwen2-72B for technical fields. GLM-4 and DeepSeek-v2 may require further refinement for broader applicability.
