## Diagram: Proof of Thought

### Overview

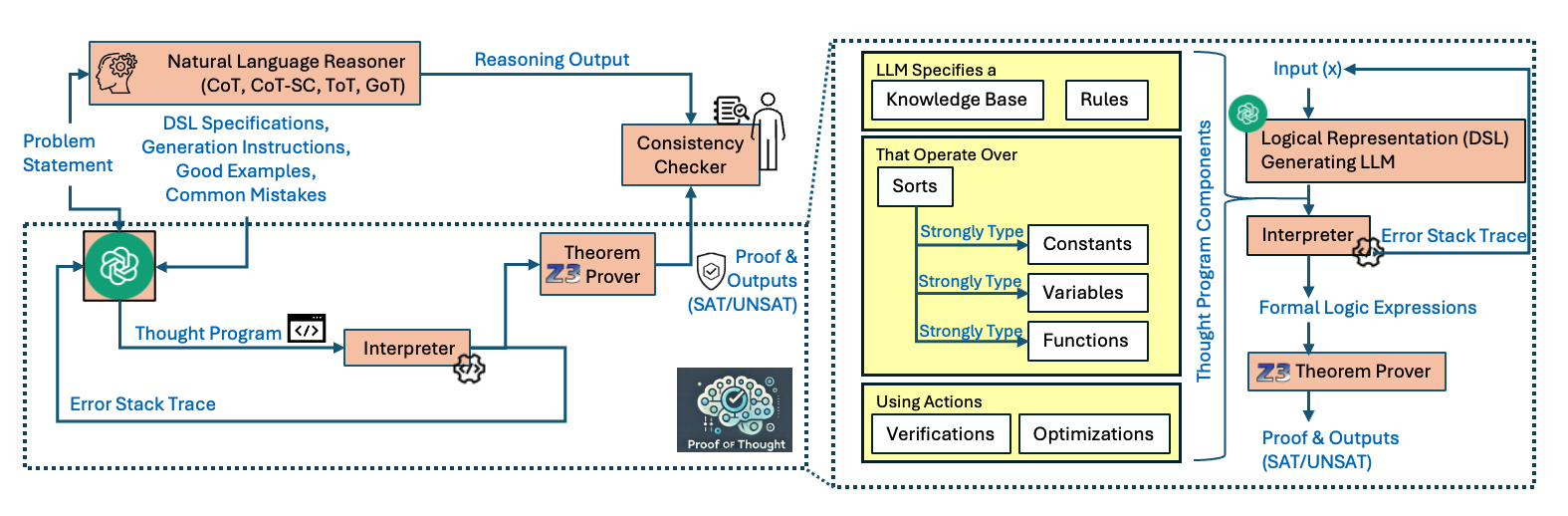

The image presents two interconnected diagrams illustrating a "Proof of Thought" process. The left diagram outlines a system where a natural language reasoner generates a thought program, which is then checked for consistency and verified by a theorem prover. The right diagram details the components of the thought program itself, including its knowledge base, rules, data types, and actions.

### Components/Axes

**Left Diagram:**

* **Nodes:**

    * Problem Statement

    * Natural Language Reasoner (CoT, CoT-SC, ToT, GoT)

    * DSL Specifications, Generation Instructions, Good Examples, Common Mistakes

    * Consistency Checker

    * Z3 Theorem Prover

    * Thought Program

    * Interpreter

    * Proof & Outputs (SAT/UNSAT)

    * Proof of Thought (image of brain with checkmark)

* **Edges:** Arrows indicating the flow of information between components.

* **Labels:** Text descriptions associated with each node and edge.

**Right Diagram:**

* **Nodes:**

    * LLM Specifies a: Knowledge Base, Rules

    * That Operate Over: Sorts, Strongly Typed Constants, Strongly Typed Variables, Strongly Typed Functions

    * Using Actions: Verifications, Optimizations

    * Logical Representation (DSL) Generating LLM

    * Interpreter

    * Formal Logic Expressions

    * Z3 Theorem Prover

    * Proof & Outputs (SAT/UNSAT)

* **Labels:** Text descriptions associated with each node and edge.

* **Grouping:** "Thought Program Components" label encompassing the nodes related to the thought program's structure.
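
The "Thought Program Components" grouping can be read as a data structure. The following is a minimal Python sketch of that reading. All class and field names (`ThoughtProgram`, `TypedSymbol`, `TypedFunction`, `undeclared_sorts`) are hypothetical and chosen for illustration; they are not taken from the diagram or from any real DSL.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TypedSymbol:
    """A constant or variable tagged with its sort (hypothetical name)."""
    name: str
    sort: str


@dataclass(frozen=True)
class TypedFunction:
    """A function with a typed signature, e.g. is_mortal: Person -> Bool."""
    name: str
    domain: tuple
    codomain: str


@dataclass
class ThoughtProgram:
    """Illustrative container mirroring the component groups in the diagram."""
    sorts: list = field(default_factory=list)
    constants: list = field(default_factory=list)
    variables: list = field(default_factory=list)
    functions: list = field(default_factory=list)
    knowledge_base: list = field(default_factory=list)  # facts, as DSL strings
    rules: list = field(default_factory=list)           # rules, as DSL strings
    verifications: list = field(default_factory=list)   # claims to check
    optimizations: list = field(default_factory=list)   # objectives to optimize

    def undeclared_sorts(self) -> set:
        """Return sorts used by symbols or functions but never declared."""
        used = {s.sort for s in self.constants + self.variables}
        for f in self.functions:
            used.update(f.domain)
            used.add(f.codomain)
        return used - set(self.sorts)


program = ThoughtProgram(
    sorts=["Person"],
    constants=[TypedSymbol("socrates", "Person")],
    functions=[TypedFunction("is_mortal", ("Person",), "Bool")],
)
missing = program.undeclared_sorts()  # {"Bool"}: used by is_mortal, not declared
```

A check like `undeclared_sorts` is one example of what strong typing makes possible before any prover is involved: a malformed program can be rejected early.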

### Detailed Analysis

**Left Diagram:**

1. **Problem Statement:** The process begins with a problem statement.

2. **Natural Language Reasoner:** The problem statement is fed into a natural language reasoner, which uses techniques like Chain of Thought (CoT), Chain of Thought Self-Consistency (CoT-SC), Tree of Thoughts (ToT), and Graph of Thoughts (GoT). The reasoner also uses DSL Specifications, Generation Instructions, Good Examples, and Common Mistakes.

3. **Reasoning Output:** The reasoner generates a reasoning output.

4. **Consistency Checker:** The reasoning output is passed to a consistency checker.

5. **Thought Program:** The natural language reasoner generates a thought program.

6. **Interpreter:** The thought program is processed by an interpreter.

7. **Theorem Prover:** The interpreter's output is fed into a theorem prover (Z3 Prover).

8. **Proof & Outputs:** The theorem prover generates proofs and outputs (SAT/UNSAT).

9. **Error Stack Trace:** Both the interpreter and the theorem prover can generate error stack traces, which are fed back into the thought program.

10. **Proof of Thought:** The final output is a "Proof of Thought," represented by an image of a brain with a checkmark.
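
The flow above, including the error feedback in step 9, can be sketched as a retry loop. This is a minimal Python sketch under stated assumptions: the function names (`prove_with_feedback`, `toy_generate`, `toy_interpret_and_prove`), the `InterpreterError` exception, and the choice to hand the trace back to the generator are all hypothetical, and the toy stand-ins replace the real LLM, interpreter, and Z3.

```python
from typing import Callable, Optional


class InterpreterError(Exception):
    """Raised by the (hypothetical) interpreter when a program is malformed."""


def prove_with_feedback(
    problem: str,
    generate: Callable[[str, Optional[str]], str],
    interpret_and_prove: Callable[[str], str],
    max_attempts: int = 3,
) -> Optional[str]:
    """Generate a thought program, run it, and retry with the error trace.

    Returns "SAT" or "UNSAT" on success, or None if every attempt failed.
    """
    error_trace: Optional[str] = None
    for _ in range(max_attempts):
        program = generate(problem, error_trace)  # reasoner sees the last trace
        try:
            return interpret_and_prove(program)   # interpreter, then prover
        except InterpreterError as exc:
            error_trace = str(exc)                # fed back into the next attempt
    return None


# Toy stand-ins so the sketch runs end to end.
def toy_generate(problem: str, error_trace: Optional[str]) -> str:
    # The first attempt is deliberately broken; the retry "repairs" it.
    return "BROKEN" if error_trace is None else "p and not p"


def toy_interpret_and_prove(program: str) -> str:
    if program == "BROKEN":
        raise InterpreterError("unknown token 'BROKEN'")
    return "UNSAT" if program == "p and not p" else "SAT"


result = prove_with_feedback(
    "Is 'p and not p' satisfiable?", toy_generate, toy_interpret_and_prove
)
# result is "UNSAT": the first attempt fails, the second succeeds.
```

Capping the number of attempts keeps the loop from running indefinitely when the generator cannot repair its own output.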

**Right Diagram:**

1. **LLM Specification:** The LLM specifies a knowledge base and rules.

2. **Data Types:** The knowledge base and rules operate over sorts, strongly typed constants, strongly typed variables, and strongly typed functions.

3. **Actions:** The program exercises these through actions such as verifications and optimizations.

4. **Logical Representation:** A DSL-generating LLM turns the input (x) into a logical representation (DSL).

5. **Interpreter:** The logical representation is processed by an interpreter.

6. **Formal Logic Expressions:** The interpreter generates formal logic expressions.

7. **Theorem Prover:** The formal logic expressions are fed into a theorem prover (Z3 Theorem Prover).

8. **Proof & Outputs:** The theorem prover generates proofs and outputs (SAT/UNSAT).

9. **Error Stack Trace:** Both the interpreter and the theorem prover can generate error stack traces, which are fed back into the logical representation.
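
To make the SAT/UNSAT output concrete, here is a small Python sketch of one way a verification could be checked. It rests on several assumptions: the program is reduced to propositional facts and implications, the brute-force `satisfiable` function is only a stand-in for Z3, and a claim counts as verified when the knowledge base plus the negated claim is unsatisfiable. The diagram does not state how SAT and UNSAT map to answers, so this refutation-style convention is an illustrative choice, and all names are hypothetical.

```python
from itertools import product

# Hypothetical propositional thought program: facts and (premise, conclusion) rules.
facts = ["human_socrates"]
rules = [("human_socrates", "mortal_socrates")]


def satisfiable(atoms, constraints):
    """Brute-force check: try every truth assignment (stand-in for a prover)."""
    for values in product([False, True], repeat=len(atoms)):
        model = dict(zip(atoms, values))
        if all(check(model) for check in constraints):
            return True
    return False


def verify(claim, facts, rules):
    """Return "UNSAT" when the facts and rules plus the negated claim have no model."""
    atoms = sorted({claim, *facts, *(a for rule in rules for a in rule)})
    constraints = [lambda m, f=f: m[f] for f in facts]           # facts hold
    constraints += [lambda m, p=p, c=c: (not m[p]) or m[c]       # p implies c
                    for p, c in rules]
    constraints.append(lambda m: not m[claim])                   # negated claim
    return "SAT" if satisfiable(atoms, constraints) else "UNSAT"


entailed = verify("mortal_socrates", facts, rules)      # "UNSAT": claim follows
not_entailed = verify("immortal_socrates", facts, rules)  # "SAT": counterexample exists
```

Enumeration is exponential in the number of atoms, which is one reason a real pipeline would hand the formal logic expressions to a dedicated solver instead.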

### Key Observations

* The diagram illustrates a closed-loop system where the output of the theorem prover and interpreter can influence the thought program and logical representation through error stack traces.

* The right diagram provides a detailed breakdown of the components that make up the thought program.

* The use of a theorem prover (Z3) suggests a focus on formal verification and logical reasoning.

### Interpretation

The diagram presents a framework for "Proof of Thought," which involves using natural language reasoning, formal verification, and error feedback to generate and validate thought programs. The system leverages LLMs to create logical representations that can be rigorously checked for consistency and correctness. The inclusion of error stack traces allows for iterative refinement of the thought program, potentially leading to more robust and reliable reasoning processes. The diagram highlights the importance of combining natural language understanding with formal methods to achieve verifiable AI systems.