## Line Chart: Ablation study of buffer-manager -- Time

### Overview

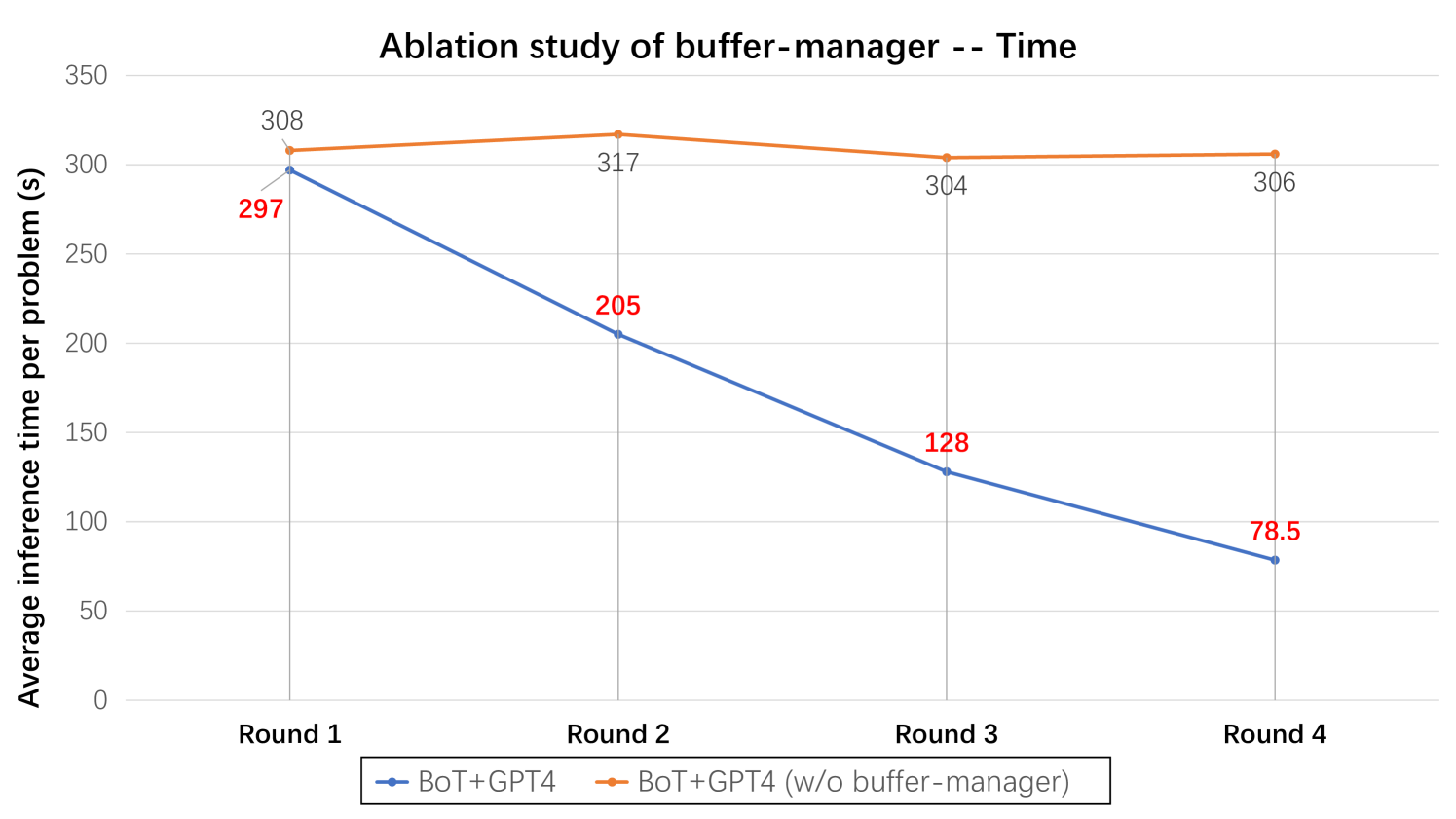

The image is a line chart comparing the average inference time per problem (in seconds) over four rounds for two configurations: "BoT+GPT4" and "BoT+GPT4 (w/o buffer-manager)". The x-axis represents the round number (1 to 4), and the y-axis represents the average inference time in seconds, ranging from 0 to 350. The chart aims to show the impact of the buffer-manager on the inference time.

### Components/Axes

* **Title:** Ablation study of buffer-manager -- Time
* **X-axis:**
  * Label: Round
  * Ticks: Round 1, Round 2, Round 3, Round 4
* **Y-axis:**
  * Label: Average inference time per problem (s)
  * Scale: 0 to 350, in increments of 50 (0, 50, 100, 150, 200, 250, 300, 350)
* **Legend:** Located at the bottom of the chart.
  * Blue line: BoT+GPT4
  * Orange line: BoT+GPT4 (w/o buffer-manager)

### Detailed Analysis

* **BoT+GPT4 (Blue Line):**
  * Trend: The average inference time decreases steadily over the four rounds.
  * Round 1: 297 seconds
  * Round 2: 205 seconds
  * Round 3: 128 seconds
  * Round 4: 78.5 seconds
* **BoT+GPT4 (w/o buffer-manager) (Orange Line):**
  * Trend: The average inference time stays roughly flat, between 304 and 317 seconds. It rises from Round 1 to Round 2, dips in Round 3, and rises slightly again in Round 4.
  * Round 1: 308 seconds
  * Round 2: 317 seconds
  * Round 3: 304 seconds
  * Round 4: 306 seconds
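To make the per-round difference between the two configurations explicit, the following minimal Python sketch tabulates the values listed above and computes, for each round, the seconds saved by keeping the buffer-manager and the ratio of the two times. The function name `per_round_comparison` is a hypothetical helper written for this description, not part of BoT.

```python
# Average inference time per problem (s), as listed in the Detailed Analysis.
ROUNDS = [1, 2, 3, 4]
with_manager = [297.0, 205.0, 128.0, 78.5]       # BoT+GPT4 (blue line)
without_manager = [308.0, 317.0, 304.0, 306.0]   # BoT+GPT4 (w/o buffer-manager) (orange line)


def per_round_comparison(with_bm, without_bm):
    """Hypothetical helper: return (gap in seconds, speedup ratio) for each round."""
    rows = []
    for w, wo in zip(with_bm, without_bm):
        gap = wo - w        # seconds saved per problem when the buffer-manager is enabled
        speedup = wo / w    # how many times faster the run with the buffer-manager is
        rows.append((gap, speedup))
    return rows


for rnd, (gap, speedup) in zip(ROUNDS, per_round_comparison(with_manager, without_manager)):
    print(f"Round {rnd}: gap = {gap:6.1f} s, speedup = {speedup:.2f}x")
```

The gap grows from 11 s in Round 1 to 227.5 s in Round 4, and the ratio rises every round, which is the pattern described in the observations that follow.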

### Key Observations

* The "BoT+GPT4" configuration has a lower average inference time than the "BoT+GPT4 (w/o buffer-manager)" configuration in every round. The gap is small in Round 1 (297 s vs. 308 s) and widens to about 228 s by Round 4 (78.5 s vs. 306 s).

* The "BoT+GPT4" configuration's inference time falls from 297 s in Round 1 to 78.5 s in Round 4, a reduction of roughly 74%, which is consistent with a learning or optimization effect across rounds.

* The "BoT+GPT4 (w/o buffer-manager)" configuration's inference time stays relatively stable, between 304 s and 317 s, and remains higher across all rounds.
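The trend claims above can be checked with a short calculation of each series' change relative to Round 1. This is a minimal sketch using the values from the Detailed Analysis; `reduction_from_first_round` is a hypothetical helper written for this description.

```python
# Average inference time per problem (s), as listed in the Detailed Analysis.
bot_gpt4 = [297.0, 205.0, 128.0, 78.5]           # BoT+GPT4
bot_gpt4_no_bm = [308.0, 317.0, 304.0, 306.0]    # BoT+GPT4 (w/o buffer-manager)


def reduction_from_first_round(series):
    """Hypothetical helper: percent reduction relative to Round 1 (positive = faster, negative = slower)."""
    baseline = series[0]
    return [100.0 * (baseline - t) / baseline for t in series]


for name, series in [("BoT+GPT4", bot_gpt4), ("w/o buffer-manager", bot_gpt4_no_bm)]:
    pct = reduction_from_first_round(series)
    print(name, ", ".join(f"{p:+.1f}%" for p in pct))
```

With these values, BoT+GPT4 is about 74% faster by Round 4 than in Round 1, while the ablated configuration stays within roughly 3% of its Round 1 time.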

### Interpretation

The data suggest that the buffer-manager substantially reduces the inference time of the "BoT+GPT4" configuration, and that its benefit grows with the number of rounds: the gap widens from about 11 s in Round 1 to about 228 s in Round 4. Because the time falls steadily only when the buffer-manager is enabled, while the configuration without it stays roughly constant, the reduction appears to depend on the buffer-manager improving the process from one round to the next. The chart does not show the mechanism; one plausible explanation is that the buffer-manager caches results and so avoids redundant computation.