## Line Chart: Ablation study of buffer-manager -- Time

### Overview

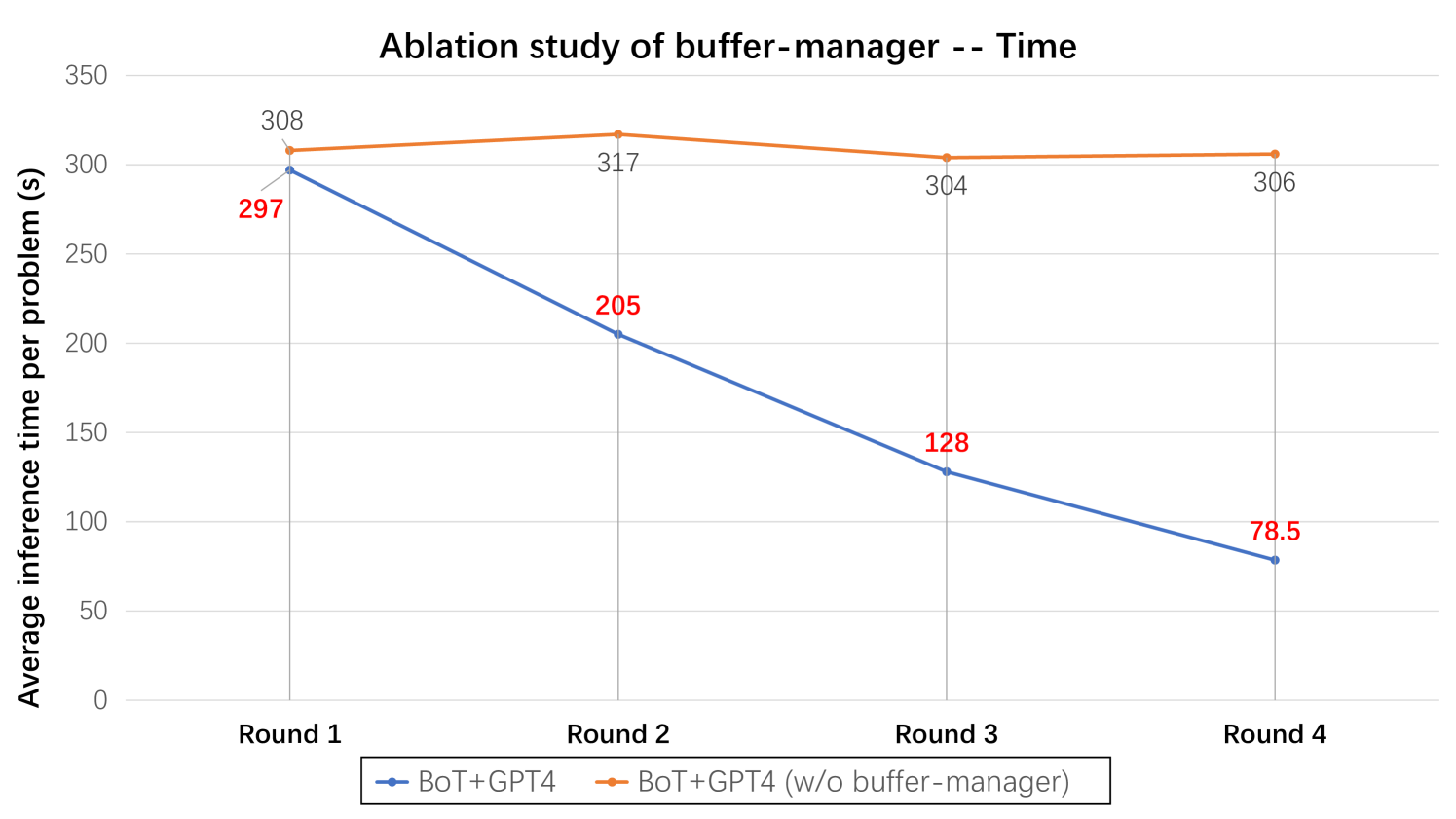

This line chart presents the results of an ablation study examining the impact of a "buffer-manager" on inference time per problem. It compares two configurations: one *with* the buffer-manager (BoT+GPT4, represented by a blue line) and one *without* the buffer-manager (BoT+GPT4 (w/o buffer-manager), represented by an orange line) across four rounds. The y-axis represents the average inference time in seconds, while the x-axis represents the round number.

### Components/Axes

* **Title:** "Ablation study of buffer-manager -- Time" (centered at the top)

* **X-axis Label:** "Round" (centered at the bottom)

    * **Markers:** Round 1, Round 2, Round 3, Round 4 (equally spaced)

* **Y-axis Label:** "Average inference time per problem (s)" (left side, vertical)

    * **Scale:** 0 to 350 seconds, with increments of 50.

* **Legend:** Located at the bottom-center of the chart.

    * **BoT+GPT4:** Blue line

    * **BoT+GPT4 (w/o buffer-manager):** Orange line

### Detailed Analysis

**BoT+GPT4 (Blue Line):**

The blue line representing BoT+GPT4 exhibits a strong downward trend. It starts at approximately 297 seconds in Round 1 and steadily decreases to approximately 78.5 seconds in Round 4.

* Round 1: ~297 seconds

* Round 2: ~205 seconds

* Round 3: ~128 seconds

* Round 4: ~78.5 seconds

**BoT+GPT4 (w/o buffer-manager) (Orange Line):**

The orange line representing BoT+GPT4 without the buffer-manager shows a relatively flat trend, with slight fluctuations. It begins at approximately 308 seconds in Round 1 and ends at approximately 306 seconds in Round 4.

* Round 1: ~308 seconds

* Round 2: ~317 seconds

* Round 3: ~304 seconds

* Round 4: ~306 seconds
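The trends described above can be quantified with a short sketch. The values are the approximate readings listed for each round; the variable and function names are illustrative assumptions, not taken from the original study.

```python
# Approximate readings from the chart, in seconds per problem (Rounds 1-4).
with_bm = [297, 205, 128, 78.5]      # BoT+GPT4 (blue line)
without_bm = [308, 317, 304, 306]    # BoT+GPT4 w/o buffer-manager (orange line)


def percent_reduction(series):
    """Relative drop from the first to the last round, in percent."""
    first, last = series[0], series[-1]
    return (first - last) / first * 100


reduction_with = percent_reduction(with_bm)
reduction_without = percent_reduction(without_bm)

print(f"with buffer-manager:    {reduction_with:.1f}% reduction")
print(f"without buffer-manager: {reduction_without:.1f}% reduction")
```

With these readings, the blue line falls by roughly 74% from Round 1 to Round 4, while the orange line changes by less than 1%.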

### Key Observations

* The inference time for BoT+GPT4 *decreases significantly* across the four rounds, indicating improved performance with each round.

* The inference time for BoT+GPT4 without the buffer-manager remains relatively constant across the four rounds.

* In all four rounds, the inference time for BoT+GPT4 (with buffer-manager) is *lower* than that of BoT+GPT4 (without buffer-manager).

* The difference in inference time between the two configurations widens every round: it is smallest in Round 1 (approximately 11 seconds) and largest in Round 4 (approximately 227.5 seconds).
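As a quick check on the per-round gap between the two configurations, the following sketch subtracts the approximate readings reported in the Detailed Analysis. The names used are illustrative assumptions.

```python
# Approximate readings from the chart, in seconds per problem.
rounds = [1, 2, 3, 4]
with_bm = [297, 205, 128, 78.5]      # BoT+GPT4
without_bm = [308, 317, 304, 306]    # BoT+GPT4 (w/o buffer-manager)

# Gap = time without the buffer-manager minus time with it.
gaps = [wo - w for wo, w in zip(without_bm, with_bm)]

for r, gap in zip(rounds, gaps):
    print(f"Round {r}: gap of about {gap} s")

smallest_round = rounds[gaps.index(min(gaps))]
largest_round = rounds[gaps.index(max(gaps))]
```

On these readings the gap grows in every round, so the smallest gap falls in Round 1 and the largest in Round 4.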

### Interpretation

The data suggest that the buffer-manager substantially reduces the inference time of BoT+GPT4. The two configurations start close together in Round 1, so the benefit is not immediate. Instead, inference time *with* the buffer-manager falls in every round, which indicates that the component becomes more effective as the process iterates. Inference time *without* the buffer-manager stays roughly stable, which suggests that this configuration does not benefit from the iterative process in the same way.

The ablation study therefore supports the value of the buffer-manager component. The growing difference in inference time, largest in the later rounds, points to substantial gains from including it. Without the buffer-manager, inference time plateaus at just over 300 seconds per problem and shows no improvement across rounds.