## Line Chart: Ablation Study of Buffer-Manager -- Time

### Overview

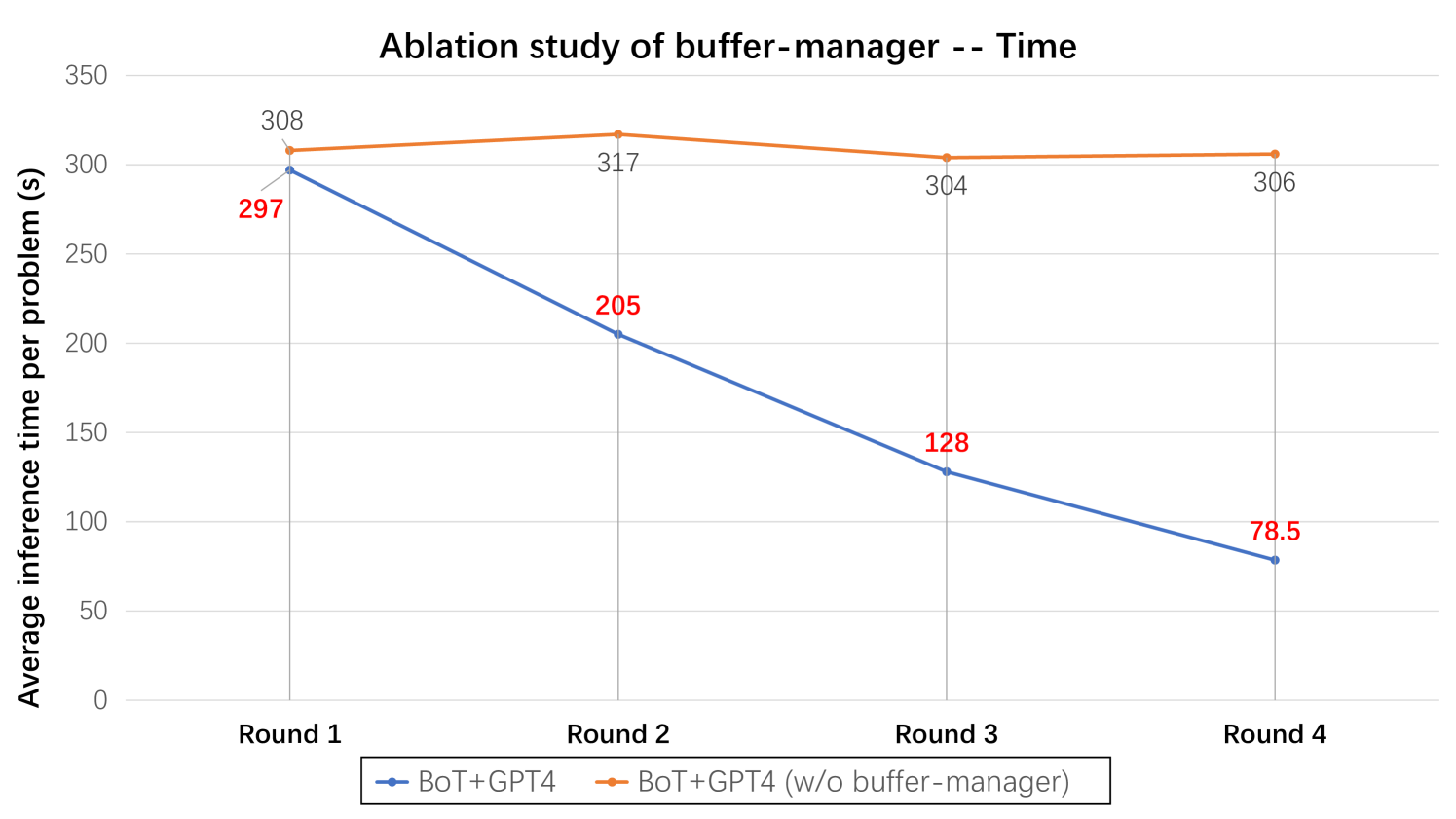

This image is a line chart presenting the results of an ablation study. It compares the average inference time per problem across four sequential rounds for two system configurations: one with a "buffer-manager" component and one without. The configuration with the buffer-manager gets faster every round, while the one without it stays roughly constant at just over 300 seconds.

### Components/Axes

* **Chart Title:** "Ablation study of buffer-manager -- Time"

* **Y-Axis:**

    * **Label:** "Average inference time per problem (s)"

    * **Scale:** Linear scale from 0 to 350 seconds, with major gridlines at intervals of 50 seconds (0, 50, 100, 150, 200, 250, 300, 350).

* **X-Axis:**

    * **Label:** None shown; the category names imply sequential rounds or iterations.

    * **Categories:** "Round 1", "Round 2", "Round 3", "Round 4".

* **Legend:** Located at the bottom center of the chart.

    * **Blue Line with Square Marker:** "BoT+GPT4" (This is the configuration *with* the buffer-manager).

    * **Orange Line with Diamond Marker:** "BoT+GPT4 (w/o buffer-manager)" (This is the configuration *without* the buffer-manager).

### Detailed Analysis

The chart plots two data series, with exact values labeled at each data point.

**Data Series 1: BoT+GPT4 (Blue Line)**

* **Trend:** Shows a strong, consistent downward slope from Round 1 to Round 4.

* **Data Points:**

    * Round 1: 297 seconds

    * Round 2: 205 seconds

    * Round 3: 128 seconds

    * Round 4: 78.5 seconds

**Data Series 2: BoT+GPT4 (w/o buffer-manager) (Orange Line)**

* **Trend:** Remains relatively flat and stable across all rounds, with a slight peak in Round 2.

* **Data Points:**

    * Round 1: 308 seconds

    * Round 2: 317 seconds

    * Round 3: 304 seconds

    * Round 4: 306 seconds
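
The trend descriptions above can be checked directly from the labeled values. The following is a minimal sketch, not part of the original study: the variable names and the `round_deltas` helper are illustrative assumptions, and only the eight numbers come from the chart.

```python
# Values read from the chart labels (seconds per problem, Rounds 1-4).
with_bm = [297.0, 205.0, 128.0, 78.5]       # "BoT+GPT4"
without_bm = [308.0, 317.0, 304.0, 306.0]   # "BoT+GPT4 (w/o buffer-manager)"


def round_deltas(values):
    """Return the change between each pair of consecutive rounds."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


deltas_with = round_deltas(with_bm)        # [-92.0, -77.0, -49.5]
deltas_without = round_deltas(without_bm)  # [9.0, -13.0, 2.0]

# Total range covered by the series without the buffer-manager.
spread_without = max(without_bm) - min(without_bm)  # 13.0

print(deltas_with, deltas_without, spread_without)
```

Every delta for the blue series is negative, which matches the downward trend, while the orange series moves within a 13-second band.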

### Key Observations

1. **Performance Divergence:** The two configurations start with similar performance in Round 1 (297s vs. 308s), then diverge sharply from Round 2 onward.

2. **Improvement Trend:** The "BoT+GPT4" system (with buffer-manager) exhibits a significant and continuous improvement in speed, reducing its average inference time by approximately 73.6% from Round 1 to Round 4 (from 297s to 78.5s).

3. **Stagnation Trend:** The system without the buffer-manager shows no meaningful improvement. Its performance fluctuates slightly around a high baseline of approximately 309 seconds (average of the four points).

4. **Widening Gap, No Crossover:** The lines never cross. The configuration with the buffer-manager is already 11s faster in Round 1 (297s vs. 308s), and the gap grows to 112s in Round 2 (205s vs. 317s), 176s in Round 3, and 227.5s in Round 4.
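
The figures quoted in these observations follow from simple arithmetic on the labeled data points. A short sketch for reproducing them is given here; the variable names are illustrative assumptions, and the Round 4 speedup ratio is a derived quantity that the chart itself does not state.

```python
from statistics import mean

# Values read from the chart labels (seconds per problem, Rounds 1-4).
with_bm = [297.0, 205.0, 128.0, 78.5]       # "BoT+GPT4"
without_bm = [308.0, 317.0, 304.0, 306.0]   # "BoT+GPT4 (w/o buffer-manager)"

# Relative reduction for the buffer-manager configuration, Round 1 -> Round 4.
reduction_pct = (with_bm[0] - with_bm[-1]) / with_bm[0] * 100

# Average of the four points for the configuration without the buffer-manager.
baseline = mean(without_bm)

# Per-round gap: time without the buffer-manager minus time with it.
gaps = [wo - w for w, wo in zip(with_bm, without_bm)]

# Derived ratio (not shown on the chart): how many times faster in Round 4.
speedup_round4 = without_bm[-1] / with_bm[-1]

print(f"reduction: {reduction_pct:.1f}%")        # reduction: 73.6%
print(f"baseline:  {baseline:.2f} s")            # baseline:  308.75 s
print(f"gaps:      {gaps}")                      # gaps:      [11.0, 112.0, 176.0, 227.5]
print(f"speedup:   {speedup_round4:.1f}x")       # speedup:   3.9x
```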

### Interpretation

This ablation study provides strong evidence for the efficacy of the "buffer-manager" component within the "BoT+GPT4" system architecture.

* **What the data suggests:** The buffer-manager is not merely an incremental improvement; it appears to be the component that drives performance gains over successive rounds. Without it, inference time stays high and shows no sign of improving from round to round.

* **How elements relate:** The x-axis (Rounds) likely represents iterative problem-solving or learning cycles. The y-axis measures time per problem, so lower is better. Because the ablation removes only the buffer-manager, the contrast between the two lines points to that component as the source of the efficiency gains, although the chart shows no error bars or run counts. The blue line's steep negative slope is consistent with effective optimization or caching; the orange line's flatness is consistent with its absence.

* **Notable Anomalies:** The slight peak for the orange line at Round 2 (317s) is a minor anomaly but does not change the overall narrative of stagnation. The most striking "anomaly" is the sheer magnitude of the performance gap that opens up by Round 4 (78.5s vs. 306s), highlighting the component's importance.

* **Underlying Implication:** The study implies that the buffer-manager enables the system to retain and leverage information or state from previous rounds, leading to faster solutions in subsequent rounds. Without it, each round is treated as a largely independent, and therefore slower, computation. If the trend holds beyond four rounds, the cost per problem would keep falling as the system processes more rounds, which matters for scalability and practical deployment.