## Line Chart: Ablation study of buffer-manager -- Time

### Overview

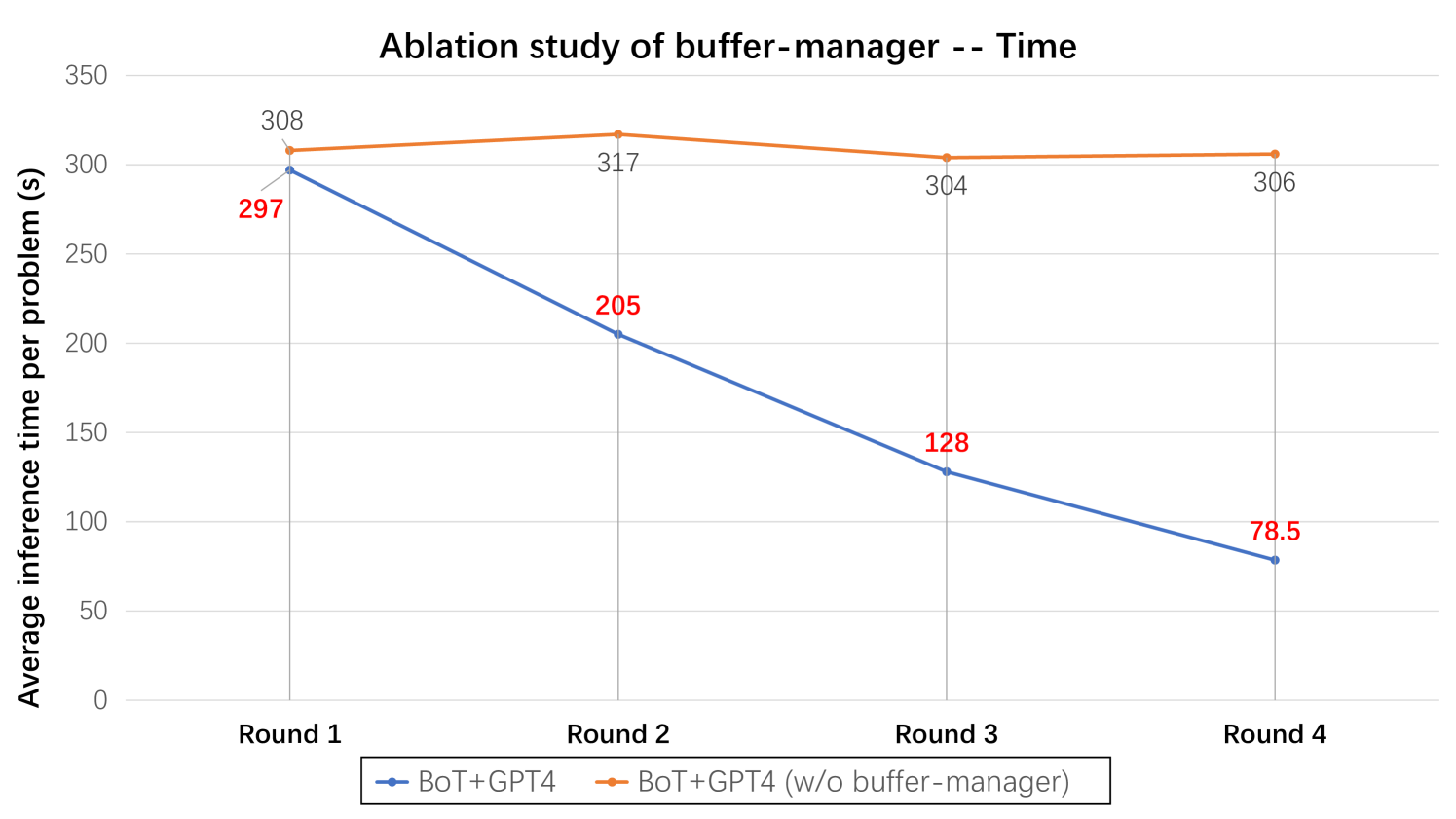

The chart compares the average inference time (in seconds) per problem across four rounds for two configurations: "BoT+GPT4" (blue line) and "BoT+GPT4 (w/o buffer-manager)" (orange line). The y-axis ranges from 0 to 350 seconds, and the x-axis represents four sequential rounds.

### Components/Axes

- **X-axis (Rounds)**: Labeled "Round 1," "Round 2," "Round 3," and "Round 4" at equal intervals.

- **Y-axis (Average Inference Time)**: Scaled from 0 to 350 seconds in increments of 50.

- **Legend**: Positioned at the bottom center, with:

  - Blue line: "BoT+GPT4"

  - Orange line: "BoT+GPT4 (w/o buffer-manager)"

- **Data Points**: Embedded numerical values on the lines for each round.

### Detailed Analysis

- **BoT+GPT4 (Blue Line)**:

  - **Round 1**: 297 seconds

  - **Round 2**: 205 seconds

  - **Round 3**: 128 seconds

  - **Round 4**: 78.5 seconds

  - **Trend**: Decreases in every round, but not linearly: the absolute drop shrinks each time (92, 77, then 49.5 seconds).

- **BoT+GPT4 (w/o buffer-manager) (Orange Line)**:

  - **Round 1**: 308 seconds

  - **Round 2**: 317 seconds

  - **Round 3**: 304 seconds

  - **Round 4**: 306 seconds

  - **Trend**: Slight fluctuation with no downward trend; values stay between 304 and 317 seconds.
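The shape of each trend can be checked with simple arithmetic on the labeled data points. The following is a minimal sketch, assuming the values read off the chart are exact; the variable and function names are illustrative and do not come from the source.

```python
# Average inference time per problem (seconds), as labeled on the chart.
with_bm = [297.0, 205.0, 128.0, 78.5]       # BoT+GPT4
without_bm = [308.0, 317.0, 304.0, 306.0]   # BoT+GPT4 (w/o buffer-manager)


def round_deltas(times):
    """Absolute change in seconds between consecutive rounds."""
    return [later - earlier for earlier, later in zip(times, times[1:])]


def round_ratios(times):
    """Each round's time as a fraction of the previous round's time."""
    return [later / earlier for earlier, later in zip(times, times[1:])]


print(round_deltas(with_bm))                          # [-92.0, -77.0, -49.5]
print([round(r, 2) for r in round_ratios(with_bm)])   # [0.69, 0.62, 0.61]
print(round_deltas(without_bm))                       # [9.0, -13.0, 2.0]
```

A linear decline would give equal deltas. Here the deltas for the blue line shrink while the ratios stay between roughly 0.61 and 0.69, so the curve is closer to a geometric decay. The orange line's deltas change sign, which is consistent with noise around a flat level.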

### Key Observations

1. The "BoT+GPT4" configuration shows a consistent reduction in inference time, dropping from 297s to 78.5s over four rounds.

2. The "BoT+GPT4 (w/o buffer-manager)" configuration maintains near-constant inference times (304–317s), with minor fluctuations and no downward trend.

3. The buffer-manager appears to account for the improvement over time: the gap between the two lines widens from 11s in Round 1 to 227.5s in Round 4.
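These observations can be quantified directly from the chart labels. The sketch below is illustrative only: it assumes the labeled values are exact, and the helper names are hypothetical rather than taken from the source.

```python
# Average inference time per problem (seconds), as labeled on the chart.
with_bm = [297.0, 205.0, 128.0, 78.5]       # BoT+GPT4
without_bm = [308.0, 317.0, 304.0, 306.0]   # BoT+GPT4 (w/o buffer-manager)


def overall_reduction(times):
    """Fractional reduction from the first round to the last."""
    return (times[0] - times[-1]) / times[0]


def per_round_speedup(baseline, treated):
    """How many times faster `treated` is than `baseline` in each round."""
    return [b / t for b, t in zip(baseline, treated)]


print(f"{overall_reduction(with_bm):.1%}")      # 73.6%
print(f"{overall_reduction(without_bm):.1%}")   # 0.6%
print([round(s, 1) for s in per_round_speedup(without_bm, with_bm)])
# [1.0, 1.5, 2.4, 3.9]
```

Under these assumptions, the configuration with the buffer-manager ends up about 3.9 times faster than the ablated one by Round 4, while the two are nearly identical in Round 1.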

### Interpretation

The data suggest that the buffer-manager is the main source of the inference-time reduction in the BoT+GPT4 system. Without it, average time per problem stays between 304 and 317 seconds in every round; with it, time falls from 297 to 78.5 seconds, a reduction of about 74%. The decline is not linear: the absolute savings shrink from round to round (92, 77, then 49.5 seconds), while each round still takes roughly 61% to 69% of the previous round's time. The benefit therefore accumulates across rounds, possibly due to adaptive resource allocation or caching mechanisms, although the chart itself does not show the mechanism. This ablation study points to the buffer-manager as a key component for inference efficiency in this setup.