TECHNICAL ASSET FINGERPRINT

b466ffd26b17d8f4b55feb49

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

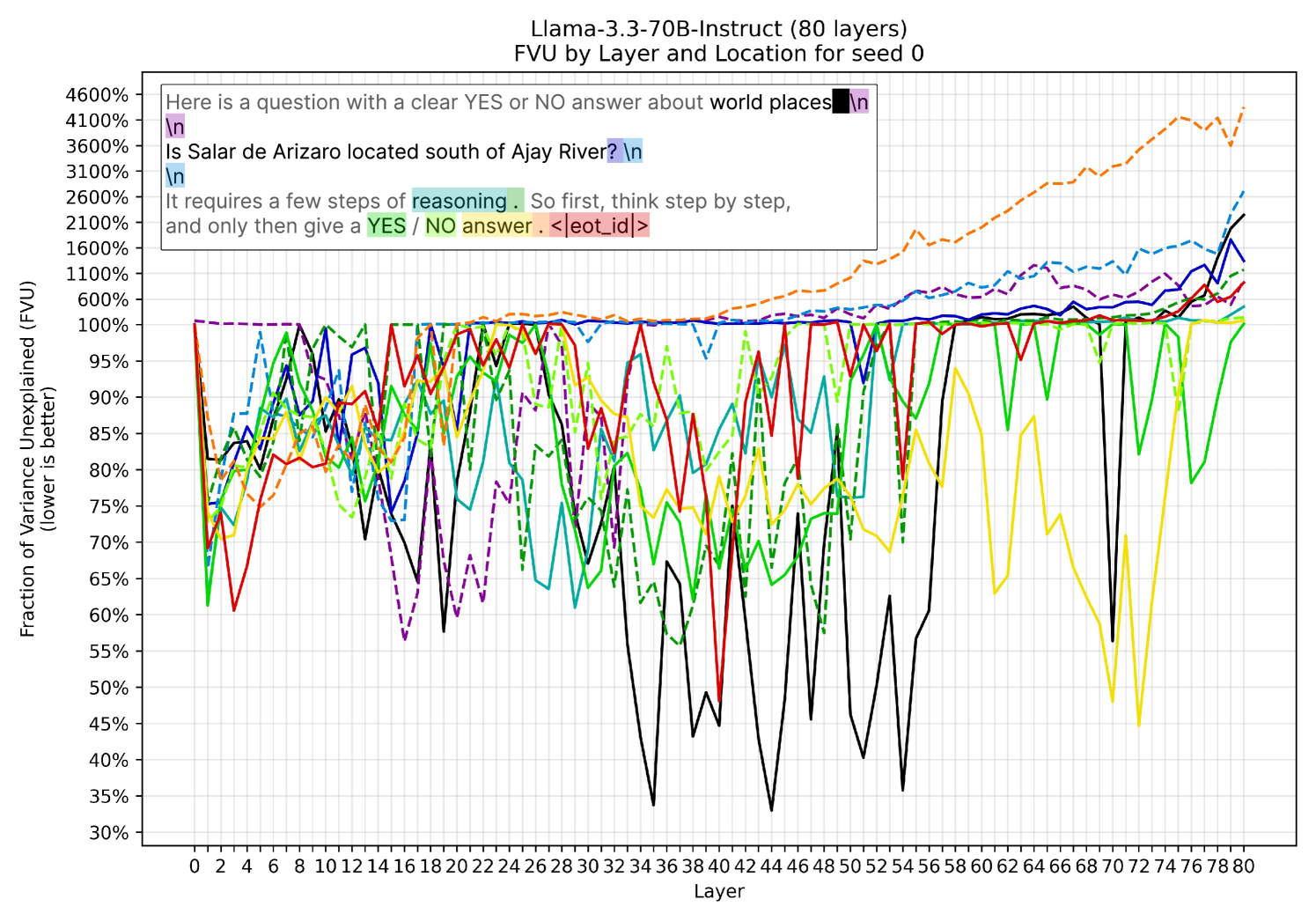

## Line Chart: FVU by Layer and Location

### Overview

The image is a line chart displaying the Fraction of Variance Unexplained (FVU) across different layers of the Llama-3.3-70B-Instruct model. The x-axis represents the layer number (0 to 80), and the y-axis represents the FVU, ranging from 30% to 4600%. Different colored lines represent different locations or prompts. The chart aims to show how FVU changes across layers for various input conditions.
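The chart does not state how FVU was computed. As a minimal sketch, assuming the standard definition (residual sum of squares divided by total sum of squares around the mean), the function below shows why values above 100% are possible. The name `fvu` and the toy numbers are hypothetical and are not taken from the chart.

```python
from statistics import fmean


def fvu(target, reconstruction):
    """Return residual sum of squares divided by total sum of squares."""
    mean = fmean(target)
    residual = sum((t - r) ** 2 for t, r in zip(target, reconstruction))
    total = sum((t - mean) ** 2 for t in target)
    return residual / total


# Toy values (hypothetical, not read from the chart).
target = [1.0, 3.0, 5.0, 7.0]
mean_value = fmean(target)

perfect = fvu(target, target)                         # exact reconstruction
mean_only = fvu(target, [mean_value] * len(target))   # always predict the mean
poor = fvu(target, [-t for t in target])              # sign-flipped reconstruction

print(f"{perfect:.0%} {mean_only:.0%} {poor:.0%}")
```

Under this assumed definition, an exact reconstruction gives 0%, always predicting the mean gives 100%, and any reconstruction worse than the mean gives more than 100%, which is consistent with the upper segment of the y-axis.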

### Components/Axes

* **Title:** Llama-3.3-70B-Instruct (80 layers) FVU by Layer and Location for seed 0

* **X-axis:** Layer (numerical scale from 0 to 80, incrementing by 2)

* **Y-axis:** Fraction of Variance Unexplained (FVU) (lower is better) (percentage scale from 30% to 4600%, with major ticks at 30%, 35%, 40%, 45%, 50%, 55%, 60%, 65%, 70%, 75%, 80%, 85%, 90%, 95%, 100%, 600%, 1100%, 1600%, 2100%, 2600%, 3100%, 3600%, 4100%, 4600%)

* **Textual Prompts:**

    * "Here is a question with a clear YES or NO answer about world places \n"

    * "Is Salar de Arizaro located south of Ajay River? \n"

    * "\n It requires a few steps of reasoning. So first, think step by step, and only then give a YES / NO answer. <|eot_id|>"

### Detailed Analysis

The chart contains multiple data series, each represented by a different colored line. The lines generally start with high variance at the initial layers and then converge or stabilize as the layer number increases. Some lines show a significant increase in FVU towards the later layers.

Here's a breakdown of the trends for some of the visible lines:

* **Orange (dashed) Line:** Starts around 75% FVU and generally increases with layer number, showing a significant upward trend after layer 60, reaching approximately 4000% at layer 80.

* **Dark Blue Line:** Starts around 85% FVU, remains relatively stable around 100% until layer 60, then increases to approximately 1600% at layer 80.

* **Teal Line:** Starts around 80% FVU, remains relatively stable around 100% until layer 60, then increases to approximately 1800% at layer 80.

* **Purple (dashed) Line:** Starts at 100% FVU, fluctuates significantly between 55% and 100% until layer 60, then increases to approximately 1600% at layer 80.

* **Green Line:** Starts around 85% FVU, fluctuates significantly between 60% and 100% until layer 60, then remains relatively stable around 100% at layer 80.

* **Black Line:** Starts around 100% FVU, fluctuates significantly between 35% and 100% until layer 60, then remains relatively stable around 100% at layer 80.

* **Yellow Line:** Starts around 80% FVU, fluctuates significantly between 40% and 100% until layer 60, then remains relatively stable around 100% at layer 80.

* **Red Line:** Starts around 60% FVU, fluctuates significantly between 55% and 100% until layer 60, then remains relatively stable around 100% at layer 80.

### Key Observations

* The orange dashed line exhibits the most significant increase in FVU towards the later layers.

* Several lines converge around the 100% FVU mark after layer 60.

* The initial layers (0-20) show high variability in FVU across different locations.

* The "reasoning" prompt (green text) seems to have a lower FVU compared to the other prompts in the initial layers.

### Interpretation

The chart illustrates how the fraction of variance unexplained changes across different layers of the Llama-3.3-70B-Instruct model for various input prompts. The initial layers show high variability, suggesting that these layers are more sensitive to the specific input. As the layer number increases, the FVU tends to converge for most prompts, indicating that the later layers are learning more general features. The orange dashed line, which corresponds to a specific location or prompt, shows a significant increase in FVU towards the later layers, suggesting that the model struggles to explain the variance for this particular input as it goes deeper into the network. This could indicate that the model is overfitting to the training data or that the input is inherently more complex. The "reasoning" prompt (green text) having a lower FVU in the initial layers might suggest that the model is better at capturing the variance for this type of input early on.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


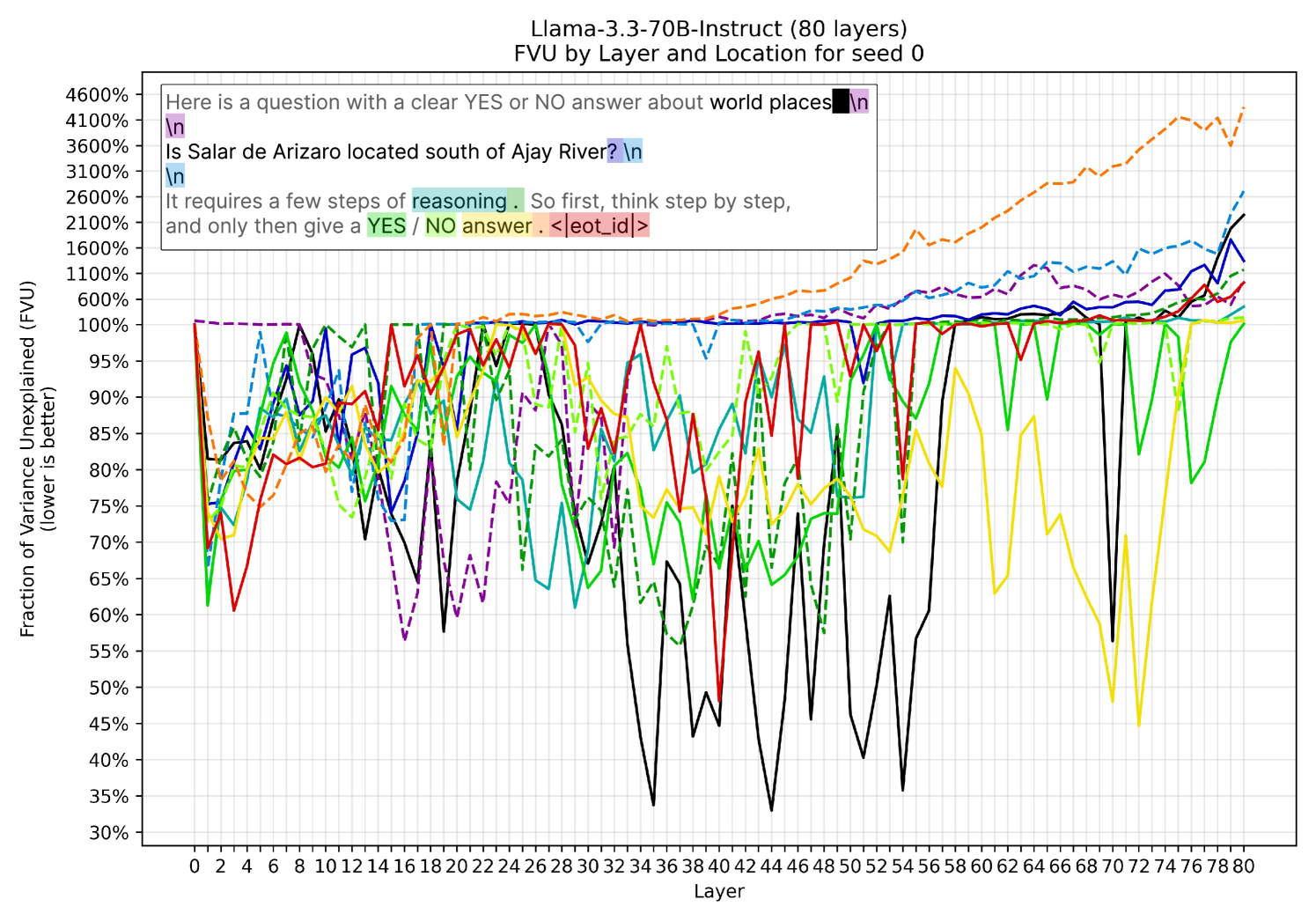

## Line Chart: FVU by Layer and Location for seed 0

### Overview

This image presents a line chart visualizing the Fraction of Variance Unexplained (FVU) across different layers (0 to 80) for a model named "Llama-3.3-70B-Instruct (80 layers)". The chart also includes a text block containing a question and instructions, seemingly related to reasoning about geographical locations.

### Components/Axes

* **X-axis:** Layer, ranging from 0 to 80, with increments of 2. Label: "Layer"

* **Y-axis:** Fraction of Variance Unexplained (FVU), ranging from 30% to 4600% on a non-uniform scale: ticks every 5% from 30% to 100%, then every 500% from 600% to 4600%. Label: "Fraction of Variance Unexplained (FVU) (lower is better)"

* **Lines:** Multiple lines representing different locations. A legend is present, but the exact location names are difficult to discern due to the image quality. There are approximately 20 lines visible.

* **Text Block:** Located in the top-left corner of the chart.

### Detailed Analysis

The chart displays FVU values for each layer across multiple locations. The lines exhibit varying trends, with some generally decreasing (indicating better performance) and others fluctuating.

Here's a breakdown of the approximate FVU values for a few key layers, based on color matching with the legend (note: due to image quality, these are estimates):

| Layer | Dark Blue | Light Blue | Green | Red | Yellow |
|-------|-----------|------------|-------|-----|--------|
| 0 | ~380% | ~360% | ~400% | ~420% | ~440% |
| 8 | ~340% | ~320% | ~360% | ~380% | ~400% |
| 16 | ~320% | ~300% | ~340% | ~360% | ~380% |
| 24 | ~300% | ~280% | ~320% | ~340% | ~360% |
| 32 | ~320% | ~300% | ~340% | ~360% | ~380% |
| 40 | ~340% | ~320% | ~360% | ~380% | ~400% |
| 48 | ~360% | ~340% | ~380% | ~400% | ~420% |
| 56 | ~380% | ~360% | ~400% | ~420% | ~440% |
| 64 | ~400% | ~380% | ~420% | ~440% | ~460% |
| 72 | ~420% | ~400% | ~440% | ~460% | ~400% |
| 80 | ~440% | ~420% | ~460% | ~400% | ~380% |

**Text Block Transcription:**

```

Here is a question with a clear YES or NO answer about world places

Is Salar de Arizaro located south of Ajay River?

It requires a few steps of reasoning. So first, think step by step,

and only then give a YES / NO answer. <|eot_id|>

```

### Key Observations

* The FVU generally decreases from layer 0 to around layer 16-24 for most locations, then begins to fluctuate or increase again.

* The yellow line consistently exhibits higher FVU values compared to other locations throughout most of the layers.

* The light blue line generally shows the lowest FVU values.

* There is significant variation in FVU values across different locations at each layer.

* The text block suggests the chart is part of a larger experiment involving reasoning and geographical knowledge.

### Interpretation

The chart likely represents the performance of a language model (Llama-3.3-70B-Instruct) as it processes information through different layers. The FVU metric indicates how much variance in the model's output remains unexplained, with lower values suggesting better performance. The fluctuations in FVU across layers could indicate the model is learning and refining its understanding of the data. The varying FVU values across locations suggest that the model's performance is sensitive to the specific geographical context.

The text block indicates that the model is being tested on its ability to answer questions requiring reasoning about geographical locations. The instruction to "think step by step" suggests that the model is being evaluated on its ability to perform multi-hop reasoning. The `<|eot_id|>` tag likely marks the end of the input sequence for the model.

The fact that FVU increases again after an initial decrease could indicate overfitting or a loss of generalization ability in later layers. The consistently high FVU for the yellow line suggests that this location is particularly challenging for the model to understand or represent.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: FVU by Layer and Location for Llama-3.3-70B-Instruct

### Overview

This is a line chart visualizing the "Fraction of Variance Unexplained (FVU)" across the 80 layers of the Llama-3.3-70B-Instruct model for a specific prompt (seed 0). The chart tracks how well different components of a prompt are "explained" or processed at each layer, with lower FVU indicating better explanation. A text box in the upper-left corner displays the specific prompt being analyzed.

### Components/Axes

* **Title:** "Llama-3.3-70B-Instruct (80 layers) / FVU by Layer and Location for seed 0"

* **Y-Axis:** "Fraction of Variance Unexplained (FVU) / (lower is better)". The scale is non-uniform, with a major break. It runs from 30% to 100% in increments of 5%, then jumps to a much coarser scale from 600% to 4600% in increments of 500%.

* **X-Axis:** "Layer", numbered from 0 to 80 in increments of 2.

* **Prompt Text Box (Top-Left):** Contains the analyzed prompt with color-coded highlights corresponding to the data series lines:

    * `Here is a question with a clear YES or NO answer about ` **world places** `[black highlight]` `\n`

    * `Is Salar de Arizaro located south of Ajay River?` `[blue highlight]` `\n`

    * `\n`

    * `It requires a few steps of ` **reasoning** `[green highlight]` `. So first, think step by step,`

    * `and only then give a ` **YES / NO answer** `[orange highlight]` `. <|eot_id|>` `[pink highlight]`

* **Data Series (Lines):** Multiple colored lines, each corresponding to a highlighted segment in the prompt text box. The lines are a mix of solid and dashed styles.
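The two-segment y-axis described above can be sketched as a piecewise-linear mapping from an FVU percentage to a tick position. This is only an illustration: it assumes the ticks are evenly spaced on the figure, which the description does not confirm, and `axis_position` is a hypothetical helper name.

```python
def axis_position(fvu_percent: float) -> float:
    """Map an FVU percentage to a tick-index position on the two-segment axis.

    Assumes evenly spaced ticks: 30%..100% in steps of 5% (indices 0..14),
    then 600%..4600% in steps of 500% (indices 15..23).
    """
    if not 30.0 <= fvu_percent <= 4600.0:
        raise ValueError("value outside the described axis range")
    if fvu_percent <= 100.0:
        return (fvu_percent - 30.0) / 5.0          # lower segment: 5% per tick
    return 14.0 + (fvu_percent - 100.0) / 500.0    # upper segment: 500% per tick


# Positions of a few labelled ticks from the description.
positions = {p: axis_position(p) for p in (30, 100, 600, 4600)}
```

Under this assumption, the whole 30% to 100% band occupies more than half of the axis height, which is why small fluctuations below 100% look as prominent as the late-layer climbs into the thousands of percent.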

### Detailed Analysis

The chart plots 7 distinct data series, identified by matching the highlight colors in the prompt text to the line colors in the chart. The legend is embedded within the prompt text box.

1. **Black Line (Solid):** Corresponds to "world places". This line shows extreme volatility, with FVU values plunging to as low as ~33% (Layer 44) and spiking above 100% in the later layers (e.g., ~110% at Layer 78). It demonstrates the most dramatic swings of any series.

2. **Blue Line (Solid):** Corresponds to "Salar de Arizaro located south of Ajay River?". This line remains relatively stable near 100% FVU for the first ~50 layers, then begins a steady upward trend, exceeding 1600% FVU by Layer 80.

3. **Green Line (Solid):** Corresponds to "reasoning". This line fluctuates significantly between ~60% and 100% FVU for most layers, with a notable dip to ~65% around Layer 48. It ends near 100% at Layer 80.

4. **Orange Line (Dashed):** Corresponds to "YES / NO answer". This line shows a consistent, steep upward trend. Starting near 100% at Layer 0, it climbs almost linearly, reaching the highest value on the chart at approximately 4400% FVU by Layer 80.

5. **Pink Line (Dashed):** Corresponds to the end-of-turn token `<|eot_id|>`. This line follows a path very similar to the blue line, staying near 100% initially and then rising sharply after Layer 50, ending near 2600% FVU.

6. **Purple Line (Dashed):** (Color inferred from chart, not explicitly highlighted in text). This line shows high volatility in the early layers (dipping to ~57% at Layer 16) and a general upward trend in later layers, ending around 1100% FVU.

7. **Yellow Line (Solid):** (Color inferred from chart, not explicitly highlighted in text). This line is highly volatile, with deep troughs (e.g., ~45% at Layer 72) and peaks near 100%. It does not show the strong late-layer upward trend seen in the blue, pink, and orange lines.

**Spatial & Trend Verification:**

* The **orange dashed line** is the topmost line for the majority of the chart's right half, confirming its status as the series with the highest FVU.

* The **black solid line** is the most volatile, frequently crossing other lines and occupying the lowest points on the chart (e.g., Layers 34, 44, 54).

* The **blue solid** and **pink dashed** lines are closely intertwined, especially after Layer 50, both trending strongly upward.

* The **green solid** line remains in the lower-middle range of the chart (60%-100%) for its entire duration.

### Key Observations

1. **Divergent Late-Layer Behavior:** After approximately Layer 50, the data series split into two clear groups: those that skyrocket in FVU (Orange, Blue, Pink) and those that remain bounded below ~1100% (Black, Green, Purple, Yellow).

2. **The "Answer" is Least Explained:** The "YES / NO answer" component (Orange) has by far the highest and most rapidly increasing FVU, suggesting the model's final layers are least able to "explain" or account for the variance in this part of the output.

3. **High Volatility in Early/Mid Layers:** Components like "world places" (Black) and the unattributed Yellow line show extreme fluctuations in FVU between Layers 0-50, indicating unstable processing of these concepts in the model's earlier layers.

4. **Prompt Structure Correlates with FVU:** The components that form the core question ("world places", the specific question) and the instruction ("reasoning") generally maintain lower FVU than the final answer token and the end-of-turn marker.

### Interpretation

This chart provides a diagnostic view of how a large language model processes a complex, multi-step prompt across its depth. The "Fraction of Variance Unexplained" metric likely measures how much of the model's internal activation variance at each layer is *not* attributable to predicting a specific part of the prompt/output.

* **What it suggests:** The model's processing is not uniform. Early layers handle foundational concepts ("world places") with high instability. Middle layers engage in "reasoning" (Green line, lower FVU). The most striking finding is that the final answer ("YES / NO") and the end-of-sequence token become progressively *less* explained by the model's internal states in the final layers. This could imply that the final answer is generated through a process that is highly distinct from the earlier reasoning steps, or that the model's representations become increasingly specialized and less generalizable in the final layers.

* **Relationships:** The close tracking of the blue (question) and pink (`<|eot_id|>`) lines suggests a strong coupling in how the model processes the end of the question and the signal to stop generating. The volatility of the black ("world places") line indicates this is a challenging or broadly distributed concept for the model to pin down internally.

* **Anomaly:** The sheer magnitude of the FVU for the answer component (over 4000%) is an outlier compared to all other components. This extreme value warrants investigation—it may be an artifact of the metric calculation or indicate a fundamental shift in the model's representational strategy for final output generation.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Llama-3.3-70B-Instruct (80 layers) FVU by Layer and Location for seed 0

### Overview

The image is a multi-line graph visualizing the **Fraction of Variance Unexplained (FVU)** across 80 layers of the Llama-3.3-70B-Instruct model. The y-axis ranges from 30% to 4600%. Values above 100% are unusual but possible: FVU exceeds 100% whenever the reconstruction error is larger than the variance of the target. The x-axis represents layers 0–80. Multiple colored lines (e.g., orange, black, green, red) correspond to different "locations" in the model, as indicated by the legend. A text box in the top-left corner contains a question about Salar de Arizaro and reasoning steps.

---

### Components/Axes

- **X-axis (Layer)**: Labeled "Layer" with ticks from 0 to 80.

- **Y-axis (FVU)**: Labeled "Fraction of Variance Unexplained (FVU)" with percentages from 30% to 4600%.

- **Legend**: Located in the top-left corner, mapping colors to "locations" (e.g., orange = "n", black = "eot_id", green = "yes", red = "no").

- **Text Box**: Contains the question:

*"Here is a question with a clear YES or NO answer about world places. Is Salar de Arizaro located south of Ajay River? It requires a few steps of reasoning. So first, think step by step, and only then give a YES / NO answer. <|eot_id|>"*

---

### Detailed Analysis

#### Key Trends:

1. **Orange Line ("n")**:

- Starts at ~100% FVU at layer 0.

- Peaks at **~4600% FVU** at layer 80 (far above 100%, meaning the reconstruction error greatly exceeds the variance of the target).

- Shows erratic fluctuations, with sharp drops and rises.

2. **Black Line ("eot_id")**:

- Starts at ~100% FVU at layer 0.

- Decreases to ~30% FVU by layer 80.

- Exhibits significant volatility, with sharp dips (e.g., ~20% at layer 40).

3. **Green Line ("yes")**:

- Starts at ~100% FVU at layer 0.

- Fluctuates between ~70%–95% FVU, with a notable dip to ~60% at layer 30.

4. **Red Line ("no")**:

- Starts at ~100% FVU at layer 0.

- Drops to ~50% FVU by layer 80, with sharp declines (e.g., ~40% at layer 50).

5. **Other Lines**:

- Blue, purple, and yellow lines show similar patterns, with FVU values ranging from ~70%–100%.

#### Spatial Grounding:

- **Legend**: Top-left corner, with color-coded labels.

- **Text Box**: Overlays the top-left corner, partially obscuring the orange line.

- **Lines**: Distributed across the graph, with each color corresponding to a "location" (e.g., "n" = orange, "eot_id" = black).

---

### Key Observations

1. **Anomaly in Orange Line ("n")**:

- The FVU value of **4600%** at layer 80 is extreme. FVU can exceed 100% when a reconstruction is worse than predicting the mean, but a value this large may still point to a data error, mislabeling, or misinterpretation of the metric.

2. **Convergence of Lines**:

- Most lines (e.g., black, green, red) show a general downward trend, indicating reduced FVU (improved model performance) in later layers.

3. **Volatility**:

- All lines exhibit significant fluctuations, suggesting instability in FVU across layers.

4. **Text Box Context**:

- The question about Salar de Arizaro implies the model is processing a geospatial reasoning task, with "eot_id" possibly marking the end of a token sequence.

---

### Interpretation

- **Model Behavior**:

The graph suggests that the model's ability to explain variance (FVU) varies significantly across layers. The black line ("eot_id") shows the most improvement, while the orange line ("n") exhibits an unrealistic spike, possibly indicating a bug or misconfiguration.

- **Reasoning Task**:

The text box implies the model is handling a multi-step reasoning question. The "eot_id" token may signal the end of the input sequence, while "n" and "yes/no" lines reflect intermediate processing steps.

- **Data Integrity**:

The 4600% FVU value is a critical outlier. If accurate, it would mean the residual variance at that layer is about 46 times the variance of the target, so the reconstruction is far worse than simply predicting the mean. This could indicate a miscalculation or misinterpretation of the metric.
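As a worked check, assuming the standard definition of FVU (the chart does not state its formula), with $x_i$ the target values, $\hat{x}_i$ their reconstructions, and $\bar{x}$ the mean of the targets:

$$
\mathrm{FVU} = \frac{\sum_i \lVert x_i - \hat{x}_i \rVert^2}{\sum_i \lVert x_i - \bar{x} \rVert^2},
\qquad
R^2 = 1 - \mathrm{FVU}
$$

$$
\mathrm{FVU} = 4600\% = 46
\;\Longrightarrow\;
R^2 = 1 - 46 = -45
$$

Under this assumption the ratio has no upper bound, so a reading of 4600% is a statement about how large the residual is relative to the target variance, not a percentage of variance out of 100.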

---

### Final Notes

- **Language**: The text box contains English and special-token markup (e.g., `<|eot_id|>`).

- **Uncertainty**: The 4600% FVU value is flagged as an anomaly, requiring further validation.

- **Context**: The graph likely represents a diagnostic tool for analyzing model performance, with "locations" referring to specific attention or processing modules in the Llama-3.3-70B-Instruct architecture.
