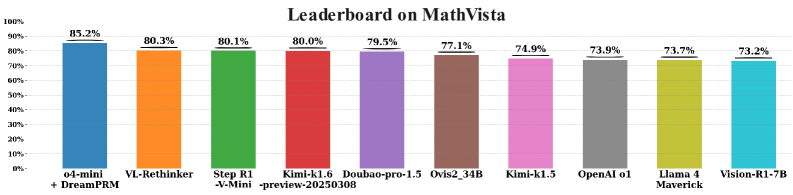

## Bar Chart: Leaderboard on MathVista

### Overview

The image is a bar chart of model performance on the MathVista benchmark. It compares the accuracy scores of ten models, which range from approximately 73% to 85%. The y-axis shows the percentage score, and the x-axis lists the model names.

### Components/Axes

* **Title:** "Leaderboard on MathVista" (positioned at the top-center)

* **Y-axis:** Percentage, from 0% to 100% with tick marks every 10%

* **X-axis:** Model names, left to right:

  * o4-mini + DreamPRM

  * VL-Rethinker

  * Step R1 -V-Mini -preview-20230308

  * Kimi-kl.6

  * Doubao-pro-1.5

  * Qvls2_31B

  * Kimi-kl.1

  * OpenAI 01

  * Llama 4 Maverick

  * Vision-R1-7B

### Detailed Analysis

Each bar shows one model's accuracy score. Scores decrease steadily from left to right, with no bar taller than the one to its left.

* **o4-mini + DreamPRM:** Approximately 85.2% (Blue bar, leftmost)

* **VL-Rethinker:** Approximately 80.3% (Orange bar, second from left)

* **Step R1 -V-Mini -preview-20230308:** Approximately 80.1% (Green bar, third from left)

* **Kimi-kl.6:** Approximately 80.0% (Red bar, fourth from left)

* **Doubao-pro-1.5:** Approximately 79.5% (Purple bar, fifth from left)

* **Qvls2_31B:** Approximately 77.1% (Brown bar, sixth from left)

* **Kimi-kl.1:** Approximately 74.9% (Pink bar, seventh from left)

* **OpenAI 01:** Approximately 73.9% (Gray bar, eighth from left)

* **Llama 4 Maverick:** Approximately 73.7% (Yellow bar, ninth from left)

* **Vision-R1-7B:** Approximately 73.2% (Teal bar, rightmost)

### Key Observations

* "o4-mini + DreamPRM" leads with approximately 85.2%, about 4.9 percentage points ahead of second-place "VL-Rethinker" (approximately 80.3%).

* "VL-Rethinker", "Step R1 -V-Mini -preview-20230308", and "Kimi-kl.6" score within about 0.3 percentage points of one another (approximately 80.0% to 80.3%).

* The three lowest-scoring models, "OpenAI 01", "Llama 4 Maverick", and "Vision-R1-7B", fall between approximately 73.2% and 73.9%.

* The gap between first and second place is the largest between any two adjacent bars. The next largest, about 2.4 percentage points, separates "Doubao-pro-1.5" (approximately 79.5%) from "Qvls2_31B" (approximately 77.1%).
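
The observations above can be checked against the approximate values listed in the Detailed Analysis. The following is a minimal sketch, not part of the original chart: the names `SCORES`, `adjacent_gaps`, and `is_non_increasing` are hypothetical, and the numbers are the approximate readings transcribed from this description, so small differences should not be over-interpreted.

```python
# Approximate scores (percent) as listed in this description, left to right.
# Model names are copied exactly as they appear in the description.
SCORES = [
    ("o4-mini + DreamPRM", 85.2),
    ("VL-Rethinker", 80.3),
    ("Step R1 -V-Mini -preview-20230308", 80.1),
    ("Kimi-kl.6", 80.0),
    ("Doubao-pro-1.5", 79.5),
    ("Qvls2_31B", 77.1),
    ("Kimi-kl.1", 74.9),
    ("OpenAI 01", 73.9),
    ("Llama 4 Maverick", 73.7),
    ("Vision-R1-7B", 73.2),
]


def adjacent_gaps(scores):
    """Return (left_name, right_name, gap) for each pair of neighboring bars."""
    return [
        (a_name, b_name, round(a - b, 1))
        for (a_name, a), (b_name, b) in zip(scores, scores[1:])
    ]


def is_non_increasing(scores):
    """True if no bar is taller than the bar to its left."""
    values = [value for _, value in scores]
    return all(x >= y for x, y in zip(values, values[1:]))


gaps = adjacent_gaps(SCORES)
largest = max(gaps, key=lambda g: g[2])

if __name__ == "__main__":
    print(f"{len(SCORES)} models, non-increasing: {is_non_increasing(SCORES)}")
    # List the gaps from largest to smallest to see where the ranking breaks.
    for left, right, gap in sorted(gaps, key=lambda g: g[2], reverse=True):
        print(f"{gap:4.1f}  {left} -> {right}")
```

Sorting the gaps makes the structure of the ranking easier to see than the raw scores do: one large break after the first bar, two mid-sized breaks in the middle of the chart, and differences of half a point or less elsewhere.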

### Interpretation

The chart ranks the models by their reported accuracy on the MathVista benchmark. The lead of "o4-mini + DreamPRM" over every other entry suggests that this combination is particularly effective on this benchmark. The tight grouping of several models around the 80% mark points to a competitive field, where small differences should be read with caution because the values here are approximate readings from the chart. The lower scores of "OpenAI 01", "Llama 4 Maverick", and "Vision-R1-7B" may indicate room for improvement in those models, or that they are less suited to the kinds of mathematical problems in MathVista. The chart does not report model architecture or training data, so it cannot show how much either contributes to these differences. The "preview" label and date-like suffix in "Step R1 -V-Mini -preview-20230308" suggest a pre-release version whose performance may change over time.