TECHNICAL ASSET FINGERPRINT

b517a4898957bcff8d2a9e44

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

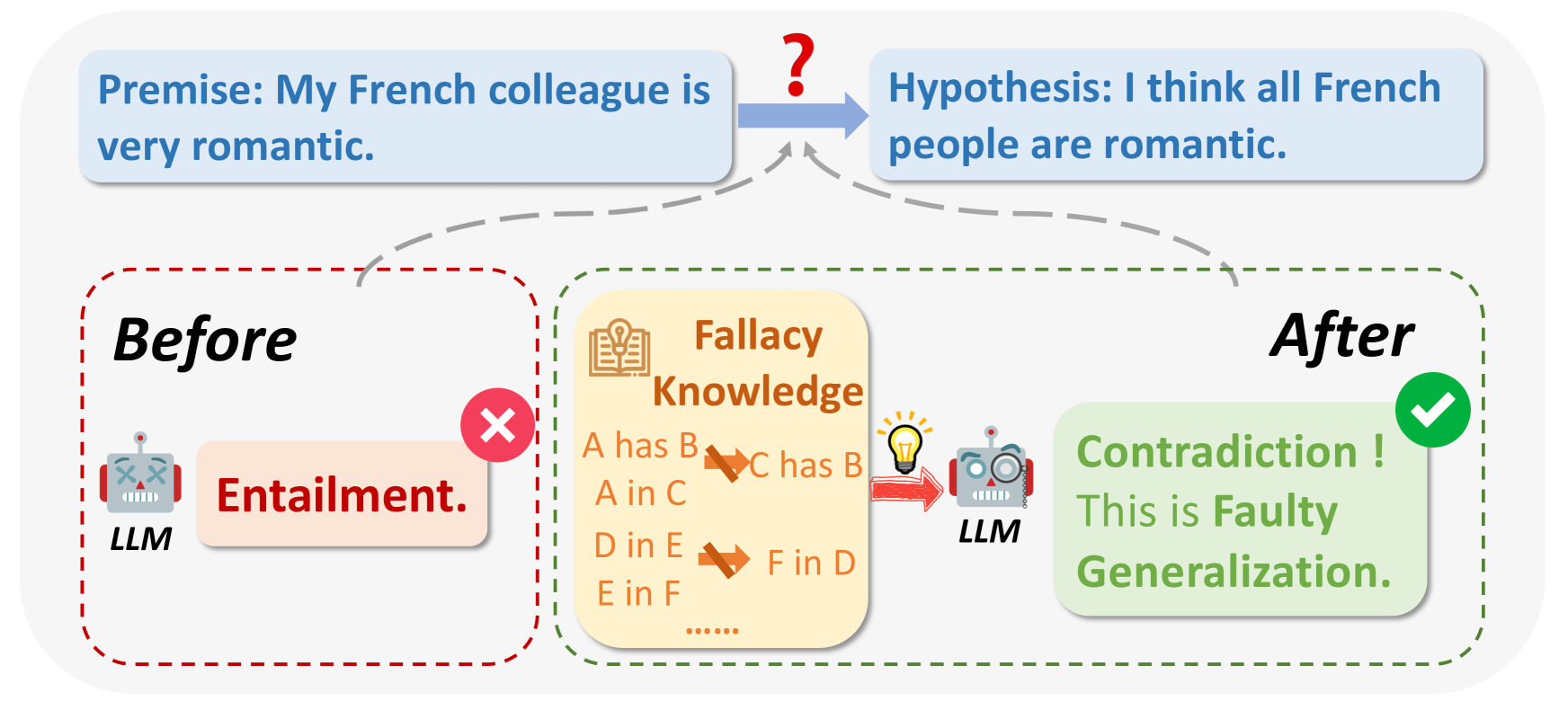

## Diagram: Fallacy Detection by LLM

### Overview

The diagram illustrates how a Large Language Model (LLM) can identify a fallacy in reasoning. It pairs a premise with a hypothesis that generalizes from it and shows the LLM's role in detecting the faulty generalization. The lower part of the diagram is divided into "Before" and "After" states, contrasting the LLM's judgment without and with fallacy knowledge.

### Components/Axes

* **Top:**

    * **Premise:** "My French colleague is very romantic." (Contained in a light blue rounded rectangle)

    * **Arrow:** A blue arrow with a question mark above it, pointing from the premise to the hypothesis.

    * **Hypothesis:** "I think all French people are romantic." (Contained in a light blue rounded rectangle)

* **Bottom-Left ("Before"):**

    * **Label:** "Before" (Bold text)

    * **LLM (Robot):** A cartoon robot labeled "LLM"

    * **Entailment:** A light pink rounded rectangle containing the word "Entailment."

    * **Red X:** A red "X" mark above the "Entailment" box.

    * **Red Dashed Border:** A red dashed line surrounds the "Before" section.

* **Center:**

    * **Fallacy Knowledge:** A light yellow rounded rectangle labeled "Fallacy Knowledge" with a book and lightbulb icon.

    * **Fallacy Rules:**

        * "A has B -> C has B"

        * "A in C"

        * "D in E -> F in D"

        * "E in F"

        * "......"

* **Bottom-Right ("After"):**

    * **Label:** "After" (Bold text)

    * **LLM (Robot):** A cartoon robot labeled "LLM" with a magnifying glass.

    * **Contradiction! This is Faulty Generalization.:** A light green rounded rectangle containing the text "Contradiction! This is Faulty Generalization."

    * **Green Checkmark:** A green checkmark above the "Contradiction!" box.

    * **Green Dashed Border:** A green dashed line surrounds the "After" section.

* **Arrows:**

    * A red arrow points from the "Fallacy Knowledge" box to the "LLM" in the "After" section.

    * Grey dashed lines connect the "Before" and "After" sections to the premise and hypothesis.

### Detailed Analysis

* **Premise to Hypothesis:** The premise "My French colleague is very romantic" is paired with the hypothesis "I think all French people are romantic." A blue arrow with a question mark links the two, indicating that the inference from premise to hypothesis is the relationship in question.

* **Before State:** Initially, the LLM incorrectly identifies the premise as entailing the hypothesis, indicated by the "Entailment" label and the red "X" mark.

* **Fallacy Knowledge:** The "Fallacy Knowledge" box contains general rules or patterns of fallacious reasoning. The rules are represented as "A has B -> C has B", "A in C", "D in E -> F in D", and "E in F". The "......" suggests that there are more rules not explicitly listed.

* **After State:** After applying its "Fallacy Knowledge," the LLM correctly labels the premise-hypothesis relationship as "Contradiction!" and names the fallacy as "Faulty Generalization," indicated by the green checkmark. The LLM in the "After" state has a magnifying glass, suggesting it is analyzing the information.
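
To make the abstract rules more concrete, the following minimal sketch shows one way a rule such as "A has B -> C has B" with "A in C" could be checked against the example. Reading "A in C" as a side condition of the first rule is an assumption, as are the hypothetical names `Fact` and `is_faulty_generalization`; the diagram does not say how the rules are encoded or applied.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Fact:
    """A simple (subject, relation, object) statement; relation is 'has' or 'in'."""
    subject: str
    relation: str
    obj: str


def is_faulty_generalization(premises: Iterable[Fact], conclusion: Fact) -> bool:
    """Return True if 'C has B' is supported only by 'A has B' plus 'A in C'.

    This is an assumed reading of the diagram's pattern, not the authors' method.
    """
    known = set(premises)
    if conclusion.relation != "has" or conclusion in known:
        return False  # not a property claim, or stated directly in the premises
    for fact in known:
        if fact.relation == "has" and fact.obj == conclusion.obj:
            # A has B; check whether A is a member of the group C in the conclusion
            if Fact(fact.subject, "in", conclusion.subject) in known:
                return True
    return False


premises = [
    Fact("my French colleague", "has", "romantic"),
    Fact("my French colleague", "in", "French people"),
]
conclusion = Fact("French people", "has", "romantic")
print(is_faulty_generalization(premises, conclusion))  # True
```

The check fires only when a property of one member is projected onto the whole group, which is the shape of the French-colleague example.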

### Key Observations

* The diagram highlights the LLM's ability to evolve its understanding of a statement from an initial incorrect assessment ("Entailment") to a correct identification of a fallacy ("Contradiction! This is Faulty Generalization.").

* The "Fallacy Knowledge" box is crucial, as it provides the LLM with the necessary rules to detect logical fallacies.

* The use of visual cues (red "X" and green checkmark) clearly indicates the LLM's initial error and subsequent correction.

### Interpretation

The diagram shows how fallacy knowledge can correct an LLM's reasoning. Without it, the LLM judges that the premise entails the hypothesis; with it, the LLM recognizes the faulty generalization and changes its label to "Contradiction!". This suggests that equipping LLMs with knowledge of common fallacies can improve their reasoning, and that such models could be valuable tools for improving the quality of reasoning and decision-making.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Logical Fallacy Illustration

### Overview

This diagram illustrates a logical fallacy, specifically a faulty generalization, stemming from an initial premise and hypothesis. It visually contrasts a state "Before" understanding the fallacy with a state "After" recognizing it. The diagram uses a question mark and arrows to show the progression of thought and the identification of the fallacy.

### Components/Axes

The diagram is divided into three main sections:

1. **Premise & Hypothesis:** Located at the top, presenting the initial statement and resulting hypothesis.

2. **Before:** A dashed-border section on the left, representing the initial flawed reasoning.

3. **After:** A dashed-border section on the right, representing the corrected understanding.

Within the "Before" section, there's a box labeled "Fallacy Knowledge" containing a series of statements:

* A has B

* A in C

* D in E

* E in F

* F in D

* ...... (ellipsis indicating continuation)

The diagram also includes:

* A question mark icon positioned between the premise and hypothesis.

* Arrows indicating the flow of thought.

* A red "X" symbol over the "Entailment" label in the "Before" section.

* A green checkmark symbol next to "Contradiction!" in the "After" section.

* Icons representing a "LLM" (Large Language Model) in both the "Before" and "After" sections.

### Detailed Analysis

The diagram presents a logical progression:

1. **Premise:** "My French colleague is very romantic."

2. **Hypothesis:** "I think all French people are romantic."

3. **Before (Flawed Reasoning):** The initial state incorrectly assumes entailment. The "Fallacy Knowledge" box contains a series of seemingly unrelated statements (A has B, A in C, etc.) which likely represent the flawed reasoning process. The "Entailment" label is marked with a red "X", indicating it is incorrect.

4. **After (Corrected Reasoning):** The corrected state identifies the flaw as a "Contradiction!" and labels it as a "Faulty Generalization." A green checkmark signifies the correct identification of the fallacy.

The arrows show the flow of thought from the premise to the hypothesis, and then to the identification of the fallacy. The LLM icons suggest that this diagram is relevant to the understanding of logical fallacies within the context of Large Language Models.

### Key Observations

* The diagram highlights the danger of making generalizations based on limited observations.

* The "Fallacy Knowledge" section is intentionally abstract and serves to represent the flawed reasoning process rather than providing concrete data.

* The use of visual cues (red "X", green checkmark, arrows) effectively communicates the correction of the logical error.

* The inclusion of LLM icons suggests the diagram is intended for an audience interested in the logical capabilities and potential pitfalls of AI models.

### Interpretation

The diagram demonstrates how a seemingly innocuous premise can lead to a flawed generalization. The initial hypothesis, based on a single observation (a romantic French colleague), incorrectly assumes that all French people share that characteristic. The diagram effectively illustrates the importance of critical thinking and avoiding hasty generalizations. The "Fallacy Knowledge" section, while abstract, represents the internal, often unexamined, reasoning that leads to the error. The correction, identifying the fallacy as a "Faulty Generalization," highlights the need to base conclusions on sufficient evidence rather than anecdotal experiences. The presence of the LLM icons suggests that this type of logical reasoning is crucial for developing and evaluating AI systems, as LLMs are prone to making similar generalizations based on the data they are trained on. The diagram serves as a cautionary tale about the potential for bias and flawed reasoning in both human and artificial intelligence.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: LLM Reasoning Improvement via Fallacy Knowledge

### Overview

The image is a conceptual diagram illustrating how a Large Language Model's (LLM) reasoning can be improved by incorporating knowledge of logical fallacies. It contrasts two states: a "Before" state where the LLM makes an incorrect logical judgment, and an "After" state where, after being equipped with "Fallacy Knowledge," it correctly identifies the flawed reasoning. The diagram uses a specific example about French people and romanticism to demonstrate the concept.

### Components/Axes

The diagram is structured into three main visual regions within a light grey rounded rectangle container:

1. **Top Region (Problem Statement):**

    * **Left Box (Premise):** A light blue rectangle containing the text: "Premise: My French colleague is very romantic."

    * **Right Box (Hypothesis):** A light blue rectangle containing the text: "Hypothesis: I think all French people are romantic."

    * **Connection:** A blue arrow points from the Premise to the Hypothesis. Above this arrow is a large red question mark ("?"), indicating the logical relationship between them is in question. Two grey dashed arrows point from the "Before" and "After" sections below up to this central question mark, showing that the LLM's assessment of this relationship is the subject of the diagram.

2. **Left Region ("Before" State):**

    * **Label:** The word "**Before**" in large, bold, black italic font.

    * **Icon:** A grey robot icon labeled "**LLM**" below it.

    * **Output Box:** A light red rectangle with the text "**Entailment.**" in bold red font.

    * **Indicator:** A red circle with a white "X" (✗) is positioned to the right of the output box, signifying an incorrect judgment.

    * **Container:** This entire section is enclosed by a red dashed-line border.

3. **Central Region ("Fallacy Knowledge"):**

    * **Title:** The text "**Fallacy Knowledge**" in brown font, accompanied by an icon of an open book with a lightbulb.

    * **Content:** A list of abstract logical patterns written in brown text:

        * "A has B → C has B"

        * "A in C"

        * "D in E ↔ F in D"

        * "E in F"

        * "......" (ellipsis indicating more patterns)

    * **Connector:** A red arrow with a lightbulb icon points from this knowledge box to the "After" section's LLM, indicating the knowledge is being provided to the model.

4. **Right Region ("After" State):**

    * **Label:** The word "**After**" in large, bold, black italic font.

    * **Icon:** A grey robot icon labeled "**LLM**" below it.

    * **Output Box:** A light green rectangle containing the text:

        * "**Contradiction !**" (in bold green)

        * "This is **Faulty Generalization.**" (with "Faulty Generalization" in bold green).

    * **Indicator:** A green circle with a white checkmark (✓) is positioned to the right of the output box, signifying a correct judgment.

    * **Container:** This entire section is enclosed by a green dashed-line border.

### Detailed Analysis

The diagram presents a before-and-after workflow for an LLM's reasoning task.

* **Task:** Evaluate the logical relationship between a specific premise ("My French colleague is very romantic") and a general hypothesis ("I think all French people are romantic").

* **"Before" Process:** The LLM, without specialized knowledge, incorrectly assesses this as **"Entailment."** This means it believes the premise logically guarantees the truth of the hypothesis. The red "X" marks this as an error.

* **Intervention:** The LLM is provided with **"Fallacy Knowledge."** This is represented as a set of abstract logical rules or patterns (e.g., "A has B → C has B") that describe common reasoning errors. The specific pattern relevant to this example is the fallacy of **hasty or faulty generalization**—drawing a broad conclusion from a single or limited instance.

* **"After" Process:** The same LLM, now equipped with this fallacy knowledge, correctly identifies the relationship as a **"Contradiction."** More precisely, it labels the reasoning from premise to hypothesis as a **"Faulty Generalization."** The green checkmark confirms this as the correct logical assessment. The diagram implies that the hypothesis does not logically follow from the premise and, in fact, represents a flawed inference.
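
The before-and-after comparison above can be written down as a small data model. This is only an illustrative sketch: the names `NLILabel`, `NLIExample`, and `is_correct` are hypothetical, and the `NEUTRAL` label is included because it is part of the standard three-way natural language inference label set, not because it appears in the diagram.

```python
from dataclasses import dataclass
from enum import Enum


class NLILabel(Enum):
    ENTAILMENT = "entailment"
    NEUTRAL = "neutral"  # standard NLI label; not shown in the diagram
    CONTRADICTION = "contradiction"


@dataclass(frozen=True)
class NLIExample:
    premise: str
    hypothesis: str
    expected: NLILabel


def is_correct(example: NLIExample, predicted: NLILabel) -> bool:
    """Compare a predicted label with the label marked as correct."""
    return predicted is example.expected


example = NLIExample(
    premise="My French colleague is very romantic.",
    hypothesis="I think all French people are romantic.",
    expected=NLILabel.CONTRADICTION,  # the label the diagram marks with a checkmark
)

before = NLILabel.ENTAILMENT     # "Before" output, marked with a red X
after = NLILabel.CONTRADICTION   # "After" output, marked with a green check
print(is_correct(example, before), is_correct(example, after))  # False True
```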

### Key Observations

1. **Visual Coding:** The diagram uses a consistent color scheme to convey correctness: red for error ("Before" state, "Entailment," X mark) and green for correctness ("After" state, "Contradiction/Faulty Generalization," ✓ mark).

2. **Spatial Flow:** The central "Fallacy Knowledge" box acts as a bridge or catalyst between the two states. The dashed arrows from the top question mark to both states emphasize that the same logical problem is being evaluated under different conditions.

3. **Abstraction vs. Specificity:** The "Fallacy Knowledge" is presented as abstract symbolic patterns (A, B, C, D, E, F), while the applied example is concrete (French colleague, romanticism). This highlights the transfer of general logical principles to a specific case.

4. **LLM Representation:** The LLM is depicted as a simple robot icon, personifying the model as an agent that receives input (knowledge) and produces output (judgment).

### Interpretation

This diagram is a pedagogical or conceptual illustration of a key challenge and solution in AI reasoning. It argues that:

* **The Problem:** Standard LLMs may perform surface-level pattern matching or rely on biased training data, leading them to commit logical fallacies like faulty generalization. They might incorrectly "entail" a general statement from a specific one because such patterns appear frequently in text, not because the logic is sound.

* **The Solution:** Explicitly training or augmenting LLMs with knowledge of formal logical fallacies and reasoning patterns can improve their robustness. By learning the abstract structure of fallacies (e.g., "A has property P, therefore all members of category C have property P"), the model can better identify and flag flawed reasoning in novel contexts.

* **The Outcome:** An LLM enhanced with "Fallacy Knowledge" transitions from being a passive text predictor to a more active, critical reasoner. It can move beyond simple entailment judgments to provide more nuanced and accurate analyses, such as identifying contradictions and naming the specific fallacy committed. This has significant implications for developing more reliable, trustworthy, and logically consistent AI systems for tasks like argument analysis, fact-checking, and educational tutoring.

The diagram ultimately advocates for the integration of symbolic logic and critical thinking frameworks into the training paradigms of large language models.
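
As a rough illustration of the "After" condition, the sketch below prepends fallacy patterns to a premise-hypothesis query. Supplying the knowledge through the prompt is an assumption made for illustration: the diagram does not say whether the knowledge is given by prompting, fine-tuning, or another mechanism. The function name `build_nli_prompt` and the prompt wording are hypothetical, and no model is called.

```python
from typing import Optional, Sequence

# Paraphrase of the first two patterns listed in the diagram.
FALLACY_PATTERNS = ("A has B -> C has B", "A in C")


def build_nli_prompt(
    premise: str,
    hypothesis: str,
    fallacy_patterns: Optional[Sequence[str]] = None,
) -> str:
    """Build a text prompt; include fallacy patterns only when they are given."""
    lines = []
    if fallacy_patterns:
        lines.append("Fallacy knowledge (invalid inference patterns):")
        lines.extend(f"- {pattern}" for pattern in fallacy_patterns)
        lines.append("")
    lines.append(f"Premise: {premise}")
    lines.append(f"Hypothesis: {hypothesis}")
    lines.append("Label the relationship and name any fallacy involved.")
    return "\n".join(lines)


premise = "My French colleague is very romantic."
hypothesis = "I think all French people are romantic."

prompt_before = build_nli_prompt(premise, hypothesis)
prompt_after = build_nli_prompt(premise, hypothesis, FALLACY_PATTERNS)
print(prompt_after)
```

The only difference between the two prompts is the knowledge block, which mirrors the diagram's claim that the same model and the same problem are evaluated under two conditions.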

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Logical Fallacy Analysis in LLM Reasoning

### Overview

The flowchart illustrates a logical reasoning process involving an LLM (Large Language Model) analyzing a premise and hypothesis about French people being romantic. It demonstrates how the model identifies and corrects a fallacy through structured knowledge application.

### Components/Axes

1. **Premise Box** (Top-left):

    - Text: "Premise: My French colleague is very romantic."

    - Color: Light blue

    - Position: Top-left quadrant

2. **Hypothesis Box** (Top-right):

    - Text: "Hypothesis: I think all French people are romantic."

    - Color: Light blue

    - Position: Top-right quadrant

    - Connection: Dashed arrow from Premise with red question mark

3. **Before Stage** (Bottom-left):

    - Box: Red dashed border

    - Text: "Before" (black header)

    - Content:

        - LLM robot icon (gray with red arms)

        - Text: "Entailment." (red)

        - Red X icon

    - Position: Bottom-left quadrant

4. **Fallacy Knowledge** (Center):

    - Box: Yellow background

    - Header: "Fallacy Knowledge" (brown)

    - Content:

        - Relationships:

            - "A has B" → "C has B" (crossed out)

            - "A in C" → "D in E" (crossed out)

            - "D in E" → "F in D" (crossed out)

        - Icon: Open book with lightbulb

    - Position: Center

5. **After Stage** (Bottom-right):

    - Box: Green dashed border

    - Text: "After" (black header)

    - Content:

        - LLM robot icon (gray with magnifying glass)

        - Text: "Contradiction ! This is Faulty Generalization." (green)

        - Green checkmark

    - Position: Bottom-right quadrant

6. **Flow Arrows**:

    - Dashed gray arrow from Premise to Hypothesis

    - Solid red arrow from Hypothesis to Fallacy Knowledge

    - Solid green arrow from Fallacy Knowledge to After stage

### Detailed Analysis

- **Premise to Hypothesis**: The initial premise ("My French colleague is romantic") leads to an overgeneralized hypothesis ("All French people are romantic") via a logical leap (question mark).

- **Before Stage**: The LLM initially applies "Entailment" (incorrectly assuming the premise directly supports the hypothesis), marked as wrong (red X).

- **Fallacy Knowledge**: The model accesses structured knowledge about logical relationships, revealing invalid inferences (crossed-out arrows).

- **After Stage**: The LLM corrects itself, identifying the fallacy as "Faulty Generalization" with a positive confirmation (green check).

### Key Observations

1. The red X/green check system visually distinguishes incorrect vs. corrected reasoning.

2. The Fallacy Knowledge section acts as a knowledge base for logical validation.

3. The crossed-out arrows in the Fallacy Knowledge box explicitly show invalidated inferences.

4. The LLM's icon evolves from a basic robot (Before) to an analytical version with a magnifying glass (After).

### Interpretation

This flowchart demonstrates how LLMs can:

1. Detect overgeneralization errors (from specific premise to universal hypothesis)

2. Utilize structured knowledge to identify logical fallacies

3. Self-correct through contradiction recognition

4. Differentiate between valid entailment and invalid generalization

The progression from red X to green check symbolizes the model's ability to refine its reasoning through knowledge integration. The specific fallacy identified ("Faulty Generalization") is an error of inductive reasoning: a universal claim about a group is drawn from a single observed member, which the premise alone cannot support.
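
The step from a specific premise to a universal hypothesis can be flagged with a very rough surface heuristic, sketched below. The marker list and the function names are hypothetical, and keyword matching is a stand-in chosen for brevity; it is not how the diagram's LLM is described as working, and it will miss or misfire on many real sentences.

```python
import re

# Hypothetical, deliberately small list of universal quantifier words.
UNIVERSAL_MARKERS = {"all", "every", "everyone", "always", "never", "nobody"}


def has_universal_quantifier(sentence: str) -> bool:
    """Return True if the sentence contains one of the marker words."""
    words = re.findall(r"[a-z']+", sentence.lower())
    return any(word in UNIVERSAL_MARKERS for word in words)


def looks_like_overgeneralization(premise: str, hypothesis: str) -> bool:
    """Flag a universal hypothesis that rests on a non-universal premise."""
    return has_universal_quantifier(hypothesis) and not has_universal_quantifier(premise)


print(looks_like_overgeneralization(
    "My French colleague is very romantic.",
    "I think all French people are romantic.",
))  # True
```

Swapping the two sentences returns `False`, since moving from a universal statement to a specific case is not a generalization.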
