## Flowchart: Logical Fallacy Analysis in LLM Reasoning

### Overview

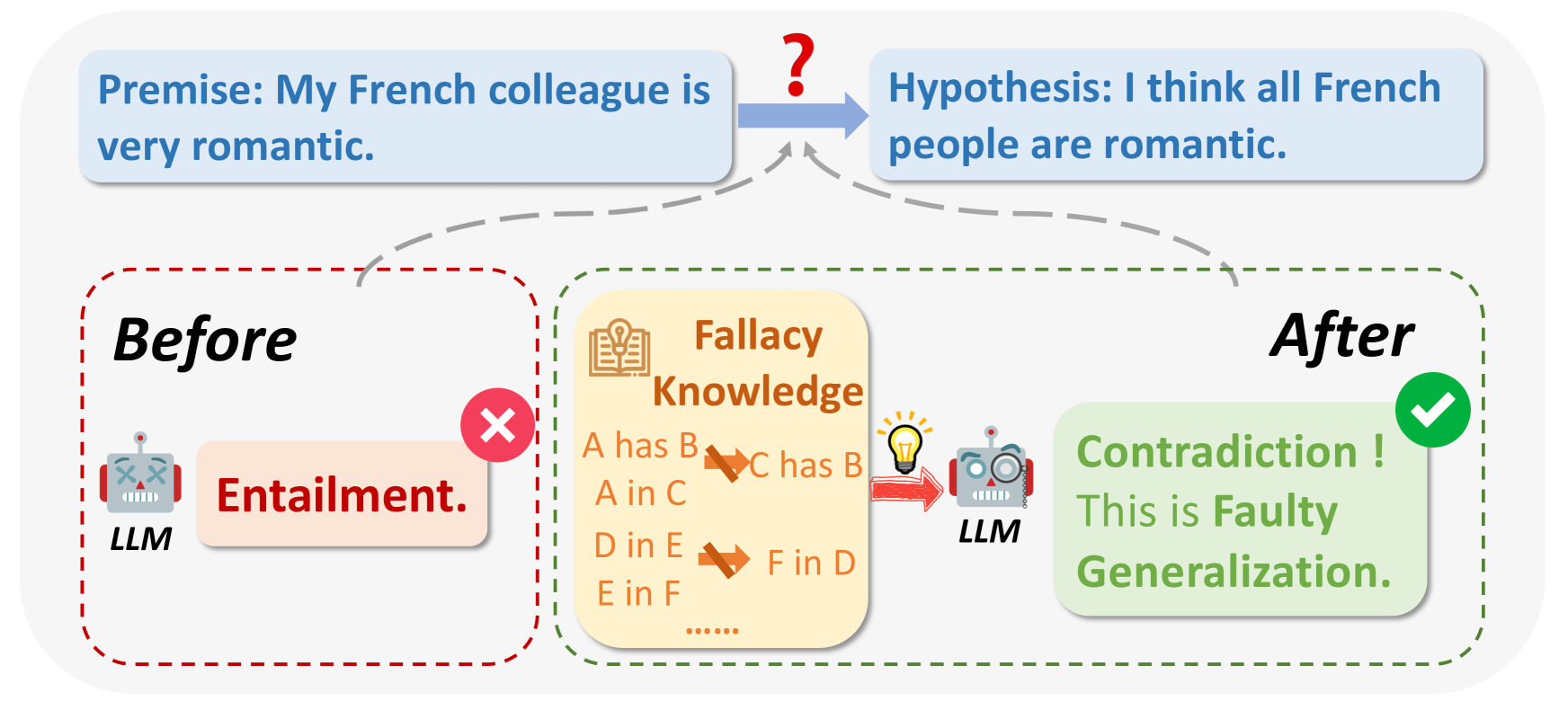

The flowchart shows an LLM (large language model) judging whether a premise about one French colleague supports a hypothesis about all French people. Before fallacy knowledge is supplied, the model labels the pair "Entailment"; afterwards, it labels the pair "Contradiction" and names the fallacy as Faulty Generalization.

### Components

1. **Premise Box** (Top-left):
   - Text: "Premise: My French colleague is very romantic."
   - Color: Light blue
   - Position: Top-left quadrant

2. **Hypothesis Box** (Top-right):
   - Text: "Hypothesis: I think all French people are romantic."
   - Color: Light blue
   - Position: Top-right quadrant
   - Connection: Dashed arrow from Premise with red question mark

3. **Before Stage** (Bottom-left):
   - Box: Red dashed border
   - Text: "Before" (black header)
   - Content:
     - LLM robot icon (gray with red arms)
     - Text: "Entailment." (red)
     - Red X icon
   - Position: Bottom-left quadrant

4. **Fallacy Knowledge** (Center):
   - Box: Yellow background
   - Header: "Fallacy Knowledge" (brown)
   - Content:
     - Relationships:
       - "A has B" → "C has B" (crossed out)
       - "A in C" → "D in E" (crossed out)
       - "D in E" → "F in D" (crossed out)
     - Icon: Open book with lightbulb
   - Position: Center

5. **After Stage** (Bottom-right):
   - Box: Green dashed border
   - Text: "After" (black header)
   - Content:
     - LLM robot icon (gray with magnifying glass)
     - Text: "Contradiction ! This is Faulty Generalization." (green)
     - Green checkmark
   - Position: Bottom-right quadrant

6. **Flow Arrows**:
   - Dashed gray arrow from Premise to Hypothesis
   - Solid red arrow from Hypothesis to Fallacy Knowledge
   - Solid green arrow from Fallacy Knowledge to After stage
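The three crossed-out relationships in the Fallacy Knowledge box can be read as schematic inference patterns that the model should reject. The figure does not say how this knowledge is stored, so the following is only a minimal sketch of one possible representation; `InvalidPattern`, `FALLACY_KNOWLEDGE`, and `is_known_invalid` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvalidPattern:
    """A schematic inference that the figure marks as invalid (crossed out)."""
    antecedent: str
    consequent: str


# Patterns transcribed from the Fallacy Knowledge box; the storage format is an assumption.
FALLACY_KNOWLEDGE = (
    InvalidPattern("A has B", "C has B"),
    InvalidPattern("A in C", "D in E"),
    InvalidPattern("D in E", "F in D"),
)


def is_known_invalid(antecedent: str, consequent: str) -> bool:
    """Return True if the schematic inference appears among the rejected patterns."""
    return InvalidPattern(antecedent, consequent) in FALLACY_KNOWLEDGE
```

Under this reading, the French-colleague example is closest to the first pattern: one entity (the colleague) has a property, and the hypothesis transfers that property to a different entity (all French people).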

### Detailed Analysis

- **Premise to Hypothesis**: The premise ("My French colleague is very romantic.") describes a single person, while the hypothesis ("I think all French people are romantic.") makes a claim about an entire group. The red question mark on the dashed arrow flags this step as an unjustified leap.

- **Before Stage**: The LLM initially labels the pair "Entailment", treating the premise as sufficient support for the hypothesis. The red X marks this label as wrong.

- **Fallacy Knowledge**: The model is given structured knowledge about invalid inference patterns, shown as crossed-out relationships such as "A has B" → "C has B".

- **After Stage**: With that knowledge, the LLM changes its label to "Contradiction" and names the fallacy as "Faulty Generalization". The green checkmark marks this answer as correct.
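The before/after contrast described above can be imitated with a toy, rule-based sketch. This is not the method behind the figure, which does not describe an implementation. The cue lists, the stopword set, the word-overlap baseline, the "Neutral" fallback label, and all function names below are assumptions made purely for illustration.

```python
import re
from typing import Optional, Set, Tuple

# Hypothetical cue lists; a real system would need far richer linguistic analysis.
UNIVERSAL_CUES = re.compile(r"\b(all|every|everyone|always)\b", re.IGNORECASE)
SINGLE_CASE_CUES = re.compile(r"\b(my|one|this)\b", re.IGNORECASE)
STOPWORDS = {"my", "is", "are", "i", "think", "all", "very"}

PREMISE = "My French colleague is very romantic."
HYPOTHESIS = "I think all French people are romantic."


def content_words(text: str) -> Set[str]:
    """Lowercased words with a few function words removed."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def label_before(premise: str, hypothesis: str) -> str:
    """Naive stand-in for the 'Before' stage: shared content words count as support."""
    shared = content_words(premise) & content_words(hypothesis)
    return "Entailment" if shared else "Neutral"


def is_faulty_generalization(premise: str, hypothesis: str) -> bool:
    """Flag a single-case premise paired with a universally quantified hypothesis."""
    return (
        SINGLE_CASE_CUES.search(premise) is not None
        and UNIVERSAL_CUES.search(premise) is None
        and UNIVERSAL_CUES.search(hypothesis) is not None
    )


def label_after(premise: str, hypothesis: str) -> Tuple[str, Optional[str]]:
    """Stand-in for the 'After' stage: apply the fallacy check before labeling."""
    if is_faulty_generalization(premise, hypothesis):
        return "Contradiction", "Faulty Generalization"
    return label_before(premise, hypothesis), None


print(label_before(PREMISE, HYPOTHESIS))  # Entailment
print(label_after(PREMISE, HYPOTHESIS))   # ('Contradiction', 'Faulty Generalization')
```

The baseline is fooled because the two sentences share the words "french" and "romantic"; the added check looks only at the shift from a single case ("my") to a universal quantifier ("all"), which is the pattern the figure calls Faulty Generalization.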

### Key Observations

1. The red X/green check system visually distinguishes incorrect vs. corrected reasoning.

2. The Fallacy Knowledge section acts as a knowledge base for logical validation.

3. The crossed-out arrows in the Fallacy Knowledge box explicitly show invalidated inferences.

4. The LLM's icon evolves from a basic robot (Before) to an analytical version with a magnifying glass (After).

### Interpretation

The flowchart suggests that, when given explicit fallacy knowledge, an LLM can:

1. Detect overgeneralization errors (a leap from a specific premise to a universal hypothesis)

2. Use structured knowledge to identify logical fallacies

3. Revise an initial "Entailment" label to "Contradiction"

4. Differentiate between valid entailment and invalid generalization

The progression from red X to green check represents the model's reasoning improving once fallacy knowledge is integrated. The fallacy identified, "Faulty Generalization", is an error of inductive reasoning: a universal claim about a group is drawn from a single observed member, so the premise does not support the hypothesis.