## Bar Chart: Accuracy Comparison of Language Models on Generation vs. Multiple-choice Tasks

### Overview

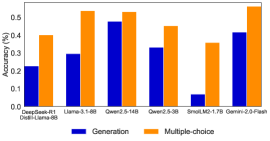

This image displays a bar chart comparing the accuracy of seven language models on two task types: "Generation" and "Multiple-choice". Each model is represented by a pair of bars, with blue indicating Generation accuracy and orange indicating Multiple-choice accuracy. The Y-axis is labeled "Accuracy (%)", although its tick values are fractions of 1 rather than percentages, and the X-axis lists the language models.

### Components/Axes

* **Chart Type**: Vertical Bar Chart.

* **Y-axis (Left)**:

* **Label**: "Accuracy (%)"

* **Scale**: Tick marks and major grid lines at 0.0, 0.1, 0.2, 0.3, 0.4, and 0.5. The tallest bars extend slightly above the 0.5 line.

* **X-axis (Bottom)**:

* **Label**: No explicit axis title; the tick labels are the names of the language models.

* **Categories (from left to right)**:

1. DeepSeek-R1

2. Distil-Llama-6B

3. Llama-3.1-8B

4. Qwen2.5-14B

5. Qwen2.5-3B

6. SnoLM2-1.7B

7. Gemini-2.0-Flash

* **Legend (Bottom-center)**:

* A blue square swatch labeled "Generation".

* An orange square swatch labeled "Multiple-choice".

### Detailed Analysis

The chart presents accuracy scores for each model on both Generation and Multiple-choice tasks. For every model, the orange bar (Multiple-choice) is higher than the blue bar (Generation). All values below are approximate readings of the bar heights.

1. **DeepSeek-R1**:

* **Generation (Blue)**: The bar reaches approximately 0.22.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.40.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.18.

2. **Distil-Llama-6B**:

* **Generation (Blue)**: The bar reaches approximately 0.29.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.53.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.24, the second-largest gap among all models.

3. **Llama-3.1-8B**:

* **Generation (Blue)**: The bar reaches approximately 0.48.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.53.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by only about 0.05, the smallest gap among all models.

4. **Qwen2.5-14B**:

* **Generation (Blue)**: The bar reaches approximately 0.33.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.45.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.12.

5. **Qwen2.5-3B**:

* **Generation (Blue)**: The bar reaches approximately 0.07.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.36.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.29, the largest absolute difference among all models.

6. **SnoLM2-1.7B**:

* **Generation (Blue)**: The bar reaches approximately 0.42.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.53.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.11.

7. **Gemini-2.0-Flash**:

* **Generation (Blue)**: The bar reaches approximately 0.42.

* **Multiple-choice (Orange)**: The bar reaches approximately 0.53.

* **Trend**: Multiple-choice accuracy exceeds Generation accuracy by about 0.11, the same gap as SnoLM2-1.7B.

### Key Observations

* **Consistent Pattern**: For all seven evaluated models, accuracy on multiple-choice tasks is higher than on generation tasks.

* **Highest Multiple-choice Accuracy**: Distil-Llama-6B, Llama-3.1-8B, SnoLM2-1.7B, and Gemini-2.0-Flash tie for the highest multiple-choice accuracy, at approximately 0.53.

* **Highest Generation Accuracy**: Llama-3.1-8B shows the highest generation accuracy at approximately 0.48.

* **Lowest Generation Accuracy**: Qwen2.5-3B exhibits the lowest generation accuracy at approximately 0.07.

* **Largest Performance Gap**: Qwen2.5-3B shows the largest difference between multiple-choice (0.36) and generation (0.07) accuracy, about 0.29.

* **Smallest Performance Gap**: Llama-3.1-8B has the narrowest gap between multiple-choice (0.53) and generation (0.48) accuracy, about 0.05.
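The observations above can be checked with a short calculation. The following is a minimal sketch, assuming the approximate bar heights listed in the Detailed Analysis; the names `scores` and `gaps` are hypothetical and chosen only for illustration, and the results are only as precise as the visual readings.

```python
# Approximate bar heights read from the chart (assumed, not exact data):
# model -> (generation accuracy, multiple-choice accuracy), as fractions of 1.
scores = {
    "DeepSeek-R1": (0.22, 0.40),
    "Distil-Llama-6B": (0.29, 0.53),
    "Llama-3.1-8B": (0.48, 0.53),
    "Qwen2.5-14B": (0.33, 0.45),
    "Qwen2.5-3B": (0.07, 0.36),
    "SnoLM2-1.7B": (0.42, 0.53),
    "Gemini-2.0-Flash": (0.42, 0.53),
}


def gaps(data):
    """Return {model: multiple-choice minus generation}, rounded to 2 decimals."""
    return {model: round(mc - gen, 2) for model, (gen, mc) in data.items()}


gap_by_model = gaps(scores)

# Models with the largest and smallest absolute gap.
largest_gap = max(gap_by_model, key=gap_by_model.get)
smallest_gap = min(gap_by_model, key=gap_by_model.get)

# Models with the highest and lowest generation accuracy.
best_generation = max(scores, key=lambda m: scores[m][0])
worst_generation = min(scores, key=lambda m: scores[m][0])

# All models tied at the top multiple-choice accuracy.
top_mc = max(mc for _, mc in scores.values())
top_mc_models = [m for m, (_, mc) in scores.items() if mc == top_mc]

for model, gap in gap_by_model.items():
    print(f"{model}: gap = {gap:.2f}")
print("Largest gap:", largest_gap)
print("Smallest gap:", smallest_gap)
```

Because the inputs are eyeballed from the chart, differences of one or two hundredths between models should not be treated as meaningful.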

### Interpretation

The data suggest a consistent trend across the seven evaluated models: each scores higher on tasks that require selecting from predefined options (multiple-choice) than on tasks that require free-form answers (generation). This could indicate that the models represented here are better at recognizing a correct answer among given choices than at producing an accurate response from scratch.

The size of the gap between generation and multiple-choice accuracy varies considerably across models. Llama-3.1-8B appears relatively balanced, scoring well in both categories with a gap of only about 0.05. In contrast, Qwen2.5-3B and Distil-Llama-6B show much larger gaps (about 0.29 and 0.24, respectively), with markedly lower generation accuracy. The chart alone does not explain these differences; they could stem from model size, architecture, training methodology, or training data that favors discriminative over generative ability.

From a practical standpoint, the chart implies that applications requiring high accuracy on generative tasks would need further improvement to close this gap, while tasks that only require selecting the best option are handled better by these models, although even the highest multiple-choice accuracy is only about 0.53. Note that the Y-axis is labeled "Accuracy (%)" but its values are fractions of 1, so a reading of 0.53 corresponds to 53% accuracy.
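The mismatch between the axis label and the tick values can be resolved by scaling each reading by 100. A minimal sketch, assuming the readings are fractions of 1; the helper name `to_percent` is hypothetical:

```python
def to_percent(fraction: float) -> str:
    """Format a 0-to-1 accuracy fraction as a whole-number percentage string."""
    return f"{fraction * 100:.0f}%"


# A few approximate readings from the chart (fractions of 1).
readings = [0.07, 0.29, 0.48, 0.53]
labels = [to_percent(r) for r in readings]
print(labels)  # ['7%', '29%', '48%', '53%']
```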