## Bar Chart: Model Accuracy Comparison

### Overview

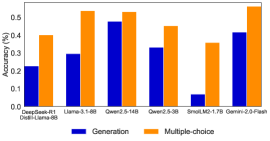

The image is a bar chart comparing the accuracy of different language models on two tasks: generation and multiple-choice. The chart displays the accuracy percentage for each model on each task, allowing for a direct comparison of their performance.

### Components/Axes

* **X-axis:** Lists the language models being compared:

* DeepGeek-R1 Distill-Llama-6B

* Llama-3.1-8B

* Qwen2.5-14B

* Qwen2.5-3B

* SmolLM2-1.7B

* Gemini-2.0-Flash

* **Y-axis:** Represents the accuracy percentage, ranging from 0.0% to 0.5%.

* **Legend:** Located at the bottom of the chart, indicating:

* Blue bars: "Generation" task

* Orange bars: "Multiple-choice" task

### Detailed Analysis

Here's a breakdown of the accuracy for each model on both tasks:

* **DeepGeek-R1 Distill-Llama-6B:**

* Generation: Approximately 0.23%

* Multiple-choice: Approximately 0.40%

* **Llama-3.1-8B:**

* Generation: Approximately 0.30%

* Multiple-choice: Approximately 0.52%

* **Qwen2.5-14B:**

* Generation: Approximately 0.48%

* Multiple-choice: Approximately 0.53%

* **Qwen2.5-3B:**

* Generation: Approximately 0.33%

* Multiple-choice: Approximately 0.45%

* **SmolLM2-1.7B:**

* Generation: Approximately 0.07%

* Multiple-choice: Approximately 0.36%

* **Gemini-2.0-Flash:**

* Generation: Approximately 0.42%

* Multiple-choice: Approximately 0.54%

**Trend Verification:**

* For all models, the "Multiple-choice" accuracy is higher than the "Generation" accuracy.

### Key Observations

* The "Multiple-choice" task consistently yields higher accuracy than the "Generation" task across all models.

* SmolLM2-1.7B has the lowest accuracy on both tasks compared to the other models.

* Gemini-2.0-Flash and Qwen2.5-14B show the highest accuracy on the "Multiple-choice" task.

### Interpretation

The data suggests that the language models perform better on multiple-choice tasks than on generation tasks. This could be due to the nature of the tasks; multiple-choice requires selecting from a set of predefined options, while generation requires creating novel text, which is generally more challenging. The significant difference in accuracy for SmolLM2-1.7B indicates that it may be less capable than the other models in both tasks. The high performance of Gemini-2.0-Flash and Qwen2.5-14B on the multiple-choice task suggests they are particularly well-suited for tasks involving selection and recognition.