
## Text Document: Analysis of Assistant-Checker-Reflection Dialogue

### Overview

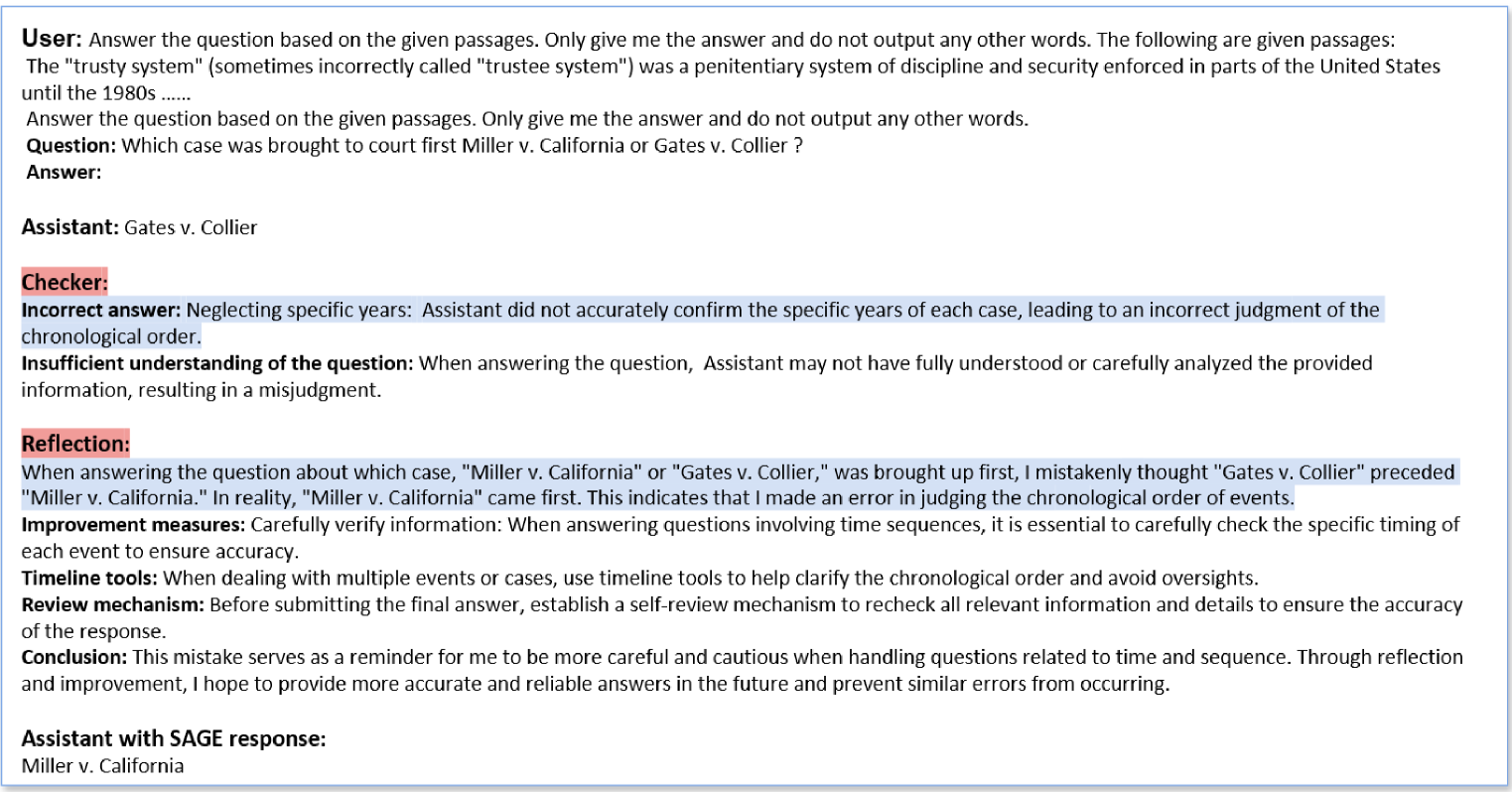

The image shows a text dialogue in five parts: a User question, an initial Assistant answer, a Checker's analysis, a Reflection, and a final response from an Assistant with SAGE. The question asks which of two court cases, "Miller v. California" or "Gates v. Collier," was brought to court first. The initial Assistant answers incorrectly. The Checker then analyzes that answer, and the Reflection explains the error and proposes improvement measures. Finally, the Assistant with SAGE gives the correct answer.

### Components

The document is structured into five distinct sections:

1. **User:** Presents the initial context and question.

2. **Assistant:** Provides the first, incorrect answer.

3. **Checker:** Analyzes the Assistant's response, identifying errors.

4. **Reflection:** Details the reasoning behind the error, proposes improvement measures, and concludes with a commitment to future accuracy.

5. **Assistant with SAGE response:** Provides the correct answer.

### Content Details

**User Input:**

"Answer the question based on the given passages. Only give me the answer and do not output any other words. The following are given passages: The "trusty system" (sometimes incorrectly called "trustee system") was a penitentiary system of discipline and security enforced in the United States until the 1980s ..... Answer the question based on the given passages. Only give me the answer and do not output any other words. Question: Which case was brought to court first Miller v. California or Gates v. Collier ?"

**Assistant Response:**

"Gates v. Collier"

**Checker Analysis:**

"Incorrect answer: Neglecting specific years: Assistant did not accurately confirm the specific years of each case, leading to an incorrect judgment of the chronological order. Insufficient understanding of the question: When answering the question, Assistant may not have fully understood or carefully analyzed the provided information, resulting in a misjudgment."

**Reflection:**

"When answering the question about which case, “Miller v. California” or “Gates v. Collier,” was brought up first, I mistakenly thought “Gates v. Collier” preceded “Miller v. California.” In reality, “Miller v. California” came first. This indicates that I made an error in judging the chronological order of events. Improvement measures: Verify carefully information: When answering involving time sequences, it is essential to carefully check the specific timing of each event to ensure accuracy. Timeline tools: When dealing with multiple events or cases, timeline tools to help clarify the chronological order and avoid oversights. Review mechanism: Before submitting the final answer, establish a self-review mechanism to recheck all relevant information and details to ensure the accuracy of the response. Conclusion: This mistake serves as a reminder for me to be more careful and cautious when handling questions related to time and sequence. Through reflection and improvement, I hope to provide more accurate and reliable answers in the future and prevent similar errors from occurring."
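The "Timeline tools" and "Review mechanism" measures in the Reflection can be illustrated with a small sketch. It assumes the relevant years have already been extracted from the passages. The `Event` type, the `earliest` and `review` functions, and the case names and years in the usage lines are hypothetical placeholders, not data from the figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Hypothetical timeline entry: an event name and the year the passages give for it."""
    name: str
    year: int


def earliest(events: list[Event]) -> Event:
    """Timeline tool: sort events by year and return the first one."""
    if not events:
        raise ValueError("no events to compare")
    timeline = sorted(events, key=lambda e: e.year)
    # Refuse to guess when the two earliest entries share a year.
    if len(timeline) > 1 and timeline[0].year == timeline[1].year:
        raise ValueError("tie: the years alone cannot decide the order")
    return timeline[0]


def review(answer: str, events: list[Event]) -> bool:
    """Self-review step: recheck a drafted answer against the timeline before submitting."""
    return answer == earliest(events).name


# Usage with placeholder names and years (not the real cases).
events = [Event("Case A", 1975), Event("Case B", 1970)]
print(earliest(events).name)     # Case B
print(review("Case A", events))  # False
```

Raising on a tie, rather than picking one arbitrarily, keeps the review step from approving an answer the dates cannot actually support.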

**Assistant with SAGE Response:**

"Miller v. California"

### Key Observations

* The initial Assistant failed to correctly identify the chronological order of the two court cases.

* The Checker accurately pinpointed the error as a failure to verify specific dates and a potential misunderstanding of the question.

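* The Reflection correctly states that "Miller v. California" came first and proposes three concrete improvement measures: carefully verifying the timing of each event, using timeline tools, and adding a self-review step before answering.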

* The Assistant with SAGE gives the correct answer. This is consistent with the improvement measures having been applied, although the figure shows only the final answer, not the reasoning behind it.

### Interpretation

This document illustrates a three-step process for improving an AI system's answers: error detection, analysis, and correction. The Checker detects the error and names its likely causes, and the Reflection turns that diagnosis into concrete measures for avoiding the same mistake. The dialogue therefore stresses understanding *why* an answer is wrong, not only producing an answer. Here, the root cause identified is a failure to check the specific year of each case. The figure labels the final response "Assistant with SAGE" but does not explain what SAGE adds; it shows only that this configuration returns the correct answer. The emphasis on timeline tools and review mechanisms points to temporal reasoning and self-verification as the capabilities this process aims to strengthen.
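The detect, analyze, and correct cycle can be sketched as a simple retry loop. This is a minimal sketch under stated assumptions: the stage names `answerer`, `checker`, and `reflector`, their signatures, and the `max_rounds` limit are hypothetical and are not taken from the figure or from SAGE. The toy stubs also give the checker a reference answer, which a real checker would not have.

```python
from typing import Callable, Optional

# Hypothetical stage signatures; the figure does not specify SAGE's interfaces.
Answerer = Callable[[str, Optional[str]], str]  # (question, reflection) -> answer
Checker = Callable[[str, str], Optional[str]]   # (question, answer) -> error report, or None if accepted
Reflector = Callable[[str, str, str], str]      # (question, answer, report) -> reflection text


def answer_with_reflection(
    question: str,
    answerer: Answerer,
    checker: Checker,
    reflector: Reflector,
    max_rounds: int = 2,
) -> str:
    """Answer, check, reflect on any reported error, and retry up to max_rounds times."""
    reflection: Optional[str] = None
    answer = answerer(question, reflection)
    for _ in range(max_rounds):
        report = checker(question, answer)
        if report is None:  # the checker accepts the answer
            break
        # Turn the checker's report into a reflection and answer again with it.
        reflection = reflector(question, answer, report)
        answer = answerer(question, reflection)
    # If every round reports an error, the last retry is returned without a further check.
    return answer


# Toy stubs (hypothetical): the model errs without feedback and corrects itself with it.
def toy_answerer(question: str, reflection: Optional[str]) -> str:
    return "Option A" if reflection else "Option B"


def toy_checker(question: str, answer: str) -> Optional[str]:
    return None if answer == "Option A" else "Incorrect answer: specific years not verified."


def toy_reflector(question: str, answer: str, report: str) -> str:
    return f"I answered {answer!r}, but: {report} Check each event's date first."


print(answer_with_reflection("Which came first?", toy_answerer, toy_checker, toy_reflector))
# Option A
```

Capping the number of rounds keeps a checker that never accepts an answer from looping forever.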