## Diagram: Data Pipeline for Software Engineering Task Instance Generation

### Overview

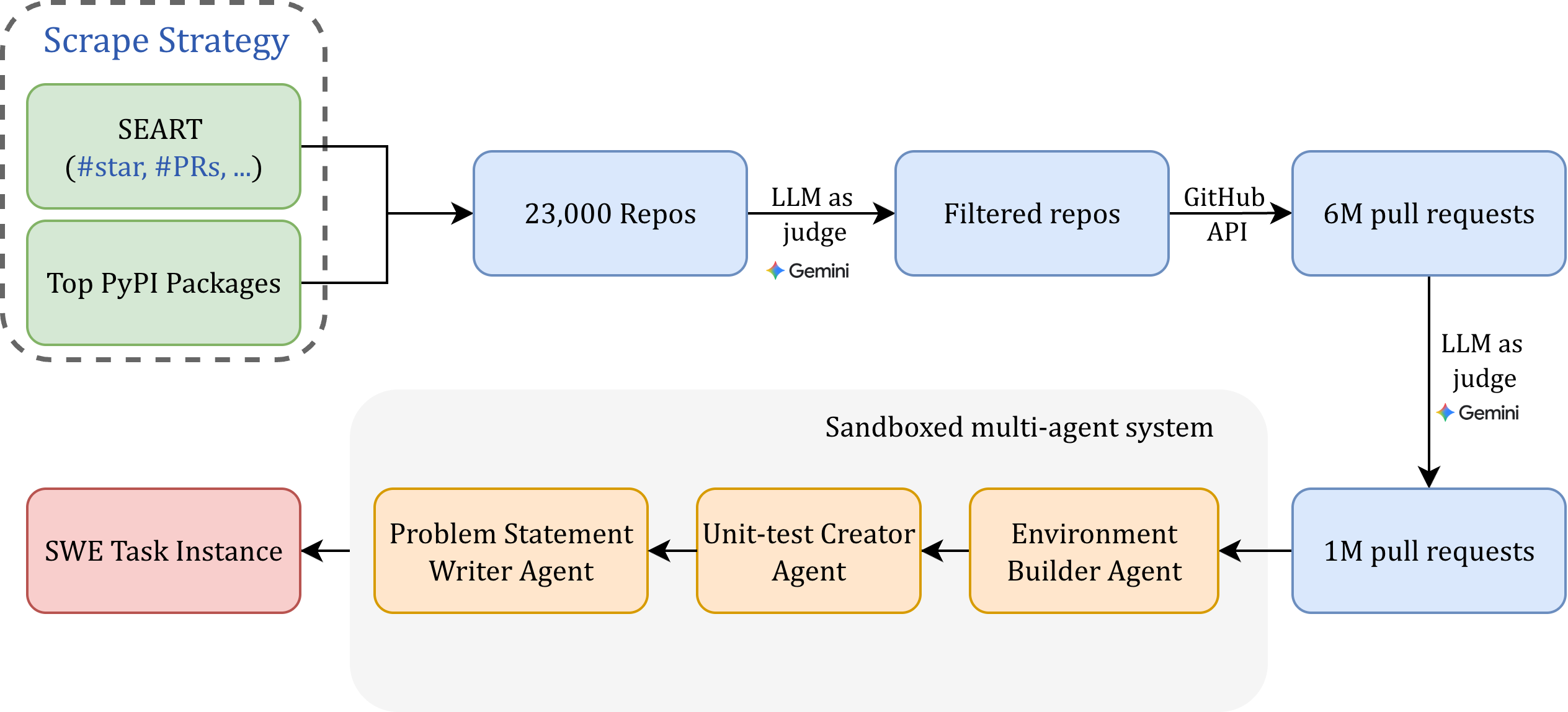

This image is a flowchart of a multi-stage data pipeline that collects, filters, and processes software repository data into standardized "SWE Task Instances." The process begins with a two-source scraping strategy, applies two filtering steps that use a Large Language Model (LLM) as judge, and culminates in a sandboxed multi-agent system that constructs the final task instances.

### Components/Axes

The diagram is organized into three main visual regions:

1. **Top-Left (Scrape Strategy):** A dashed-line box containing two green rounded rectangles.

2. **Main Pipeline (Center/Right):** A series of blue rounded rectangles connected by arrows, representing data flow and transformation stages.

3. **Bottom (Sandboxed multi-agent system):** A light gray rounded rectangle containing three orange/yellow rounded rectangles, which feed into a final pink rectangle.

**Labels and Text Elements:**

* **Scrape Strategy** (Title, top-left)

* **SEART (#star, #PRs, ...)** (Green box, top-left)

* **Top PyPI Packages** (Green box, bottom-left)

* **23,000 Repos** (Blue box, center-left)

* **LLM as judge** (Text on arrow, with a small multi-colored star logo labeled "Gemini")

* **Filtered repos** (Blue box, center)

* **GitHub API** (Text on arrow)

* **6M pull requests** (Blue box, top-right)

* **LLM as judge** (Text on arrow, with a small multi-colored star logo labeled "Gemini")

* **1M pull requests** (Blue box, bottom-right)

* **Sandboxed multi-agent system** (Title, bottom-center)

* **Environment Builder Agent** (Orange box, rightmost in the sandbox)

* **Unit-test Creator Agent** (Orange box, center in the sandbox)

* **Problem Statement Writer Agent** (Orange box, leftmost in the sandbox)

* **SWE Task Instance** (Pink box, bottom-left)

### Detailed Analysis

The pipeline flow is as follows:

1. **Data Source Identification (Scrape Strategy):**

* Two primary sources are targeted: repositories identified via **SEART** (using metrics like star count and pull request count) and **Top PyPI Packages**.

2. **Initial Repository Collection:**

* These sources yield an initial set of **23,000 Repos**.

3. **First Filtering Stage:**

* The 23,000 repositories are processed by an **"LLM as judge"** (specifically identified as **Gemini** by the logo).

* This results in a set of **Filtered repos**.

4. **Pull Request Extraction:**

* Using the **GitHub API**, the system extracts pull requests from the filtered repositories.

* This yields a dataset of **6M (6 million) pull requests**.

5. **Second Filtering Stage:**

* The 6 million pull requests undergo another round of filtering by an **"LLM as judge"** (again, **Gemini**).

* This reduces the dataset about sixfold, to **1M (1 million) pull requests**.

6. **Task Instance Construction (Sandboxed multi-agent system):**

* The 1 million filtered pull requests are input into a **Sandboxed multi-agent system**.

* This system consists of three specialized agents operating in sequence (right-to-left flow):

    * **Environment Builder Agent:** Likely sets up the code environment for the task.

    * **Unit-test Creator Agent:** Generates or validates unit tests related to the pull request.

    * **Problem Statement Writer Agent:** Formulates a clear problem description based on the code change.

* The final output of this multi-agent system is a **SWE Task Instance**.
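The flow above can be sketched in Python as a chain of plain functions. This is a minimal illustration under stated assumptions, not the pipeline's actual implementation: every name here (`TaskDraft`, `merge_sources`, `judge_filter`, `run_agents`, `build_task_instances`) is hypothetical, the two judges are ordinary callables standing in for the Gemini "LLM as judge" steps, and `fetch_prs` stands in for the GitHub API call.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional


@dataclass
class TaskDraft:
    """Hypothetical record that the three agents fill in one after another."""
    pull_request: str
    environment: Optional[str] = None
    unit_tests: Optional[str] = None
    problem_statement: Optional[str] = None


def merge_sources(seart: Iterable[str], pypi: Iterable[str]) -> List[str]:
    """Combine both scrape sources, dropping duplicates but keeping order."""
    return list(dict.fromkeys([*seart, *pypi]))


def judge_filter(items: Iterable[str], judge: Callable[[str], bool]) -> List[str]:
    """Keep the items the judge accepts; `judge` stands in for the LLM call."""
    return [item for item in items if judge(item)]


def run_agents(pull_request: str,
               agents: List[Callable[[TaskDraft], TaskDraft]]) -> TaskDraft:
    """Pass one pull request through the agents in the given order."""
    draft = TaskDraft(pull_request=pull_request)
    for agent in agents:
        draft = agent(draft)
    return draft


def build_task_instances(seart, pypi, repo_judge, fetch_prs, pr_judge, agents):
    """Compose the stages: scrape -> judge -> fetch PRs -> judge -> agents."""
    repos = judge_filter(merge_sources(seart, pypi), repo_judge)
    pull_requests = [pr for repo in repos for pr in fetch_prs(repo)]
    kept = judge_filter(pull_requests, pr_judge)
    return [run_agents(pr, agents) for pr in kept]


# Toy stand-ins for the three agents, in the order the diagram shows.
def environment_builder(draft: TaskDraft) -> TaskDraft:
    draft.environment = f"env for {draft.pull_request}"
    return draft


def unit_test_creator(draft: TaskDraft) -> TaskDraft:
    draft.unit_tests = f"tests for {draft.pull_request}"
    return draft


def problem_statement_writer(draft: TaskDraft) -> TaskDraft:
    draft.problem_statement = f"statement for {draft.pull_request}"
    return draft


AGENTS = [environment_builder, unit_test_creator, problem_statement_writer]

# Toy run: all inputs and both judges are made up for illustration.
instances = build_task_instances(
    seart=["org/a", "org/b"],
    pypi=["org/b", "org/c"],
    repo_judge=lambda repo: repo != "org/c",
    fetch_prs=lambda repo: [f"{repo}#1", f"{repo}#2"],
    pr_judge=lambda pr: pr.endswith("#1"),
    agents=AGENTS,
)
```

Passing the judges, the fetcher, and the agents in as callables keeps each stage replaceable, which mirrors how the diagram treats them as separate boxes and labeled arrows.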

### Key Observations

* **Funnel Effect:** The pipeline first expands and then narrows: 23,000 repos yield 6M PRs, which the second LLM judge filters down to 1M PRs (roughly one in six retained) for final processing. The size of the intermediate "Filtered repos" set is not given.

* **LLM-Centric Filtering:** The core filtering mechanism at two critical stages is an LLM (Gemini) acting as a judge, suggesting automated quality or relevance assessment.

* **Modular Agent Design:** The final construction phase uses a specialized, multi-agent architecture where each agent has a distinct responsibility (environment, tests, description).

* **Spatial Flow:** The data processing pipeline flows left to right, then feeds into a right-to-left flow within the sandboxed system, so the overall path doubles back and ends at the final output on the left.
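The ratios implied by the diagram's three figures can be worked out directly. The sketch below uses only the labels shown (23,000 repos, 6M and 1M pull requests), which are presumably rounded, so the results are approximate. The variable names are illustrative. Because the number of filtered repos is not given, the per-repo average is only a lower bound: the 6M pull requests come from at most 23,000 repositories.

```python
REPOS_SCRAPED = 23_000       # "23,000 Repos" box
PRS_EXTRACTED = 6_000_000    # "6M pull requests" box
PRS_KEPT = 1_000_000         # "1M pull requests" box

# Share of pull requests that survive the second LLM-judge stage.
retention = PRS_KEPT / PRS_EXTRACTED

# How many times smaller the pull request set becomes.
reduction_factor = PRS_EXTRACTED / PRS_KEPT

# Lower bound on the average number of pull requests per filtered repo,
# since the filtered set cannot contain more than the 23,000 scraped repos.
min_avg_prs_per_repo = PRS_EXTRACTED / REPOS_SCRAPED

print(f"retention: {retention:.1%}")                                # 16.7%
print(f"reduction factor: {reduction_factor:.0f}x")                 # 6x
print(f"PRs per repo (lower bound): {min_avg_prs_per_repo:.0f}")    # 261
```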

### Interpretation

This diagram outlines a sophisticated, automated pipeline for creating a large-scale benchmark or training dataset for software engineering AI agents. The process is designed to curate high-quality, real-world coding tasks from open-source repositories.

* **Purpose:** The system aims to solve the problem of obtaining realistic, well-defined software engineering tasks at scale. Manually creating such tasks is prohibitively expensive.

* **Methodology:** It uses existing, popular code repositories (via SEART and PyPI) as a source of authentic code changes (pull requests). The two LLM filtering stages select which repositories and pull requests proceed; the diagram does not state the criteria, but they likely target properties such as clarity and self-containment.

* **Significance:** The final "SWE Task Instance" is the key product. Each instance likely includes a codebase state, a problem description, and a test suite, providing a complete environment for an AI to practice or be evaluated on software engineering skills. The scale (1M processed PRs) suggests an ambition to create a very comprehensive dataset.

* **Notable Design Choice:** The use of a "sandboxed" multi-agent system for the final step implies that constructing a valid task instance is complex and requires isolated, controlled steps to avoid interference and ensure reliability.
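To make the "Significance" point concrete, here is one way a task instance could be represented and stored. The diagram does not specify a schema, so this is an assumption throughout: the class name `SWETaskInstance`, its four fields, and the one-JSON-object-per-line storage format are all hypothetical, chosen only to mirror the three likely parts named above (codebase state, problem description, test suite).

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class SWETaskInstance:
    """Hypothetical schema; field names are illustrative, not from the diagram."""
    repo: str                # repository the pull request came from
    base_commit: str         # codebase state before the change
    problem_statement: str   # description of the task to solve
    test_files: List[str]    # tests that check a proposed solution

    def to_json_line(self) -> str:
        """Serialize to a single JSON line with a stable key order."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json_line(cls, line: str) -> "SWETaskInstance":
        """Rebuild an instance from a line produced by to_json_line."""
        return cls(**json.loads(line))


# Made-up example values, for illustration only.
example = SWETaskInstance(
    repo="org/project",
    base_commit="abc123",
    problem_statement="Fix the off-by-one error in pagination.",
    test_files=["tests/test_pagination.py"],
)
restored = SWETaskInstance.from_json_line(example.to_json_line())
```

A frozen dataclass is used so that a finished instance is not reassigned field by field after the agents have produced it; that is a design preference of this sketch, not something the diagram prescribes.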