
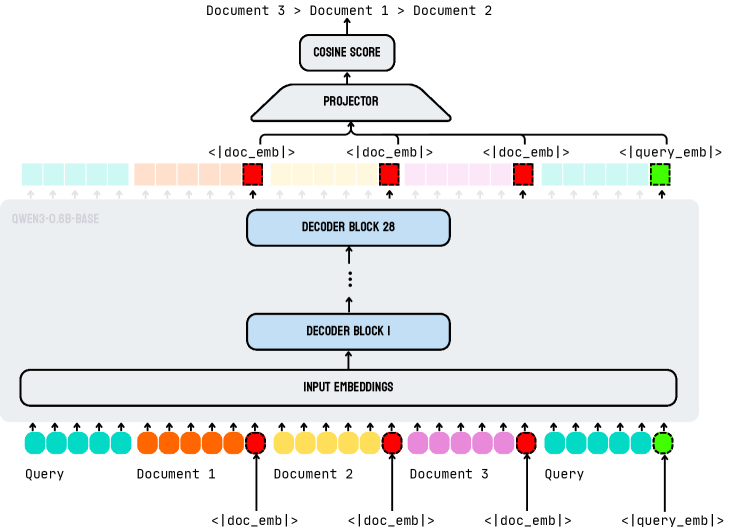

## Diagram: Document Ranking Model Architecture

### Overview

The diagram shows the architecture of a document ranking model. The model takes a query and several documents as input, processes them through a stack of decoder blocks, and outputs a cosine score for each document that represents its relevance to the query. Information flows from the input embeddings through the decoder blocks to a projection layer and then to the cosine score calculation.
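For reference, the cosine score between a query vector and a document vector is the standard cosine similarity. The symbols $\mathbf{q}$ (query vector) and $\mathbf{d}_i$ (vector of document $i$) are notation assumed here for illustration; they are not labels from the diagram:

$$
\operatorname{score}(\mathbf{q}, \mathbf{d}_i) = \frac{\mathbf{q} \cdot \mathbf{d}_i}{\lVert \mathbf{q} \rVert \, \lVert \mathbf{d}_i \rVert}
$$

The value lies in $[-1, 1]$, and documents are ranked from the highest score to the lowest.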

### Components

The diagram is structured into several key components:

* **Input Embeddings:** Represented as a horizontal block at the bottom, containing embeddings for a "Query", "Document 1", "Document 2", and "Document 3", repeated twice. Each embedding is visually represented by a series of colored circles with upward-pointing arrows.

* **Decoder Blocks:** A stack of rectangular blocks labeled "DECODER BLOCK 1" and "DECODER BLOCK 28" (with an ellipsis indicating intermediate blocks). These blocks process the input embeddings.

* **Projector:** A triangular block labeled "PROJECTOR" that receives output from the decoder blocks.

* **Cosine Score:** A triangular block labeled "COSINE SCORE" at the top, representing the final output of the model.

* **Labels:** "<doc_emb>" and "<query_emb>" are used to label the embeddings.

* **Model Base:** "QWEN3-0.6B-BASE" is labeled at the top left.

* **Document Ranking:** "Document 3 > Document 1 > Document 2" is labeled at the top.

### Detailed Analysis

The diagram illustrates the following flow:

1. **Input:** The process begins with input embeddings for a query and three documents. The query embeddings are represented by teal circles, Document 1 by orange, Document 2 by yellow, and Document 3 by magenta.

2. **Embedding Layer:** The query and document inputs enter the model through the "INPUT EMBEDDINGS" layer.

3. **Decoder Blocks:** The embeddings are then passed through a series of decoder blocks, starting with "DECODER BLOCK 1" and progressing to "DECODER BLOCK 28". The ellipsis indicates that there are multiple intermediate decoder blocks.

4. **Projection:** The output of the decoder blocks is fed into the "PROJECTOR" layer.

5. **Cosine Score:** Finally, the output of the projector is used to calculate the "COSINE SCORE". The diagram shows arrows indicating that Document 3 has the highest score, followed by Document 1, and then Document 2.

6. **Embedding Representation:** Each document and query is represented by a sequence of colored circles, each with an upward-pointing arrow. The number of circles in each sequence appears to be approximately 8-10.

7. **Embedding Labels:** The embeddings are labeled as "<doc_emb>" for document embeddings and "<query_emb>" for query embeddings.
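The final two steps of the flow above (cosine scoring, then ranking) can be sketched as follows. This is a minimal illustration, not the model's implementation: the function names `cosine` and `rank_documents` are hypothetical, and the three-dimensional vectors are made-up stand-ins for projector outputs, chosen so that the result matches the ranking shown in the diagram.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_documents(query_vec, doc_vecs):
    """Return (name, score) pairs sorted from most to least relevant."""
    scores = {name: cosine(query_vec, vec) for name, vec in doc_vecs.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical projector outputs; real vectors would come from the model.
query = [1.0, 0.0, 0.0]
docs = {
    "Document 1": [1.0, 1.0, 0.0],
    "Document 2": [0.0, 1.0, 0.0],
    "Document 3": [2.0, 0.1, 0.0],
}

ranking = rank_documents(query, docs)
print(" > ".join(name for name, _ in ranking))
```

Because cosine similarity ignores vector length, "Document 3" ranks first here because of its direction relative to the query, not because of its larger magnitude.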

### Key Observations

* The diagram emphasizes the sequential processing of information through the decoder blocks.

* The "PROJECTOR" layer sits between the final decoder block and the cosine score, so it presumably maps the decoder output into the vector space in which cosine similarity is computed.

* The diagram visually indicates that Document 3 is ranked highest, followed by Document 1, and then Document 2, based on their cosine scores.

* The model is based on "QWEN3-0.6B-BASE".
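The diagram does not say how the projector is implemented. As a sketch under the assumption that it is a single linear map (a common choice, but only an assumption here), the path from decoder output to cosine score could look like this. The names `project`, `l2_normalize`, and `cosine_score`, the matrix `W`, and the hidden-state values are all hypothetical.

```python
import math


def project(weights, hidden):
    """Apply a linear map: one output value per row of `weights`."""
    return [sum(w * h for w, h in zip(row, hidden)) for row in weights]


def l2_normalize(vec):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cosine_score(weights, query_hidden, doc_hidden):
    """Project both hidden states, normalize them, and take the dot product."""
    q = l2_normalize(project(weights, query_hidden))
    d = l2_normalize(project(weights, doc_hidden))
    return sum(a * b for a, b in zip(q, d))


# Hypothetical 2x4 projection matrix that keeps only the first two dimensions.
W = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
]

# Hypothetical decoder outputs that differ strongly in the dropped dimensions.
query_hidden = [1.0, 0.0, 5.0, -3.0]
doc_hidden = [2.0, 0.0, -7.0, 4.0]

score = cosine_score(W, query_hidden, doc_hidden)
print(round(score, 6))
```

The two hidden states point in different directions in the full four-dimensional space, yet they are perfectly aligned after projection. This illustrates why the projector matters: it determines which parts of the decoder output contribute to the score.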

### Interpretation

The diagram shows a document ranking model built on a transformer with a stack of 28 decoder blocks. Queries and documents enter as input embeddings, the decoder blocks turn them into contextual representations, and the projector maps those representations into a space where cosine similarity measures how relevant each document is to the query. The ranking "Document 3 > Document 1 > Document 2" is the example output: Document 3 receives the highest cosine score, followed by Document 1 and then Document 2. The "QWEN3-0.6B-BASE" label suggests that the model is built on a pre-trained base language model with 0.6 billion parameters. The diagram is a high-level overview and gives no implementation details for the decoder blocks or the projection layer.