## Diagram: Neural Network Architecture for Document Retrieval

### Overview

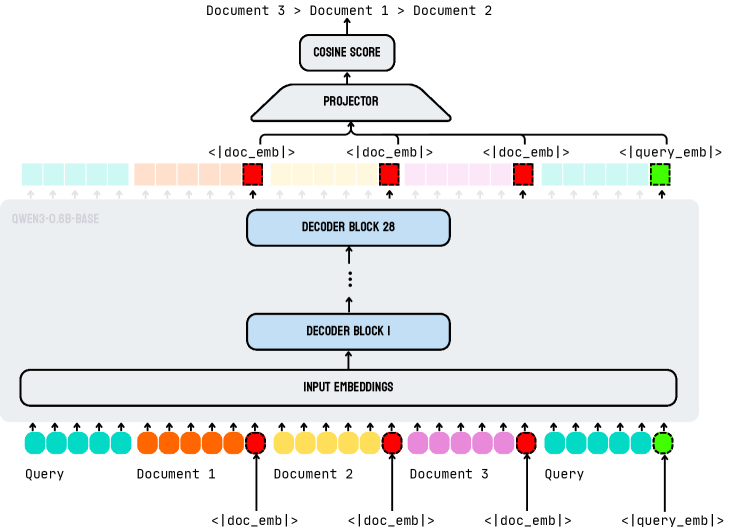

The diagram illustrates a neural network architecture for document retrieval (semantic search). Data flows from input embeddings through a stack of decoder blocks and a projector to a cosine score. Embeddings are color-coded so that the query and each document can be followed through the layers, and arrows connect the components; this description reads those arrows as data flow and attention.

### Components

1. **Input Embeddings Layer**:

- Contains color-coded embeddings for:

  - Query (cyan)

  - Document 1 (orange)

  - Document 2 (yellow)

  - Document 3 (pink)

- Legend at bottom shows color coding for each element

2. **Decoder Blocks**:

- Two blocks are drawn; the numbering implies they are the first and last of a 28-block stack:

  - Decoder Block 1 (bottom)

  - Decoder Block 28 (top)

- Arrows indicate sequential processing flow

3. **Projector**:

- Intermediate layer between decoder blocks and cosine score

- Presumably a learned feature transformation, possibly with dimensionality reduction

4. **Cosine Score**:

- Final output at top of architecture

- Represents similarity between query and documents

5. **Attention Mechanism**:

- Visualized by upward-pointing arrows between components

- Read here as attention between query and document embeddings, although arrows between components may simply denote data flow
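
The component list above can be sketched as a toy forward pass. This is a minimal illustration under stated assumptions, not the architecture's actual implementation: only the block count (28) and the four color-coded inputs come from the diagram. The hidden width, the projector width, the token order, mean pooling per segment, and the attention-free `toy_block` placeholder are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

NUM_BLOCKS = 28   # from the "Decoder Block 1" ... "Decoder Block 28" labels
HIDDEN = 16       # hypothetical hidden width
PROJ = 8          # hypothetical projector output width

# Segment id per token, mirroring the color legend (0 = query, 1..3 = documents).
# The token order and segment lengths are assumptions.
segments = np.array([1, 1, 2, 2, 2, 3, 3, 0, 0])

def toy_block(h, w):
    """Placeholder for one decoder block: a residual update, with no real attention."""
    return h + 0.1 * np.tanh(h @ w)

def run_pipeline(embeddings, block_weights, projector, segments):
    h = embeddings
    for w in block_weights:                      # Decoder Block 1 ... Decoder Block 28
        h = toy_block(h, w)
    # One vector per segment via mean pooling (an assumption, not shown in the diagram).
    pooled = np.stack([h[segments == s].mean(axis=0) for s in range(4)])
    z = pooled @ projector                       # projector modeled as one linear map
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    return z[1:] @ z[0]                          # cosine score of each document vs. the query

embeddings = rng.normal(size=(len(segments), HIDDEN))
block_weights = [rng.normal(size=(HIDDEN, HIDDEN)) / np.sqrt(HIDDEN)
                 for _ in range(NUM_BLOCKS)]
projector = rng.normal(size=(HIDDEN, PROJ))

scores = run_pipeline(embeddings, block_weights, projector, segments)
print(scores)  # three values in [-1, 1], one per document
```

The sketch only fixes the order of the stages (embeddings, decoder stack, projector, cosine score); a real decoder block would contain self-attention and a feed-forward layer.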

### Detailed Analysis

- **Input Embeddings**:

- Each document/query has distinct color coding

- Multiple arrows lead from the embeddings into the decoder stack; these may stand for multiple attention heads, though that is an inference from the drawing

- **Decoder Blocks**:

- Block 1 processes the initial embeddings

- Block 28 is the final block and outputs the most contextualized representations

- The blocks are stacked vertically to show sequential processing

- **Projector**:

- Transforms decoder output into a space suitable for cosine similarity

- Positioned between decoder blocks and cosine score

- **Cosine Score**:

- Final output indicates document-query relevance

- Calculated using query/document embeddings from projector
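
The scoring step can be illustrated with a short sketch. The function name `cosine_scores`, the `eps` guard, and the example vectors are hypothetical; only the use of cosine similarity between query and document vectors comes from the diagram. The example also shows why the projector only needs to get directions right: cosine similarity ignores vector length.

```python
import numpy as np

def cosine_scores(query_vec, doc_vecs, eps=1e-12):
    """Cosine similarity between one query vector and each row of doc_vecs."""
    q = query_vec / max(np.linalg.norm(query_vec), eps)
    norms = np.maximum(np.linalg.norm(doc_vecs, axis=1, keepdims=True), eps)
    return (doc_vecs / norms) @ q

query = np.array([1.0, 0.0])
docs = np.array([
    [2.0, 0.0],    # same direction as the query  -> score 1
    [0.0, 3.0],    # orthogonal to the query      -> score 0
    [-1.0, 0.0],   # opposite direction           -> score -1
])

scores = cosine_scores(query, docs)
rescaled = cosine_scores(10.0 * query, 0.5 * docs)  # rescaling leaves the scores unchanged
```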

### Key Observations

1. **Color-Coded Tracking**:

- Cyan (query) and orange/yellow/pink (documents) keep their colors through all layers

- Each output can therefore be traced back to the query or to a specific document

2. **Multi-Layer Processing**:

- The numbering from Block 1 to Block 28 indicates a deep stack of 28 decoder blocks

- The stack sits between the input embeddings and the projector and refines the representations layer by layer

3. **Attention Flow**:

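- The arrows are drawn pointing upward, so the diagram alone does not establish whether attention between query and documents is bidirectional

- If the documents share a context with the query, attention would also allow cross-document comparison during processing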

4. **Dimensionality Reduction**:

- Projector layer suggests feature compression before final scoring
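
To make the attention-flow question concrete, the sketch below compares two possible masks over a shared sequence. Everything here is an assumption for illustration: the diagram does not state the mask type or the token order. Decoder blocks in language models conventionally use a causal mask, under which the direction of visibility depends on where the query sits in the sequence.

```python
import numpy as np

# Hypothetical token order: documents first, query last (0 = query, 1..3 = documents).
segments = np.array([1, 1, 2, 2, 3, 3, 0, 0])
n = len(segments)

causal = np.tril(np.ones((n, n), dtype=bool))  # token i may attend to tokens j <= i
full = np.ones((n, n), dtype=bool)             # every token may attend to every token

def segment_visibility(mask, segments, src, dst):
    """True if any token of segment `src` may attend to any token of segment `dst`."""
    return bool(mask[np.ix_(segments == src, segments == dst)].any())

# Causal mask, query last: the query sees the documents, but not the reverse.
query_sees_doc1 = segment_visibility(causal, segments, 0, 1)   # True
doc1_sees_query = segment_visibility(causal, segments, 1, 0)   # False
# Later documents see earlier ones, which permits one-way cross-document comparison.
doc2_sees_doc1 = segment_visibility(causal, segments, 2, 1)    # True
doc1_sees_doc2 = segment_visibility(causal, segments, 1, 2)    # False
```

With the `full` mask every pair is visible in both directions, which is what a truly bidirectional reading of the arrows would require.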

### Interpretation

This architecture demonstrates a transformer-based approach to document retrieval:

1. **Input Processing**:

- The query and each document are embedded as separate, identifiable inputs (distinguished by color in the diagram)

- Each input remains identifiable through all processing layers

2. **Contextual Understanding**:

- 28 decoder blocks enable deep contextual analysis

- Progressive refinement from raw embeddings to contextual representations

3. **Relevance Calculation**:

- Projector transforms contextual embeddings into a similarity-optimized space

- Cosine score directly measures document-query relevance

4. **Attention Mechanism**:

- Attention across the query and document tokens would enable:

  - Document-document comparison

  - Query-document alignment

  - Contextual refinement through multiple layers

The architecture suggests a sophisticated semantic search system where:

- Documents are encoded with rich contextual representations

- Queries interact with these representations through attention inside the decoder blocks and are compared with them by cosine similarity at the output

- Final cosine scores enable efficient ranking of document relevance
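
Ranking by cosine score amounts to a descending sort. A minimal sketch, assuming a hypothetical helper `rank_documents` and made-up scores (the diagram reports no numeric values):

```python
import numpy as np

def rank_documents(scores, names):
    """Return (name, score) pairs sorted from most to least similar to the query."""
    scores = np.asarray(scores, dtype=float)
    order = np.argsort(-scores, kind="stable")   # descending; ties keep input order
    return [(names[i], float(scores[i])) for i in order]

names = ["Document 1", "Document 2", "Document 3"]
scores = [0.31, 0.87, 0.12]                      # made-up cosine scores

ranking = rank_documents(scores, names)
# Document 2 ranks first, then Document 1, then Document 3.
```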

A depth of 28 decoder blocks reflects a trade-off between computational cost and representational capacity. The color coding is a visual aid that makes it possible to follow the query and each document through the diagram.