## Scatter Plot: Frontier Reward Modeling Performance

### Overview

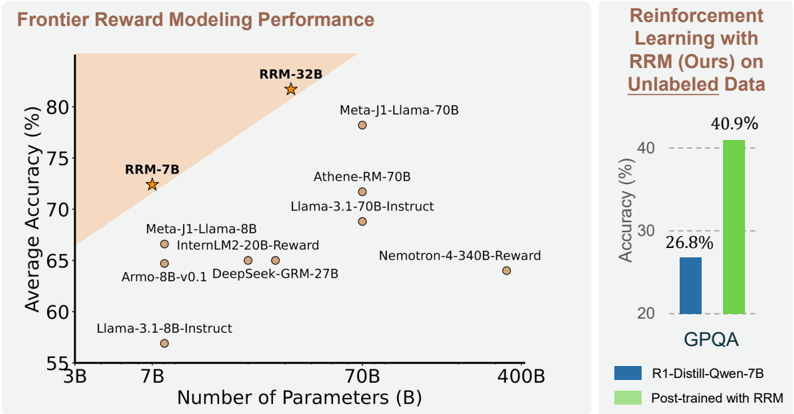

The image contains two panels. A scatter plot compares the average accuracy of various reward models against their parameter counts. A secondary bar chart shows GPQA accuracy for R1-Distill-Qwen-7B before and after post-training with RRM (Reward Reasoning Model) on unlabeled data.

### Components/Axes

* **X-axis:** Number of Parameters (B) - Scale ranges from approximately 3B to 400B. Marked values are 3B, 7B, 70B, and 400B.

* **Y-axis:** Average Accuracy (%) - Scale ranges from approximately 55% to 85%. Marked values are 55%, 60%, 65%, 70%, 75%, 80%, and 85%.

* **Scatter Plot Data Points:** Represent different reward modeling approaches.

* **Shaded Region:** A light orange shaded region in the top-left corner, indicating a performance frontier.

* **Legend (Scatter Plot):**

    * RRM-32B (Star symbol, dark orange)

    * RRM-7B (Star symbol, dark orange)

    * Other models (Circle symbol, gray)

* **Bar Chart:** Compares accuracy with and without RRM.

* **Legend (Bar Chart):**

    * R1-Distill-Qwen-7B (Blue bar)

    * Post-trained with RRM (Green bar)

* **Bar Chart X-axis:** GPQA

* **Bar Chart Y-axis:** Accuracy (%) - Scale ranges from 0% to 50%. Marked values are 0%, 20%, 30%, 40%, and 50%.

### Detailed Analysis

**Scatter Plot:**

* **RRM-32B:** Located at approximately (32B, 82%).

* **RRM-7B:** Located at approximately (7B, 74%).

* **Meta-J1-Llama-70B:** Located at approximately (70B, 79%).

* **Athene-RM-70B:** Located at approximately (70B, 72%).

* **Llama-3.1-70B-Instruct:** Located at approximately (70B, 69%).

* **Meta-J1-Llama-8B:** Located at approximately (8B, 68%).

* **InternLM2-20B-Reward:** Located at approximately (20B, 66%).

* **Nemotron-4-340B-Reward:** Located at approximately (340B, 64%).

* **Armo-8B-v0.1:** Located at approximately (8B, 64%).

* **DeepSeek-GRM-27B:** Located at approximately (27B, 63%).

* **Llama-3.1-8B-Instruct:** Located at approximately (8B, 58%).
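The frontier reading can be checked directly from the coordinates listed above. The following is a minimal sketch, not part of the original figure: the `points` table copies the approximate values read off the plot, the x-value for RRM-32B is assumed to be 32B (as the model name suggests), and `pareto_frontier` is a hypothetical helper that keeps every model for which no other model is both at least as small and at least as accurate.

```python
# Approximate (parameters in billions, average accuracy in %) read off the plot.
# Assumption: RRM-32B sits at 32B on the x-axis, following its name.
points = {
    "RRM-32B": (32, 82),
    "RRM-7B": (7, 74),
    "Meta-J1-Llama-70B": (70, 79),
    "Athene-RM-70B": (70, 72),
    "Llama-3.1-70B-Instruct": (70, 69),
    "Meta-J1-Llama-8B": (8, 68),
    "InternLM2-20B-Reward": (20, 66),
    "Nemotron-4-340B-Reward": (340, 64),
    "Armo-8B-v0.1": (8, 64),
    "DeepSeek-GRM-27B": (27, 63),
    "Llama-3.1-8B-Instruct": (8, 58),
}


def pareto_frontier(models):
    """Return the names of models that no other model dominates.

    A model is dominated when another model has no more parameters and
    no lower accuracy, and is strictly better on at least one of the two.
    """
    frontier = []
    for name, (params, acc) in models.items():
        dominated = any(
            p <= params and a >= acc and (p < params or a > acc)
            for other, (p, a) in models.items()
            if other != name
        )
        if not dominated:
            frontier.append(name)
    # Sort by parameter count so the frontier reads left to right.
    return sorted(frontier, key=lambda n: models[n][0])


frontier = pareto_frontier(points)
print(frontier)
```

With these approximate values, only RRM-7B and RRM-32B remain on the frontier; every other listed model is matched or beaten on both axes by one of them.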

**Bar Chart:**

* **R1-Distill-Qwen-7B:** Accuracy is approximately 26.8%.

* **Post-trained with RRM:** Accuracy is approximately 40.9%.
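The size of the improvement follows from simple arithmetic on the two bar values. A short sketch, using only the approximate accuracies read off the chart (the variable names are illustrative):

```python
baseline_acc = 26.8      # R1-Distill-Qwen-7B, GPQA accuracy in %
post_trained_acc = 40.9  # after post-training with RRM, in %

# Absolute gain in percentage points, and gain relative to the baseline.
absolute_gain = post_trained_acc - baseline_acc
relative_gain = absolute_gain / baseline_acc * 100

print(f"absolute gain: {absolute_gain:.1f} points")  # absolute gain: 14.1 points
print(f"relative gain: {relative_gain:.1f}%")        # relative gain: 52.6%
```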

### Key Observations

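* The scatter plot shows only a loose trend of increasing accuracy with model size. The largest model, Nemotron-4-340B-Reward, scores about 64%, below several 70B and smaller models.

* RRM-7B (about 74%) scores higher than the 8B models, which range from about 58% to 68%. RRM-32B (about 82%) has the highest accuracy of any plotted model, including the 70B and 340B ones.

* The bar chart shows GPQA accuracy rising from about 26.8% to about 40.9%, a gain of roughly 14 percentage points, when R1-Distill-Qwen-7B is post-trained with RRM.

* The shaded region marks a performance frontier in the top-left of the plot (high accuracy at low parameter count), with RRM-32B and RRM-7B lying in or near it.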
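The strength of the size trend can be quantified with a correlation coefficient. The sketch below is an illustrative check, not an analysis from the original figure: it computes the Pearson correlation between log10 of the parameter count and accuracy, using the approximate coordinates from the scatter plot and assuming RRM-32B sits at 32B. The log transform is an assumption made because the marked x-axis values (3B, 7B, 70B, 400B) are not evenly spaced.

```python
import math

# (parameters in billions, accuracy in %), approximate values from the plot.
# Assumption: RRM-32B is placed at 32B, following its name.
points = [(32, 82), (7, 74), (70, 79), (70, 72), (70, 69), (8, 68),
          (20, 66), (340, 64), (8, 64), (27, 63), (8, 58)]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


log_params = [math.log10(p) for p, _ in points]
accuracy = [a for _, a in points]
r = pearson(log_params, accuracy)
print(f"Pearson r (log10 params vs accuracy): {r:.2f}")
```

With these approximate values the coefficient comes out positive but weak, which fits a reading in which size alone explains little of the accuracy spread.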

### Interpretation

The data suggests that RRM improves reward modeling accuracy at a given model size. Accuracy across the plotted models rises only loosely with parameter count, yet both RRM models sit above the other models of comparable size, and RRM-32B scores higher than every larger model shown. The bar chart offers evidence from a reinforcement learning setting: post-training R1-Distill-Qwen-7B with RRM raises GPQA accuracy from about 26.8% to about 40.9%. The position of RRM-32B and RRM-7B in or near the shaded frontier region indicates that, among the models compared here, they offer the best accuracy for their parameter counts. Taken together, the two panels imply that RRM is useful both as a more parameter-efficient reward model and as a component of reinforcement learning post-training.