# Technical Data Extraction: Llama 7B Performance Metrics

## 1. Document Metadata

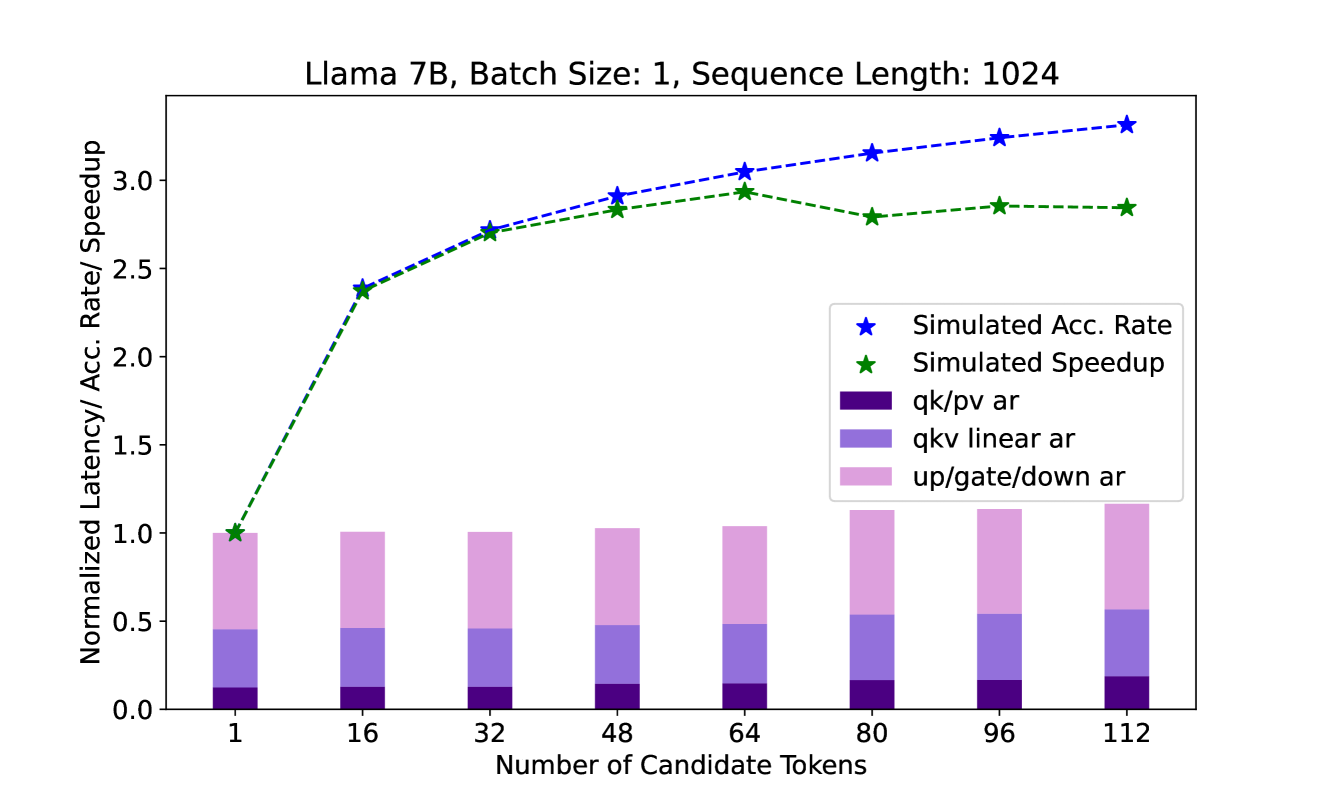

* **Title:** Llama 7B, Batch Size: 1, Sequence Length: 1024

* **Primary Language:** English

* **Image Type:** Combined Line Graph and Stacked Bar Chart

## 2. Component Isolation

### A. Header

* **Text:** "Llama 7B, Batch Size: 1, Sequence Length: 1024"

* **Context:** Defines the model architecture and specific inference parameters used for the data collection.

### B. Main Chart Area (Axes)

* **Y-Axis Label:** "Normalized Latency/ Acc. Rate/ Speedup"

* **Y-Axis Scale:** Linear, ranging from 0.0 to 3.0+ (increments of 0.5).

* **X-Axis Label:** "Number of Candidate Tokens"

* **X-Axis Markers (Categories):** 1, 16, 32, 48, 64, 80, 96, 112.

### C. Legend

* **Blue Star ($\star$):** Simulated Acc. Rate (Line)

* **Green Star ($\star$):** Simulated Speedup (Line)

* **Dark Purple Block:** qk/pv ar (Stacked Bar component)

* **Medium Purple Block:** qkv linear ar (Stacked Bar component)

* **Light Pink/Lavender Block:** up/gate/down ar (Stacked Bar component)

---

## 3. Data Series Analysis & Trend Verification

### Series 1: Simulated Acc. Rate (Blue Star / Dashed Blue Line)

* **Visual Trend:** Monotonic upward slope. The increase is steepest between 1 and 16 tokens (about 1.0 to 2.4), then continues at a progressively shallower gradient through to 112 tokens (about 3.3).

* **Estimated Data Points:**

    * 1: ~1.0

    * 16: ~2.4

    * 32: ~2.7

    * 48: ~2.9

    * 64: ~3.05

    * 80: ~3.15

    * 96: ~3.25

    * 112: ~3.3

### Series 2: Simulated Speedup (Green Star / Dashed Green Line)

* **Visual Trend:** Rapid initial growth that matches the Acc. Rate through 32 tokens. The curve then flattens, peaking near 2.95 at 64 tokens, dipping to about 2.8 at 80, and stabilizing around 2.85 through 112 tokens.

* **Estimated Data Points:**

    * 1: 1.0 (Baseline)

    * 16: ~2.4

    * 32: ~2.7

    * 48: ~2.85

    * 64: ~2.95

    * 80: ~2.8

    * 96: ~2.85

    * 112: ~2.85

### Series 3: Normalized Latency Components (Stacked Bars)

* **Visual Trend:** The total height of the bars (representing total normalized latency) remains very close to 1.0 (within about 4%) for candidate tokens 1 through 64. At 80 tokens the total latency increases visibly, to about 1.13, and it reaches approximately 1.18 by 112 tokens.

* **Component Breakdown:**

    * **qk/pv ar (Dark Purple):** Smallest contributor; remains relatively constant with a very slight increase as token count grows.

    * **qkv linear ar (Medium Purple):** Middle contributor; remains stable until 80 tokens, where it expands slightly.

    * **up/gate/down ar (Light Pink):** Largest contributor; remains stable until 80 tokens, then shows the most significant growth in height, driving the overall latency increase.

---

## 4. Data Table Reconstruction (Estimated Values)

| Number of Candidate Tokens | Simulated Acc. Rate (Blue) | Simulated Speedup (Green) | Total Normalized Latency (Bar Height) |
| :--- | :--- | :--- | :--- |
| **1** | 1.0 | 1.0 | 1.0 |
| **16** | 2.4 | 2.4 | 1.0 |
| **32** | 2.7 | 2.7 | 1.0 |
| **48** | 2.9 | 2.85 | 1.02 |
| **64** | 3.05 | 2.95 | 1.04 |
| **80** | 3.15 | 2.8 | 1.13 |
| **96** | 3.25 | 2.85 | 1.15 |
| **112** | 3.3 | 2.85 | 1.18 |
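
For downstream analysis, the estimated values can be held in a small data structure. The following is a minimal sketch rather than part of the original chart: the names `ChartRow`, `ROWS`, and `peak_speedup_row` are hypothetical, and every number is a visual estimate read off the figure, not a measured value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChartRow:
    """One x-axis position of the chart (all values are visual estimates)."""
    candidates: int   # number of candidate tokens
    acc_rate: float   # "Simulated Acc. Rate" (blue line)
    speedup: float    # "Simulated Speedup" (green line)
    latency: float    # total normalized latency (stacked bar height)


# Estimated values copied from the reconstructed table.
ROWS = [
    ChartRow(1, 1.0, 1.0, 1.0),
    ChartRow(16, 2.4, 2.4, 1.0),
    ChartRow(32, 2.7, 2.7, 1.0),
    ChartRow(48, 2.9, 2.85, 1.02),
    ChartRow(64, 3.05, 2.95, 1.04),
    ChartRow(80, 3.15, 2.8, 1.13),
    ChartRow(96, 3.25, 2.85, 1.15),
    ChartRow(112, 3.3, 2.85, 1.18),
]


def peak_speedup_row(rows):
    """Return the row with the highest estimated speedup."""
    return max(rows, key=lambda row: row.speedup)


best = peak_speedup_row(ROWS)
print(best.candidates, best.speedup)  # prints: 64 2.95
```

With these estimates, the highest speedup falls at 64 candidate tokens, which is the last point before the bar height starts to grow visibly.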

---

## 5. Technical Summary

The chart illustrates the performance of a Llama 7B model using speculative decoding or a similar candidate-token-based acceleration method.

* **Efficiency Peak:** The "Simulated Speedup" tracks the "Simulated Acc. Rate" closely up to approximately 32-48 candidate tokens and reaches its highest estimated value, about 2.95, at 64 tokens.

* **Diminishing Returns:** Beyond 64 tokens, the "Simulated Speedup" plateaus and dips slightly (from about 2.95 at 64 tokens to about 2.8 at 80). The "Normalized Latency" bars account for this: total latency rises from about 1.04 at 64 tokens to about 1.13 at 80 and 1.18 at 112, driven mainly by the `up/gate/down` component and, to a lesser extent, `qkv linear`. This added cost offsets the gains from a higher Acc. Rate.
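
One way to sanity-check this explanation is to assume that speedup is roughly the Acc. Rate divided by the total normalized latency. That relation is an assumption made here for illustration; the chart does not state how "Simulated Speedup" was computed. The sketch below applies it to the estimated values, with `ESTIMATES`, `predicted_speedup`, and `max_abs_deviation` as hypothetical names.

```python
# (candidate tokens, acc. rate, speedup, total normalized latency)
# All values are visual estimates read off the chart, not measurements.
ESTIMATES = [
    (1, 1.0, 1.0, 1.0),
    (16, 2.4, 2.4, 1.0),
    (32, 2.7, 2.7, 1.0),
    (48, 2.9, 2.85, 1.02),
    (64, 3.05, 2.95, 1.04),
    (80, 3.15, 2.8, 1.13),
    (96, 3.25, 2.85, 1.15),
    (112, 3.3, 2.85, 1.18),
]


def predicted_speedup(acc_rate, latency):
    """Assumed model: speedup ~ acc. rate / normalized latency."""
    return acc_rate / latency


def max_abs_deviation(rows):
    """Largest gap between the assumed model and the read-off speedup."""
    return max(
        abs(predicted_speedup(acc, lat) - spd) for _, acc, spd, lat in rows
    )


for n, acc, spd, lat in ESTIMATES:
    print(f"{n:>3}: predicted {predicted_speedup(acc, lat):.2f}, chart {spd:.2f}")

print(f"max deviation: {max_abs_deviation(ESTIMATES):.3f}")
```

Under this assumed relation, the predicted values stay within about 0.06 of the speedups read from the chart, which is within the reading error of the estimates. This is consistent with the latency growth beyond 64 tokens accounting for the plateau, though it does not confirm how the original simulation was defined.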