
## Diagram: Layer 1 Architecture

### Overview

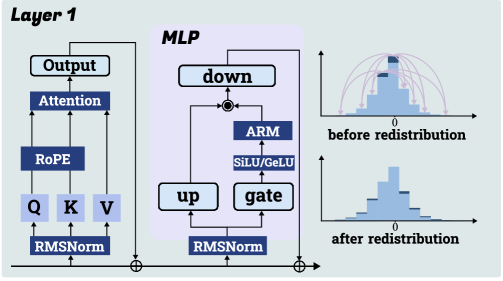

This diagram illustrates the architecture of Layer 1 in a neural network, likely a transformer-based model. It depicts the flow of data through several components, including Attention, RoPE, RMSNorm, MLP (Multi-Layer Perceptron), and activation functions. The diagram also includes visualizations of data distribution before and after a redistribution process.

### Components/Axes

The diagram is segmented into three main regions: a left-side processing chain, a central MLP block, and a right-side visualization of data distributions.

* **Left Side:**

    * "Output"

    * "Attention"

    * "RoPE"

    * "Q K V" (Query, Key, Value)

    * "RMSNorm"

* **Central MLP Block:**

    * "MLP" (title)

    * "down"

    * "up"

    * "ARM"

    * "SiLU/GeLU"

    * "gate"

    * "RMSNorm"

* **Right Side:**

    * "before redistribution" (title of top histogram)

    * "after redistribution" (title of bottom histogram)

    * X-axis: Numerical scale, ranging from approximately -4 to +4.

    * Y-axis: Represents frequency or probability density.

### Detailed Analysis

The diagram shows a data flow starting from the bottom with "RMSNorm". The output of "RMSNorm" feeds into "Q K V". The output of "Q K V" is then passed to "RoPE", which in turn feeds into "Attention". The output of "Attention" is labeled "Output".
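The first step of this chain can be sketched in a few lines. The following is a minimal illustration of RMS normalization as it is commonly defined, not a reconstruction of the diagram's actual implementation; the function name `rms_norm`, the `eps` value, and the sample vector are assumptions introduced here.

```python
import math

def rms_norm(x, weight=None, eps=1e-6):
    """Rescale a vector so its root-mean-square is close to 1.

    `weight` is an optional per-feature gain (hypothetical; the diagram
    does not show whether a learned gain is used).
    """
    mean_sq = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    if weight is None:
        weight = [1.0] * len(x)
    return [v * inv_rms * w for v, w in zip(x, weight)]

# Illustrative hidden vector (made-up values).
hidden = [3.0, -4.0, 0.0, 5.0]
normed = rms_norm(hidden)

# After normalization the root-mean-square of the vector is ~1.
out_rms = math.sqrt(sum(v * v for v in normed) / len(normed))
print(normed, out_rms)
```

In a layer like the one shown, the normalized vector would then be projected into the query, key, and value vectors labeled "Q K V".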

The "MLP" block receives input from "RMSNorm" (bottom) and "Attention" (top). Inside the MLP:

* The input splits into two paths: "up" and "down".

* "down" feeds into "ARM" and then into "SiLU/GeLU".

* "up" feeds into "RMSNorm" and then into "gate".

* The outputs of "ARM/SiLU/GeLU" and "gate" are combined at a circular node (likely an addition operation).
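For comparison, here is a minimal sketch of a conventional gated MLP of the kind used in many transformer models. It is not a reconstruction of the block in the diagram: the wiring described above differs from it, "ARM" is not defined anywhere in the diagram, and this sketch combines the two branches with an element-wise product, whereas the circular node is read above as a likely addition. All function names and weight values are hypothetical.

```python
import math

def silu(z):
    """SiLU (swish) activation: z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))

def gelu(z):
    """GeLU activation, tanh approximation."""
    return 0.5 * z * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (z + 0.044715 * z ** 3)))

def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]

def gated_mlp(x, w_gate, w_up, w_down, act=silu):
    """Conventional gated MLP: down(act(gate(x)) * up(x))."""
    gate = [act(g) for g in matvec(w_gate, x)]   # activated gate branch
    up = matvec(w_up, x)                         # linear up branch
    hidden = [g * u for g, u in zip(gate, up)]   # element-wise product
    return matvec(w_down, hidden)                # project back down

# Toy weights chosen only for illustration (2 -> 3 -> 2 features).
x = [1.0, -2.0]
w_gate = [[0.5, 0.0], [0.0, 0.5], [1.0, 1.0]]
w_up = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
w_down = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
y = gated_mlp(x, w_gate, w_up, w_down)
print(y)
```

Passing `act=gelu` switches the activation, which matches the "SiLU/GeLU" label in the sense that either function could fill that slot.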

The right side shows two histograms:

* **Before Redistribution:** The histogram is multi-modal, with peaks centered at approximately -2.5, -1, 0, +1, and +2.5. The peaks are roughly equal in height. Arcs are drawn from the top of each peak to the x-axis, indicating the spread of the distribution.

* **After Redistribution:** The histogram is unimodal, centered around approximately 0. The distribution is more concentrated and has a narrower spread than the "before redistribution" histogram.
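The contrast between the two histograms can be illustrated with synthetic data. The sketch below only mimics the shapes described above; it does not model the redistribution step itself, which the diagram does not specify. The peak centers are taken from the description, while the sample count, peak widths, bin count, and the helper name `histogram` are assumptions.

```python
import random
import statistics

random.seed(0)

# "Before": equal-weight mixture of narrow peaks at the described centers.
centers = [-2.5, -1.0, 0.0, 1.0, 2.5]
before = [random.gauss(random.choice(centers), 0.2) for _ in range(5000)]

# "After": a single peak around 0 (the width of 0.5 is an assumption).
after = [random.gauss(0.0, 0.5) for _ in range(5000)]

def histogram(samples, lo=-4.0, hi=4.0, bins=16):
    """Count samples per equal-width bin on [lo, hi); out-of-range samples are dropped."""
    counts = [0] * bins
    width = (hi - lo) / bins
    for s in samples:
        if lo <= s < hi:
            counts[min(bins - 1, int((s - lo) / width))] += 1
    return counts

before_std = statistics.pstdev(before)
after_std = statistics.pstdev(after)
print("before:", histogram(before), "std:", before_std)
print("after: ", histogram(after), "std:", after_std)
```

With these settings the standard deviation of the multi-peak sample is clearly larger than that of the single-peak sample, which corresponds to the narrower spread seen in the bottom histogram.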

### Key Observations

* The diagram illustrates a typical transformer layer structure with attention and a feedforward network (MLP).

* The MLP block includes a complex internal structure with "ARM", "SiLU/GeLU", and "gate" components.

* The redistribution process appears to transform a multi-modal distribution into a unimodal distribution, suggesting a regularization or normalization effect.

* The circular node within the MLP suggests an additive operation.

### Interpretation

The diagram likely represents one layer of a larger neural network, possibly a transformer model. The "RoPE" component suggests the use of Rotary Position Embedding, a technique that injects positional information into the attention mechanism by rotating the query and key vectors. The MLP block processes the output of the attention mechanism and introduces non-linearity.
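As a rough illustration of how rotary embeddings encode position, the sketch below rotates consecutive feature pairs by position-dependent angles. It assumes the interleaved-pair convention and a frequency base of 10000; the diagram specifies neither, and the function names and vectors are made up for the example.

```python
import math

def rope(vec, position, base=10000.0):
    """Rotate consecutive feature pairs of `vec` by angles that grow with `position`.

    Assumes an even number of features and interleaved pairs (0,1), (2,3), ...
    """
    dim = len(vec)
    out = []
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)   # per-pair rotation angle
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = vec[i], vec[i + 1]
        out.extend([x0 * c - x1 * s, x0 * s + x1 * c])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Illustrative query and key vectors (made-up values).
q = [1.0, 0.5, -0.3, 2.0]
k = [0.2, -1.0, 0.7, 0.4]

# Both pairs of positions are 2 apart, so the attention scores should match.
score_a = dot(rope(q, 5), rope(k, 3))
score_b = dot(rope(q, 12), rope(k, 10))
print(score_a, score_b)
```

Because each pair is only rotated, vector norms are preserved and the query-key dot product depends on the difference between the two positions rather than on their absolute values.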

The redistribution process, as visualized by the histograms, is a key aspect of the architecture. It transforms a dispersed, multi-modal distribution into a narrower one concentrated around a central value, which reduces the variance of the data. This could be a form of normalization, regularization, or feature selection.

The diagram provides a high-level overview of the data flow and component interactions within Layer 1. It does not provide specific numerical values or parameters, but it conveys the overall structure and functionality of the layer.