## Diagram: Dual-Track Algorithm Evaluation Framework

### Overview

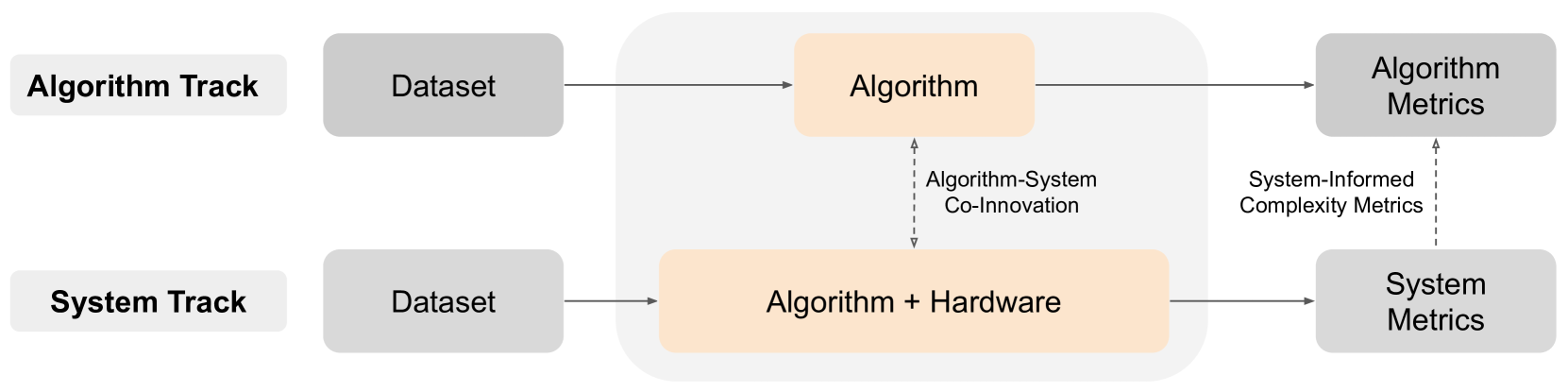

The image is a flowchart illustrating a dual-track framework for evaluating and developing algorithms. It presents two parallel pathways, the "Algorithm Track" and the "System Track", which both pass through a central zone of co-innovation. The diagram emphasizes the relationship between an algorithm's standalone performance and its performance when integrated with specific hardware.

### Components/Axes

The diagram is laid out in three columns, read left to right: Inputs (left), Processing Core (center), and Outputs (right).

**Left Section (Inputs):**

* **Top-Left Label:** "Algorithm Track" (black text on a light gray rounded rectangle).

* **Bottom-Left Label:** "System Track" (black text on a light gray rounded rectangle).

* **Input Data:** Both tracks begin with a box labeled "Dataset" (black text on a medium gray rounded rectangle).

**Center Section (Processing Core):**

* This area is enclosed by a large, light gray rounded rectangle, signifying a unified processing or co-innovation space.

* **Top Processing Unit:** "Algorithm" (black text on a light orange rounded rectangle). It receives input from the top "Dataset".

* **Bottom Processing Unit:** "Algorithm + Hardware" (black text on a light orange rounded rectangle). It receives input from the bottom "Dataset".

* **Interaction Label:** A vertical, double-headed dashed arrow connects the two processing units. The text "Algorithm-System Co-Innovation" is placed to the right of this arrow.

**Right Section (Outputs):**

* **Top Output:** "Algorithm Metrics" (black text on a medium gray rounded rectangle). It receives a solid arrow from the "Algorithm" box.

* **Bottom Output:** "System Metrics" (black text on a medium gray rounded rectangle). It receives a solid arrow from the "Algorithm + Hardware" box.

* **Feedback Label:** A vertical, upward-pointing dashed arrow connects "System Metrics" to "Algorithm Metrics". The text "System-Informed Complexity Metrics" is placed to the left of this arrow.
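The layout described above can be captured as a small directed graph, which makes the solid and dashed relationships explicit. The following is a minimal sketch in Python. The node names `Dataset (top)` and `Dataset (bottom)` and the helper `solid_path` are illustrative assumptions, since the diagram labels both input boxes simply "Dataset".

```python
# Edges of the diagram as (source, target, style) triples.
# "Dataset (top)" / "Dataset (bottom)" are hypothetical names used only to
# tell the two identically labeled "Dataset" boxes apart.
EDGES = [
    ("Dataset (top)", "Algorithm", "solid"),
    ("Algorithm", "Algorithm Metrics", "solid"),
    ("Dataset (bottom)", "Algorithm + Hardware", "solid"),
    ("Algorithm + Hardware", "System Metrics", "solid"),
    # Double-headed dashed arrow: stored once in each direction.
    ("Algorithm", "Algorithm + Hardware", "dashed"),
    ("Algorithm + Hardware", "Algorithm", "dashed"),
    # Upward dashed arrow labeled "System-Informed Complexity Metrics".
    ("System Metrics", "Algorithm Metrics", "dashed"),
]


def solid_path(start):
    """Follow solid arrows from `start` until no solid edge leaves the node."""
    path = [start]
    while True:
        nxt = [dst for src, dst, style in EDGES
               if src == path[-1] and style == "solid"]
        if not nxt:
            return path
        path.append(nxt[0])
```

Calling `solid_path("Dataset (top)")` walks the Algorithm Track, and `solid_path("Dataset (bottom)")` walks the System Track; the dashed edges are deliberately excluded from both walks.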

### Detailed Analysis

The flow of information and evaluation is as follows:

1. **Algorithm Track Flow:**

   * **Path:** Dataset → Algorithm → Algorithm Metrics.

   * **Trend/Direction:** A linear, left-to-right progression. This represents a traditional evaluation pipeline where an algorithm is tested on a dataset, and its performance is measured by isolated algorithmic metrics (e.g., accuracy, loss).

2. **System Track Flow:**

   * **Path:** Dataset → Algorithm + Hardware → System Metrics.

   * **Trend/Direction:** A parallel linear, left-to-right progression. This represents a holistic evaluation where the algorithm is co-designed or tightly integrated with target hardware, and performance is measured by system-level metrics (e.g., latency, throughput, power efficiency).

3. **Cross-Track Interactions:**

   * **Algorithm-System Co-Innovation:** The dashed, bidirectional arrow indicates a feedback loop or iterative design process between the pure algorithm development and the hardware-aware system development. Insights from one track can inform and improve the other.

   * **System-Informed Complexity Metrics:** The dashed, upward arrow indicates that the metrics derived from the system-level evaluation ("System Metrics") are used to define or refine the complexity metrics used to evaluate the standalone algorithm ("Algorithm Metrics"). This suggests that algorithmic efficiency should be understood in the context of real-world hardware constraints.
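The two track flows above can be sketched as a pair of evaluation functions run over the same dataset. This is a minimal illustration in Python, not a method taken from the diagram: the function names, the toy `is_even` classifier, and the choice of accuracy, latency, and throughput as metrics are all assumptions, and "Algorithm + Hardware" is approximated here by simply timing the algorithm on whatever machine runs the script.

```python
import time


def evaluate_algorithm_track(algorithm, dataset):
    """Algorithm Track: score predictions only, ignoring how they were computed."""
    correct = sum(1 for x, label in dataset if algorithm(x) == label)
    return {"accuracy": correct / len(dataset)}


def evaluate_system_track(algorithm, dataset):
    """System Track: measure the cost of running the algorithm on this machine."""
    start = time.perf_counter()
    for x, _ in dataset:
        algorithm(x)
    elapsed = time.perf_counter() - start
    return {
        "latency_s_per_item": elapsed / len(dataset),
        "throughput_items_per_s": (
            len(dataset) / elapsed if elapsed > 0 else float("inf")
        ),
    }


# Toy stand-ins (assumptions): a parity classifier and a labeled dataset.
def is_even(x):
    return x % 2 == 0


dataset = [(n, n % 2 == 0) for n in range(1000)]

algorithm_metrics = evaluate_algorithm_track(is_even, dataset)
system_metrics = evaluate_system_track(is_even, dataset)
```

The algorithm metrics are identical on any machine, while the system metrics change with the hardware, which is the distinction the two tracks are drawn to show.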

### Key Observations

* **Color Coding:** The diagram uses color to group related elements. Light orange highlights the core processing units ("Algorithm" and "Algorithm + Hardware"), while medium gray is used for data inputs and metric outputs. Light gray denotes track labels and the overarching co-innovation zone.

* **Spatial Grounding:** The "Algorithm-System Co-Innovation" label is positioned centrally between the two processing units, physically representing its role as a bridge. The "System-Informed Complexity Metrics" label is positioned on the right, linking the output of one track to the evaluation criteria of the other.

* **Arrow Types:** Solid arrows denote the primary, direct flow of data and evaluation within each track. Dashed arrows denote secondary, informational, or feedback relationships between the tracks.

### Interpretation

This diagram presents a framework for moving beyond isolated algorithmic benchmarking. It implies that for meaningful progress, especially in performance-critical fields like machine learning or high-performance computing, algorithms cannot be developed in isolation from the hardware they run on.

The **Algorithm Track** can be read as the "theoretical" or "idealized" view of performance, measured without reference to any hardware. The **System Track** can be read as the "practical" or "deployable" view, measured on the target hardware. The core message is that these two perspectives must inform each other (**Co-Innovation**). A breakthrough in algorithm design might enable new hardware efficiencies, while a constraint in hardware architecture might inspire a novel, more efficient algorithmic approach.

Arguably the most important element is the feedback arrow labeled **"System-Informed Complexity Metrics."** It suggests that hardware-agnostic complexity metrics commonly used to judge algorithms (like FLOPs or parameter count) are insufficient on their own. Algorithmic complexity and efficiency should instead be measured in terms that account for system-level behavior, such as memory access patterns, parallelism, and energy consumption per operation. The framework thus advocates a holistic, co-design methodology where the end goal is not just a better algorithm on paper, but a more efficient and powerful computational system.
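One way to see why a FLOP count alone can mislead is a roofline-style estimate, which bounds runtime by whichever is slower: compute or memory traffic. The sketch below is an illustrative technique chosen here, not something the diagram specifies; the function name, the hardware figures, and the two example workloads are all hypothetical.

```python
def roofline_time_s(flops, bytes_moved, peak_flops_per_s, bandwidth_bytes_per_s):
    """Estimated runtime: limited by compute or by memory traffic, whichever is slower."""
    compute_time = flops / peak_flops_per_s
    memory_time = bytes_moved / bandwidth_bytes_per_s
    return max(compute_time, memory_time)


# Hypothetical hardware: 1 TFLOP/s peak compute, 100 GB/s memory bandwidth.
PEAK_FLOPS_PER_S = 1e12
BANDWIDTH_BYTES_PER_S = 1e11

# Two hypothetical algorithms with the same FLOP count but different memory traffic.
compute_bound = roofline_time_s(1e9, 1e7, PEAK_FLOPS_PER_S, BANDWIDTH_BYTES_PER_S)
memory_bound = roofline_time_s(1e9, 1e9, PEAK_FLOPS_PER_S, BANDWIDTH_BYTES_PER_S)
```

Under these assumed numbers both workloads perform the same number of operations, yet the second moves a hundred times more data and its estimated runtime is ten times longer, a difference that a FLOP-only metric would not reveal.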