
## Diagram: Continuous Thought Frameworks

### Overview

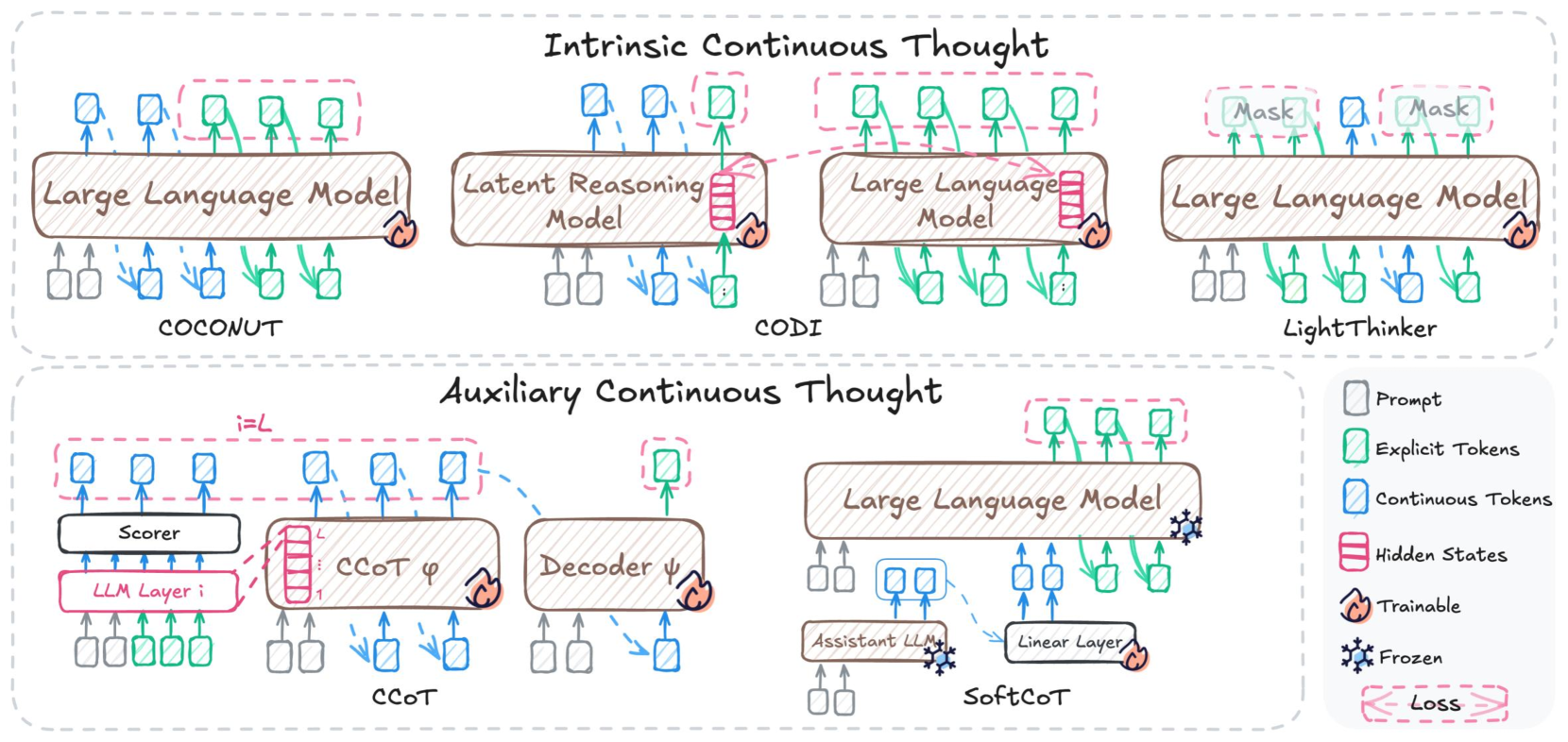

The diagram contrasts two approaches to "Continuous Thought" in Large Language Models (LLMs): Intrinsic Continuous Thought and Auxiliary Continuous Thought. It shows the architecture of five methods (COCONUT, CODI, LightThinker, cCoT, SoftCoT), their components, and the flow of information between them. Boxes represent LLMs and supporting modules, arrows indicate data flow, and icons mark the token types and whether parameters are trainable or frozen.

### Components/Axes

The diagram is divided into two main sections: "Intrinsic Continuous Thought" (top) and "Auxiliary Continuous Thought" (bottom).

**Legend (Top-Right):**

* White boxes with rounded corners: "Prompt"

* Green squares: "Explicit Tokens"

* Light Green squares: "Continuous Tokens"

* Red rectangles: "Hidden States"

* Blue star: "Trainable"

* Frozen symbol (snowflake): "Frozen"

* Red arrow pointing down: "Loss"

**Intrinsic Continuous Thought:**

* **COCONUT:** Large Language Model with input/output data streams.

* **Latent Reasoning Model:** Box with input/output data streams.

* **CODI:** Large Language Model with input/output data streams, connected to the Latent Reasoning Model via a green arrow.

* **LightThinker:** Large Language Model with input/output data streams, including "Mask" tokens.

**Auxiliary Continuous Thought:**

* **Scorer:** Box with input/output data streams, connected to a "Linear Layer i".

* **cCoT φ:** Box with input/output data streams.

* **Decoder:** Box with input/output data streams.

* **Assistant LLM:** Large Language Model with input/output data streams.

* **SoftCoT:** Box with input/output data streams, connected to the Assistant LLM via a "Linear Layer".

### Detailed Analysis or Content Details

**Intrinsic Continuous Thought:**

* **COCONUT:** The LLM receives input data (represented by multiple lines) and produces output data.

* **Latent Reasoning Model:** Receives input data and produces output data.

* **CODI:** The LLM receives input data and produces output data. It is connected to the Latent Reasoning Model via a green arrow, indicating the flow of "Continuous Tokens".

* **LightThinker:** The LLM receives input data and produces output data. It includes "Mask" tokens (indicated by the "Mask" label within the LLM box). The LLM is marked with a blue star, indicating it is "Trainable".

**Auxiliary Continuous Thought:**

* **Scorer:** Receives input data and outputs to "cCoT φ". The Scorer is connected to a "Linear Layer i".

* **cCoT φ:** Receives input from the Scorer and outputs to the Decoder.

* **Decoder:** Receives input from cCoT φ and produces output data.

* **Assistant LLM:** Receives input data and produces output data. It is connected to "SoftCoT" via a "Linear Layer".

* **SoftCoT:** Receives input from the Assistant LLM through the "Linear Layer" and produces output data. SoftCoT is marked with a snowflake ("Frozen"), while the Assistant LLM is marked with a blue star ("Trainable").

* A red arrow labeled "Loss" points downwards from the SoftCoT.

The arrows between the components generally indicate the flow of data. Green arrows represent "Continuous Tokens".
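The connections described for the Auxiliary section can be written down as a small graph. The Python sketch below only restates that description and is not an implementation of any of the models. The `Component` class, the `EDGES` list, and the helper functions are hypothetical names introduced for illustration, and the Scorer's link to "Linear Layer i" is omitted because the description does not give its direction.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Component:
    name: str
    # True = blue star ("Trainable"), False = snowflake ("Frozen"),
    # None = no marker mentioned in the description.
    trainable: Optional[bool] = None


# Components of the Auxiliary Continuous Thought section, as described.
COMPONENTS = [
    Component("Scorer"),
    Component("cCoT φ"),
    Component("Decoder"),
    Component("Assistant LLM", trainable=True),
    Component("Linear Layer"),
    Component("SoftCoT", trainable=False),
]

# Directed data-flow edges (source, target), as described.
EDGES = [
    ("Scorer", "cCoT φ"),
    ("cCoT φ", "Decoder"),
    ("Assistant LLM", "Linear Layer"),
    ("Linear Layer", "SoftCoT"),
]


def downstream(start):
    """Follow the edges from `start` until a component has no successor."""
    successors = dict(EDGES)
    path = [start]
    while path[-1] in successors:
        path.append(successors[path[-1]])
    return path


def names_with_marker(trainable):
    """Names of components whose marker equals `trainable`."""
    return [c.name for c in COMPONENTS if c.trainable is trainable]


if __name__ == "__main__":
    print(" -> ".join(downstream("Scorer")))
    print(" -> ".join(downstream("Assistant LLM")))
    print("Trainable:", names_with_marker(True))
    print("Frozen:", names_with_marker(False))
```

Encoding the markers as `True`, `False`, or `None` keeps "not marked" distinct from "frozen", which matters here because most components carry no marker in the description.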

### Key Observations

* The diagram highlights two distinct approaches to integrating continuous thought into LLMs.

* Intrinsic methods (COCONUT, CODI, LightThinker) appear to embed the continuous reasoning process *within* the LLM itself.

* Auxiliary methods (cCoT, SoftCoT) use separate modules to augment the LLM's capabilities: a Scorer and Decoder around cCoT φ, and an Assistant LLM with a Linear Layer feeding SoftCoT.

* Trainability is explicitly indicated for some components (LightThinker, Assistant LLM), while others are frozen (SoftCoT).

* The "Loss" arrow on SoftCoT marks where a training objective is applied in the Auxiliary Continuous Thought section.

### Interpretation

The diagram contrasts two strategies for letting LLMs carry out multi-step reasoning with continuous representations. The "Intrinsic" approach builds continuous thought into the LLM itself, in one case using masking (LightThinker). The "Auxiliary" approach treats continuous thought as a separate process that feeds the LLM through dedicated modules, which may offer more flexibility and control. The mix of trainable and frozen components suggests that only part of each framework is updated during training. The "Loss" arrow shows that a training objective is applied in the Auxiliary section, but the diagram does not specify the objective or the form of supervision. The distinction between explicit and continuous tokens suggests that the frameworks represent some reasoning steps as discrete text and others as continuous vectors. The diagram is a conceptual, high-level comparison and does not show the implementation details of any component.
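The intrinsic/auxiliary distinction can be made concrete with a toy numerical sketch. It rests on assumptions the diagram does not spell out: that an intrinsic method feeds the model's own hidden state back in as its next input, and that an auxiliary method has a separate assistant produce thought vectors which a linear layer projects into the main model's input space. All function names, weights, and dimensions are hypothetical, and `toy_llm_step` is a stand-in for a forward pass, not a real LLM.

```python
def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def toy_llm_step(weights, vec):
    """Stand-in for one forward pass: linear map followed by ReLU."""
    return [max(0.0, v) for v in matvec(weights, vec)]


def intrinsic_thoughts(weights, prompt_vec, n_steps):
    """Assumed intrinsic loop: the model's hidden state becomes its next input,
    so no explicit token is produced between reasoning steps."""
    state = prompt_vec
    thoughts = []
    for _ in range(n_steps):
        state = toy_llm_step(weights, state)
        thoughts.append(state)
    return thoughts


def auxiliary_thoughts(assistant_weights, projection, prompt_vec, n_steps):
    """Assumed auxiliary pipeline: a separate assistant produces thought vectors,
    and a linear layer maps them into the main model's input space."""
    raw = intrinsic_thoughts(assistant_weights, prompt_vec, n_steps)
    return [matvec(projection, t) for t in raw]


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]                 # toy 2-d model weights
    projection = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # maps 2-d thoughts to 3-d
    prompt = [1.0, -2.0]
    print(intrinsic_thoughts(identity, prompt, 2))
    print(auxiliary_thoughts(identity, projection, prompt, 2))
```

In this sketch the only structural difference is where the thought vectors come from and whether a projection sits between their producer and their consumer, which mirrors the split the diagram draws between its top and bottom sections.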