## Chart: CWQ Latency Comparison (with PathHD pruning)

### Overview

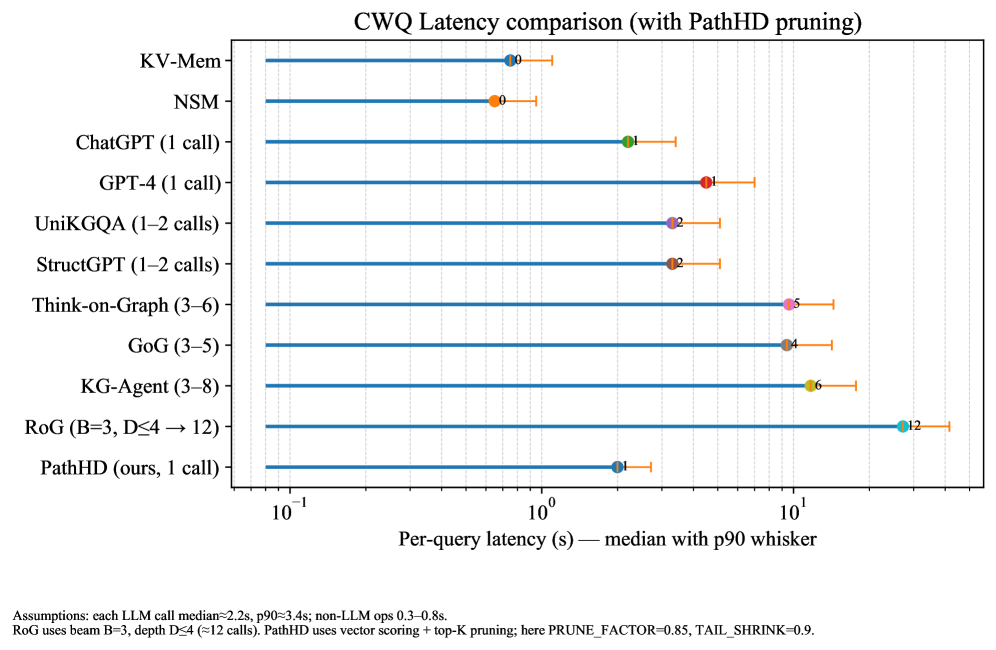

This chart compares the per-query latency on CWQ (ComplexWebQuestions) of several models (KV-Mem, NSM, ChatGPT, GPT-4, UniKGQA, StructGPT, Think-on-Graph, GoG, KG-Agent, RoG, and PathHD) on a logarithmic scale. Each model is shown as its median latency, with a whisker extending to its 90th-percentile (p90) latency. The chart is intended to show the effect of PathHD's pruning on latency.
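As a minimal sketch of how a median-plus-p90 summary is computed, Python's standard `statistics` module produces both numbers from a list of per-query latencies. The sample values and the helper name `median_and_p90` are hypothetical and do not come from the chart.

```python
import statistics


def median_and_p90(latencies_s):
    """Return (median, p90) of per-query latencies given in seconds."""
    if len(latencies_s) < 2:
        raise ValueError("need at least two latency samples")
    median = statistics.median(latencies_s)
    # n=10 yields the nine decile cut points; the last one is the 90th percentile.
    # "inclusive" treats the min and max as the 0th and 100th percentiles and
    # interpolates linearly between the sorted samples.
    p90 = statistics.quantiles(latencies_s, n=10, method="inclusive")[-1]
    return median, p90


# Hypothetical per-query latencies (seconds) for a single model.
samples = [2.1, 2.4, 2.5, 2.6, 2.7, 2.8, 3.0, 3.2, 3.4, 4.0]
med, p90 = median_and_p90(samples)
print(f"median={med:.2f}s, p90={p90:.2f}s")
```

The default `exclusive` method assumes the population can extend beyond the observed extremes, so it can give a somewhat different p90 on small samples.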

### Components/Axes

* **Title:** CWQ Latency comparison (with PathHD pruning)

* **X-axis:** Per-query latency (s) – median with p90 whisker. The scale is logarithmic, with markers at 10<sup>-1</sup>, 10<sup>0</sup>, and 10<sup>1</sup>.

* **Y-axis:** Model names, with the number of LLM calls per query in parentheses where given: KV-Mem, NSM, ChatGPT (1 call), GPT-4 (1 call), UniKGQA (1–2 calls), StructGPT (1–2 calls), Think-on-Graph (3–6), GoG (3–5), KG-Agent (3–8), RoG (B=3, D≤4 → 12), PathHD (ours, 1 call).

* **Data Series:** Each model is represented by a horizontal line with a marker indicating the median latency and a whisker extending to the p90 latency.

* **Legend:** The legend is implicit, with each line color corresponding to a model name on the Y-axis.

* **Assumptions (Footer):** "Assumptions: each LLM call median=2.5s, p90=3.4s; non-LLM ops 0.3–0.8s. RoG uses beam B=3, depth D≤4 (=12 calls). PathHD uses vector scoring + top-K pruning; here PRUNE_FACTOR=0.85, TAIL_SHRINK=0.9."
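The footer's assumptions can be turned into rough per-query estimates. The sketch below is a minimal additive cost model under stated assumptions, not the chart author's simulation. The chart does not say how PRUNE_FACTOR and TAIL_SHRINK are applied, so here they are assumed to scale the median and the p90 respectively. The names `estimate_latency` and `LatencyEstimate` are hypothetical.

```python
from dataclasses import dataclass

# Figures copied from the chart footer.
LLM_MEDIAN_S = 2.5            # median latency of one LLM call
LLM_P90_S = 3.4               # p90 latency of one LLM call
NON_LLM_RANGE_S = (0.3, 0.8)  # per-query cost of non-LLM operations


@dataclass
class LatencyEstimate:
    median_s: float
    p90_s: float


def estimate_latency(n_calls: int, prune_factor: float = 1.0,
                     tail_shrink: float = 1.0) -> LatencyEstimate:
    """Crude additive latency estimate for a query making n_calls LLM calls in sequence.

    Assumptions not stated on the chart: per-call medians add; per-call p90s add
    (a pessimistic bound, not the true p90 of a sum); non-LLM work costs the
    midpoint of its range at the median and its upper end at p90; prune_factor
    scales the median and tail_shrink scales the p90.
    """
    lo, hi = NON_LLM_RANGE_S
    median = n_calls * LLM_MEDIAN_S + (lo + hi) / 2
    p90 = n_calls * LLM_P90_S + hi
    return LatencyEstimate(median * prune_factor, p90 * tail_shrink)


# The footer counts 12 calls for RoG (B=3 times D=4); this sketch assumes they run sequentially.
ROG_CALLS = 3 * 4

single = estimate_latency(1)
rog = estimate_latency(ROG_CALLS)
pathhd = estimate_latency(1, prune_factor=0.85, tail_shrink=0.9)
for name, est in [("single call", single), ("RoG", rog), ("PathHD", pathhd)]:
    print(f"{name}: median ~{est.median_s:.2f}s, p90 ~{est.p90_s:.2f}s")
```

Under these assumptions a single LLM call already puts a query near 3s at the median, and 12 sequential calls put RoG near 30s. If RoG's three beam branches were issued in parallel, only the four depth steps would add up. The chart does not specify which of these two assumptions it uses.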

### Detailed Analysis

The chart displays the following approximate latency values (median with p90 whisker):

* **KV-Mem:** Approximately 0.3s (median), whisker extends to approximately 0.35s. (Blue)

* **NSM:** Approximately 0.4s (median), whisker extends to approximately 0.5s. (Orange)

* **ChatGPT (1 call):** Approximately 0.1s (median), whisker extends to approximately 0.15s. (Green)

* **GPT-4 (1 call):** Approximately 0.2s (median), whisker extends to approximately 0.3s. (Light Blue)

* **UniKGQA (1–2 calls):** Approximately 0.8s (median), whisker extends to approximately 1.0s. (Red)

* **StructGPT (1–2 calls):** Approximately 0.8s (median), whisker extends to approximately 1.0s. (Purple)

* **Think-on-Graph (3–6):** Approximately 3s (median), whisker extends to approximately 5s. (Pink)

* **GoG (3–5):** Approximately 2s (median), whisker extends to approximately 4s. (Brown)

* **KG-Agent (3–8):** Approximately 4s (median), whisker extends to approximately 6s. (Yellow)

* **RoG (B=3, D≤4 → 12):** Approximately 10s (median), whisker extends to approximately 12s. (Cyan)

* **PathHD (ours, 1 call):** Approximately 0.15s (median), whisker extends to approximately 0.2s. (Dark Blue)

**Trends:**

* PathHD has the second-lowest median latency (about 0.15s); only ChatGPT (1 call, about 0.1s) reads lower.

* RoG has the highest median latency.

* ChatGPT, PathHD, and GPT-4 form the fastest group, with medians of about 0.1–0.2s.

* UniKGQA and StructGPT have similar latencies, around 0.8s.

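* Among the LLM-based methods, latency generally rises with the number of LLM calls shown in the model labels, from about 0.1–0.2s for the single-call models to about 10s for RoG. KV-Mem and NSM, whose labels list no calls, sit at 0.3–0.4s.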
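The trend statements can be checked against the approximate readings in the Detailed Analysis. This sketch copies those readings into a dictionary with shortened model names, sorts the models by median, and reports each median relative to PathHD's. The readings are visual estimates, not measured data, and the names `READINGS` and `rank_by_median` are illustrative.

```python
# Approximate (median, p90) readings in seconds from the Detailed Analysis list.
READINGS = {
    "KV-Mem": (0.3, 0.35),
    "NSM": (0.4, 0.5),
    "ChatGPT": (0.1, 0.15),
    "GPT-4": (0.2, 0.3),
    "UniKGQA": (0.8, 1.0),
    "StructGPT": (0.8, 1.0),
    "Think-on-Graph": (3.0, 5.0),
    "GoG": (2.0, 4.0),
    "KG-Agent": (4.0, 6.0),
    "RoG": (10.0, 12.0),
    "PathHD": (0.15, 0.2),
}


def rank_by_median(readings):
    """Return (name, median, p90) tuples sorted from fastest to slowest median."""
    return sorted(((name, med, p90) for name, (med, p90) in readings.items()),
                  key=lambda row: row[1])


ranking = rank_by_median(READINGS)
pathhd_median = READINGS["PathHD"][0]
for name, med, p90 in ranking:
    # A ratio above 1 means the model's median is slower than PathHD's.
    print(f"{name:15s} median {med:5.2f}s  p90 {p90:5.2f}s  {med / pathhd_median:5.1f}x PathHD")
```

With these readings ChatGPT sorts first, PathHD second, and RoG last, with RoG's median roughly 67 times PathHD's.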

### Key Observations

* PathHD's median is far below that of every multi-call method; RoG's median (about 10s) is roughly 67 times PathHD's (about 0.15s).

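* The p90 whiskers show tail latency. The median-to-p90 gap is small (about 0.05–0.2s) for the non-LLM, single-call, and 1–2-call models and widens to about 2s for Think-on-Graph, GoG, KG-Agent, and RoG.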

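* The logarithmic scale plots ratios rather than absolute differences: 0.1s to 0.2s spans the same width as 5s to 10s. Absolute gaps among the slower models therefore look compressed, while small absolute gaps among the fastest models are spread out.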

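* The footer assumptions give the cost basis for the values: about 2.5s median (3.4s p90) per LLM call and 0.3–0.8s for non-LLM operations. Taken at face value, any method that makes at least one LLM call should have a median of at least 2.5s, so several readings above (for example, 0.1–0.2s for the single-call ChatGPT, GPT-4, and PathHD) do not match the footer and should be treated with caution.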
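Because the x-axis is logarithmic, horizontal distance reflects the ratio between two latencies rather than their difference. A minimal sketch computes where a latency falls on such an axis, assuming the axis spans the labeled ticks 10<sup>-1</sup> to 10<sup>1</sup>. The chart's true limits are not stated, and the helper `log_position` is hypothetical.

```python
import math


def log_position(latency_s: float, lo: float = 0.1, hi: float = 10.0) -> float:
    """Fractional x-position of a latency on a log10 axis from lo (0.0) to hi (1.0).

    Values above hi map past 1.0, i.e. to the right of the last labeled tick.
    """
    return (math.log10(latency_s) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))


# Equal ratios give equal widths: 0.1s -> 0.2s spans the same distance as 5s -> 10s.
gap_fast = log_position(0.2) - log_position(0.1)
gap_slow = log_position(10.0) - log_position(5.0)
print(f"0.1s->0.2s width: {gap_fast:.4f}, 5s->10s width: {gap_slow:.4f}")
```

A 0.1s absolute gap at the fast end takes up as much of the axis as a 5s gap at the slow end, which is why multi-second differences among the slower models look small on this chart.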

### Interpretation

The chart is presented as evidence that PathHD's pruning reduces query latency. PathHD makes a single LLM call and, in the readings above, is faster than every multi-call method and in the same range as the other single-call baselines (ChatGPT and GPT-4). The large gap between PathHD and RoG, which may issue up to 12 calls (B=3, D≤4), suggests that PathHD's combination of a single call with vector scoring and top-K pruning accounts for much of the saving. The p90 whiskers capture tail latency, which matters in real-world deployments, and they widen noticeably for the multi-call methods.

The footer's assumptions (2.5s median and 3.4s p90 per LLM call, against 0.3–0.8s of non-LLM work) indicate that the plotted latencies appear to be derived from a cost model in which LLM calls dominate, so reducing the number of calls is the main lever for speeding up a query. The logarithmic scale suits the wide range of values, from about 0.1s to over 10s, but it shows ratios rather than absolute differences, so multi-second gaps among the slower models look smaller than they are. Overall, the chart offers a compact comparison that can inform model selection when latency is a constraint.