## Diagram: Hierarchical Flowchart of Rule Extraction Methods from Feedforward Neural Networks

### Overview

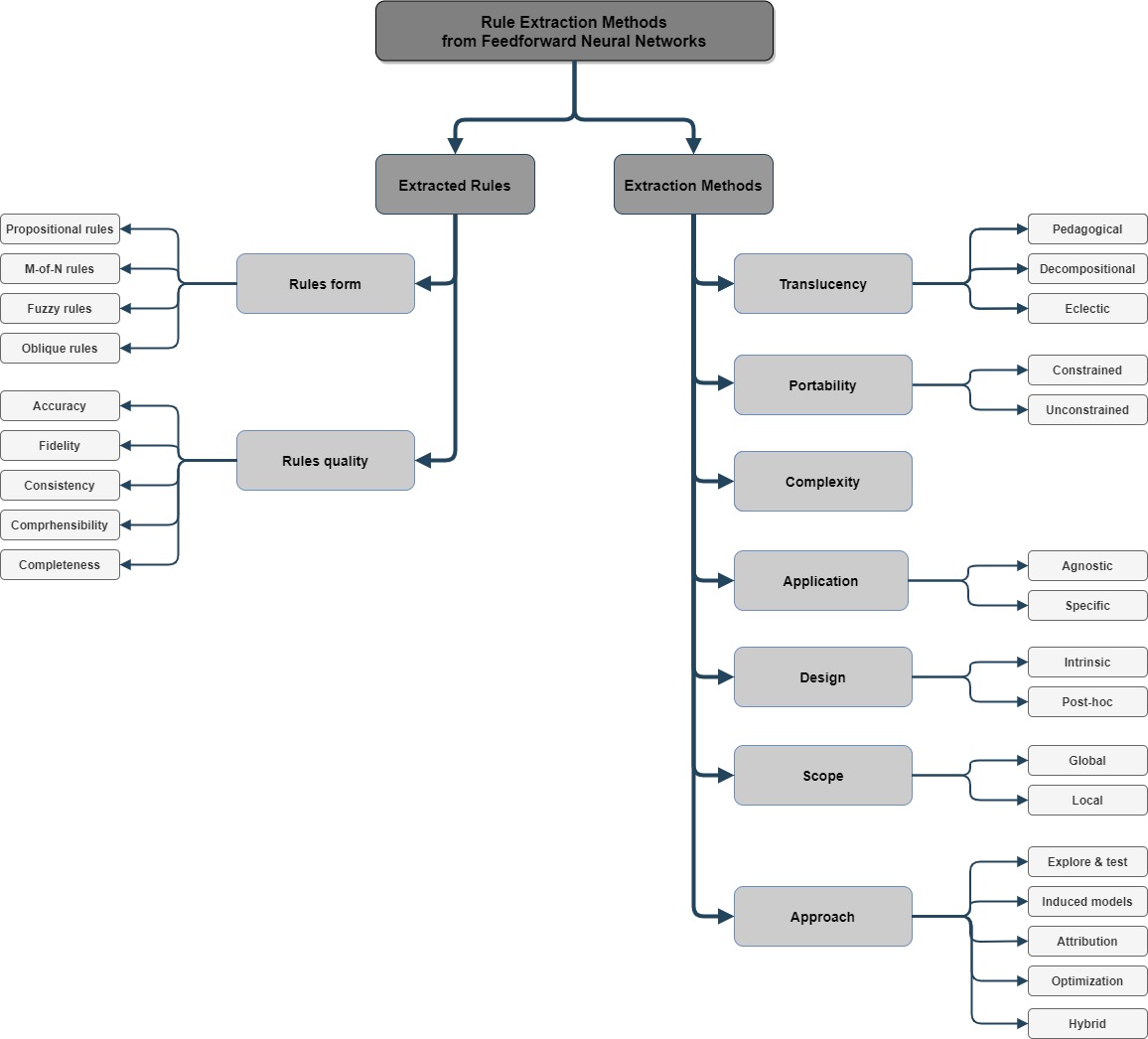

The image is a hierarchical flowchart presenting a taxonomy of "Rule Extraction Methods from Feedforward Neural Networks." It organizes the field into two primary branches: the characteristics of the extracted rules and the methods used to extract them. The diagram uses a top-down tree structure with rectangular nodes connected by directional arrows.

### Components/Axes

* **Main Title (Top-Center):** "Rule Extraction Methods from Feedforward Neural Networks" in a dark grey box.

* **Primary Branches (Second Level):** Two dark grey boxes stemming from the main title:

    * Left: "Extracted Rules"

    * Right: "Extraction Methods"

* **Secondary Categories (Third Level):** Lighter grey boxes connected to the primary branches.

* **Leaf Nodes (Final Level):** White boxes with black text, representing specific types, criteria, or sub-methods.

* **Flow Direction:** The flow is strictly top-down and hierarchical. Arrows point from parent categories to their constituent sub-categories.

### Detailed Analysis

The diagram is segmented into two main regions for analysis:

**Region 1: Left Branch - "Extracted Rules"**

This branch classifies the output of the extraction process.

* **"Extracted Rules"** splits into two sub-categories:

    1. **"Rules form"** (Light grey box, center-left). This node branches into four specific rule types:

        * "Propositional rules"

        * "M-of-N rules"

        * "Fuzzy rules"

        * "Oblique rules"

    2. **"Rules quality"** (Light grey box, below "Rules form"). This node branches into five evaluation criteria:

        * "Accuracy"

        * "Fidelity"

        * "Consistency"

        * "Comprehensibility"

        * "Completeness"
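Two of the quality criteria listed above lend themselves to a small numeric illustration. The sketch below assumes the common reading of the terms, which the diagram itself does not define: accuracy compares the rules' predictions with the true labels, while fidelity compares them with the network's own predictions. The function name `agreement` and all label values are hypothetical toy data.

```python
from typing import Sequence


def agreement(pred_a: Sequence[int], pred_b: Sequence[int]) -> float:
    """Fraction of positions at which two label sequences agree."""
    if len(pred_a) != len(pred_b) or len(pred_a) == 0:
        raise ValueError("label sequences must be non-empty and equally long")
    return sum(a == b for a, b in zip(pred_a, pred_b)) / len(pred_a)


# Hypothetical toy labels for five examples (not taken from the diagram).
true_labels = [1, 0, 1, 1, 0]     # ground truth
network_labels = [1, 0, 1, 0, 0]  # what the neural network predicts
rule_labels = [1, 0, 1, 0, 1]     # what the extracted rules predict

# Assumed definitions: accuracy is measured against the ground truth,
# fidelity against the network the rules were extracted from.
accuracy = agreement(rule_labels, true_labels)
fidelity = agreement(rule_labels, network_labels)

print(f"accuracy={accuracy:.2f} fidelity={fidelity:.2f}")
```

In this toy case the rules track the network more closely than they track the ground truth, which is why the two criteria are listed separately.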

**Region 2: Right Branch - "Extraction Methods"**

This branch classifies the techniques used to derive rules from neural networks.

* **"Extraction Methods"** (Dark grey box, top-right) is the parent node for seven classification dimensions, each represented by a light grey box:

    1. **"Translucency"**: Branches into "Pedagogical", "Decompositional", "Eclectic".

    2. **"Portability"**: Branches into "Constrained", "Unconstrained".

    3. **"Complexity"**: A terminal node with no further sub-branches shown.

    4. **"Application"**: Branches into "Agnostic", "Specific".

    5. **"Design"**: Branches into "Intrinsic", "Post-hoc".

    6. **"Scope"**: Branches into "Global", "Local".

    7. **"Approach"**: Branches into five methodological approaches: "Explore & test", "Induced models", "Attribution", "Optimization", "Hybrid".
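One way to read the dimensions above is as fields of a record, with each field taking one of the leaf values shown in the diagram. The following is a minimal sketch under that assumption. The class names and `MethodProfile` are hypothetical, "Complexity" is left out because the diagram shows no options for it, and the profile count assumes the dimensions can be combined freely, which the diagram does not state.

```python
from dataclasses import dataclass, fields
from enum import Enum
from math import prod

# Each enum mirrors the leaf nodes of one dimension in the diagram.
Translucency = Enum("Translucency", ["Pedagogical", "Decompositional", "Eclectic"])
Portability = Enum("Portability", ["Constrained", "Unconstrained"])
Application = Enum("Application", ["Agnostic", "Specific"])
Design = Enum("Design", ["Intrinsic", "Post-hoc"])
Scope = Enum("Scope", ["Global", "Local"])
Approach = Enum(
    "Approach",
    ["Explore & test", "Induced models", "Attribution", "Optimization", "Hybrid"],
)


@dataclass(frozen=True)
class MethodProfile:
    """Hypothetical record: one choice per subdivided dimension."""

    translucency: Translucency
    portability: Portability
    application: Application
    design: Design
    scope: Scope
    approach: Approach

    def describe(self) -> str:
        # Join the chosen leaf names in field order.
        return ", ".join(getattr(self, f.name).name for f in fields(self))


example = MethodProfile(
    Translucency["Pedagogical"],
    Portability["Unconstrained"],
    Application["Agnostic"],
    Design["Post-hoc"],
    Scope["Global"],
    Approach["Optimization"],
)
print(example.describe())

# Upper bound on distinct profiles if every combination were allowed (an assumption).
n_profiles = prod(
    len(e) for e in (Translucency, Portability, Application, Design, Scope, Approach)
)
print(n_profiles)
```

Keeping each dimension as its own type means a profile cannot silently mix up, say, a scope with a design choice.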

### Key Observations

* **Structural Symmetry:** The diagram splits the taxonomy into two complementary halves, with the "Extracted Rules" branch covering the *what* (outputs and their qualities) and the "Extraction Methods" branch covering the *how* (techniques and their attributes). The symmetry is conceptual; the two branches are not equal in size.

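* **Categorization Depth:** The "Extraction Methods" branch is more extensively subdivided (seven categories and 16 leaf nodes, against two categories and nine leaf nodes under "Extracted Rules"). This suggests that the diagram treats extraction techniques as more varied than the forms and quality metrics of the rules themselves.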

* **Terminal Nodes:** "Complexity" under "Extraction Methods" is the only category presented as a standalone concept, without further subdivision in this diagram.

* **Visual Hierarchy:** Color coding (dark grey for primary categories, light grey for secondary, white for tertiary) and spatial positioning (left vs. right main branch) are used effectively to denote hierarchy and separate conceptual domains.
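The depth and terminal-node observations above can be checked by writing the tree down as data. This is a sketch only: the nested dictionary transcribes the node labels from the diagram, while the names `TAXONOMY` and `summarize` are hypothetical.

```python
# The diagram's tree: branch -> category -> leaf nodes.
TAXONOMY = {
    "Extracted Rules": {
        "Rules form": [
            "Propositional rules", "M-of-N rules", "Fuzzy rules", "Oblique rules",
        ],
        "Rules quality": [
            "Accuracy", "Fidelity", "Consistency", "Comprehensibility", "Completeness",
        ],
    },
    "Extraction Methods": {
        "Translucency": ["Pedagogical", "Decompositional", "Eclectic"],
        "Portability": ["Constrained", "Unconstrained"],
        "Complexity": [],  # shown without sub-branches
        "Application": ["Agnostic", "Specific"],
        "Design": ["Intrinsic", "Post-hoc"],
        "Scope": ["Global", "Local"],
        "Approach": [
            "Explore & test", "Induced models", "Attribution", "Optimization", "Hybrid",
        ],
    },
}


def summarize(branch: dict) -> tuple:
    """Return (number of categories, number of leaves, categories without leaves)."""
    n_categories = len(branch)
    n_leaves = sum(len(leaves) for leaves in branch.values())
    terminal = [name for name, leaves in branch.items() if not leaves]
    return n_categories, n_leaves, terminal


for name, branch in TAXONOMY.items():
    print(name, summarize(branch))
```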

### Interpretation

This diagram serves as a conceptual map for the field of rule extraction from neural networks. It presents the discipline not as monolithic but as organized along several classification axes that appear largely independent of one another.

* **Relationship Between Elements:** The structure implies that any specific rule extraction technique can be described by selecting one option from each of the six subdivided dimensions under "Extraction Methods" (e.g., a method could be Pedagogical, Unconstrained, Agnostic, Post-hoc, Global, and based on an Optimization approach). The seventh dimension, "Complexity", has no options shown and presumably characterizes a method without a fixed set of values. The quality of the rules a method produces ("Extracted Rules") can then be evaluated against the five criteria listed.

* **Underlying Message:** The taxonomy highlights the trade-offs and design choices inherent in the field. For instance, the "Translucency" dimension (Pedagogical vs. Decompositional, with Eclectic combining the two) represents a fundamental choice between treating the neural network as a black box and opening it up. The "Design" dimension (Intrinsic vs. Post-hoc) distinguishes between methods built into the network's training and those applied afterward.

* **Utility:** This framework helps researchers position their work, helps practitioners select methods that fit their needs (prioritizing, e.g., "Comprehensibility" or "Fidelity"), and gives students a structured overview of a complex topic. It systematizes what could otherwise be a disjointed collection of algorithms and metrics.
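The black-box versus open-box choice described under "Translucency" can be made concrete with a toy example. The sketch below is an illustration under strong assumptions, not a published extraction algorithm: the "network" is a single hypothetical threshold unit over binary inputs, `pedagogical_extract` only calls its prediction function, and `decompositional_extract` reads an M-of-N rule directly from the weights and handles only equal, positive weights.

```python
from itertools import product
from math import ceil

# Hypothetical toy "network": one threshold unit over three binary inputs.
WEIGHTS = [1.0, 1.0, 1.0]
BIAS = -2.0


def predict(x):
    """Fire (return 1) when the weighted sum plus bias is non-negative."""
    return int(sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS >= 0)


def pedagogical_extract(predict_fn, n_inputs):
    """Black box: query every binary input and keep those classified as 1.

    Each returned tuple can be read as one propositional rule.
    """
    return [x for x in product((0, 1), repeat=n_inputs) if predict_fn(x) == 1]


def decompositional_extract(weights, bias):
    """Open box: derive an M-of-N rule from the unit's parameters.

    Simplifying assumption: all weights are equal and positive, so the unit
    fires exactly when at least M of its N inputs are on.
    """
    w = weights[0]
    if w <= 0 or any(wi != w for wi in weights):
        raise ValueError("sketch only handles equal, positive weights")
    m = max(0, ceil(-bias / w))
    return m, len(weights)


positive_inputs = pedagogical_extract(predict, len(WEIGHTS))
m, n = decompositional_extract(WEIGHTS, BIAS)

print(positive_inputs)
print(f"{m}-of-{n}")
```

Both routes describe the same behavior here: every input the black-box search marks as positive has at least `m` active inputs. The pedagogical route needs only the prediction function but enumerates all inputs, whereas the decompositional route needs access to the parameters.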