TECHNICAL ASSET FINGERPRINT

b81a95acab269a58788bfb3c

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

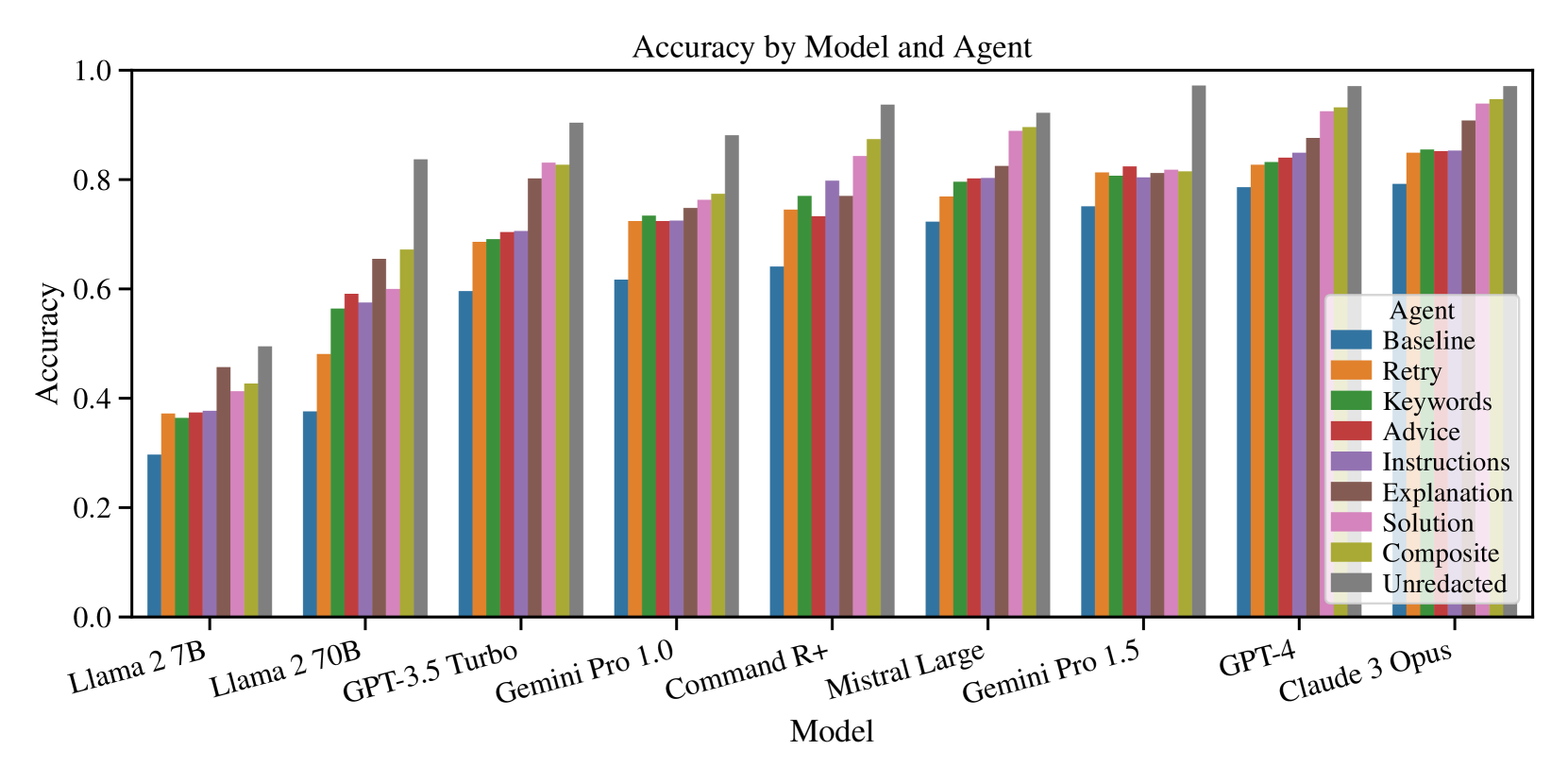

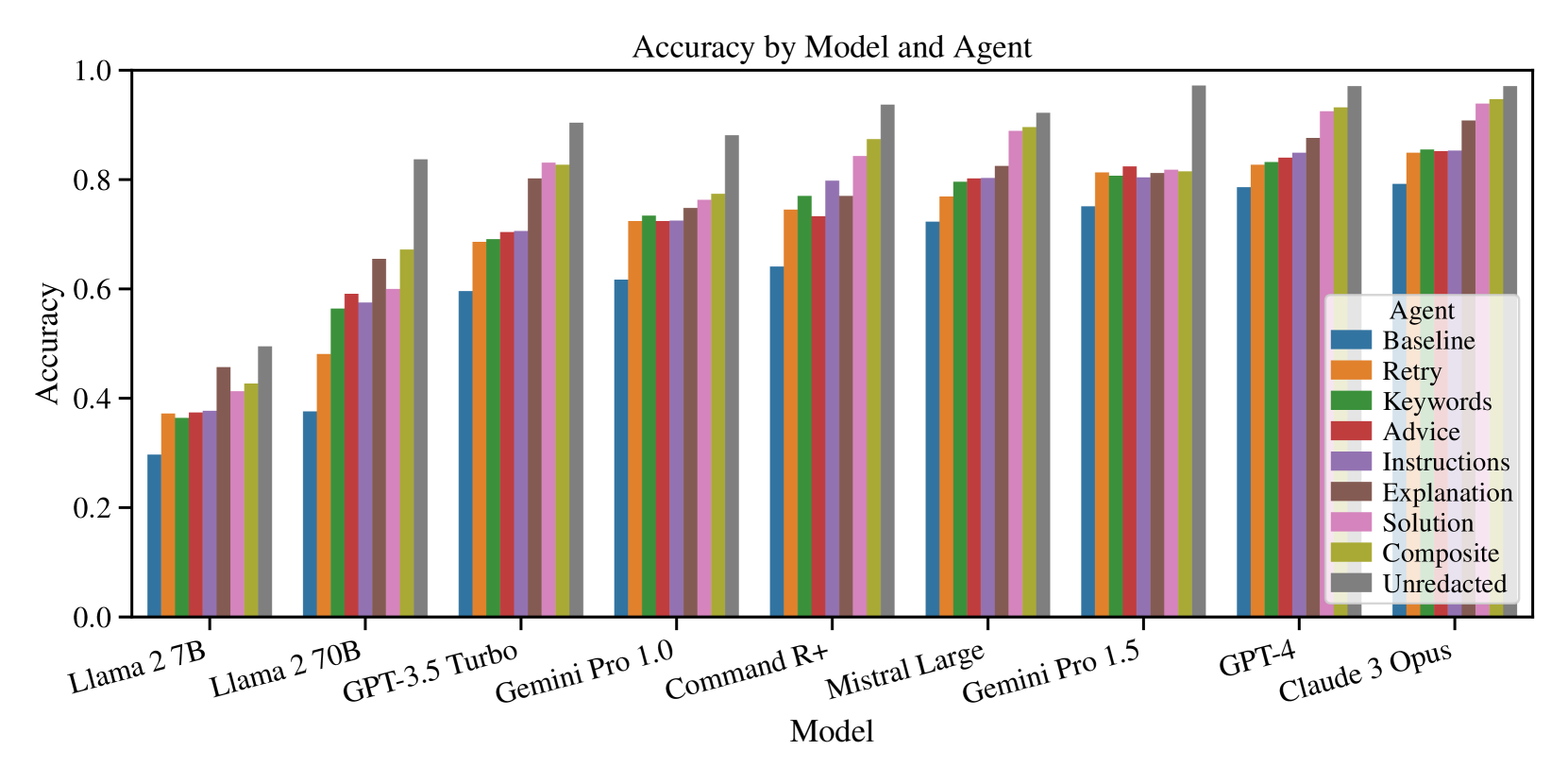

## Bar Chart: Accuracy by Model and Agent

### Overview

The image is a bar chart comparing the accuracy of different language models (Llama 2 7B, Llama 2 70B, GPT-3.5 Turbo, Gemini Pro 1.0, Command R+, Mistral Large, Gemini Pro 1.5, GPT-4, Claude 3 Opus) when using various prompting strategies ("Agents": Baseline, Retry, Keywords, Advice, Instructions, Explanation, Solution, Composite, Unredacted). The y-axis represents accuracy, ranging from 0.0 to 1.0. Each model has a cluster of bars, each representing a different agent.

### Components/Axes

* **Title:** Accuracy by Model and Agent

* **X-axis:** Model (Llama 2 7B, Llama 2 70B, GPT-3.5 Turbo, Gemini Pro 1.0, Command R+, Mistral Large, Gemini Pro 1.5, GPT-4, Claude 3 Opus)

* **Y-axis:** Accuracy (scale from 0.0 to 1.0, with increments of 0.2)

* **Legend (top-right):**

* Baseline (Blue)

* Retry (Orange)

* Keywords (Green)

* Advice (Red)

* Instructions (Purple)

* Explanation (Brown)

* Solution (Pink)

* Composite (Olive/Yellow-Green)

* Unredacted (Gray)

### Detailed Analysis

**Data Extraction and Trend Verification:**

* **Llama 2 7B:**

* Baseline (Blue): ~0.3

* Retry (Orange): ~0.37

* Keywords (Green): ~0.37

* Advice (Red): ~0.4

* Instructions (Purple): ~0.45

* Explanation (Brown): ~0.48

* Solution (Pink): ~0.4

* Composite (Olive/Yellow-Green): ~0.42

* Unredacted (Gray): ~0.5

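* Trend: Accuracy rises overall from Baseline (~0.3) to Unredacted (~0.5), but not monotonically: Solution (~0.4) and Composite (~0.42) fall below Explanation (~0.48).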

* **Llama 2 70B:**

* Baseline (Blue): ~0.37

* Retry (Orange): ~0.48

* Keywords (Green): ~0.55

* Advice (Red): ~0.58

* Instructions (Purple): ~0.6

* Explanation (Brown): ~0.65

* Solution (Pink): ~0.58

* Composite (Olive/Yellow-Green): ~0.6

* Unredacted (Gray): ~0.7

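* Trend: Accuracy rises overall from Baseline (~0.37) to Unredacted (~0.7), but not monotonically: Solution (~0.58) and Composite (~0.6) fall below Explanation (~0.65).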

* **GPT-3.5 Turbo:**

* Baseline (Blue): ~0.6

* Retry (Orange): ~0.68

* Keywords (Green): ~0.7

* Advice (Red): ~0.7

* Instructions (Purple): ~0.78

* Explanation (Brown): ~0.8

* Solution (Pink): ~0.7

* Composite (Olive/Yellow-Green): ~0.7

* Unredacted (Gray): ~0.83

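* Trend: Accuracy rises overall from Baseline (~0.6) to Unredacted (~0.83), but not monotonically: Solution and Composite (~0.7 each) fall below Explanation (~0.8).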

* **Gemini Pro 1.0:**

* Baseline (Blue): ~0.6

* Retry (Orange): ~0.72

* Keywords (Green): ~0.72

* Advice (Red): ~0.72

* Instructions (Purple): ~0.75

* Explanation (Brown): ~0.78

* Solution (Pink): ~0.72

* Composite (Olive/Yellow-Green): ~0.75

* Unredacted (Gray): ~0.88

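* Trend: Accuracy rises overall from Baseline (~0.6) to Unredacted (~0.88), but not monotonically: Retry, Keywords, and Advice are flat at ~0.72, and Solution (~0.72) and Composite (~0.75) fall below Explanation (~0.78).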

* **Command R+:**

* Baseline (Blue): ~0.6

* Retry (Orange): ~0.75

* Keywords (Green): ~0.75

* Advice (Red): ~0.75

* Instructions (Purple): ~0.78

* Explanation (Brown): ~0.8

* Solution (Pink): ~0.75

* Composite (Olive/Yellow-Green): ~0.78

* Unredacted (Gray): ~0.88

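* Trend: Accuracy rises overall from Baseline (~0.6) to Unredacted (~0.88), but not monotonically: Solution (~0.75) and Composite (~0.78) fall below Explanation (~0.8).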

* **Mistral Large:**

* Baseline (Blue): ~0.7

* Retry (Orange): ~0.78

* Keywords (Green): ~0.75

* Advice (Red): ~0.75

* Instructions (Purple): ~0.8

* Explanation (Brown): ~0.85

* Solution (Pink): ~0.78

* Composite (Olive/Yellow-Green): ~0.8

* Unredacted (Gray): ~0.9

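* Trend: Accuracy rises overall from Baseline (~0.7) to Unredacted (~0.9), but not monotonically: Keywords and Advice (~0.75) fall below Retry (~0.78), and Solution (~0.78) and Composite (~0.8) fall below Explanation (~0.85).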

* **Gemini Pro 1.5:**

* Baseline (Blue): ~0.7

* Retry (Orange): ~0.8

* Keywords (Green): ~0.8

* Advice (Red): ~0.8

* Instructions (Purple): ~0.83

* Explanation (Brown): ~0.85

* Solution (Pink): ~0.8

* Composite (Olive/Yellow-Green): ~0.83

* Unredacted (Gray): ~0.95

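* Trend: Accuracy rises overall from Baseline (~0.7) to Unredacted (~0.95), but not monotonically: Solution (~0.8) and Composite (~0.83) fall below Explanation (~0.85).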

* **GPT-4:**

* Baseline (Blue): ~0.8

* Retry (Orange): ~0.8

* Keywords (Green): ~0.83

* Advice (Red): ~0.85

* Instructions (Purple): ~0.9

* Explanation (Brown): ~0.93

* Solution (Pink): ~0.85

* Composite (Olive/Yellow-Green): ~0.88

* Unredacted (Gray): ~0.98

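* Trend: Accuracy rises overall from Baseline (~0.8) to Unredacted (~0.98), but not monotonically: Baseline and Retry tie at ~0.8, and Solution (~0.85) and Composite (~0.88) fall below Explanation (~0.93).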

* **Claude 3 Opus:**

* Baseline (Blue): ~0.85

* Retry (Orange): ~0.85

* Keywords (Green): ~0.9

* Advice (Red): ~0.85

* Instructions (Purple): ~0.9

* Explanation (Brown): ~0.93

* Solution (Pink): ~0.85

* Composite (Olive/Yellow-Green): ~0.9

* Unredacted (Gray): ~0.95

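* Trend: Accuracy rises overall from Baseline (~0.85) to Unredacted (~0.95), but not monotonically: Advice (~0.85) falls below Keywords (~0.9), and Solution (~0.85) and Composite (~0.9) fall below Explanation (~0.93).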

### Key Observations

* The "Unredacted" agent consistently yields the highest accuracy across all models.

* The "Baseline" agent generally results in the lowest accuracy.

* Accuracy generally increases as the models progress from Llama 2 7B to Claude 3 Opus.

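* The spread between agents is widest for Llama 2 70B (~0.33 from Baseline to Unredacted) and narrowest for Claude 3 Opus (~0.10); Llama 2 7B (~0.2) is comparable to Mistral Large (~0.2), so the spread does not shrink steadily with model strength.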

### Interpretation

The chart shows how different prompting strategies ("Agents") affect the accuracy of various language models. The "Unredacted" agent, which presumably gives the model the complete, unaltered input, outperforms every other strategy for every model, suggesting that more context generally improves performance. The "Baseline" agent, likely a minimal or default prompting approach, yields the lowest or tied-lowest accuracy in each group, which points to the value of prompt engineering. The rise in accuracy from Llama 2 7B to Claude 3 Opus reflects differences in model capability. In these estimates the Baseline-to-Unredacted gap is widest for Llama 2 70B (~0.33) and narrowest for Claude 3 Opus (~0.10), which hints that prompt engineering matters more for less capable models, although Llama 2 7B (~0.2) does not follow the pattern cleanly.
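The per-model trend notes can be checked against the estimates listed in this description. The snippet below is a minimal sketch: the numbers are the approximate bar heights quoted above (not the chart's underlying data), and the helper names `drops` and `top_agent` are illustrative only.

```python
# Approximate bar heights as quoted in this description (visual estimates).
AGENTS = ["Baseline", "Retry", "Keywords", "Advice", "Instructions",
          "Explanation", "Solution", "Composite", "Unredacted"]

ESTIMATES = {
    "Llama 2 7B":     [0.30, 0.37, 0.37, 0.40, 0.45, 0.48, 0.40, 0.42, 0.50],
    "Llama 2 70B":    [0.37, 0.48, 0.55, 0.58, 0.60, 0.65, 0.58, 0.60, 0.70],
    "GPT-3.5 Turbo":  [0.60, 0.68, 0.70, 0.70, 0.78, 0.80, 0.70, 0.70, 0.83],
    "Gemini Pro 1.0": [0.60, 0.72, 0.72, 0.72, 0.75, 0.78, 0.72, 0.75, 0.88],
    "Command R+":     [0.60, 0.75, 0.75, 0.75, 0.78, 0.80, 0.75, 0.78, 0.88],
    "Mistral Large":  [0.70, 0.78, 0.75, 0.75, 0.80, 0.85, 0.78, 0.80, 0.90],
    "Gemini Pro 1.5": [0.70, 0.80, 0.80, 0.80, 0.83, 0.85, 0.80, 0.83, 0.95],
    "GPT-4":          [0.80, 0.80, 0.83, 0.85, 0.90, 0.93, 0.85, 0.88, 0.98],
    "Claude 3 Opus":  [0.85, 0.85, 0.90, 0.85, 0.90, 0.93, 0.85, 0.90, 0.95],
}


def drops(values):
    """Return the agents whose estimate is lower than the preceding agent's."""
    return [AGENTS[i] for i in range(1, len(values)) if values[i] < values[i - 1]]


def top_agent(values):
    """Return the agent with the highest estimate (first one on ties)."""
    return AGENTS[values.index(max(values))]


for model, values in ESTIMATES.items():
    print(f"{model:15s} top={top_agent(values):10s} drops={drops(values)}")
```

Under these estimates, Unredacted is the top agent for every model, while the legend order is not strictly increasing: Solution sits below Explanation in every group.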

EXPERT: gemini-2.5-flash-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Chart Type: Grouped Bar Chart: Accuracy by Model and Agent

### Overview

This image displays a grouped bar chart titled "Accuracy by Model and Agent". It compares the accuracy performance of nine different language models across nine distinct "Agent" strategies. The X-axis represents the language models, ordered generally from less capable to more capable, while the Y-axis represents the accuracy score, ranging from 0.0 to 1.0. Each group of bars corresponds to a specific language model, and within each group, individual bars represent the accuracy achieved by different agent strategies, color-coded according to a legend located in the bottom-right of the chart.

### Components/Axes

* **Chart Title**: "Accuracy by Model and Agent" (centered at the top).

* **Y-axis Label**: "Accuracy" (vertical, on the left side).

* **Y-axis Scale**: Ranges from 0.0 to 1.0, with major tick marks and labels at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis Label**: "Model" (horizontal, below the model names).

* **X-axis Categories (Models)**: Listed horizontally below the bars, rotated slightly for readability. From left to right, these are:

1. Llama 2 7B

2. Llama 2 70B

3. GPT-3.5 Turbo

4. Gemini Pro 1.0

5. Command R+

6. Mistral Large

7. Gemini Pro 1.5

8. GPT-4

9. Claude 3 Opus

* **Legend**: Located in the bottom-right corner of the chart, titled "Agent". It lists the nine agent strategies and their corresponding colors:

* **Baseline**: Blue

* **Retry**: Orange

* **Keywords**: Green

* **Advice**: Red

* **Instructions**: Purple/Brown

* **Explanation**: Light Brown/Pinkish

* **Solution**: Pink

* **Composite**: Yellow/Olive Green

* **Unredacted**: Grey

### Detailed Analysis

The chart presents accuracy scores for each combination of model and agent. For each model, the bars are grouped, and within each group, the bars generally show an increasing trend in accuracy from left to right (from Baseline to Unredacted), indicating that most agent strategies improve performance over the baseline.

Here is a detailed breakdown of accuracy values for each model and agent:

* **Llama 2 7B**

* Baseline (blue): Approximately 0.30

* Retry (orange): Approximately 0.37

* Keywords (green): Approximately 0.37

* Advice (red): Approximately 0.38

* Instructions (purple/brown): Approximately 0.38

* Explanation (light brown/pinkish): Approximately 0.40

* Solution (pink): Approximately 0.42

* Composite (yellow/olive green): Approximately 0.43

* Unredacted (grey): Approximately 0.48

* **Llama 2 70B**

* Baseline (blue): Approximately 0.38

* Retry (orange): Approximately 0.48

* Keywords (green): Approximately 0.55

* Advice (red): Approximately 0.58

* Instructions (purple/brown): Approximately 0.59

* Explanation (light brown/pinkish): Approximately 0.60

* Solution (pink): Approximately 0.64

* Composite (yellow/olive green): Approximately 0.66

* Unredacted (grey): Approximately 0.68

* **GPT-3.5 Turbo**

* Baseline (blue): Approximately 0.59

* Retry (orange): Approximately 0.68

* Keywords (green): Approximately 0.69

* Advice (red): Approximately 0.70

* Instructions (purple/brown): Approximately 0.71

* Explanation (light brown/pinkish): Approximately 0.75

* Solution (pink): Approximately 0.78

* Composite (yellow/olive green): Approximately 0.82

* Unredacted (grey): Approximately 0.84

* **Gemini Pro 1.0**

* Baseline (blue): Approximately 0.62

* Retry (orange): Approximately 0.72

* Keywords (green): Approximately 0.73

* Advice (red): Approximately 0.72

* Instructions (purple/brown): Approximately 0.75

* Explanation (light brown/pinkish): Approximately 0.76

* Solution (pink): Approximately 0.77

* Composite (yellow/olive green): Approximately 0.78

* Unredacted (grey): Approximately 0.80

* **Command R+**

* Baseline (blue): Approximately 0.63

* Retry (orange): Approximately 0.73

* Keywords (green): Approximately 0.76

* Advice (red): Approximately 0.74

* Instructions (purple/brown): Approximately 0.77

* Explanation (light brown/pinkish): Approximately 0.78

* Solution (pink): Approximately 0.80

* Composite (yellow/olive green): Approximately 0.82

* Unredacted (grey): Approximately 0.88

* **Mistral Large**

* Baseline (blue): Approximately 0.72

* Retry (orange): Approximately 0.79

* Keywords (green): Approximately 0.80

* Advice (red): Approximately 0.81

* Instructions (purple/brown): Approximately 0.82

* Explanation (light brown/pinkish): Approximately 0.85

* Solution (pink): Approximately 0.87

* Composite (yellow/olive green): Approximately 0.90

* Unredacted (grey): Approximately 0.93

* **Gemini Pro 1.5**

* Baseline (blue): Approximately 0.74

* Retry (orange): Approximately 0.80

* Keywords (green): Approximately 0.82

* Advice (red): Approximately 0.83

* Instructions (purple/brown): Approximately 0.85

* Explanation (light brown/pinkish): Approximately 0.88

* Solution (pink): Approximately 0.90

* Composite (yellow/olive green): Approximately 0.92

* Unredacted (grey): Approximately 0.94

* **GPT-4**

* Baseline (blue): Approximately 0.79

* Retry (orange): Approximately 0.81

* Keywords (green): Approximately 0.82

* Advice (red): Approximately 0.82

* Instructions (purple/brown): Approximately 0.84

* Explanation (light brown/pinkish): Approximately 0.86

* Solution (pink): Approximately 0.88

* Composite (yellow/olive green): Approximately 0.90

* Unredacted (grey): Approximately 0.96

* **Claude 3 Opus**

* Baseline (blue): Approximately 0.80

* Retry (orange): Approximately 0.85

* Keywords (green): Approximately 0.86

* Advice (red): Approximately 0.86

* Instructions (purple/brown): Approximately 0.88

* Explanation (light brown/pinkish): Approximately 0.90

* Solution (pink): Approximately 0.92

* Composite (yellow/olive green): Approximately 0.94

* Unredacted (grey): Approximately 0.97

### Key Observations

1. **Overall Performance Trend**: There is a clear upward trend in accuracy across the models from left to right (Llama 2 7B to Claude 3 Opus), indicating that newer and generally more advanced models achieve higher accuracy. Claude 3 Opus consistently shows the highest accuracy across all agent types, closely followed by GPT-4 and Gemini Pro 1.5.

2. **Agent Effectiveness**: For almost every model, the "Baseline" agent (blue bar) consistently yields the lowest accuracy. Conversely, the "Unredacted" agent (grey bar) and "Composite" agent (yellow/olive green bar) consistently achieve the highest accuracy scores within each model group.

3. **Impact of Agents**: The use of specific agent strategies generally improves accuracy compared to the "Baseline". The absolute Baseline-to-Unredacted gain is largest for Llama 2 70B (~0.30), followed by GPT-3.5 Turbo and Command R+ (~0.25 each), and smallest for GPT-4 and Claude 3 Opus (~0.17 each); Llama 2 7B gains ~0.18. Relative to Baseline, the gain shrinks from about 60% for Llama 2 7B to roughly 21-22% for GPT-4 and Claude 3 Opus, which is still a meaningful improvement.

4. **Agent Ranking Consistency**: While the exact values vary, the relative ranking of agents within each model group tends to be somewhat consistent: Baseline < Retry/Keywords/Advice/Instructions < Explanation/Solution < Composite/Unredacted. "Unredacted" is almost always the top performer, followed closely by "Composite".

5. **Narrowing Gaps**: As model capabilities increase, the overall accuracy scores converge towards 1.0, and the performance differences between various agent strategies, while still present, appear to become slightly smaller in absolute terms for the highest-performing models.
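The absolute and relative gains discussed in the observations above can be recomputed from the Baseline and Unredacted estimates in this description. This is a rough sketch on visually estimated values; the dictionary and the `gains` helper are illustrative names, not part of any source dataset.

```python
# (Baseline, Unredacted) estimates per model, as listed in this description.
BASELINE_UNREDACTED = {
    "Llama 2 7B":     (0.30, 0.48),
    "Llama 2 70B":    (0.38, 0.68),
    "GPT-3.5 Turbo":  (0.59, 0.84),
    "Gemini Pro 1.0": (0.62, 0.80),
    "Command R+":     (0.63, 0.88),
    "Mistral Large":  (0.72, 0.93),
    "Gemini Pro 1.5": (0.74, 0.94),
    "GPT-4":          (0.79, 0.96),
    "Claude 3 Opus":  (0.80, 0.97),
}


def gains(baseline, unredacted):
    """Return (absolute gain, gain relative to baseline), rounded to 2 places."""
    absolute = round(unredacted - baseline, 2)
    relative = round(absolute / baseline, 2)
    return absolute, relative


for model, (base, unred) in BASELINE_UNREDACTED.items():
    absolute, relative = gains(base, unred)
    print(f"{model:15s} absolute=+{absolute:.2f} relative=+{relative:.0%}")

# Model with the largest absolute gain under these estimates.
largest = max(BASELINE_UNREDACTED, key=lambda m: gains(*BASELINE_UNREDACTED[m])[0])
print("largest absolute gain:", largest)
```

Because the inputs are read off a chart to about two decimal places, differences of 0.01 to 0.02 between models should not be over-interpreted.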

### Interpretation

The data strongly suggests that both the choice of the underlying language model and the implementation of specific agent strategies significantly impact accuracy.

1. **Model Advancement**: The progressive increase in accuracy from older/smaller models (Llama 2 7B) to state-of-the-art models (Claude 3 Opus) highlights the continuous improvement in language model capabilities. This implies that for tasks requiring high accuracy, leveraging the most advanced models available is crucial.

2. **Value of Agentic Behavior**: The consistent outperformance of agent strategies over the "Baseline" indicates that structured approaches to problem-solving, such as retrying, providing keywords, offering advice, giving instructions, explaining reasoning, or suggesting solutions, are highly effective in boosting model performance. This underscores the importance of prompt engineering and agentic design in practical applications of LLMs.

3. **"Unredacted" Performance**: The superior performance of the "Unredacted" agent across all models is a critical finding. This suggests that withholding or redacting information, even if intended for privacy or security, might inadvertently hinder the model's ability to achieve optimal accuracy. It implies that models perform best when given full context, and any form of redaction or summarization could lead to information loss that impacts decision-making or task completion.

4. **"Composite" Strategy**: The high performance of the "Composite" agent indicates that combining multiple strategies can be a powerful approach. This suggests that a multi-faceted agent architecture, which dynamically applies different techniques based on the problem or context, could be more robust and effective than relying on a single strategy.

5. **Diminishing Returns (Relative)**: While agents always help, the absolute gain from using agents might be less dramatic for models that are already performing at a very high level (e.g., 0.90+ accuracy). However, even small gains at the high end of performance can be significant in critical applications. For less capable models, agents offer a substantial boost, making them more viable for certain tasks.

In essence, to maximize accuracy, one should aim to use the most capable language model available and augment it with sophisticated agentic strategies, particularly those that provide comprehensive context ("Unredacted") or combine multiple problem-solving techniques ("Composite"). The trade-off between data redaction and accuracy needs careful consideration based on the specific application's requirements.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: Accuracy by Model and Agent

### Overview

This bar chart visualizes the accuracy achieved by different language models when paired with various agent strategies. The x-axis represents the language model, and the y-axis represents the accuracy score, ranging from 0.0 to 1.0. Each model has a group of bars, each representing a different agent strategy.

### Components/Axes

* **Title:** Accuracy by Model and Agent

* **X-axis:** Model (Labels: Llama 2 7B, Llama 2 70B, GPT-3.5 Turbo, Gemini Pro 1.0, Command R+, Mistral Large, Gemini Pro 1.5, GPT-4, Claude 3 Opus)

* **Y-axis:** Accuracy (Scale: 0.0 to 1.0)

* **Legend:**

* Agent (Blue)

* Baseline (Orange)

* Retry (Yellow)

* Keywords (Red)

* Advice (Brown)

* Instructions (Dark Gray)

* Explanation (Light Gray)

* Solution (Purple)

* Composite (Green)

* Unredacted (Teal)

### Detailed Analysis

The chart consists of grouped bar plots for each model. I will analyze each model and its corresponding agent strategies. Note that values are approximate due to the resolution of the image.

* **Llama 2 7B:**

* Agent: ~0.48

* Baseline: ~0.45

* Retry: ~0.47

* Keywords: ~0.46

* Advice: ~0.44

* Instructions: ~0.43

* Explanation: ~0.42

* Solution: ~0.41

* Composite: ~0.40

* Unredacted: ~0.39

* **Llama 2 70B:**

* Agent: ~0.58

* Baseline: ~0.55

* Retry: ~0.57

* Keywords: ~0.56

* Advice: ~0.54

* Instructions: ~0.53

* Explanation: ~0.52

* Solution: ~0.51

* Composite: ~0.50

* Unredacted: ~0.49

* **GPT-3.5 Turbo:**

* Agent: ~0.75

* Baseline: ~0.72

* Retry: ~0.74

* Keywords: ~0.73

* Advice: ~0.71

* Instructions: ~0.70

* Explanation: ~0.69

* Solution: ~0.68

* Composite: ~0.67

* Unredacted: ~0.66

* **Gemini Pro 1.0:**

* Agent: ~0.78

* Baseline: ~0.75

* Retry: ~0.77

* Keywords: ~0.76

* Advice: ~0.74

* Instructions: ~0.73

* Explanation: ~0.72

* Solution: ~0.71

* Composite: ~0.70

* Unredacted: ~0.69

* **Command R+:**

* Agent: ~0.72

* Baseline: ~0.69

* Retry: ~0.71

* Keywords: ~0.70

* Advice: ~0.68

* Instructions: ~0.67

* Explanation: ~0.66

* Solution: ~0.65

* Composite: ~0.64

* Unredacted: ~0.63

* **Mistral Large:**

* Agent: ~0.85

* Baseline: ~0.82

* Retry: ~0.84

* Keywords: ~0.83

* Advice: ~0.81

* Instructions: ~0.80

* Explanation: ~0.79

* Solution: ~0.78

* Composite: ~0.77

* Unredacted: ~0.76

* **Gemini Pro 1.5:**

* Agent: ~0.88

* Baseline: ~0.85

* Retry: ~0.87

* Keywords: ~0.86

* Advice: ~0.84

* Instructions: ~0.83

* Explanation: ~0.82

* Solution: ~0.81

* Composite: ~0.80

* Unredacted: ~0.79

* **GPT-4:**

* Agent: ~0.92

* Baseline: ~0.89

* Retry: ~0.91

* Keywords: ~0.90

* Advice: ~0.88

* Instructions: ~0.87

* Explanation: ~0.86

* Solution: ~0.85

* Composite: ~0.84

* Unredacted: ~0.83

* **Claude 3 Opus:**

* Agent: ~0.95

* Baseline: ~0.92

* Retry: ~0.94

* Keywords: ~0.93

* Advice: ~0.91

* Instructions: ~0.90

* Explanation: ~0.89

* Solution: ~0.88

* Composite: ~0.87

* Unredacted: ~0.86

### Key Observations

* Accuracy generally increases with more powerful models (moving from left to right on the x-axis).

* The "Agent" strategy consistently outperforms other strategies across all models.

* The difference in accuracy between the highest and lowest strategy is roughly constant (~0.09) across all models in these estimates.

* Llama 2 7B and Llama 2 70B have significantly lower accuracy scores compared to the other models.

* The "Unredacted" strategy consistently has the lowest accuracy across all models.

### Interpretation

The data shows a clear correlation between model capability and accuracy when combined with different agent strategies. More advanced models like Claude 3 Opus and GPT-4 achieve significantly higher accuracy scores than less powerful models like Llama 2 7B. The "Agent" strategy appears to be the most effective overall, suggesting that a well-designed agent can improve the performance of a language model. The consistently lower performance of the "Unredacted" strategy suggests that redacting information may be beneficial for certain tasks, potentially by reducing noise or ambiguity. The estimated gap between the highest and lowest strategy stays near 0.09 for every model, so these values do not show stronger models benefiting more from particular strategies. This data could be used to inform the selection of appropriate models and agent strategies for specific applications. The same ordering of strategies appears for every model, which suggests a stable relationship between these variables.
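The spread between the highest and lowest strategy can be computed directly from the estimates listed in this description. The sketch below uses only the first ("Agent") and last ("Unredacted") values quoted for each model; the variable names are illustrative.

```python
# (highest listed value, lowest listed value) per model in this description.
HIGH_LOW = {
    "Llama 2 7B":     (0.48, 0.39),
    "Llama 2 70B":    (0.58, 0.49),
    "GPT-3.5 Turbo":  (0.75, 0.66),
    "Gemini Pro 1.0": (0.78, 0.69),
    "Command R+":     (0.72, 0.63),
    "Mistral Large":  (0.85, 0.76),
    "Gemini Pro 1.5": (0.88, 0.79),
    "GPT-4":          (0.92, 0.83),
    "Claude 3 Opus":  (0.95, 0.86),
}

# Round to two places because the inputs are two-decimal visual estimates.
spreads = {model: round(high - low, 2) for model, (high, low) in HIGH_LOW.items()}

for model, spread in spreads.items():
    print(f"{model:15s} spread={spread:.2f}")
```

Every model yields the same spread under these estimates, so the listed values on their own give no evidence that the gap between strategies changes with model strength.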

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: Accuracy by Model and Agent

### Overview

This is a grouped bar chart comparing the performance accuracy of nine different large language models (LLMs) across nine distinct "agent" configurations or prompting strategies. The chart visualizes how accuracy varies both by model and by the agent method applied.

### Components/Axes

* **Title:** "Accuracy by Model and Agent"

* **Y-Axis:** Labeled "Accuracy". Scale ranges from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Labeled "Model". Lists nine distinct models:

1. Llama 2 7B

2. Llama 2 70B

3. GPT-3.5 Turbo

4. Gemini Pro 1.0

5. Command R+

6. Mistral Large

7. Gemini Pro 1.5

8. GPT-4

9. Claude 3 Opus

* **Legend:** Positioned in the bottom-right quadrant, overlapping the bars for the last two models. It defines the "Agent" types by color:

* **Baseline:** Blue

* **Retry:** Orange

* **Keywords:** Green

* **Advice:** Red

* **Instructions:** Purple

* **Explanation:** Brown

* **Solution:** Pink

* **Composite:** Olive/Yellow-Green

* **Unredacted:** Gray

### Detailed Analysis

Below are the approximate accuracy values for each agent within each model group, derived from visual inspection of the bar heights. Values are estimated to the nearest 0.01.

**1. Llama 2 7B**

* **Trend:** Generally low accuracy, with a gradual increase from Baseline to Unredacted.

* **Values:** Baseline (~0.30), Retry (~0.37), Keywords (~0.36), Advice (~0.37), Instructions (~0.38), Explanation (~0.45), Solution (~0.41), Composite (~0.42), Unredacted (~0.50).

**2. Llama 2 70B**

* **Trend:** Significant improvement over the 7B model. A clear upward trend from Baseline to Unredacted, with a notable jump for the Unredacted agent.

* **Values:** Baseline (~0.38), Retry (~0.48), Keywords (~0.56), Advice (~0.59), Instructions (~0.57), Explanation (~0.65), Solution (~0.60), Composite (~0.67), Unredacted (~0.84).

**3. GPT-3.5 Turbo**

* **Trend:** Higher overall accuracy. A steady, step-wise increase across agents, with Unredacted performing best.

* **Values:** Baseline (~0.60), Retry (~0.69), Keywords (~0.69), Advice (~0.70), Instructions (~0.71), Explanation (~0.80), Solution (~0.83), Composite (~0.82), Unredacted (~0.90).

**4. Gemini Pro 1.0**

* **Trend:** Similar pattern to GPT-3.5 Turbo but with slightly lower peak accuracy for Unredacted.

* **Values:** Baseline (~0.61), Retry (~0.72), Keywords (~0.73), Advice (~0.72), Instructions (~0.72), Explanation (~0.75), Solution (~0.77), Composite (~0.78), Unredacted (~0.88).

**5. Command R+**

* **Trend:** Strong performance, with a pronounced peak for the Unredacted agent.

* **Values:** Baseline (~0.64), Retry (~0.74), Keywords (~0.77), Advice (~0.73), Instructions (~0.80), Explanation (~0.77), Solution (~0.84), Composite (~0.87), Unredacted (~0.94).

**6. Mistral Large**

* **Trend:** High and relatively flat performance across most agents, with Unredacted and Composite leading.

* **Values:** Baseline (~0.72), Retry (~0.77), Keywords (~0.79), Advice (~0.80), Instructions (~0.80), Explanation (~0.82), Solution (~0.89), Composite (~0.90), Unredacted (~0.92).

**7. Gemini Pro 1.5**

* **Trend:** Very high accuracy, with most agents clustering above 0.80. Unredacted is the clear outlier at the top.

* **Values:** Baseline (~0.75), Retry (~0.81), Keywords (~0.81), Advice (~0.82), Instructions (~0.81), Explanation (~0.81), Solution (~0.81), Composite (~0.81), Unredacted (~0.97).

**8. GPT-4**

* **Trend:** Consistently high accuracy across all agents, with a gradual increase towards the rightmost agents.

* **Values:** Baseline (~0.79), Retry (~0.83), Keywords (~0.83), Advice (~0.84), Instructions (~0.85), Explanation (~0.88), Solution (~0.93), Composite (~0.93), Unredacted (~0.97).

**9. Claude 3 Opus**

* **Trend:** The highest-performing model overall. All agents except Baseline (~0.79) score above 0.80, with a tight cluster at the top end.

* **Values:** Baseline (~0.79), Retry (~0.85), Keywords (~0.85), Advice (~0.85), Instructions (~0.85), Explanation (~0.91), Solution (~0.94), Composite (~0.95), Unredacted (~0.97).

### Key Observations

1. **Model Performance Hierarchy:** There is a clear progression in overall accuracy from left to right on the x-axis. Llama 2 7B is the lowest-performing model, while Claude 3 Opus and GPT-4 are the highest.

2. **Agent Effect:** Within every single model group, the **Unredacted** agent (gray bar) achieves the highest accuracy. The **Baseline** agent (blue bar) is consistently the lowest or among the lowest.

3. **Performance Clustering:** For the top-performing models (Gemini Pro 1.5, GPT-4, Claude 3 Opus), the accuracy scores for many agents (Retry, Keywords, Advice, Instructions) are very similar, forming a plateau. The major differentiators at the top are the Explanation, Solution, Composite, and especially Unredacted agents.

4. **Non-Linear Improvement:** The jump in accuracy from Llama 2 7B to Llama 2 70B is substantial, particularly for the more advanced agents (Explanation, Composite, Unredacted), indicating that model scale significantly amplifies the benefits of these prompting strategies.
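The 7B-to-70B comparison in the last observation can be made concrete with the per-agent estimates from this description. This is a small sketch on visually estimated values; `gain_by_agent` is an illustrative name.

```python
AGENTS = ["Baseline", "Retry", "Keywords", "Advice", "Instructions",
          "Explanation", "Solution", "Composite", "Unredacted"]

# Estimates for the two Llama 2 models, as listed in this description.
LLAMA_7B = [0.30, 0.37, 0.36, 0.37, 0.38, 0.45, 0.41, 0.42, 0.50]
LLAMA_70B = [0.38, 0.48, 0.56, 0.59, 0.57, 0.65, 0.60, 0.67, 0.84]

# Accuracy gained by moving from the 7B to the 70B model, per agent.
gain_by_agent = {
    agent: round(large - small, 2)
    for agent, small, large in zip(AGENTS, LLAMA_7B, LLAMA_70B)
}

# Print agents from largest to smallest gain.
for agent, gain in sorted(gain_by_agent.items(), key=lambda item: -item[1]):
    print(f"{agent:12s} +{gain:.2f}")
```

Under these estimates the gain is smallest for Baseline and largest for Unredacted, with Composite second.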

### Interpretation

This chart demonstrates two key findings in LLM evaluation:

1. **Model Capability is Foundational:** The base capability of the model (represented by its position on the x-axis) sets the primary ceiling for performance. No agent strategy can elevate a weaker model (e.g., Llama 2 7B) to the level of a stronger one (e.g., Claude 3 Opus).

2. **Agent Strategies Unlock Potential:** The choice of agent or prompting strategy has a profound and consistent impact on accuracy *within* a given model. The "Unredacted" strategy, which likely involves providing the model with full, unfiltered context or information, universally yields the best results. This suggests that performance bottlenecks are often related to information access or framing rather than pure model reasoning. The "Baseline" strategy's poor performance highlights the inadequacy of minimal prompting.

The data implies that for optimal performance, one should use the most capable model available **and** employ advanced agent strategies like "Unredacted," "Composite," or "Solution." The diminishing returns between agents for the top models suggest they are approaching a performance ceiling on this specific task, where further gains require either better base models or fundamentally different approaches.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Accuracy by Model and Agent

### Overview

The chart compares the accuracy (y-axis) of various AI models (x-axis) across different agent types (color-coded bars). Each model is evaluated using nine agent configurations, with accuracy scores ranging from 0.0 to 1.0. The data suggests a correlation between model capability and accuracy, with the models toward the right of the axis generally scoring higher.

### Components/Axes

- **X-axis (Models)**:

- Llama 2 7B

- Llama 2 70B

- GPT-3.5 Turbo

- Gemini Pro 1.0

- Command R+

- Mistral Large

- Gemini Pro 1.5

- GPT-4

- Claude 3 Opus

- **Y-axis (Accuracy)**: 0.0 to 1.0 in increments of 0.2

- **Legend (Agents)**:

- Blue: Baseline

- Orange: Retry

- Green: Keywords

- Red: Advice

- Purple: Instructions

- Brown: Explanation

- Pink: Solution

- Olive: Composite

- Gray: Unredacted

### Detailed Analysis

1. **Llama 2 7B**:

- Baseline: ~0.3

- Retry: ~0.4

- Keywords: ~0.4

- Advice: ~0.4

- Instructions: ~0.4

- Explanation: ~0.5

- Solution: ~0.5

- Composite: ~0.5

- Unredacted: ~0.5

2. **Llama 2 70B**:

- Baseline: ~0.4

- Retry: ~0.5

- Keywords: ~0.6

- Advice: ~0.6

- Instructions: ~0.6

- Explanation: ~0.7

- Solution: ~0.7

- Composite: ~0.7

- Unredacted: ~0.8

3. **GPT-3.5 Turbo**:

- Baseline: ~0.6

- Retry: ~0.7

- Keywords: ~0.7

- Advice: ~0.7

- Instructions: ~0.7

- Explanation: ~0.8

- Solution: ~0.8

- Composite: ~0.8

- Unredacted: ~0.9

4. **Gemini Pro 1.0**:

- Baseline: ~0.6

- Retry: ~0.7

- Keywords: ~0.7

- Advice: ~0.7

- Instructions: ~0.7

- Explanation: ~0.8

- Solution: ~0.8

- Composite: ~0.8

- Unredacted: ~0.9

5. **Command R+**:

- Baseline: ~0.6

- Retry: ~0.7

- Keywords: ~0.7

- Advice: ~0.7

- Instructions: ~0.7

- Explanation: ~0.8

- Solution: ~0.8

- Composite: ~0.8

- Unredacted: ~0.9

6. **Mistral Large**:

- Baseline: ~0.7

- Retry: ~0.8

- Keywords: ~0.8

- Advice: ~0.8

- Instructions: ~0.8

- Explanation: ~0.9

- Solution: ~0.9

- Composite: ~0.9

- Unredacted: ~0.9

7. **Gemini Pro 1.5**:

- Baseline: ~0.7

- Retry: ~0.8

- Keywords: ~0.8

- Advice: ~0.8

- Instructions: ~0.8

- Explanation: ~0.9

- Solution: ~0.9

- Composite: ~0.9

- Unredacted: ~0.9

8. **GPT-4**:

- Baseline: ~0.8

- Retry: ~0.8

- Keywords: ~0.8

- Advice: ~0.8

- Instructions: ~0.8

- Explanation: ~0.9

- Solution: ~0.9

- Composite: ~0.9

- Unredacted: ~0.9

9. **Claude 3 Opus**:

- Baseline: ~0.8

- Retry: ~0.8

- Keywords: ~0.8

- Advice: ~0.8

- Instructions: ~0.8

- Explanation: ~0.9

- Solution: ~0.9

- Composite: ~0.9

- Unredacted: ~0.9

### Key Observations

- **Model Size Correlation**: Larger models (e.g., GPT-4, Claude 3 Opus) consistently outperform smaller models (e.g., Llama 2 7B) across all agent types.

- **Agent Performance**: The Unredacted agent achieves the highest or tied-highest accuracy for every model; Composite ties it for five of the nine models and trails it by ~0.1 for the other four. Baseline agents perform the worst.

- **Trend**: Accuracy improves with model capability, and the variation between agents narrows for the strongest models (~0.1 for GPT-4 and Claude 3 Opus versus ~0.2 to ~0.4 for the Llama 2 models).

### Interpretation

The data shows that the choice of model strongly influences accuracy, with the strongest models reaching ~0.8 to ~0.9 across all agent configurations. The Unredacted and Composite agents sit at the top of each group, which suggests that fuller context and combined strategies are the most effective at leveraging model capabilities. The Baseline agent's poor performance highlights the importance of specialized agent configurations for task-specific accuracy. This analysis underscores the need for model selection based on task complexity and the value of agent optimization for maximizing performance.
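The relative standing of the Composite and Unredacted agents can be tallied from the estimates listed in this description. The sketch below is illustrative only: the values are coarse visual estimates rounded to 0.1, so ties are common.

```python
# (Composite, Unredacted) estimates per model, as listed in this description.
COMPOSITE_UNREDACTED = {
    "Llama 2 7B":     (0.5, 0.5),
    "Llama 2 70B":    (0.7, 0.8),
    "GPT-3.5 Turbo":  (0.8, 0.9),
    "Gemini Pro 1.0": (0.8, 0.9),
    "Command R+":     (0.8, 0.9),
    "Mistral Large":  (0.9, 0.9),
    "Gemini Pro 1.5": (0.9, 0.9),
    "GPT-4":          (0.9, 0.9),
    "Claude 3 Opus":  (0.9, 0.9),
}

# Count how often each agent leads and how often they tie.
tally = {"Composite higher": 0, "Unredacted higher": 0, "Tie": 0}
for composite, unredacted in COMPOSITE_UNREDACTED.values():
    if composite > unredacted:
        tally["Composite higher"] += 1
    elif unredacted > composite:
        tally["Unredacted higher"] += 1
    else:
        tally["Tie"] += 1

print(tally)
```

With estimates this coarse, a tie only means the two bars round to the same tenth; it does not establish that the underlying accuracies are equal.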
