
## Bar Chart: Attack Success Rate vs. Ablated Head Numbers for Llama-2-7b-chat-hf

### Overview

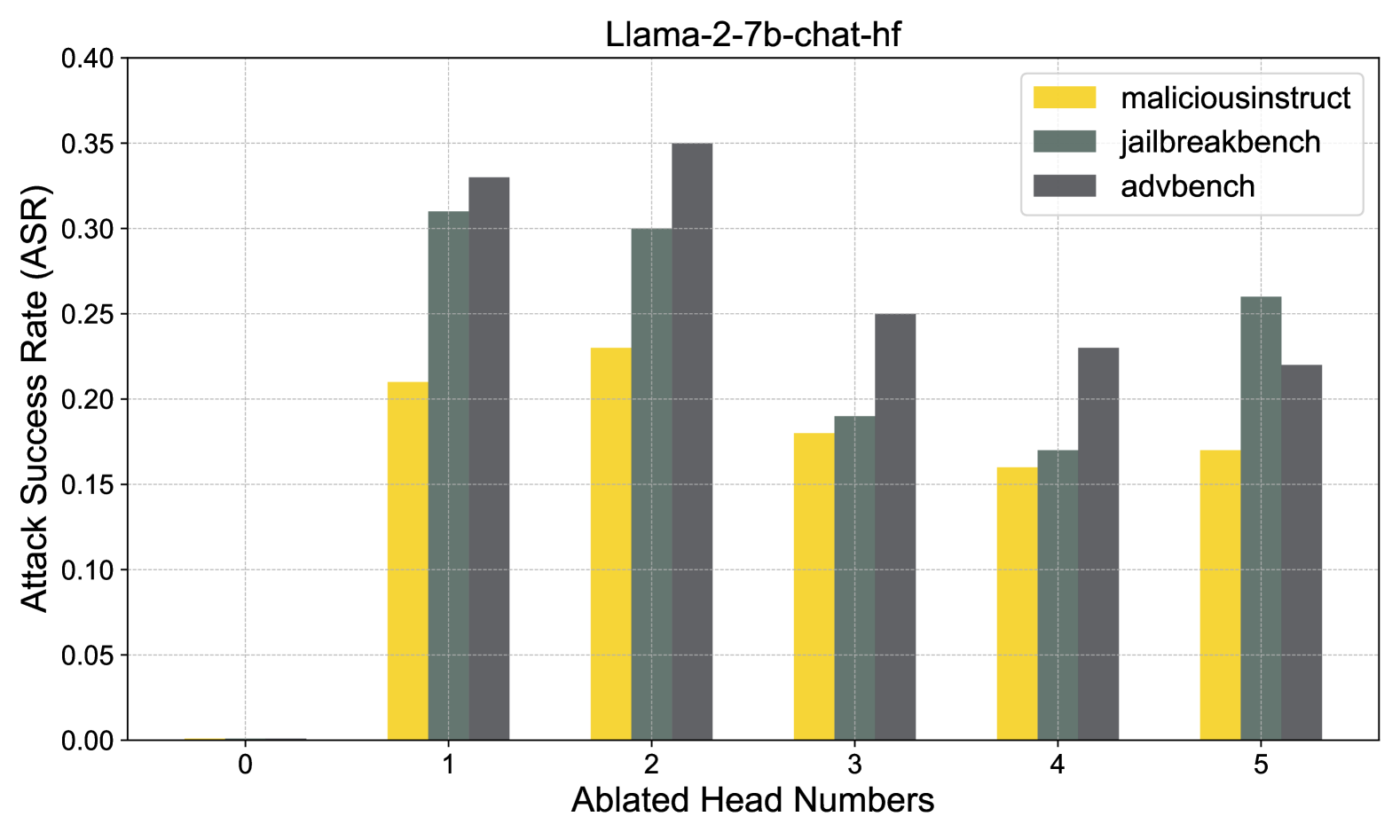

This bar chart shows the Attack Success Rate (ASR) on three attack benchmarks (maliciousinstruct, jailbreakbench, and advbench) for the Llama-2-7b-chat-hf model as the number of ablated attention heads increases from 0 to 5. Each benchmark has its own bar color at every ablated head number. The chart aims to show how ablating attention heads affects the model's vulnerability to adversarial attacks.
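The chart does not state how ASR is computed. As a minimal sketch, assuming the common definition (the fraction of benchmark prompts for which the attack is judged successful), it could be calculated as below. The function name and the boolean judgments are illustrative and not taken from the source.

```python
def attack_success_rate(judgments):
    """Fraction of prompts whose response was judged a successful attack.

    `judgments` holds one boolean per benchmark prompt. This is an assumed
    definition; the source does not say how success is judged.
    """
    if not judgments:
        raise ValueError("need at least one judged prompt")
    return sum(judgments) / len(judgments)


# Hypothetical example: 2 successful attacks out of 50 prompts.
example = [True] * 2 + [False] * 48
print(attack_success_rate(example))  # 0.04
```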

### Components/Axes

* **Title:** Llama-2-7b-chat-hf

* **X-axis:** Ablated Head Numbers (0, 1, 2, 3, 4, 5)

* **Y-axis:** Attack Success Rate (ASR) – Scale ranges from 0.00 to 0.40

* **Legend:**
  * maliciousinstruct (Yellow)
  * jailbreakbench (Light Grey)
  * advbench (Dark Grey)

### Detailed Analysis

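The chart shows three values for each Ablated Head Number, one per attack benchmark. They are described here as segments of a stacked bar, but the approximate values below sum to more than 0.40 for Ablated Head Numbers 1 through 5, which exceeds the stated y-axis maximum, so the bars may instead be grouped or overlaid. The values below are per-benchmark ASRs.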

* **Ablated Head Number 0:**
  * maliciousinstruct: Approximately 0.21
  * jailbreakbench: Approximately 0.06
  * advbench: Approximately 0.04

* **Ablated Head Number 1:**
  * maliciousinstruct: Approximately 0.22
  * jailbreakbench: Approximately 0.11
  * advbench: Approximately 0.32

* **Ablated Head Number 2:**
  * maliciousinstruct: Approximately 0.24
  * jailbreakbench: Approximately 0.08
  * advbench: Approximately 0.31

* **Ablated Head Number 3:**
  * maliciousinstruct: Approximately 0.18
  * jailbreakbench: Approximately 0.07
  * advbench: Approximately 0.26

* **Ablated Head Number 4:**
  * maliciousinstruct: Approximately 0.23
  * jailbreakbench: Approximately 0.08
  * advbench: Approximately 0.24

* **Ablated Head Number 5:**
  * maliciousinstruct: Approximately 0.25
  * jailbreakbench: Approximately 0.07
  * advbench: Approximately 0.26
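The per-benchmark readings above can be checked against the stated y-axis range. The sketch below stores the approximate values (chart readings, not exact data) and computes the height a stacked bar would have at each ablated head number. The variable names are illustrative.

```python
# Approximate ASR values read from the chart, indexed by number of ablated heads (0-5).
ASR = {
    "maliciousinstruct": [0.21, 0.22, 0.24, 0.18, 0.23, 0.25],
    "jailbreakbench":    [0.06, 0.11, 0.08, 0.07, 0.08, 0.07],
    "advbench":          [0.04, 0.32, 0.31, 0.26, 0.24, 0.26],
}
Y_AXIS_MAX = 0.40  # stated upper limit of the y-axis

# Height each bar would have if the three values were stacked.
stacked_totals = [round(sum(vals), 2) for vals in zip(*ASR.values())]

# Ablated head numbers where a stacked bar would not fit inside the axis range.
over_axis = [n for n, total in enumerate(stacked_totals) if total > Y_AXIS_MAX]

print(stacked_totals)  # [0.31, 0.65, 0.63, 0.51, 0.55, 0.58]
print(over_axis)       # [1, 2, 3, 4, 5]
```

Under these readings only the bar at Ablated Head Number 0 would fit below 0.40 if stacked, so the values are best treated as independent per-benchmark rates.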

**Trends:**

* **maliciousinstruct:** The ASR for maliciousinstruct rises slightly overall, from approximately 0.21 at Ablated Head Number 0 to approximately 0.25 at Ablated Head Number 5, with a dip to approximately 0.18 at Ablated Head Number 3.

* **jailbreakbench:** The ASR for jailbreakbench remains relatively stable, fluctuating between approximately 0.06 and 0.11 throughout the different ablated head numbers.

* **advbench:** The ASR for advbench jumps from approximately 0.04 at Ablated Head Number 0 to a peak of approximately 0.32 at Ablated Head Number 1, then declines to approximately 0.24 at Ablated Head Number 4 and ends at approximately 0.26 at Ablated Head Number 5.
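These trends can be summarized numerically. The following sketch, based on the approximate chart readings from the Detailed Analysis, reports the start, end, net change, and the ablated head numbers at which each benchmark reaches its maximum and minimum. The helper name `summarize` is illustrative.

```python
# Approximate ASR values read from the chart, indexed by number of ablated heads (0-5).
ASR = {
    "maliciousinstruct": [0.21, 0.22, 0.24, 0.18, 0.23, 0.25],
    "jailbreakbench":    [0.06, 0.11, 0.08, 0.07, 0.08, 0.07],
    "advbench":          [0.04, 0.32, 0.31, 0.26, 0.24, 0.26],
}


def summarize(values):
    """Return start, end, net change, and the head numbers of the peak and trough."""
    return {
        "start": values[0],
        "end": values[-1],
        "net_change": round(values[-1] - values[0], 2),
        "peak_heads": values.index(max(values)),     # first head number at the maximum
        "trough_heads": values.index(min(values)),   # first head number at the minimum
    }


summary = {name: summarize(vals) for name, vals in ASR.items()}
for name, stats in summary.items():
    print(name, stats)
```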

### Key Observations

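* The advbench benchmark shows the largest variation in ASR as heads are ablated: it rises sharply from approximately 0.04 to 0.32 after a single head is ablated, then falls back to between approximately 0.24 and 0.26 for Ablated Head Numbers 3 to 5.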

* The jailbreakbench benchmark has the lowest ASR at every ablated head number except 0, where advbench is lower (approximately 0.04 versus 0.06).

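* The maliciousinstruct benchmark shows only a small overall increase in ASR with more ablated heads (approximately 0.21 to 0.25), interrupted by a dip to approximately 0.18 at Ablated Head Number 3.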

* If the bars are stacked, their combined height is the sum of the three per-benchmark ASRs at each ablated head number. This sum is not itself an overall success rate.
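The ranking and variation observations can be checked directly against the approximate values from the Detailed Analysis. The sketch below finds the lowest-scoring benchmark at each ablated head number and the spread (maximum minus minimum) for each benchmark. The values are chart readings, so differences of 0.01 to 0.02 should not be over-interpreted.

```python
# Approximate ASR values read from the chart, indexed by number of ablated heads (0-5).
ASR = {
    "maliciousinstruct": [0.21, 0.22, 0.24, 0.18, 0.23, 0.25],
    "jailbreakbench":    [0.06, 0.11, 0.08, 0.07, 0.08, 0.07],
    "advbench":          [0.04, 0.32, 0.31, 0.26, 0.24, 0.26],
}

# Benchmark with the lowest ASR at each ablated head number.
lowest = []
for n in range(6):
    per_benchmark = {name: vals[n] for name, vals in ASR.items()}
    lowest.append(min(per_benchmark, key=per_benchmark.get))

# Spread of each benchmark across all head numbers.
spread = {name: round(max(vals) - min(vals), 2) for name, vals in ASR.items()}

print(lowest)
print(spread)
```

With these readings, advbench is lowest at Ablated Head Number 0 and jailbreakbench is lowest everywhere else, while advbench has by far the largest spread.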

### Interpretation

The data suggest that ablating attention heads affects the model's vulnerability differently depending on the benchmark. The sharp rise in advbench ASR at Ablated Head Number 1 (from approximately 0.04 to 0.32) could indicate that certain attention heads are crucial for defending against this specific attack. The relatively stable ASR for jailbreakbench (approximately 0.06 to 0.11) suggests that this benchmark is less sensitive to the removal of attention heads. The small, non-monotonic increase in ASR for maliciousinstruct, which already starts at approximately 0.21 with no heads ablated, suggests a more distributed vulnerability across the attention mechanism.

The relationship between the number of ablated heads and ASR is neither linear nor monotonic, indicating that the importance of individual attention heads is not uniform. Some heads may play a more critical role in mitigating specific attacks than others. The chart highlights the importance of considering the specific attack benchmark when evaluating the impact of ablating attention heads in large language models. The data could inform strategies for improving the model's robustness against adversarial attacks, for example by identifying the heads whose ablation raises ASR the most and protecting or reinforcing them.
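To make the non-uniform effect of each additional ablated head concrete, the sketch below computes the change in ASR from one ablated head number to the next, using the approximate chart readings from the Detailed Analysis. It is an illustration of the argument, not a reproduction of the original experiment.

```python
# Approximate ASR values read from the chart, indexed by number of ablated heads (0-5).
ASR = {
    "maliciousinstruct": [0.21, 0.22, 0.24, 0.18, 0.23, 0.25],
    "jailbreakbench":    [0.06, 0.11, 0.08, 0.07, 0.08, 0.07],
    "advbench":          [0.04, 0.32, 0.31, 0.26, 0.24, 0.26],
}

# Change in ASR contributed by each additional ablated head (1st through 5th).
deltas = {
    name: [round(b - a, 2) for a, b in zip(vals, vals[1:])]
    for name, vals in ASR.items()
}

# A benchmark is monotonic here if ASR never drops when another head is ablated.
monotonic = {name: all(d >= 0 for d in steps) for name, steps in deltas.items()}

for name in ASR:
    print(name, deltas[name], "monotonic:", monotonic[name])
```

A linear relationship would give roughly equal steps. Instead, the first ablated head accounts for a step of about +0.28 on advbench, and every benchmark has at least one negative step.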