## Scatter Plot: AI Model Accuracy Comparison

### Overview

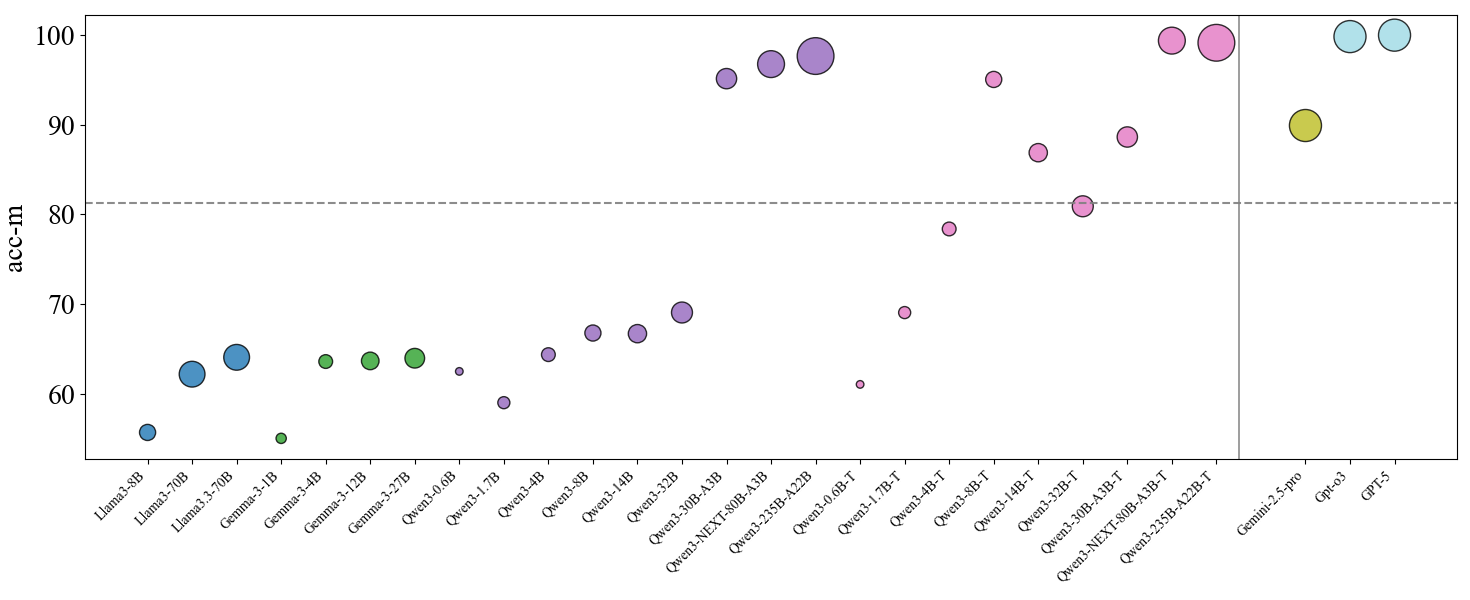

The image is a scatter plot comparing the accuracy metric ("acc-m") across a range of large language models (LLMs). Each model appears as a single point, with accuracy on the y-axis and model names along the x-axis. A horizontal dashed line at acc-m = 80 serves as a reference benchmark. Points are colored by model family and vary in size; the size may encode a further variable such as parameter count, but no legend inside the image frame confirms this.

### Components/Axes

* **Y-Axis:** Labeled "acc-m". Scale ranges from 50 to 100, with major tick marks at 50, 60, 70, 80, 90, and 100.

* **X-Axis:** Lists model names. The models are grouped into distinct families, separated by visual spacing and color.

* **Reference Line:** A horizontal dashed gray line at y = 80.

* **Data Points:** Circles of varying sizes and colors. Each circle represents a single model's performance.

* **Model Families (from left to right):**

    * **Llama (Blue):** Llama3-8B, Llama3-70B, Llama3.3-70B

    * **Gemma (Green):** Gemma-3-1B, Gemma-3-4B, Gemma-3-12B, Gemma-3-27B

    * **Qwen (Purple):** Qwen2.5-0.5B, Qwen2.5-1.5B, Qwen2.5-3B, Qwen2.5-7B, Qwen2.5-14B, Qwen2.5-32B, Qwen3-30B-A3B, Qwen3-NEXT-80B-A3B, Qwen3-235B-A22B

    * **Qwen-T (Pink):** Qwen3-0.6B-T, Qwen3-1.7B-T, Qwen3-4B-T, Qwen3-8B-T, Qwen3-14B-T, Qwen3-32B-T, Qwen3-30B-A3B-T, Qwen3-NEXT-80B-A3B-T, Qwen3-235B-A22B-T

    * **Other Models (Right of vertical divider):** Gemini-2.5-pro (Yellow), Gpt-4o (Light Blue), GPT-5 (Light Blue)
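
The size information that the later analysis leans on is encoded only in the model names. A minimal sketch, assuming the naming convention `<total>B`, optionally followed by `-A<active>B` (read here, as an assumption, as activated parameters in a mixture-of-experts model) and the `-T` suffix. The function `parse_name` is hypothetical, and names without a size (Gemini-2.5-pro, Gpt-4o, GPT-5) return `None`.

```python
import re
from typing import Optional, Tuple

# Hypothetical pattern for the chart's labels: "-<total>B", optional "-A<active>B",
# optional "-T", anchored at the end of the name.
_SIZE_RE = re.compile(r"-(\d+(?:\.\d+)?)B(?:-A(\d+(?:\.\d+)?)B)?(-T)?$")


def parse_name(name: str) -> Optional[Tuple[float, Optional[float], bool]]:
    """Return (total_billions, active_billions_or_None, is_t_variant), or None if no size is found."""
    match = _SIZE_RE.search(name)
    if match is None:
        return None
    total = float(match.group(1))
    active = float(match.group(2)) if match.group(2) else None
    is_t = match.group(3) is not None
    return total, active, is_t


for model in ["Llama3.3-70B", "Qwen2.5-0.5B", "Qwen3-30B-A3B-T", "GPT-5"]:
    print(model, parse_name(model))
```

Because the pattern requires the `B` unit directly after the number, version numbers such as the `3` in `Gemma-3-27B` are not mistaken for sizes.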

### Detailed Analysis

**Data Points (Approximate acc-m values, read from y-axis):**

* **Llama Family (Blue):**

    * Llama3-8B: ~55

    * Llama3-70B: ~62

    * Llama3.3-70B: ~64

* **Gemma Family (Green):**

    * Gemma-3-1B: ~55

    * Gemma-3-4B: ~63

    * Gemma-3-12B: ~63

    * Gemma-3-27B: ~64

* **Qwen Family (Purple):**

    * Qwen2.5-0.5B: ~62

    * Qwen2.5-1.5B: ~59

    * Qwen2.5-3B: ~64

    * Qwen2.5-7B: ~67

    * Qwen2.5-14B: ~67

    * Qwen2.5-32B: ~69

    * Qwen3-30B-A3B: ~95

    * Qwen3-NEXT-80B-A3B: ~97

    * Qwen3-235B-A22B: ~98

* **Qwen-T Family (Pink):**

    * Qwen3-0.6B-T: ~61

    * Qwen3-1.7B-T: ~69

    * Qwen3-4B-T: ~78

    * Qwen3-8B-T: ~95

    * Qwen3-14B-T: ~87

    * Qwen3-32B-T: ~81

    * Qwen3-30B-A3B-T: ~89

    * Qwen3-NEXT-80B-A3B-T: ~99

    * Qwen3-235B-A22B-T: ~99

* **Other Models:**

    * Gemini-2.5-pro: ~90

    * Gpt-4o: ~100

    * GPT-5: ~100
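
The readings above can be held in a simple nested mapping for further analysis. A sketch under the assumption that the eyeballed values are good to within a point or two; `READINGS` and `summarize` are hypothetical names, and the numbers are the approximate chart readings, not published scores.

```python
from statistics import mean

# Approximate acc-m readings from the list above (eyeballed from the chart, not published scores).
READINGS = {
    "Llama": {"Llama3-8B": 55, "Llama3-70B": 62, "Llama3.3-70B": 64},
    "Gemma": {"Gemma-3-1B": 55, "Gemma-3-4B": 63, "Gemma-3-12B": 63, "Gemma-3-27B": 64},
    "Qwen": {
        "Qwen2.5-0.5B": 62, "Qwen2.5-1.5B": 59, "Qwen2.5-3B": 64, "Qwen2.5-7B": 67,
        "Qwen2.5-14B": 67, "Qwen2.5-32B": 69, "Qwen3-30B-A3B": 95,
        "Qwen3-NEXT-80B-A3B": 97, "Qwen3-235B-A22B": 98,
    },
    "Qwen-T": {
        "Qwen3-0.6B-T": 61, "Qwen3-1.7B-T": 69, "Qwen3-4B-T": 78, "Qwen3-8B-T": 95,
        "Qwen3-14B-T": 87, "Qwen3-32B-T": 81, "Qwen3-30B-A3B-T": 89,
        "Qwen3-NEXT-80B-A3B-T": 99, "Qwen3-235B-A22B-T": 99,
    },
    "Other": {"Gemini-2.5-pro": 90, "Gpt-4o": 100, "GPT-5": 100},
}


def summarize(readings):
    """Return {family: (min, max, mean)} computed over each family's readings."""
    summary = {}
    for family, scores in readings.items():
        values = list(scores.values())
        summary[family] = (min(values), max(values), mean(values))
    return summary


for family, (low, high, avg) in summarize(READINGS).items():
    print(f"{family:<7} min={low} max={high} mean={avg:.1f}")
```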

**Trend Verification:**

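* **Within Qwen (Purple):** Accuracy rises broadly from left to right, with one small dip (Qwen2.5-1.5B at ~59 sits below Qwen2.5-0.5B at ~62). Gains across the Qwen2.5 models are modest (~62 to ~69); the largest single step is the jump from Qwen2.5-32B (~69) to Qwen3-30B-A3B (~95). This is consistent with larger or newer Qwen models scoring higher.

* **Within Qwen-T (Pink):** The trend is not monotonic. Accuracy climbs from Qwen3-0.6B-T (~61) to a peak at Qwen3-8B-T (~95), falls for Qwen3-14B-T (~87) and Qwen3-32B-T (~81), then rises again to ~99 for the two largest models.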

* **Across Families:** There is a general progression from lower accuracy on the left (Llama, Gemma, smaller Qwen) to higher accuracy on the right (larger Qwen, Qwen-T, and the final group of Gemini/GPT).
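
The within-family trend claims can be checked mechanically. A minimal sketch, assuming the left-to-right chart order and the approximate readings listed above; `find_dips` and `largest_step` are hypothetical helpers that report points lower than their left neighbor and the largest single increase.

```python
# Left-to-right chart order with approximate acc-m readings, copied from the list above.
QWEN = [
    ("Qwen2.5-0.5B", 62), ("Qwen2.5-1.5B", 59), ("Qwen2.5-3B", 64),
    ("Qwen2.5-7B", 67), ("Qwen2.5-14B", 67), ("Qwen2.5-32B", 69),
    ("Qwen3-30B-A3B", 95), ("Qwen3-NEXT-80B-A3B", 97), ("Qwen3-235B-A22B", 98),
]
QWEN_T = [
    ("Qwen3-0.6B-T", 61), ("Qwen3-1.7B-T", 69), ("Qwen3-4B-T", 78),
    ("Qwen3-8B-T", 95), ("Qwen3-14B-T", 87), ("Qwen3-32B-T", 81),
    ("Qwen3-30B-A3B-T", 89), ("Qwen3-NEXT-80B-A3B-T", 99), ("Qwen3-235B-A22B-T", 99),
]


def find_dips(points):
    """Return the models whose reading is strictly lower than the point to their left."""
    return [name for (_, prev), (name, acc) in zip(points, points[1:]) if acc < prev]


def largest_step(points):
    """Return (from_model, to_model, change) for the largest left-to-right increase."""
    steps = [(a, b, acc_b - acc_a) for (a, acc_a), (b, acc_b) in zip(points, points[1:])]
    return max(steps, key=lambda step: step[2])


for label, points in [("Qwen", QWEN), ("Qwen-T", QWEN_T)]:
    print(label, "dips:", find_dips(points), "largest step:", largest_step(points))
```

Ties (such as the two ~67 readings) are not counted as dips, since the comparison is strict.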

### Key Observations

1. **Performance Threshold:** The dashed line at acc-m = 80 separates two tiers. All Llama, Gemma, and Qwen2.5 models fall below it, as do the three smallest Qwen-T models (Qwen3-0.6B-T, Qwen3-1.7B-T, Qwen3-4B-T). The Qwen3 non-T models, the Qwen-T models from Qwen3-8B-T upward, and the final group (Gemini, GPT) are above it.

2. **Model Size Correlation:** Within the Qwen2.5 models, accuracy rises fairly consistently with the parameter count in the model name (apart from the dip at 1.5B), but only by about 7 points from 0.5B to 32B. The much larger gain comes with the move to the Qwen3 models.

3. **Top Performers:** The highest accuracy values (~99-100) are achieved by Qwen3-NEXT-80B-A3B-T, Qwen3-235B-A22B-T, Gpt-4o, and GPT-5.

4. **Outliers/Notable Points:**

    * Qwen3-30B-A3B (purple) reaches ~95, about 26 points above the neighboring Qwen2.5-32B (~69).

    * Qwen3-8B-T (pink) scores very high (~95) for its apparent size. It outperforms every Qwen2.5 model as well as the larger Qwen3-14B-T, Qwen3-32B-T, and Qwen3-30B-A3B-T.

    * The two GPT models (Gpt-4o, GPT-5) and the top Qwen-T models are clustered at the very top of the accuracy scale.
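
Observations 1 to 3 can be reproduced from the approximate readings. A sketch under that assumption: `split_at` partitions models at the dashed line (a reading equal to 80 would count as below), and a hand-rolled Spearman rank correlation (average ranks for ties) measures how consistently Qwen2.5 accuracy rises with the parameter count taken from the names. All function and variable names are hypothetical.

```python
from math import sqrt

# Approximate acc-m readings from the Detailed Analysis list (eyeballed, not published scores).
ACC = {
    "Llama3-8B": 55, "Llama3-70B": 62, "Llama3.3-70B": 64,
    "Gemma-3-1B": 55, "Gemma-3-4B": 63, "Gemma-3-12B": 63, "Gemma-3-27B": 64,
    "Qwen2.5-0.5B": 62, "Qwen2.5-1.5B": 59, "Qwen2.5-3B": 64, "Qwen2.5-7B": 67,
    "Qwen2.5-14B": 67, "Qwen2.5-32B": 69,
    "Qwen3-30B-A3B": 95, "Qwen3-NEXT-80B-A3B": 97, "Qwen3-235B-A22B": 98,
    "Qwen3-0.6B-T": 61, "Qwen3-1.7B-T": 69, "Qwen3-4B-T": 78, "Qwen3-8B-T": 95,
    "Qwen3-14B-T": 87, "Qwen3-32B-T": 81, "Qwen3-30B-A3B-T": 89,
    "Qwen3-NEXT-80B-A3B-T": 99, "Qwen3-235B-A22B-T": 99,
    "Gemini-2.5-pro": 90, "Gpt-4o": 100, "GPT-5": 100,
}
THRESHOLD = 80  # y-value of the dashed reference line


def split_at(acc, threshold):
    """Partition models into (above, below); a reading equal to the threshold counts as below."""
    above = sorted(m for m, a in acc.items() if a > threshold)
    below = sorted(m for m, a in acc.items() if a <= threshold)
    return above, below


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cross = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cross / sqrt(var_x * var_y)


above, below = split_at(ACC, THRESHOLD)
print(f"{len(above)} models above {THRESHOLD}, {len(below)} below")

top = sorted(m for m, a in ACC.items() if a >= 99)
print("~99-100:", top)

qwen25 = ["Qwen2.5-0.5B", "Qwen2.5-1.5B", "Qwen2.5-3B", "Qwen2.5-7B", "Qwen2.5-14B", "Qwen2.5-32B"]
sizes_b = [0.5, 1.5, 3, 7, 14, 32]  # parameter counts (billions) taken from the names
rho = spearman(sizes_b, [ACC[m] for m in qwen25])
print(f"Qwen2.5 size vs. acc-m, Spearman rho = {rho:.2f}")
```

On these readings the Qwen2.5 rank correlation comes out at about 0.93 even though the scores span only about 7 points: rank correlation measures how consistent the ordering is, not how large the gains are.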

### Interpretation

This chart visualizes a benchmark comparison of LLM performance on a specific task measured by "acc-m". The data suggests several key insights:

1. **Architectural/Training Advances:** At comparable or larger scales, the Qwen3 models (purple and pink) score far above the Qwen2.5 series (e.g., Qwen2.5-32B at ~69 versus Qwen3-30B-A3B at ~95). This suggests substantial improvements in the Qwen3 generation, plausibly from architectural changes, better training data, or more compute; the chart itself does not show which.

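2. **The "T" Variant:** The chart does not establish a general advantage for the "-T" models. Only three Qwen3 models appear in both forms, and the results are mixed: the T variant scores slightly higher for Qwen3-NEXT-80B-A3B (~99 vs. ~97) and Qwen3-235B-A22B (~99 vs. ~98) but lower for Qwen3-30B-A3B (~89 vs. ~95). The smaller Qwen3 models (0.6B to 32B) appear only in T form, so no direct comparison is possible for them, although Qwen3-8B-T (~95) stands out. The image does not define the suffix; it may denote a thinking (reasoning) mode or another specialized variant.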

3. **State-of-the-Art Frontier:** The points at the top of the chart (Qwen3-NEXT-80B-A3B-T, Qwen3-235B-A22B-T, Gpt-4o, GPT-5, all at ~99-100) define the frontier for this task. That open-weight Qwen models score in the same range as the proprietary GPT models, and above Gemini-2.5-pro (~90), is a notable finding.

4. **Benchmark Context:** The chart does not state what the dashed line at 80 represents. It may mark a human-performance baseline, a previous state-of-the-art score, or a pass threshold; either way, models above it form the higher-performing tier.
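
The paired comparison in point 2 can be made explicit. A small sketch using the approximate readings for the three Qwen3 models shown in both forms; `t_minus_base` is a hypothetical helper that assumes each T model is named by appending `-T` to its counterpart.

```python
# Approximate readings for the three Qwen3 models that appear both with and without "-T".
BASE = {"Qwen3-30B-A3B": 95, "Qwen3-NEXT-80B-A3B": 97, "Qwen3-235B-A22B": 98}
T_VARIANT = {"Qwen3-30B-A3B-T": 89, "Qwen3-NEXT-80B-A3B-T": 99, "Qwen3-235B-A22B-T": 99}


def t_minus_base(base, t_variant):
    """Return {base_model: acc(T) - acc(base)} for every model present in both forms."""
    return {
        name: t_variant[name + "-T"] - acc
        for name, acc in base.items()
        if name + "-T" in t_variant
    }


for name, delta in t_minus_base(BASE, T_VARIANT).items():
    print(f"{name}: {delta:+d}")
```

With one negative and two small positive differences, three pairs are too few, and the readings too approximate, to support a general claim either way.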

**In summary, the chart shows a large generational gain from Qwen2.5 to Qwen3, mixed evidence on the "-T" variants, and the leading open-weight models scoring in the same range as the leading proprietary models on this particular benchmark.**