## Confusion Matrices and Bar Chart: Model Performance Analysis

### Overview

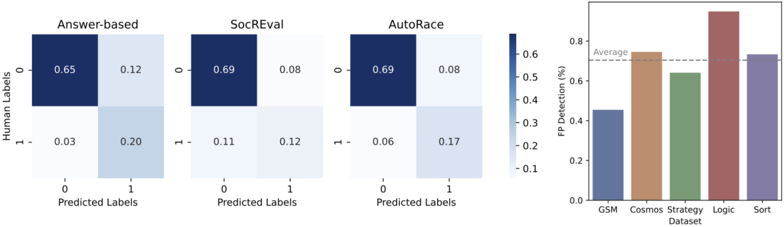

The image is a composite visualization. On the left, three confusion matrices compare the labels predicted by three methods ("Answer-based", "SocREval", "AutoRace") against human labels. On the right, a bar chart shows FP (False Positive) Detection values across five datasets. Together they describe how well each method agrees with human judgment and how one error-related metric varies by dataset.

### Components/Axes

**Left Section: Three Confusion Matrices (Heatmaps)**

* **Layout:** Three square heatmaps arranged horizontally.

* **Common Axes:**

    * **Y-axis (Vertical):** Labeled "Human Labels". Ticks at positions 0 (top) and 1 (bottom).

    * **X-axis (Horizontal):** Labeled "Predicted Labels". Ticks at positions 0 (left) and 1 (right).

* **Titles (Top of each matrix):** "Answer-based", "SocREval", "AutoRace".

* **Color Scale:** A vertical color bar is positioned to the right of the third matrix. It ranges from light blue (low values, ~0.0) to dark blue (high values, ~0.7). The scale is labeled with values 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7.

**Right Section: Bar Chart**

* **Title:** None visible.

* **Y-axis (Vertical):** Labeled "FP Detection (%)". Scale ranges from 0.0 to 0.8, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8. Despite the "%" in the label, the tick values are fractions (0.8 corresponds to 80%).

* **X-axis (Horizontal):** Labeled "Dataset". Categories from left to right: "GSM", "Cosmos", "Strategy", "Logic", "Sort".

* **Legend/Reference Line:** A horizontal dashed gray line labeled "Average" is drawn across the chart at approximately the 0.6 (60%) mark on the y-axis.

* **Bar Colors:** Each bar has a distinct color: GSM (blue-gray), Cosmos (tan/brown), Strategy (green), Logic (red-brown), Sort (purple).

### Detailed Analysis

**1. Confusion Matrices (Left Section)**

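Each matrix is a 2x2 grid showing the relationship between human-assigned labels (ground truth) and predicted labels. Values are proportions of all samples: the four cells of each matrix sum to 1.0, not each row. In all three matrices the class 0 row sums to 0.77 and the class 1 row to 0.23, so the human labels are imbalanced toward class 0.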

* **Answer-based Matrix:**

    * **Top-Left Cell (Human 0, Predicted 0):** 0.65 (True Negative)

    * **Top-Right Cell (Human 0, Predicted 1):** 0.12 (False Positive)

    * **Bottom-Left Cell (Human 1, Predicted 0):** 0.03 (False Negative)

    * **Bottom-Right Cell (Human 1, Predicted 1):** 0.20 (True Positive)

    * *Trend:* Most samples fall in the true negative cell (65% of all samples), with 20% in the true positive cell. The false negative share is very low (3%), but the false positive share (12%) is the highest of the three matrices.

* **SocREval Matrix:**

    * **Top-Left Cell (Human 0, Predicted 0):** 0.69 (True Negative)

    * **Top-Right Cell (Human 0, Predicted 1):** 0.08 (False Positive)

    * **Bottom-Left Cell (Human 1, Predicted 0):** 0.11 (False Negative)

    * **Bottom-Right Cell (Human 1, Predicted 1):** 0.12 (True Positive)

    * *Trend:* Joint-highest true negative share (69% of all samples). Lower true positive share (12%) and higher false negative share (11%) than Answer-based.

* **AutoRace Matrix:**

    * **Top-Left Cell (Human 0, Predicted 0):** 0.69 (True Negative)

    * **Top-Right Cell (Human 0, Predicted 1):** 0.08 (False Positive)

    * **Bottom-Left Cell (Human 1, Predicted 0):** 0.06 (False Negative)

    * **Bottom-Right Cell (Human 1, Predicted 1):** 0.17 (True Positive)

    * *Trend:* Same true negative share (69%) and false positive share (8%) as SocREval. Higher true positive share (17%) and lower false negative share (6%) than SocREval, though Answer-based does better on both (20% and 3%).
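Because the cell values are shares of all samples, per-class rates have to be derived by dividing each cell by its row total. The following is a minimal sketch of that step, using the cell values read from the figure; the names `MATRICES` and `row_normalize` are illustrative and do not come from the source.

```python
# Cell values as read from the figure: rows are human labels (0, 1),
# columns are predicted labels (0, 1). Names here are illustrative.
MATRICES = {
    "Answer-based": [[0.65, 0.12], [0.03, 0.20]],
    "SocREval": [[0.69, 0.08], [0.11, 0.12]],
    "AutoRace": [[0.69, 0.08], [0.06, 0.17]],
}


def row_normalize(matrix):
    """Divide each cell by its row total, giving per-class rates."""
    return [[cell / sum(row) for cell in row] for row in matrix]


for name, matrix in MATRICES.items():
    total = sum(sum(row) for row in matrix)
    rates = row_normalize(matrix)
    # rates[0][0] is the class 0 recall, rates[1][1] the class 1 recall.
    print(f"{name}: total={total:.2f}, "
          f"class0_recall={rates[0][0]:.3f}, class1_recall={rates[1][1]:.3f}")
```

With these values, class 1 recall comes out at roughly 0.87 for Answer-based, 0.74 for AutoRace, and 0.52 for SocREval, while class 0 recall is roughly 0.84, 0.90, and 0.90 respectively.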

**2. Bar Chart (Right Section)**

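The chart shows the FP Detection value for each of five datasets. The image does not define the metric; the axis label most naturally reads as the share of false positives that are detected, rather than the rate at which false positives occur. The dashed "Average" line serves as a reference.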

* **GSM:** Bar height is approximately **0.45 (45%)**. This is the lowest value, significantly below the average.

* **Cosmos:** Bar height is approximately **0.65 (65%)**. Slightly above the average line.

* **Strategy:** Bar height is approximately **0.55 (55%)**. Below the average.

* **Logic:** Bar height is approximately **0.75 (75%)**. This is the highest value, well above the average.

* **Sort:** Bar height is approximately **0.65 (65%)**. Similar to Cosmos, slightly above average.
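The position of the "Average" line can be sanity-checked against the bar heights. The sketch below assumes the line is the unweighted mean of the five bars, which the figure does not state, and uses the approximate heights read from the chart; the variable names are illustrative.

```python
# Approximate bar heights as read from the chart (fractions, not percent).
fp_detection = {
    "GSM": 0.45,
    "Cosmos": 0.65,
    "Strategy": 0.55,
    "Logic": 0.75,
    "Sort": 0.65,
}

# Assumption: the dashed "Average" line is the unweighted mean of the bars.
mean_rate = sum(fp_detection.values()) / len(fp_detection)
at_or_above = [name for name, rate in fp_detection.items() if rate >= mean_rate]
below = [name for name, rate in fp_detection.items() if rate < mean_rate]

print(f"mean = {mean_rate:.2f}")
print("at or above mean:", at_or_above)
print("below mean:", below)
```

Under that assumption the mean is about 0.61, consistent with a line drawn near the 0.6 mark, with Cosmos, Logic, and Sort above it and GSM and Strategy below.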

### Key Observations

1. **Consistent Class 0 Performance:** All three methods (Answer-based, SocREval, AutoRace) place a similar share of all samples in the true negative cell (0.65 to 0.69). Since class 0 makes up 0.77 of the samples in each matrix, this corresponds to a class 0 recall of roughly 0.84 to 0.90.

2. **Variable Class 1 Performance:** Performance on class 1 varies considerably. "Answer-based" has the highest true positive share (0.20) and, correspondingly, the lowest false negative share (0.03), but also the highest false positive share (0.12). "SocREval" has the lowest true positive share (0.12) and the highest false negative share (0.11).

3. **FP Detection Disparity:** The bar chart reveals a wide range in FP Detection rates across datasets. The "Logic" dataset has the highest rate (~75%), while "GSM" has the lowest (~45%).

4. **Average Benchmark:** Three out of five datasets (Cosmos, Logic, Sort) have FP Detection rates at or above the indicated "Average" line (~60%).

### Interpretation

This visualization provides a multi-faceted view of model evaluation. The confusion matrices focus on **classification accuracy** for a binary task: all three methods agree with the human label on most class 0 cases, so their ability to correctly identify class 1 cases is the key differentiator. "Answer-based" recovers the most class 1 cases (0.20 of the 0.23 labeled 1), but it also produces the most false positives (0.12), so it is not clearly the most reliable method overall.
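One way to weigh the class 1 comparison is to compute accuracy together with class 1 precision, recall, and F1 from the four cells of each matrix. This is a sketch using the values read from the figure, treating class 1 as the positive class; `CELLS` and `summarize` are illustrative names, and none of these derived metrics appear in the image itself.

```python
# (TN, FP, FN, TP) shares as read from the figure; names are illustrative.
CELLS = {
    "Answer-based": (0.65, 0.12, 0.03, 0.20),
    "SocREval": (0.69, 0.08, 0.11, 0.12),
    "AutoRace": (0.69, 0.08, 0.06, 0.17),
}


def summarize(tn, fp, fn, tp):
    """Return accuracy plus class 1 precision, recall, and F1."""
    accuracy = tn + tp  # valid because the four shares sum to 1.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return accuracy, precision, recall, f1


for name, cells in CELLS.items():
    acc, prec, rec, f1 = summarize(*cells)
    print(f"{name}: accuracy={acc:.2f}, precision={prec:.3f}, "
          f"recall={rec:.3f}, F1={f1:.3f}")
```

On these numbers, AutoRace has the highest accuracy (0.86) and class 1 precision (0.68), while Answer-based has the highest class 1 recall (about 0.87) and F1 (about 0.73), so which method looks best depends on the metric chosen.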

The bar chart shifts focus to **error analysis**, specifically how FP Detection varies across data domains. The wide variation (from ~45% to ~75%) suggests that the result is highly sensitive to the dataset or task type. How to read the extremes depends on what the metric measures, which the image does not state. If it is the share of false positives that are detected, as the axis label suggests, detection works best on "Logic" and worst on "GSM". If it is instead a false positive rate, "Logic" would be the most problematic dataset and "GSM" the least.

**Synthesis:** A model might have good overall accuracy (as seen in the matrices) but still exhibit problematic error rates on specific data types (as shown in the bar chart). The "Average" line in the bar chart is a useful but simplistic benchmark; the high variance indicates that a single average metric would mask important performance disparities across domains. This underscores the need for dataset-specific evaluation and error analysis.
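The spread that a single average hides can be quantified directly. The sketch below uses Python's standard `statistics` module on the approximate bar heights read from the chart; the choice of range and population standard deviation as spread measures is an illustration, not something the figure reports.

```python
import statistics

# Approximate bar heights as read from the chart (fractions, not percent).
rates = [0.45, 0.65, 0.55, 0.75, 0.65]  # GSM, Cosmos, Strategy, Logic, Sort

mean_rate = statistics.mean(rates)
spread = max(rates) - min(rates)
std_dev = statistics.pstdev(rates)  # population standard deviation

print(f"mean={mean_rate:.2f}, range={spread:.2f}, std_dev={std_dev:.3f}")
```

For these values the range is about 0.30 and the standard deviation about 0.10 around a mean of about 0.61, so individual datasets sit up to roughly 15 percentage points away from the average.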