## Screenshot: Language Model Failure Example

### Overview

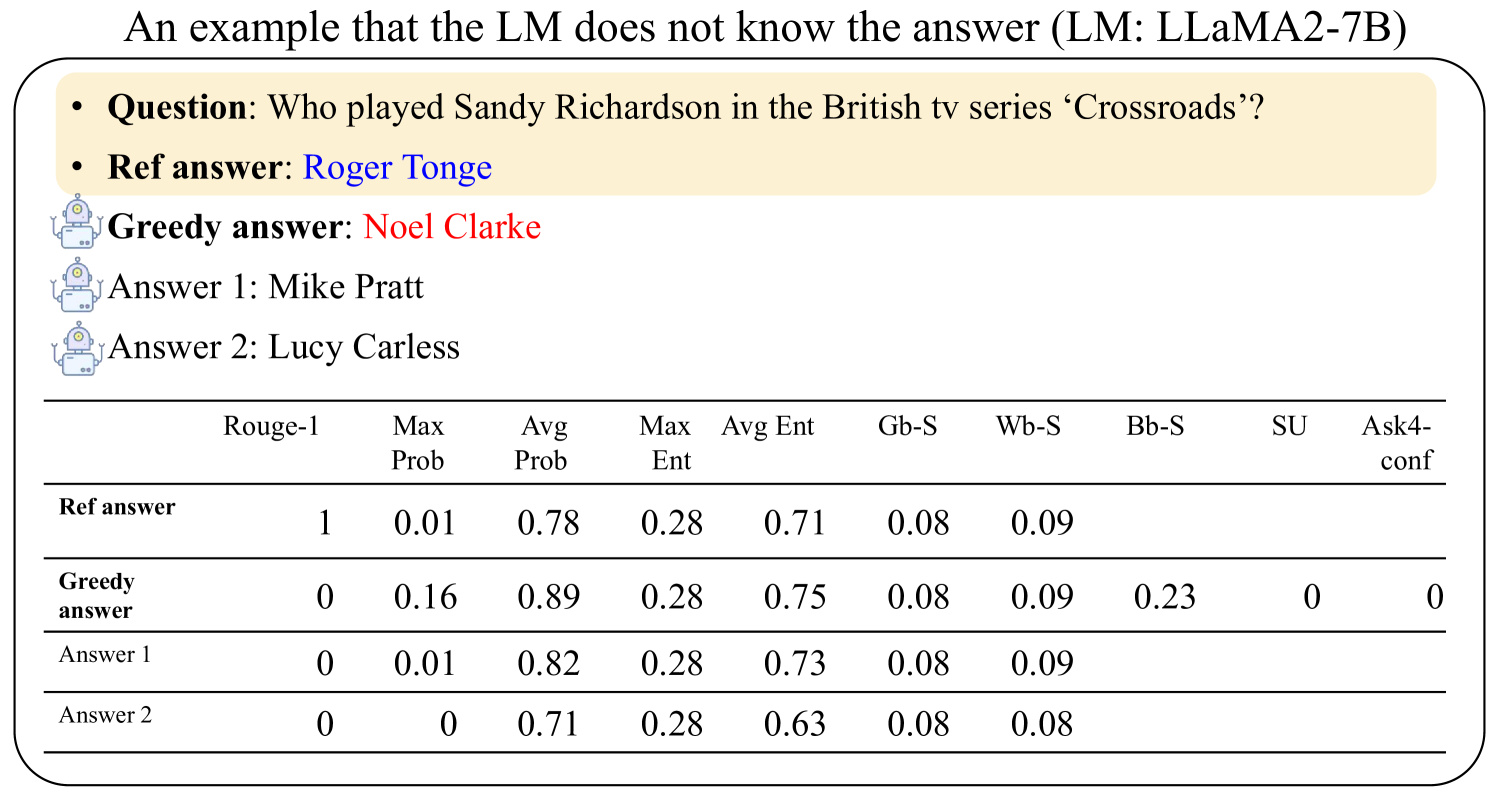

The image is a figure, likely from a research paper or technical report, illustrating an instance where a Large Language Model (LLM) fails to answer a factual question correctly. It presents a specific question, the correct reference answer, the model's incorrect "greedy" answer, two alternative incorrect answers, and a table of associated confidence and evaluation metrics.

### Components/Axes

The image is structured in two main sections within a rounded-corner frame:

1. **Top Section (Question & Answers):** Contains the question, reference answer, and three model-generated answers.

2. **Bottom Section (Metrics Table):** A data table with 10 metric columns (plus a row-label column) and 4 rows of data.

**Textual Content (Top Section):**

* **Title:** "An example that the LM does not know the answer (LM: LLaMA2-7B)"

* **Question:** "Who played Sandy Richardson in the British tv series ‘Crossroads’?"

* **Ref answer:** "Roger Tonge" (displayed in blue text)

* **Greedy answer:** "Noel Clarke" (displayed in red text)

* **Answer 1:** "Mike Pratt"

* **Answer 2:** "Lucy Carless"

**Metrics Table Structure:**

* **Columns (Headers):** Rouge-1, Max Prob, Avg Prob, Max Ent, Avg Ent, Gb-S, Wb-S, Bb-S, SU, Ask4-conf

* **Rows (Labels):** Ref answer, Greedy answer, Answer 1, Answer 2

### Detailed Analysis

**Table Data Transcription:**

The table contains numerical values for various metrics associated with each answer. Empty cells are denoted by a blank space.

| Row Label | Rouge-1 | Max Prob | Avg Prob | Max Ent | Avg Ent | Gb-S | Wb-S | Bb-S | SU | Ask4-conf |
|-------------------|---------|----------|----------|---------|---------|------|------|------|----|-----------|
| **Ref answer** | 1 | 0.01 | 0.78 | 0.28 | 0.71 | 0.08 | 0.09 | | | |
| **Greedy answer** | 0 | 0.16 | 0.89 | 0.28 | 0.75 | 0.08 | 0.09 | 0.23 | 0 | 0 |
| **Answer 1** | 0 | 0.01 | 0.82 | 0.28 | 0.73 | 0.08 | 0.09 | | | |
| **Answer 2** | 0 | 0 | 0.71 | 0.28 | 0.63 | 0.08 | 0.08 | | | |

**Key Metric Observations:**

* **Rouge-1:** Only the reference answer has a score of 1, indicating a perfect match with the ground truth. All model answers score 0.

* **Max Prob (Maximum Probability):** The "Greedy answer" has the highest value (0.16). The reference answer and "Answer 1" both score 0.01, and "Answer 2" scores 0, so the model ranks its incorrect greedy output well above the correct answer on this metric.

* **Avg Prob (Average Probability):** The "Greedy answer" also has the highest average probability (0.89), indicating the model was generally confident in its tokens for this incorrect answer.

* **Entropy (Max Ent, Avg Ent):** Max Ent is identical (0.28) for all four answers. Avg Ent varies only slightly: "Answer 2" has the lowest value (0.63), followed by the reference answer (0.71), "Answer 1" (0.73), and the "Greedy answer" (0.75). Entropy therefore does not single out the correct answer.

* **Specialized Metrics (Gb-S, Wb-S, Bb-S, SU, Ask4-conf):** These appear to be domain-specific confidence or similarity scores. Gb-S (0.08 in every row) and Wb-S (0.08 to 0.09) barely change across answers. Only the "Greedy answer" has values for Bb-S (0.23), SU (0), and Ask4-conf (0).
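The comparisons in the list above can be checked mechanically. The following is a minimal sketch that stores the transcribed table as a plain dictionary; the helper names (`column`, `argmax`, `argmin`, `fully_populated`) are hypothetical and exist only for this illustration, and `None` stands in for an empty cell.

```python
# Values transcribed from the figure's table; None marks an empty cell.
COLUMNS = ["Rouge-1", "Max Prob", "Avg Prob", "Max Ent", "Avg Ent",
           "Gb-S", "Wb-S", "Bb-S", "SU", "Ask4-conf"]

ROWS = {
    "Ref answer":    [1, 0.01, 0.78, 0.28, 0.71, 0.08, 0.09, None, None, None],
    "Greedy answer": [0, 0.16, 0.89, 0.28, 0.75, 0.08, 0.09, 0.23, 0, 0],
    "Answer 1":      [0, 0.01, 0.82, 0.28, 0.73, 0.08, 0.09, None, None, None],
    "Answer 2":      [0, 0, 0.71, 0.28, 0.63, 0.08, 0.08, None, None, None],
}


def column(name):
    """Return {row label: value} for one metric, skipping empty cells."""
    idx = COLUMNS.index(name)
    return {label: vals[idx] for label, vals in ROWS.items()
            if vals[idx] is not None}


def argmax(name):
    """Row label with the largest value in the given metric column."""
    col = column(name)
    return max(col, key=col.get)


def argmin(name):
    """Row label with the smallest value in the given metric column."""
    col = column(name)
    return min(col, key=col.get)


def fully_populated():
    """Row labels that have a value in every metric column."""
    return [label for label, vals in ROWS.items() if None not in vals]
```

For example, `argmax("Avg Prob")` returns `"Greedy answer"`, and `fully_populated()` returns `["Greedy answer"]`; `argmin("Avg Ent")` can be used the same way to check the entropy ordering.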

### Key Observations

1. **Model Confidence vs. Correctness:** The model's "Greedy answer" (its most likely output) is incorrect. Crucially, this incorrect answer is generated with higher internal probability metrics (Max Prob, Avg Prob) than the correct reference answer.

2. **Complete Failure on Factual Recall:** All three model-generated answers are factually incorrect, as shown by the Rouge-1 score of 0.

3. **Metric Discrepancy:** The table highlights a disconnect between the model's internal confidence signals (high probabilities) and factual accuracy. The model is confidently wrong.

4. **Data Completeness:** The "Greedy answer" row is the only one populated with values for all 10 metrics, suggesting it is the primary focus of the analysis.
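To make the Rouge-1 column concrete, here is a minimal sketch of a unigram-overlap score. The figure does not say which ROUGE-1 variant (precision, recall, or F1) or which tokenizer was used, so the F1 form and the lowercase whitespace tokenization below are assumptions; `rouge1_f1` is a hypothetical helper, not the evaluation code behind the figure.

```python
from collections import Counter


def rouge1_f1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 between two strings (lowercased, whitespace-split)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Multiset intersection counts each shared unigram at most min(count) times.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "Roger Tonge"
for answer in ["Roger Tonge", "Noel Clarke", "Mike Pratt", "Lucy Carless"]:
    print(answer, rouge1_f1(answer, reference))
```

Under these assumptions the reference scores 1.0 against itself and the three generated names score 0.0, because none of them shares a single word with "Roger Tonge". This matches the 1/0 pattern in the table.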

### Interpretation

This figure serves as a diagnostic case study in LLM failure modes, specifically for factual recall. It shows that a model (LLaMA2-7B in this instance) can assign more probability to an incorrect answer than to the correct one: the wrong greedy answer has an `Avg Prob` of 0.89, while the correct reference answer has 0.78. For this question, which the figure's title says the model does not know, the model's token probabilities are therefore not a reliable indicator of factual correctness. As a single example, it illustrates the failure mode rather than measuring how often it occurs.
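As a rough illustration of how token-level signals can be rolled up into sequence-level features, the sketch below averages per-token probabilities and entropies. This is one common convention and not necessarily how the figure's columns are defined: in the table, Max Prob (0.16) is smaller than Avg Prob (0.89) for the greedy answer, which a plain maximum and mean over the same token probabilities could never produce. The function names and all numbers in the example are hypothetical.

```python
import math


def token_entropy(dist):
    """Shannon entropy in nats of one next-token distribution."""
    return -sum(p * math.log(p) for p in dist if p > 0)


def summarize(chosen_probs, distributions):
    """Aggregate per-token signals into sequence-level features."""
    entropies = [token_entropy(d) for d in distributions]
    return {
        "avg_prob": sum(chosen_probs) / len(chosen_probs),
        "min_prob": min(chosen_probs),
        "avg_entropy": sum(entropies) / len(entropies),
        "max_entropy": max(entropies),
    }


# Hypothetical two-token answer: the probability of each emitted token and the
# (truncated, made-up) distribution each token was drawn from.
chosen = [0.9, 0.6]
dists = [[0.9, 0.05, 0.05], [0.6, 0.3, 0.1]]
print(summarize(chosen, dists))
```

Features like these describe how peaked the model's distribution was while generating, not whether the generated text is true, which is the gap the figure illustrates.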

The specialized metrics `Bb-S`, `SU`, and `Ask4-conf` (the last likely standing for something like "Ask for confidence") are reported only for the greedy answer, which implies they are being evaluated as potential signals for detecting such failures. The figure does not state their scale or direction. If they are confidence scores, their low or zero values (0.23, 0, and 0) would correctly mark the greedy answer as unreliable; if they instead measure uncertainty, the same values would fail to flag the error.

In essence, the image argues that relying solely on a model's greedy decoding output or its raw probability scores is insufficient for guaranteeing factual accuracy, especially when the model lacks the knowledge. It underscores the need for external verification, retrieval-augmented generation, or more sophisticated uncertainty quantification methods.