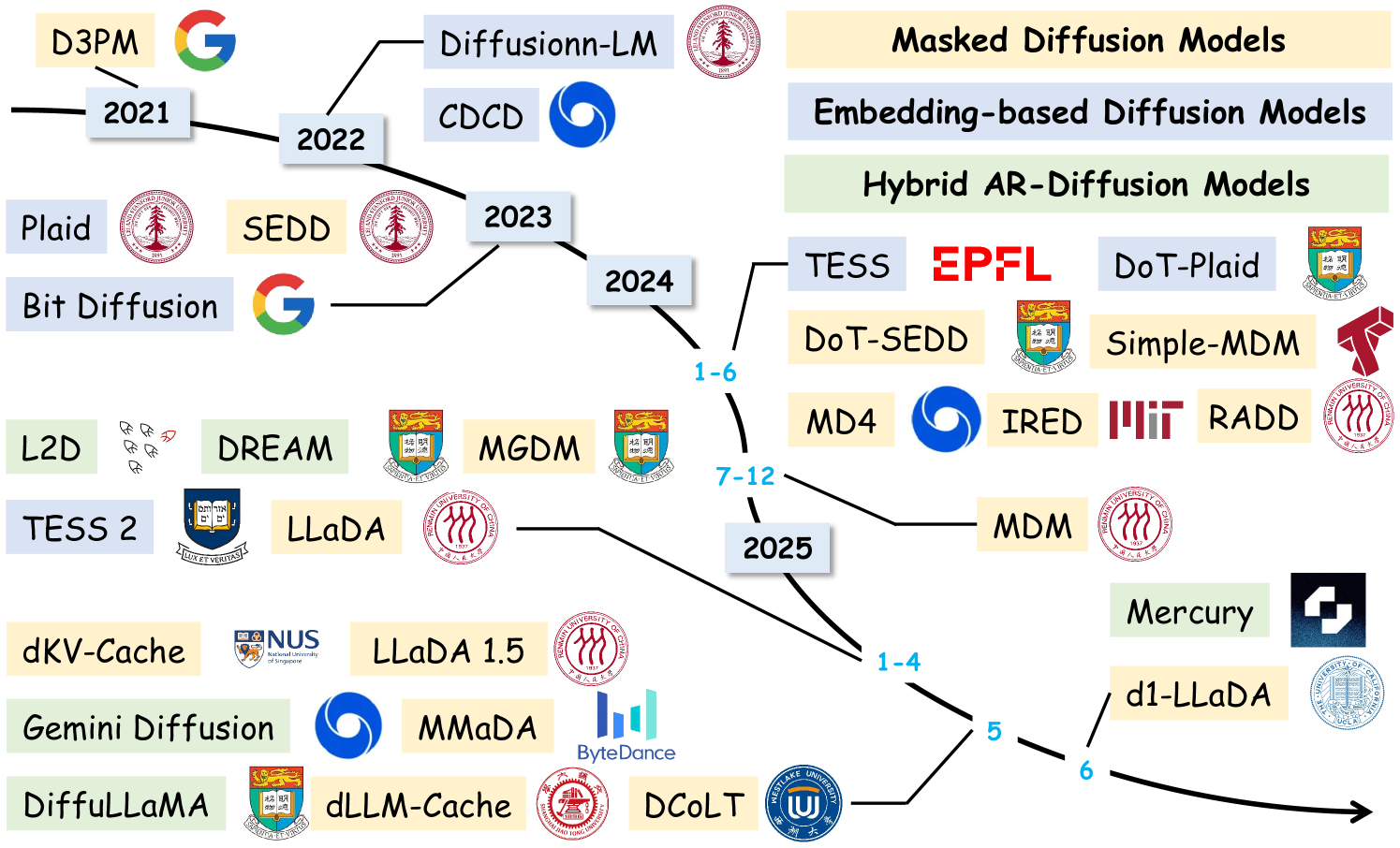

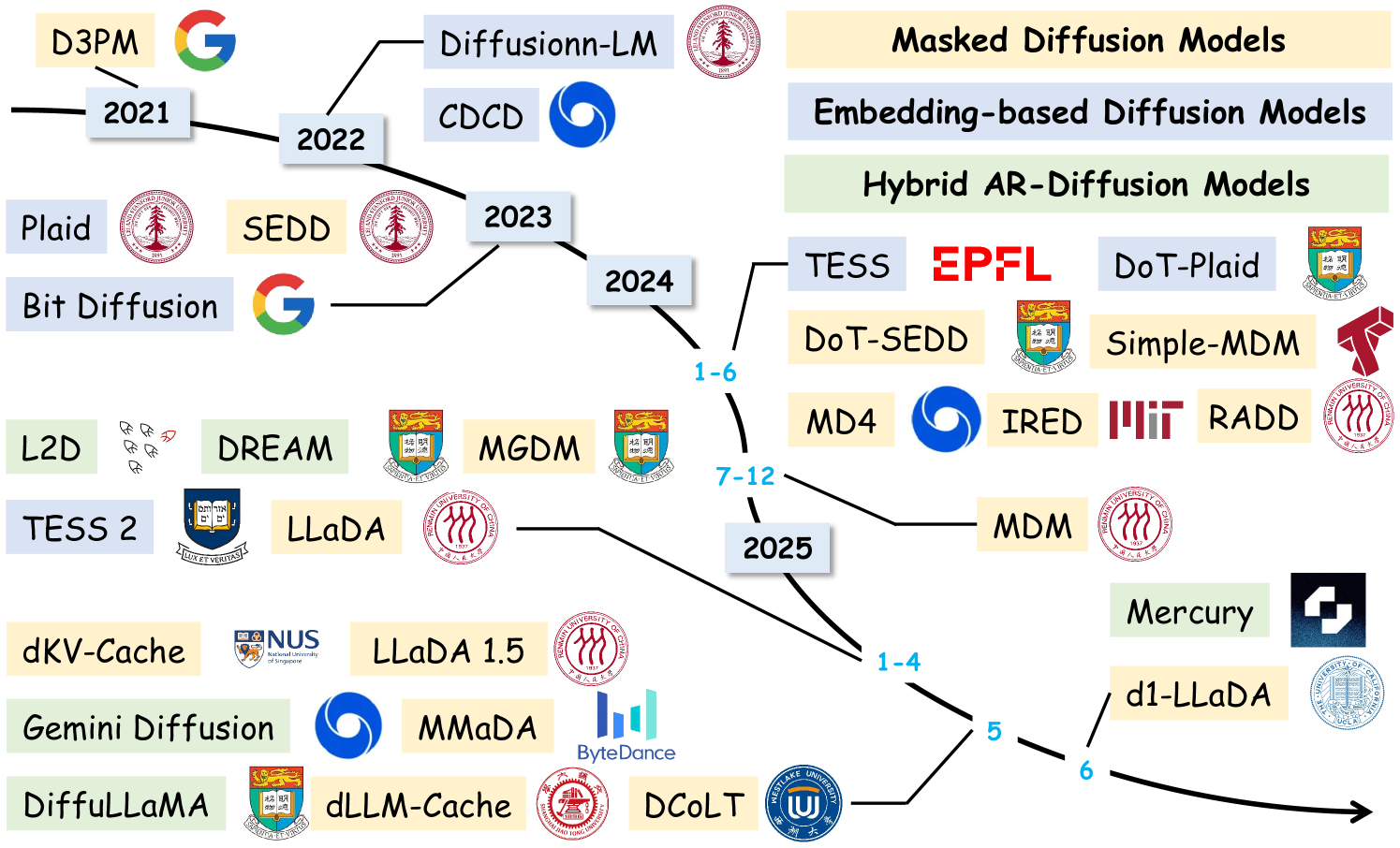

## Timeline Diagram: Evolution of Diffusion Models (2021-2025)

### Overview

This image is a timeline diagram illustrating the development and lineage of various diffusion models for generative AI, primarily focused on text or sequence generation. The timeline spans from 2021 to 2025, showing the emergence of different model families and their institutional origins. The diagram uses a branching, tree-like structure to show progression and relationships over time.

### Components/Axes

* **Timeline Axis:** A central, black, branching line flows from left to right, representing chronological progression.

* **Year Markers:** Key years are labeled along the timeline: `2021`, `2022`, `2023`, `2024`, and `2025`.

* **Sub-Year Markers:** Within 2024 and 2025, there are markers for month ranges: `1-6` and `7-12` for 2024; `1-4`, `5`, and `6` for 2025.

* **Model Labels:** Individual models are presented in colored boxes. The box color corresponds to a category defined in the legend.

* **Legend (Top-Right):** Defines three model categories:

    * **Yellow Box:** `Masked Diffusion Models`

    * **Blue Box:** `Embedding-based Diffusion Models`

    * **Green Box:** `Hybrid AR-Diffusion Models`

* **Institutional Logos:** Logos are placed next to model names to indicate the affiliated research institution or company.

### Detailed Analysis

The diagram maps models to their release year and category. Below is a reconstruction of the information, grouped by year and cross-referenced with the legend colors.

**2021**

* `D3PM` (Yellow - Masked Diffusion Model) - Logo: Google (G)

* `Plaid` (Blue - Embedding-based Diffusion Model) - Logo: Stanford University (red tree seal)

**2022**

* `Diffusion-LM` (Blue - Embedding-based Diffusion Model) - Logo: Stanford University

* `CDCD` (Blue - Embedding-based Diffusion Model) - Logo: Google (blue swirl)

**2023**

* `SEDD` (Yellow - Masked Diffusion Model) - Logo: Stanford University

* `Bit Diffusion` (Blue - Embedding-based Diffusion Model) - Logo: Google (G)

* `L2D` (Green - Hybrid AR-Diffusion Model) - Logo: Abstract bird/arrow symbol

* `DREAM` (Green - Hybrid AR-Diffusion Model) - Logo: Stanford University

* `MGDM` (Yellow - Masked Diffusion Model) - Logo: Stanford University

**2024 (Months 1-6)**

* `TESS` (Blue - Embedding-based Diffusion Model) - Logo: EPFL (red text)

* `DoT-Plaid` (Blue - Embedding-based Diffusion Model) - Logo: Stanford University

* `DoT-SEDD` (Yellow - Masked Diffusion Model) - Logo: Stanford University

* `Simple-MDM` (Yellow - Masked Diffusion Model) - Logo: Tsinghua University (red "T")

* `MD4` (Blue - Embedding-based Diffusion Model) - Logo: Google (blue swirl)

* `IRED` (Yellow - Masked Diffusion Model) - Logo: MIT (red block letters)

* `RADD` (Yellow - Masked Diffusion Model) - Logo: The Chinese University of Hong Kong (red seal)

**2024 (Months 7-12)**

* `TESS 2` (Blue - Embedding-based Diffusion Model) - Logo: Duke University (blue shield)

* `LLaDA` (Yellow - Masked Diffusion Model) - Logo: Stanford University

**2025 (Months 1-4)**

* `MDM` (Yellow - Masked Diffusion Model) - Logo: Stanford University

* `Mercury` (Green - Hybrid AR-Diffusion Model) - Logo: Abstract black/white geometric symbol

* `d1-LLaDA` (Yellow - Masked Diffusion Model) - Logo: University of California, San Diego (blue seal)

**2025 (Month 5)**

* `dKV-Cache` (Yellow - Masked Diffusion Model) - Logo: National University of Singapore (NUS shield)

* `LLaDA 1.5` (Yellow - Masked Diffusion Model) - Logo: Stanford University

* `Gemini Diffusion` (Green - Hybrid AR-Diffusion Model) - Logo: Google (blue swirl)

* `MMaDA` (Yellow - Masked Diffusion Model) - Logo: ByteDance (blue bars)

* `DiffuLLaMA` (Green - Hybrid AR-Diffusion Model) - Logo: Stanford University

* `dLLM-Cache` (Yellow - Masked Diffusion Model) - Logo: Stanford University

* `DCoLT` (Yellow - Masked Diffusion Model) - Logo: University of Waterloo (blue "U" crest)

**2025 (Month 6)**

* The timeline continues with an arrow pointing right, indicating ongoing development beyond the labeled models.
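The frequency claims in the observations below (which institution appears most often, how many models each year contributes, when hybrid models first appear) can be checked by tallying the reconstruction above. The following Python sketch transcribes that list by hand; the tuple layout, the `MODELS` name, and the abbreviated institution names are illustrative choices, and the two abstract logos (`L2D`, `Mercury`) are recorded as "unidentified". The tallies are only as reliable as the color and logo readings they are built on, and the 2025 count covers months 1-5 only.

```python
from collections import Counter

# Hand transcription of the "Detailed Analysis" list:
# (period, model, category, institution as read from its logo).
# Institution names are abbreviated; the two abstract logos are "unidentified".
MODELS = [
    ("2021", "D3PM", "masked", "Google"),
    ("2021", "Plaid", "embedding", "Stanford"),
    ("2022", "Diffusion-LM", "embedding", "Stanford"),
    ("2022", "CDCD", "embedding", "Google"),
    ("2023", "SEDD", "masked", "Stanford"),
    ("2023", "Bit Diffusion", "embedding", "Google"),
    ("2023", "L2D", "hybrid", "unidentified"),
    ("2023", "DREAM", "hybrid", "Stanford"),
    ("2023", "MGDM", "masked", "Stanford"),
    ("2024 1-6", "TESS", "embedding", "EPFL"),
    ("2024 1-6", "DoT-Plaid", "embedding", "Stanford"),
    ("2024 1-6", "DoT-SEDD", "masked", "Stanford"),
    ("2024 1-6", "Simple-MDM", "masked", "Tsinghua"),
    ("2024 1-6", "MD4", "embedding", "Google"),
    ("2024 1-6", "IRED", "masked", "MIT"),
    ("2024 1-6", "RADD", "masked", "CUHK"),
    ("2024 7-12", "TESS 2", "embedding", "Duke"),
    ("2024 7-12", "LLaDA", "masked", "Stanford"),
    ("2025 1-4", "MDM", "masked", "Stanford"),
    ("2025 1-4", "Mercury", "hybrid", "unidentified"),
    ("2025 1-4", "d1-LLaDA", "masked", "UCSD"),
    ("2025 5", "dKV-Cache", "masked", "NUS"),
    ("2025 5", "LLaDA 1.5", "masked", "Stanford"),
    ("2025 5", "Gemini Diffusion", "hybrid", "Google"),
    ("2025 5", "MMaDA", "masked", "ByteDance"),
    ("2025 5", "DiffuLLaMA", "hybrid", "Stanford"),
    ("2025 5", "dLLM-Cache", "masked", "Stanford"),
    ("2025 5", "DCoLT", "masked", "Waterloo"),
]

# Collapse sub-year periods such as "2024 1-6" to the calendar year.
per_year = Counter(period.split()[0] for period, _, _, _ in MODELS)
per_category = Counter(category for _, _, category, _ in MODELS)
per_institution = Counter(inst for _, _, _, inst in MODELS)

# Categories in which Stanford-labeled models appear.
stanford_categories = {cat for _, _, cat, inst in MODELS if inst == "Stanford"}
# Earliest calendar year containing a hybrid AR-diffusion model.
first_hybrid_year = min(p.split()[0] for p, _, cat, _ in MODELS if cat == "hybrid")

print(sorted(per_year.items()))
# [('2021', 2), ('2022', 2), ('2023', 5), ('2024', 9), ('2025', 10)]
print(per_category.most_common())
# [('masked', 15), ('embedding', 8), ('hybrid', 5)]
print(per_institution.most_common(2))
# [('Stanford', 12), ('Google', 5)]
print(sorted(stanford_categories), first_hybrid_year)
# ['embedding', 'hybrid', 'masked'] 2023
```

`Counter` keeps each tally to a single expression; if the model names in each group are needed as well, a dictionary of lists keyed the same way would work equally well.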

### Key Observations

1. **Institutional Dominance:** Stanford University (red tree seal) is the most frequently appearing institution, attached to 12 of the 28 models listed above and spanning all three categories and the full timeline. Google, with 5 models, is the next most frequent contributor.

2. **Category Evolution:** The early years (2021-2022) show a mix of Masked (Yellow) and Embedding-based (Blue) models. Hybrid AR-Diffusion models (Green) first appear in 2023 (`L2D`, `DREAM`) and recur through 2025 (`Mercury`, `Gemini Diffusion`, `DiffuLLaMA`).

3. **Temporal Clustering:** The number of models listed rises from two each in 2021 and 2022 and five in 2023 to nine in 2024 and ten in 2025 (months 1-5 alone), suggesting an acceleration in research activity in this field.

4. **Model Lineage:** The branching structure implies evolutionary relationships. For example, `DoT-Plaid` and `DoT-SEDD` in 2024 appear as derivatives or successors to earlier `Plaid` (2021) and `SEDD` (2023) models. Similarly, `LLaDA` (2024) leads to `LLaDA 1.5` and `d1-LLaDA` in 2025.
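Observation 4 infers lineage from the branching layout and from shared names. The naming half of that argument can be made explicit with a simple heuristic, sketched below in Python under the assumption that a successor reuses an earlier model's full name as a hyphen- or space-separated part of its own (`DoT-Plaid` contains `Plaid`). The `name_lineage` function and its helpers are hypothetical; they ignore the diagram's actual branches and institutions, so they can only suggest, not confirm, a relationship, and they miss successors whose names do not reuse a predecessor's name.

```python
import re

# Model names in the order the reconstruction lists them, grouped by period, oldest first.
NAMES = [
    "D3PM", "Plaid", "Diffusion-LM", "CDCD", "SEDD", "Bit Diffusion",
    "L2D", "DREAM", "MGDM", "TESS", "DoT-Plaid", "DoT-SEDD",
    "Simple-MDM", "MD4", "IRED", "RADD", "TESS 2", "LLaDA", "MDM",
    "Mercury", "d1-LLaDA", "dKV-Cache", "LLaDA 1.5", "Gemini Diffusion",
    "MMaDA", "DiffuLLaMA", "dLLM-Cache", "DCoLT",
]


def tokens(name):
    """Split a model name on hyphens and whitespace."""
    return re.split(r"[-\s]+", name)


def contains_run(haystack, needle):
    """True if `needle` occurs as a contiguous run of tokens in `haystack`."""
    n = len(needle)
    return any(haystack[j:j + n] == needle for j in range(len(haystack) - n + 1))


def name_lineage(names):
    """Hypothetical heuristic: link each model to every earlier model whose
    full name appears as a contiguous run of its own name's tokens."""
    edges = []
    for i, name in enumerate(names):
        own = tokens(name)
        for earlier in names[:i]:
            if contains_run(own, tokens(earlier)):
                edges.append((earlier, name))
    return edges


edges = name_lineage(NAMES)
print(edges)
# [('Plaid', 'DoT-Plaid'), ('SEDD', 'DoT-SEDD'), ('TESS', 'TESS 2'),
#  ('LLaDA', 'd1-LLaDA'), ('LLaDA', 'LLaDA 1.5')]
```

On this list the heuristic recovers the pairs named in observation 4 and also links `TESS` to `TESS 2`, even though the reconstruction attributes those two models to different institutions (EPFL and Duke), a reminder that a shared name alone is weak evidence of lineage.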

### Interpretation

This diagram serves as a **research landscape map** for diffusion-based language models. It visually argues that the field is rapidly evolving and diversifying.

* **Trend:** The progression from 2021 to 2025 shows a clear trend from foundational models (D3PM, Plaid) towards more specialized and hybrid architectures. The emergence of the "Hybrid AR-Diffusion" category (Green) in 2023 and its persistence suggest a research direction that combines the strengths of autoregressive (AR) and diffusion models.

* **Collaboration & Competition:** The spread of logos across many institutions (Stanford, Google, EPFL, and others) points to parallel, competing efforts among major labs to advance the state of the art. Each model is shown with a single logo, so the diagram itself gives no direct evidence of cross-institution collaboration.

* **Pace of Innovation:** Models are grouped so densely in 2024 and 2025 that the timeline subdivides those years into month ranges, implying a very fast-paced research cycle in which new variants and improvements are published every few months.

* **Underlying Narrative:** The diagram tells a story of a technology moving from initial conception (2021) through a period of experimentation and categorization (2022-2023) into a phase of rapid iteration, scaling, and practical refinement (2024-2025), as seen with models like `LLaDA 1.5` and cache-focused models (`dKV-Cache`, `dLLM-Cache`). The arrow at the end suggests this evolution is ongoing and open-ended.
