## Diagram: Reinforcement Learning with Tool-Augmented Reasoning Pipeline

### Overview

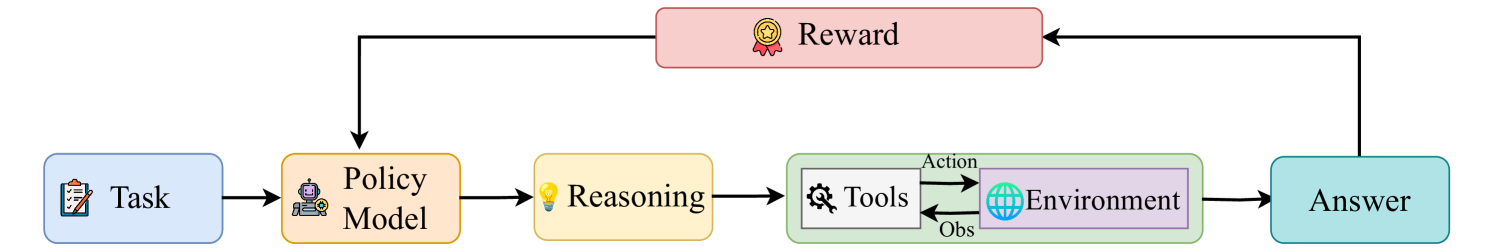

The image displays a flowchart illustrating a sequential process for an AI or machine learning system, likely depicting a reinforcement learning (RL) framework where an agent uses reasoning and tools to complete tasks. The diagram features a primary left-to-right workflow with a critical feedback loop involving a reward signal.

### Components/Axes

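The diagram consists of seven distinct, color-coded rectangular boxes connected by directional arrows; two of them (Tools and Environment) sit inside a green-bordered container. Each box carries a text label, and every box except Answer also contains an icon.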

**Main Flow Components (Left to Right):**

1. **Task** (Light blue box, leftmost): Contains a clipboard icon.

2. **Policy Model** (Light orange box): Contains a robot head icon.

3. **Reasoning** (Light yellow box): Contains a lightbulb icon.

4. **Tools & Environment Block** (Green-bordered container):

   * **Tools** (White box, left side of container): Contains a gear icon.

   * **Environment** (Light purple box, right side of container): Contains a globe icon.

   * **Connecting Arrows within Block:** An arrow labeled **"Action"** points from Tools to Environment. An arrow labeled **"Obs"** (Observation) points from Environment back to Tools.

5. **Answer** (Light teal box, rightmost): No icon visible.

**Feedback Component:**

6. **Reward** (Light red/pink box, positioned centrally above the main flow): Contains a medal/ribbon icon.

**Flow Arrows:**

* A solid black arrow points from **Task** to **Policy Model**.

* A solid black arrow points from **Policy Model** to **Reasoning**.

* A solid black arrow points from **Reasoning** to the **Tools** box within the green container.

* A solid black arrow points from the **Environment** box to **Answer**.

* A solid black arrow points from **Answer** up to **Reward**.

* A solid black arrow points from **Reward** down to **Policy Model**.
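The arrows listed above can be written down as a small directed graph, which makes the two loops easy to check mechanically. This is only a sketch of the diagram's topology: the node names and the "Action"/"Obs" labels come from the figure, while `EDGES`, `successors`, `reachable`, and `on_cycle` are hypothetical helper names introduced for illustration.

```python
# Directed edges transcribed from the diagram description.
# The third field is the arrow label, or None for an unlabeled arrow.
EDGES = [
    ("Task", "Policy Model", None),
    ("Policy Model", "Reasoning", None),
    ("Reasoning", "Tools", None),
    ("Tools", "Environment", "Action"),
    ("Environment", "Tools", "Obs"),
    ("Environment", "Answer", None),
    ("Answer", "Reward", None),
    ("Reward", "Policy Model", None),
]


def successors(node):
    """Return the nodes that `node` points to."""
    return [dst for src, dst, _ in EDGES if src == node]


def reachable(start):
    """Return every node reachable from `start` by following one or more arrows."""
    seen, stack = set(), [start]
    while stack:
        for nxt in successors(stack.pop()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def on_cycle(node):
    """A node lies on a loop if it can reach itself."""
    return node in reachable(node)
```

Under this encoding, `on_cycle("Tools")` and `on_cycle("Policy Model")` are both true (the local Action/Obs loop and the reward loop), while `on_cycle("Task")` is false because no arrow points back into Task.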

### Detailed Analysis

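The process flow is sequential from Task to Answer, with two loops: a local Action/Obs loop between Tools and Environment, and a reward loop from Answer back to the Policy Model: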

1. The pipeline begins with a **Task**.

2. The task is fed into a **Policy Model**, which presumably decides on an action or strategy.

3. The Policy Model's output goes to a **Reasoning** module, suggesting a step for deliberation or planning.

4. The reasoning output is directed to the **Tools**, which execute an **Action** on the **Environment**.

5. The **Environment** returns an observation (**Obs**) back to the Tools, creating a local interaction loop.

6. The final state or result from the Environment leads to an **Answer**.

7. The **Answer** is evaluated, generating a **Reward** signal.

8. This **Reward** is fed back to the **Policy Model**, presumably to update and improve it for future tasks.
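The eight steps above can be sketched as a single episode of a toy pipeline. This is a minimal illustration under stated assumptions, not the system behind the figure: the diagram does not specify how any component is implemented, so every function here (`policy_model`, `environment`, `run_tools`, `reward_fn`, `run_episode`) is hypothetical, the environment is a hard-coded lookup table, and the reward is an exact-match check against a known expected answer. The policy update in step 8 is left out because the figure does not say which training algorithm is used.

```python
def policy_model(task):
    """Steps 2-3: stand-in for Policy Model + Reasoning.

    Returns a plan, here a list of tool actions derived from the task.
    """
    return [("lookup", task)]


def environment(action):
    """Step 5: toy environment that answers 'lookup' actions from a fixed table."""
    facts = {"capital of France": "Paris"}
    kind, query = action
    if kind != "lookup":
        return "unsupported"
    return facts.get(query, "unknown")


def run_tools(plan):
    """Steps 4-5: send each Action to the Environment and record the returned Obs."""
    return [(action, environment(action)) for action in plan]


def reward_fn(answer, expected):
    """Step 7: outcome-only reward, 1.0 for an exact match and 0.0 otherwise."""
    return 1.0 if answer == expected else 0.0


def run_episode(task, expected):
    """Steps 1-7 end to end; returns (answer, reward)."""
    plan = policy_model(task)
    steps = run_tools(plan)
    # Step 6: the last observation is taken as the Answer.
    answer = steps[-1][1] if steps else ""
    return answer, reward_fn(answer, expected)
```

For example, `run_episode("capital of France", "Paris")` yields the answer `"Paris"` with reward `1.0`, whereas a task missing from the toy table yields `"unknown"` with reward `0.0`. In a real training setup, that scalar would be what flows back along the Reward arrow to update the policy.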

### Key Observations

* **Hierarchical Feedback:** The reward loop is a high-level feedback mechanism that updates the core Policy Model, while the Tools-Environment interaction represents a lower-level, iterative execution loop.

* **Component Isolation:** The "Tools" and "Environment" are grouped within a single green container, indicating they form a tightly coupled subsystem for interacting with the external world.

* **Iconography:** Six of the seven boxes carry a symbolic icon (clipboard, robot head, lightbulb, gear, globe, medal) that reinforces their function; the Answer box has no visible icon.

* **Linear Progression with Feedback:** The overall flow is linear (Task → Answer), but it is made adaptive by the reward signal that loops back to the Policy Model.

### Interpretation

This diagram models an AI agent architecture that goes beyond simple input-output mapping. It explicitly incorporates **reasoning** as an intermediate step between policy decision and action, and it assumes the agent has access to **tools** to manipulate an **environment**. The structure is characteristic of reinforcement learning for **tool-augmented language models**, where an agent learns through trial, error, and reward. The reward-to-policy loop also resembles **Reinforcement Learning from Human Feedback (RLHF)**, although the diagram does not indicate whether the reward comes from human feedback or from an automated check of the answer.

The key insight is the separation of the *policy* (the agent's strategy) from the *reasoning* and *tool-use* mechanisms. The policy is refined based on the ultimate reward received for its answers, not on the intermediate steps. This suggests a framework where the agent is trained to optimize for final outcomes, while being free to develop its own internal reasoning and tool-use strategies to achieve them. The "Obs" (observation) feedback from the Environment to the Tools is crucial, as it allows for dynamic adjustment of actions within a single task execution.
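To make the outcome-only reward concrete, the sketch below computes the return credited to each step of an episode when only the final answer is rewarded. It assumes a standard discounted return-to-go, which is a common choice in reinforcement learning but is not stated in the diagram; `outcome_only_rewards`, `returns_to_go`, and the `gamma` parameter are illustrative names.

```python
def outcome_only_rewards(num_steps, final_reward):
    """Per-step rewards when only the final answer is scored (assumes num_steps >= 1)."""
    return [0.0] * (num_steps - 1) + [final_reward]


def returns_to_go(rewards, gamma=1.0):
    """Discounted sum of future rewards from each step onward."""
    out, running = [], 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        out.append(running)
    return out[::-1]
```

With `gamma=1.0`, `returns_to_go(outcome_only_rewards(3, 1.0))` gives `[1.0, 1.0, 1.0]`: every reasoning and tool-use step in a successful episode receives the same credit, and none receives any in a failed one. With `gamma=0.5` the same episode gives `[0.25, 0.5, 1.0]`, so earlier steps are credited less.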