## Flowchart: Reinforcement Learning System Architecture

### Overview

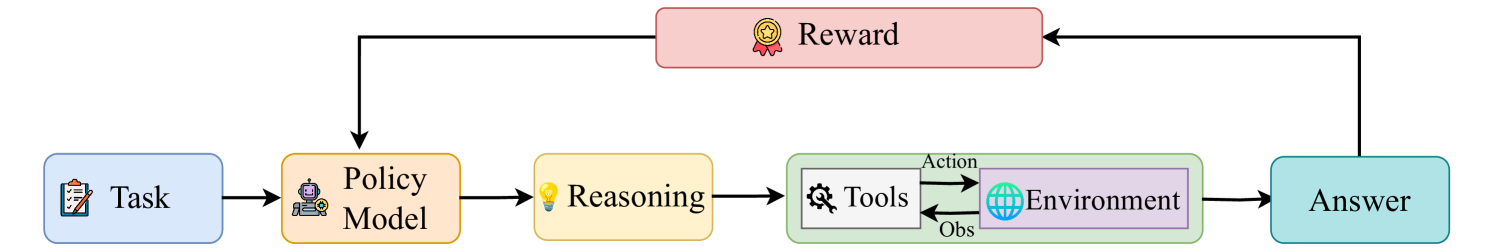

The diagram illustrates a cyclical process for a reinforcement learning system, where a task is processed through a policy model, reasoning, tools/environment interaction, and reward feedback to refine the policy. The flow emphasizes iterative learning and decision-making.

### Components

1. **Blocks**:

- **Task** (blue, clipboard icon): Represents the initial problem or objective.

- **Policy Model** (yellow, robot icon): Core decision-making component.

- **Reasoning** (yellow, light bulb icon): Logical processing step.

- **Tools** (green, wrench icon): External resources for action execution.

- **Environment** (green, globe icon): Contextual setting for actions.

- **Answer** (blue, speech bubble icon): Output result of the process.

- **Reward** (pink, trophy icon): Feedback signal for policy optimization.

2. **Arrows**:

- **Task → Policy Model**: Input task to the policy model.

- **Policy Model → Reasoning**: Policy model initiates reasoning.

- **Reasoning → Tools/Environment**: Reasoning directs actions via tools or environment.

- **Tools/Environment → Answer**: Actions produce observable outcomes.

- **Answer → Reward**: Outcomes generate rewards.

- **Reward → Policy Model**: Reward feedback refines the policy model.
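
The arrows listed above can be written down as a small directed graph, which makes the feedback loop easy to check mechanically. The following is a minimal sketch, not part of the original diagram: the names `EDGES` and `find_cycle` are illustrative, and treating "Tools" and "Environment" as two separate nodes is an assumption based on the block list.

```python
# Edges as described in the arrow list; "Tools" and "Environment" are
# modeled as separate nodes (an assumption, the arrows group them).
EDGES = {
    "Task": ["Policy Model"],
    "Policy Model": ["Reasoning"],
    "Reasoning": ["Tools", "Environment"],
    "Tools": ["Answer"],
    "Environment": ["Answer"],
    "Answer": ["Reward"],
    "Reward": ["Policy Model"],
}


def find_cycle(edges, start):
    """Return one path that revisits a node when walking from `start`, or None."""
    stack = [(start, [start])]
    while stack:
        node, path = stack.pop()
        for nxt in edges.get(node, []):
            if nxt in path:
                # Close the loop: slice from the first visit of `nxt`.
                return path[path.index(nxt):] + [nxt]
            stack.append((nxt, path + [nxt]))
    return None


cycle = find_cycle(EDGES, "Task")
print(" -> ".join(cycle))
```

The cycle found this way starts and ends at the policy model and does not include the task, which matches the description: the task enters once, and the loop runs through reasoning, execution, answer, and reward.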

### Detailed Analysis

- **Task**: The starting point, symbolizing the problem to be solved.

- **Policy Model**: Central component that determines actions based on reasoning and feedback.

- **Reasoning**: Logical step where the policy model evaluates possible actions.

- **Tools/Environment**: External systems or real-world contexts where actions are executed. "Tools" and "Environment" are parallel pathways under "Reasoning."

- **Answer**: Result of executing actions in the environment or using tools.

- **Reward**: Quantitative or qualitative feedback indicating the success of the action. This feedback loops back to the **Policy Model** to improve future decisions.
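
To make the roles above concrete, here is a toy sketch of one pass through the loop, repeated many times. Everything in it is hypothetical: the addition task, the `run_tools` and `run_environment` stand-ins, and the reward-weighted preference update are illustrative choices, not something the diagram specifies. The update rule is a simple heuristic, not a policy-gradient method.

```python
import random


def policy_model(weights, rng):
    """Sample a route ("tools" or "environment") in proportion to its weight."""
    routes = list(weights)
    return rng.choices(routes, weights=[weights[r] for r in routes])[0]


def run_tools(task):
    a, b = task
    return a + b  # hypothetical calculator tool: always correct


def run_environment(task):
    a, b = task
    return a + b + 1  # hypothetical environment: always off by one


def reward_fn(task, answer):
    # Reward is 1.0 for a correct sum, 0.0 otherwise.
    return 1.0 if answer == sum(task) else 0.0


def train(steps=200, lr=0.1, seed=0):
    rng = random.Random(seed)
    weights = {"tools": 1.0, "environment": 1.0}
    for _ in range(steps):
        task = (rng.randint(0, 9), rng.randint(0, 9))  # Task
        route = policy_model(weights, rng)             # Policy Model -> Reasoning
        if route == "tools":                           # Reasoning -> Tools/Environment
            answer = run_tools(task)
        else:
            answer = run_environment(task)
        reward = reward_fn(task, answer)               # Answer -> Reward
        weights[route] += lr * reward                  # Reward -> Policy Model
    return weights


weights = train()
print(weights)
```

Because only the tool route ever earns a reward in this toy setup, its weight grows while the environment route's weight stays at its initial value, so the policy drifts toward the route that produces correct answers.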

### Key Observations

1. **Feedback Loop**: The **Reward** directly influences the **Policy Model**, creating a reinforcement learning cycle where the model iteratively improves based on outcomes.

2. **Divergent Paths**: "Reasoning" branches into "Tools" and "Environment," suggesting flexibility in how actions are executed (e.g., using external tools vs. interacting with the environment).

3. **Cyclical Nature**: The diagram shows no terminal state: each answer yields a reward, and each reward feeds back into the policy model.

### Interpretation

This flowchart represents a **reinforcement learning (RL) framework**:

- The **Policy Model** acts as the agent, learning optimal actions through trial and error.

- **Reasoning** bridges high-level decision-making with low-level execution via **Tools** or **Environment**.

- **Reward** serves as the learning signal, guiding the policy toward better performance.

- The diagram shows no explicit termination condition, which suggests an open-ended optimization process. This is common in RL systems, where the goal is to maximize cumulative reward over time.
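
The cumulative-reward goal mentioned above is usually written as an expected discounted return. The diagram itself gives no formula, so the notation here is an assumption: $\pi_\theta$ stands for the policy model with parameters $\theta$, $\tau$ for one trajectory through the loop, $r_t$ for the reward at step $t$, $\gamma \in (0, 1]$ for a discount factor, and $T$ for the horizon.

$$
J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\left[\sum_{t=0}^{T} \gamma^{t} r_t\right]
$$

Under this reading, the Reward → Policy Model arrow corresponds to adjusting $\theta$ so that $J(\theta)$ increases.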

The diagram emphasizes the interplay between **decision-making** (policy model), **execution** (tools/environment), and **feedback** (reward), highlighting the adaptive nature of RL systems.