TECHNICAL ASSET FINGERPRINT

b9e8cda4b220eee9f7bb1c55

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

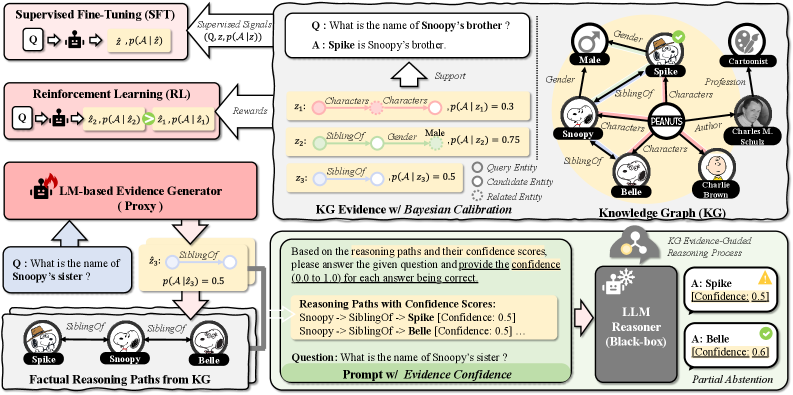

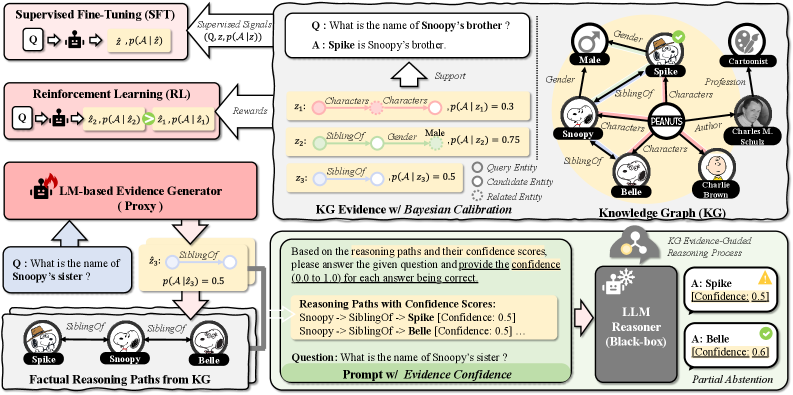

## Diagram: Knowledge Graph Reasoning Process

### Overview

The image illustrates a knowledge graph reasoning process, incorporating supervised fine-tuning (SFT), reinforcement learning (RL), and a language model (LM)-based evidence generator to answer questions about relationships within a knowledge graph. The process involves querying the knowledge graph, generating reasoning paths, and calibrating confidence scores to arrive at an answer.

### Components/Axes

* **Supervised Fine-Tuning (SFT)**: A process where a model is trained on labeled data. The input is a question (Q), and the output is a predicted reasoning path (z-hat) together with the probability p(A | z-hat) that the path leads to the answer.

* Supervised Signals: (Q, z, p(A | z))

* **Reinforcement Learning (RL)**: A process where a model learns through rewards. The input is a question (Q), and the output is a reasoning path (z2) and its probability p(A | z2), which is then refined to z1 and p(A | z1).

* Rewards: Feedback signal for the RL process.

* **LM-based Evidence Generator (Proxy)**: A component that generates evidence based on the input question.

* **Factual Reasoning Paths from KG**: Reasoning paths derived from the knowledge graph.

* **KG Evidence w/ Bayesian Calibration**: A process that calibrates the evidence from the knowledge graph using Bayesian methods.

* **Knowledge Graph (KG)**: A graph representing entities and their relationships.

* Entities: Male, Spike, Snoopy, Belle, Charlie Brown, Charles M. Schulz

* Relationships: Gender, SiblingOf, Characters, Profession, Author

* **Prompt w/ Evidence Confidence**: The question prompt along with the confidence scores of the reasoning paths.

* **LLM Reasoner (Black-box)**: A language model reasoner that processes the evidence and provides an answer.

* KG Evidence-Guided Reasoning Process

* **Partial Abstention**: The final output, indicating the model's confidence in the answers.

* **Legend**:

* Query Entity: Represented by a circle.

* Candidate Entity: Represented by a dotted circle.

* Related Entity: Represented by a filled circle.

### Detailed Analysis or Content Details

* **Example Question 1**: "What is the name of Snoopy's brother?"

* Answer: "Spike is Snoopy's brother."

* Support:

* z1: Characters -> Characters, p(A | z1) = 0.3 (red)

* z2: SiblingOf -> Gender -> Male, p(A | z2) = 0.75 (green)

* z3: SiblingOf, p(A | z3) = 0.5 (gray)

* **Example Question 2**: "What is the name of Snoopy's sister?"

* z3: SiblingOf, p(A | z3) = 0.5 (gray)

* Factual Reasoning Paths from KG:

* Spike -> SiblingOf -> Snoopy

* Snoopy -> SiblingOf -> Belle

* **Reasoning Paths with Confidence Scores**:

* Snoopy -> SiblingOf -> Spike [Confidence: 0.5]

* Snoopy -> SiblingOf -> Belle [Confidence: 0.5]

* **LLM Reasoner Output**:

* A: Spike [Confidence: 0.5] (Incorrect)

* A: Belle [Confidence: 0.6] (Correct)
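The path extraction step above can be sketched in a few lines. This is a minimal illustration, not the method behind the diagram: the triple list is transcribed from the description, the edge direction (Snoopy as head) follows the "Reasoning Paths with Confidence Scores" entries, and the helper names `build_index`, `follow_path`, and `format_chain` are hypothetical.

```python
from collections import defaultdict

# Toy triples (head, relation, tail) transcribed from the diagram description.
# The edge direction is an assumption made for this sketch.
TRIPLES = [
    ("Snoopy", "SiblingOf", "Spike"),
    ("Snoopy", "SiblingOf", "Belle"),
    ("Spike", "Gender", "Male"),
]


def build_index(triples):
    """Index tail entities by (head, relation) for quick lookups."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[(head, relation)].append(tail)
    return index


def follow_path(index, start, relations):
    """Return every entity chain reachable from `start` via `relations`."""
    chains = [[start]]
    for relation in relations:
        chains = [
            chain + [tail]
            for chain in chains
            for tail in index.get((chain[-1], relation), [])
        ]
    return chains


def format_chain(chain, relations):
    """Interleave entities and relations: 'Snoopy -> SiblingOf -> Belle'."""
    parts = [chain[0]]
    for relation, entity in zip(relations, chain[1:]):
        parts += [relation, entity]
    return " -> ".join(parts)


index = build_index(TRIPLES)
paths = follow_path(index, "Snoopy", ["SiblingOf"])
for chain in paths:
    print(format_chain(chain, ["SiblingOf"]))
```

Following `["SiblingOf", "Gender"]` from Snoopy keeps only the chain that ends in `Male`, which is the shape of the z2 path in the brother example.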

### Key Observations

* The diagram illustrates a multi-stage process for answering questions using a knowledge graph.

* The process involves supervised fine-tuning, reinforcement learning, and an LM-based evidence generator.

* The knowledge graph contains entities and their relationships, which are used to generate reasoning paths.

* The confidence scores of the reasoning paths are calibrated using Bayesian methods.

* The LLM reasoner processes the evidence and provides an answer with a confidence score.

### Interpretation

The diagram shows an approach to knowledge graph reasoning that combines several techniques to improve the accuracy and reliability of answers. Supervised fine-tuning trains the model on labeled data, and reinforcement learning further optimizes it using rewards. The LM-based evidence generator supplies reasoning paths that support the reasoning process. Bayesian calibration aligns the confidence scores of those paths with how likely they are to lead to the correct answer. The example questions and reasoning paths illustrate how the process works in practice. In the final output for the question about Snoopy's sister, the model assigns the correct answer, Belle, a higher confidence (0.6) than the incorrect answer, Spike (0.5).


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Knowledge Graph Evidence-Guided Reasoning Process

### Overview

This diagram illustrates a knowledge graph (KG) evidence-guided reasoning process, comparing Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) approaches to an LM-based evidence generator. It demonstrates how KG evidence is used to calibrate reasoning paths and provide confidence scores for answers generated by a Large Language Model (LLM). The diagram is segmented into four main areas: SFT/RL comparison, KG Evidence w/ Bayesian Calibration, Knowledge Graph (KG), and LLM Reasoner w/ Evidence Confidence.

### Components/Axes

The diagram contains several key components:

* **Supervised Fine-Tuning (SFT):** Input question (Q), reasoning path (z), probability of answer given reasoning path (p(A|z)), and supervised signals (Q, z, p(A|z)).

* **Reinforcement Learning (RL):** Input question (Q), reasoning path (z), probability of answer given reasoning path (p(A|z)), probability of reasoning path (p(z|A)), and rewards.

* **LM-based Evidence Generator (Proxy):** Connects to both SFT and RL, and generates reasoning paths from questions.

* **KG Evidence w/ Bayesian Calibration:** Demonstrates how KG evidence is used to calculate probabilities of reasoning paths. Includes examples with probabilities p(A|z) = 0.3, 0.75, and 0.5.

* **Knowledge Graph (KG):** A visual representation of entities and relationships (e.g., Snoopy, Spike, Charlie Brown, siblings, gender, profession, author).

* **LLM Reasoner (Black-box):** Takes reasoning paths with confidence scores as input and outputs answers with confidence levels.

* **Prompt w/ Evidence Confidence:** Shows the input prompt to the LLM Reasoner and the resulting confidence scores for each answer.

### Detailed Analysis or Content Details

**1. SFT/RL Comparison (Left Side):**

* **SFT:** The flow shows Q -> z -> p(A|z) with "Supervised Signals" feeding back into the process.

* **RL:** The flow shows Q -> z -> p(A|z) -> p(z|A) with "Rewards" feeding back into the process.

* **LM-based Evidence Generator (Proxy):** Receives questions like "What is the name of Snoopy's sister?" and outputs reasoning paths.

**2. KG Evidence w/ Bayesian Calibration (Top-Right):**

* **Question:** "What is the name of Snoopy's brother?"

* **Answer:** "Spike is Snoopy's brother."

* **Reasoning Path:** Characters -> Characters -> p(A|z) = 0.3

* **Reasoning Path:** Characters -> Gender -> p(A|z) = 0.75

* **Reasoning Path:** SiblingsOf -> Gender -> p(A|z) = 0.5

**3. Knowledge Graph (KG) (Center-Right):**

* Entities: Snoopy, Spike, Charlie Brown, Belle, Male, PEANUTS, Charles M. Schulz.

* Relationships: Gender, SiblingsOf, Cartoonist, Author, Profession, Characters.

* The graph visually connects these entities and relationships.

**4. LLM Reasoner w/ Evidence Confidence (Bottom-Right):**

* **Prompt:** "What is the name of Snoopy's sister?"

* **Reasoning Paths with Confidence Scores:**

* Snoopy -> SiblingsOf -> Spike [Confidence: 0.5]

* Snoopy -> SiblingsOf -> Belle [Confidence: 0.6]

* **Answers with Confidence:**

* A: Spike [Confidence: 0.5] (represented by a yellow triangle)

* A: Belle [Confidence: 0.6] (represented by a yellow triangle)

* **Partial Abstention:** Indicates that the LLM may abstain from answering if confidence is low.
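One way to read partial abstention is as a filter over the reasoner's scored answers. The sketch below assumes a fixed cutoff; the diagram does not state a threshold, so `threshold=0.5` and the function name `partial_abstention` are hypothetical choices for illustration only.

```python
def partial_abstention(answers, threshold):
    """Split (answer, confidence) pairs into kept and abstained lists.

    An answer is kept only if its confidence is strictly above `threshold`.
    """
    kept = [(a, c) for a, c in answers if c > threshold]
    abstained = [(a, c) for a, c in answers if c <= threshold]
    return kept, abstained


# Scored answers as listed in the description above.
answers = [("Spike", 0.5), ("Belle", 0.6)]
kept, abstained = partial_abstention(answers, threshold=0.5)
print("kept:", kept)
print("abstained:", abstained)
```

With this cutoff the system would answer "Belle" and abstain on "Spike"; a different cutoff changes that split, which is why the threshold should be treated as a tunable assumption.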

**5. Factual Reasoning Paths from KG (Bottom-Left):**

* **Question:** "What is the name of Snoopy's sister?"

* **Reasoning Path:** Spike -> SiblingsOf -> Snoopy with p(A|z) = 0.5

* **Reasoning Path:** Belle -> SiblingsOf -> Snoopy

### Key Observations

* The KG evidence provides probabilities for different reasoning paths, which are then used to calibrate the LLM's confidence in its answers.

* The LLM Reasoner outputs answers with associated confidence scores, allowing for partial abstention when confidence is low.

* The diagram highlights the difference between SFT and RL approaches, with SFT relying on supervised signals and RL relying on rewards.

* The probabilities p(A|z) vary significantly (0.3, 0.5, 0.75), indicating varying degrees of support for different reasoning paths.
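The diagram does not say how the p(A|z) values are computed. As a rough sketch of what a Bayesian estimate could look like, the code below treats each entity reached by a path as a trial and takes a Beta-Binomial posterior mean. The estimator, the uniform prior, and the name `path_confidence` are all assumptions; the sketch only happens to match the 0.5 shown for the `SiblingOf` path and makes no claim about the 0.3 or 0.75 values.

```python
def path_confidence(candidates, correct_answers, prior_hits=1.0, prior_misses=1.0):
    """Posterior mean of p(A|z) under a Beta(prior_hits, prior_misses) prior.

    `candidates` are the entities reached by following path z from the
    query entity; a candidate counts as a hit if it is a correct answer.
    """
    hits = sum(1 for c in candidates if c in correct_answers)
    misses = len(candidates) - hits
    return (hits + prior_hits) / (hits + misses + prior_hits + prior_misses)


# "What is the name of Snoopy's brother?" with path z = SiblingOf:
# the path reaches Spike and Belle, and only Spike is correct.
candidates = ["Spike", "Belle"]
conf = path_confidence(candidates, {"Spike"})
print(conf)  # (1 + 1) / (2 + 2) = 0.5
```

A path that reaches no entities falls back to the prior mean (0.5 under the uniform prior), which is one reason to make the prior explicit rather than dividing raw counts.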

### Interpretation

The diagram demonstrates a sophisticated approach to knowledge-based reasoning using LLMs. By integrating a knowledge graph and Bayesian calibration, the system aims to improve the accuracy and reliability of LLM-generated answers. The confidence scores provide a measure of uncertainty, allowing for more informed decision-making. The comparison between SFT and RL suggests that both approaches can benefit from KG evidence, but they differ in how they leverage this information. The use of partial abstention is a crucial feature, preventing the LLM from providing potentially incorrect answers when it lacks sufficient confidence. The diagram illustrates a move towards more explainable and trustworthy AI systems by explicitly incorporating knowledge and uncertainty into the reasoning process. The diagram is a conceptual illustration of a system, and does not contain specific numerical data beyond the probabilities associated with reasoning paths. It focuses on the *process* of reasoning rather than presenting quantitative results.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Diagram: Knowledge Graph Evidence-Guided Reasoning Pipeline

### Overview

This image is a technical flowchart illustrating a machine learning pipeline that integrates Supervised Fine-Tuning (SFT), Reinforcement Learning (RL), and a Language Model (LM)-based Evidence Generator to answer questions using a Knowledge Graph (KG). The system generates reasoning paths from the KG, calibrates their confidence, and uses them to prompt an LLM Reasoner, which can provide answers with confidence scores or abstain partially.

### Components/Axes

The diagram is organized into several interconnected blocks and regions:

**1. Left Column (Training & Evidence Generation Pipeline):**

* **Top Block:** "Supervised Fine-Tuning (SFT)". Input: `Q` (Question). Output: `ẑ, p(A|ẑ)` (Answer prediction and probability). Receives "Supervised Signals (Q, z, p(A|z))".

* **Middle Block:** "Reinforcement Learning (RL)". Input: `Q`. Process: `ẑ₁, p(A|ẑ₁) → ẑ₂, p(A|ẑ₂)`. Receives "Rewards".

* **Bottom Block:** "LM-based Evidence Generator (Proxy)". Input: `Q: What is the name of Snoopy's sister?`. Output: `ẑ₃: SiblingOf` with `p(A|ẑ₃) = 0.5`. This block feeds into the "Factual Reasoning Paths from KG" section.

**2. Bottom-Left Region (Factual Reasoning Paths from KG):**

* Shows a small graph with nodes: `Spike`, `Snoopy`, `Belle`.

* Edges are labeled `SiblingOf`.

* This visually represents the reasoning path: `Spike --SiblingOf--> Snoopy --SiblingOf--> Belle`.

**3. Central Top Region (KG Evidence w/ Bayesian Calibration):**

* **Question:** `Q: What is the name of Snoopy's brother?`

* **Answer:** `A: Spike is Snoopy's brother.`

* **Evidence Paths (z₁, z₂, z₃):**

* `z₁: Characters --Characters-->` with `p(A|z₁) = 0.3`

* `z₂: SiblingOf --Gender--> Male` with `p(A|z₂) = 0.75`

* `z₃: SiblingOf` with `p(A|z₃) = 0.5`

* **Legend:** Defines node colors: `Query Entity` (light blue), `Candidate Entity` (light orange), `Related Entity` (light green).

**4. Top-Right Region (Knowledge Graph - KG):**

* A network graph centered on `PEANUTS`.

* **Nodes (Characters):** `Snoopy`, `Spike`, `Belle`, `Charlie Brown`, `Charles M. Schulz`.

* **Node Attributes:** `Gender: Male` (for Spike), `Profession: Cartoonist` (for Charles M. Schulz).

* **Relationship Edges:** `SiblingOf` (connecting Snoopy-Spike, Snoopy-Belle), `Characters` (connecting PEANUTS to Snoopy, Spike, Belle, Charlie Brown), `Author` (connecting Charles M. Schulz to PEANUTS).

**5. Bottom-Right Region (KG Evidence-Guided Reasoning Process):**

* **Input Prompt:** "Based on the reasoning paths and their confidence scores, please answer the given question and provide the confidence (0.0 to 1.0) for each answer being correct."

* **Reasoning Paths with Confidence Scores:**

* `Snoopy -> SiblingOf -> Spike [Confidence: 0.5]`

* `Snoopy -> SiblingOf -> Belle [Confidence: 0.5]`

* **Question:** `What is the name of Snoopy's sister?`

* **Label:** "Prompt w/ Evidence Confidence"

* **Process Block:** "LLM Reasoner (Black-box)".

* **Outputs:**

* `A: Spike [Confidence: 0.5]` (with a yellow warning icon).

* `A: Belle [Confidence: 0.6]` (with a green checkmark icon).

* **Label:** "Partial Abstention".
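The "Prompt w/ Evidence Confidence" block can be approximated by a small formatting function. The instruction text is the one quoted above; the overall layout, the section label placement, and the name `build_prompt` are assumptions for this sketch, since the exact prompt template is not shown in full.

```python
# Instruction text as quoted in the diagram description.
INSTRUCTION = (
    "Based on the reasoning paths and their confidence scores, please answer "
    "the given question and provide the confidence (0.0 to 1.0) for each "
    "answer being correct."
)


def build_prompt(question, scored_paths):
    """Assemble instruction, scored reasoning paths, and question into one prompt."""
    lines = [INSTRUCTION, "", "Reasoning Paths with Confidence Scores:"]
    for path, confidence in scored_paths:
        lines.append(f"{path} [Confidence: {confidence}]")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


scored_paths = [
    ("Snoopy -> SiblingOf -> Spike", 0.5),
    ("Snoopy -> SiblingOf -> Belle", 0.5),
]
prompt = build_prompt("What is the name of Snoopy's sister?", scored_paths)
print(prompt)
```

The resulting string is what would be sent to the black-box reasoner; no model call is made here.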

### Detailed Analysis

The diagram details a multi-stage process for evidence-based question answering:

1. **Evidence Generation:** An LM-based generator proposes potential evidence paths (e.g., `SiblingOf`) from a Knowledge Graph in response to a question. Each path `z` is assigned an initial probability `p(A|z)`.

2. **Knowledge Graph Structure:** The KG contains entities (Snoopy, Spike, Belle) and relationships (`SiblingOf`, `Characters`, `Author`). It also includes attributes (Gender, Profession).

3. **Bayesian Calibration:** Evidence paths are evaluated. For the question about Snoopy's brother, the path `SiblingOf -> Male` (`z₂`) has the highest confidence (0.75), correctly pointing to Spike.

4. **Reasoning Path Extraction:** For the question about Snoopy's sister, two factual paths are extracted from the KG: `Snoopy -> SiblingOf -> Spike` and `Snoopy -> SiblingOf -> Belle`. Both are assigned an initial confidence of 0.5.

5. **LLM Reasoning with Confidence:** The paths, their confidence scores, and the question are formatted into a prompt for a black-box LLM Reasoner. The LLM outputs two possible answers (Spike and Belle) with adjusted confidence scores (0.5 and 0.6, respectively).

6. **Partial Abstention:** The system demonstrates "Partial Abstention" by presenting multiple answers with their confidences rather than forcing a single, potentially incorrect choice. The green checkmark on "Belle" suggests it is the more correct answer for "sister," but the system acknowledges the ambiguity.
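The last two steps depend on reading answers and confidences back out of the reasoner's text. A minimal sketch of that parsing is shown below, assuming the output follows the `A: <answer> [Confidence: <score>]` pattern from the diagram; the regular expression and the name `parse_answers` are hypothetical, and a real model's output may need looser matching.

```python
import re

# Matches lines such as "A: Belle [Confidence: 0.6]".
ANSWER_RE = re.compile(
    r"A:\s*(?P<answer>.+?)\s*\[Confidence:\s*(?P<conf>[0-9]*\.?[0-9]+)\]"
)


def parse_answers(text):
    """Extract (answer, confidence) pairs from the reasoner's raw output."""
    return [
        (m.group("answer"), float(m.group("conf")))
        for m in ANSWER_RE.finditer(text)
    ]


raw = "A: Spike [Confidence: 0.5]\nA: Belle [Confidence: 0.6]"
parsed = parse_answers(raw)
best = max(parsed, key=lambda pair: pair[1])
print(parsed)
print("highest confidence:", best)
```

Keeping all parsed pairs, rather than only `best`, preserves the information that partial abstention relies on.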

### Key Observations

* **Dual Question Example:** The diagram uses two related questions ("brother" and "sister") to illustrate the pipeline's operation.

* **Confidence Flow:** Confidence scores (`p(A|z)`) are generated, used in prompts, and then output by the LLM, showing an end-to-end confidence-aware process.

* **Visual Coding:** Colors are used consistently: pink for the LM-based Evidence Generator, light blue/orange/green for entity types in the evidence legend, and yellow/green icons for answer confidence.

* **Graph Complexity:** The KG is a small, focused subgraph of the Peanuts universe, sufficient to demonstrate the sibling relationships needed to answer the sample questions.

* **Black-Box LLM:** The LLM Reasoner is explicitly labeled as a "Black-box," indicating the pipeline is designed to work with various underlying language models.

### Interpretation

This diagram presents a framework for making AI question-answering systems more reliable and interpretable by grounding their responses in structured knowledge (a KG) and explicit reasoning paths. The core innovation is the integration of **confidence-calibrated evidence** directly into the prompt for a large language model.

* **Problem Addressed:** It tackles the issue of LLMs generating plausible but factually incorrect answers ("hallucinations") by forcing them to consider and weight specific evidence trails from a trusted knowledge source.

* **Mechanism:** The system doesn't just retrieve facts; it retrieves *reasoning paths* (e.g., "Snoopy has a sibling who is male") and their associated confidence. This allows the LLM to perform a form of weighted inference.

* **Significance of Partial Abstention:** The output for "Snoopy's sister" is particularly telling. Instead of incorrectly asserting Spike is the sister or guessing, the system presents both sibling candidates with confidence scores. This "partial abstention" is a crucial feature for high-stakes applications, as it transparently communicates uncertainty and allows a human or downstream system to make the final judgment.

* **Pipeline Synergy:** The left column (SFT, RL, Evidence Generator) suggests the evidence generation component itself is trained and refined, likely to produce more relevant and accurate evidence paths (`z`) for a given question (`Q`). This creates a closed-loop system where the reasoning process improves over time.

In essence, the diagram depicts a move from opaque, end-to-end question answering towards a more transparent, evidence-based, and confidence-aware reasoning architecture.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Multi-Stage Question Answering System with Knowledge Graph Integration

### Overview

The diagram illustrates a complex question-answering pipeline combining supervised learning, reinforcement learning, knowledge graph reasoning, and large language model (LLM) inference. It shows data flow between components including supervised fine-tuning (SFT), reinforcement learning (RL), LM-based evidence generation, knowledge graph (KG) calibration, and LLM reasoning with confidence scoring.

### Components/Axes

1. **Supervised Fine-Tuning (SFT)**

- Input: Question (Q) + Context (z)

- Output: Probability distribution p(A|z)

- Visual: Pink box with "Q → z, p(A|z)" notation

2. **Reinforcement Learning (RL)**

- Input: Question (Q)

- Output: Reward signal + p(A|z)

- Visual: Blue box with "Q → Rewards → p(A|z)"

3. **LM-based Evidence Generator (Proxy)**

- Input: Question (Q)

- Output: Evidence path probabilities (p(A|z))

- Visual: Red box with "LM-based Evidence Generator (Proxy)" label

4. **KG Evidence with Bayesian Calibration**

- Contains:

- Knowledge Graph (KG) with nodes (characters, relationships)

- Bayesian calibration scores (p(A|z))

- Visual: Central yellow circle with KG nodes and probability annotations

5. **LLM Reasoner (Black-box)**

- Input: Question + Evidence paths

- Output: Final answer with confidence scores

- Visual: Gray box labeled "LLM Reasoner (Black-box)"

### Detailed Analysis

**Key Textual Elements:**

- **KG Nodes & Relationships:**

- Characters: Spike, Snoopy, Belle, Peanuts

- Relationships: SiblingOf, Gender, Profession

- Notable: "Snoopy's sister" question with 0.5 probability

- **Confidence Scores:**

- Spike: 0.3 (Character), 0.5 (SiblingOf)

- Snoopy: 0.75 (Gender)

- Belle: 0.5 (SiblingOf)

- Partial Abstention threshold: 0.5 confidence

- **Process Flow:**

1. SFT → RL → LM Evidence Generator

2. KG Evidence → Bayesian Calibration

3. Combined evidence → LLM Reasoner

4. Confidence scoring → Partial Abstention decision

**Spatial Grounding:**

- KG central position (top-right)

- SFT/RL in upper-left quadrant

- LM Evidence Generator in lower-left

- LLM Reasoner in bottom-right

- Confidence scores color-coded (green/yellow)

### Key Observations

1. **Confidence Thresholding:**

- 0.5 confidence score acts as decision boundary

- Spike's 0.5 confidence for "SiblingOf" relationship triggers partial abstention

2. **Knowledge Graph Integration:**

- KG provides structured relationships (SiblingOf, Gender)

- Bayesian calibration refines probabilities from LM

3. **Multi-Stage Reasoning:**

- SFT provides initial probabilities

- RL optimizes reward-based paths

- LM Evidence Generator creates reasoning paths

- KG adds factual constraints

- LLM combines all evidence with confidence scoring

### Interpretation

This system demonstrates a hybrid approach to question answering that:

1. **Combines Multiple Evidence Sources:**

- Statistical patterns (SFT/RL)

- Factual knowledge (KG)

- Language model reasoning (LLM)

2. **Implements Confidence-Aware Decision Making:**

- Partial abstention mechanism prevents low-confidence answers

- Thresholding at 0.5 confidence score

3. **Uses KG for Factual Grounding:**

- Relationships like "SiblingOf" provide critical constraints

- Bayesian calibration improves probability estimates

4. **Reinforcement Learning Optimization:**

- Reward signals refine answer selection

- Creates feedback loop between LM and KG

The partial abstention at 0.5 confidence suggests the system prioritizes accuracy over completeness, potentially missing answers when confidence is insufficient. The KG's central position indicates its critical role in providing factual constraints to the otherwise probabilistic LM-based reasoning.
