## Diagram: Knowledge Graph Reasoning Process

### Overview

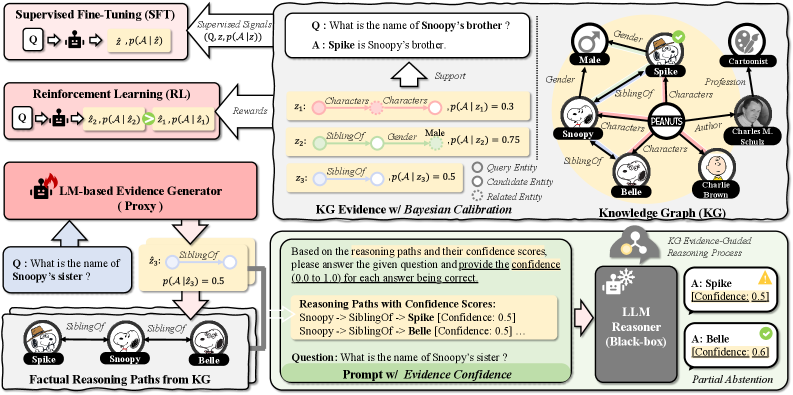

The image illustrates a knowledge graph reasoning process, incorporating supervised fine-tuning (SFT), reinforcement learning (RL), and a language model (LM)-based evidence generator to answer questions about relationships within a knowledge graph. The process involves querying the knowledge graph, generating reasoning paths, and calibrating confidence scores to arrive at an answer.

### Components

* **Supervised Fine-Tuning (SFT)**: A stage in which the model is trained on labeled data. The input is a question (Q), and the output is a predicted reasoning path (z-hat) together with the associated answer probability p(A | z-hat).

  * Supervised Signals: (Q, z, p(A | z))

* **Reinforcement Learning (RL)**: A stage in which the model learns from rewards. The input is a question (Q), and the output is a reasoning path (z2) with its answer probability p(A | z2), which is then refined to z1 and p(A | z1).

  * Rewards: Feedback signal for the RL process.

* **LM-based Evidence Generator (Proxy)**: A component that generates evidence based on the input question.

* **Factual Reasoning Paths from KG**: Reasoning paths derived from the knowledge graph.

* **KG Evidence w/ Bayesian Calibration**: A process that calibrates the evidence from the knowledge graph using Bayesian methods.

* **Knowledge Graph (KG)**: A graph representing entities and their relationships.

  * Entities: Male, Spike, Snoopy, Belle, Charlie Brown, Charles M. Schulz

  * Relationships: Gender, SiblingOf, Characters, Profession, Author

* **Prompt w/ Evidence Confidence**: The question prompt along with the confidence scores of the reasoning paths.

* **LLM Reasoner (Black-box)**: A language model reasoner that processes the evidence and provides an answer.

  * KG Evidence-Guided Reasoning Process

* **Partial Abstention**: The final output stage. As the name suggests, the model presumably commits only to answers whose confidence is sufficient and withholds the rest.

* **Legend**:

  * Query Entity: Represented by a circle.

  * Candidate Entity: Represented by a dotted circle.

  * Related Entity: Represented by a filled circle.
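
The knowledge graph component listed above can be sketched as a set of (head, relation, tail) triples, with a reasoning path expressed as a sequence of relations followed from the query entity. This is a minimal illustration, not the diagram's actual implementation: the names `TRIPLES`, `build_index`, and `follow_path` are hypothetical, the edge direction is a choice made here, and the `Gender` edge is an assumption added so that a two-hop path has something to traverse.

```python
from collections import defaultdict

# (head, relation, tail) triples. The SiblingOf edges use entities and relations
# named in the diagram; the Gender edge is an ASSUMPTION made for illustration.
TRIPLES = [
    ("Snoopy", "SiblingOf", "Spike"),
    ("Snoopy", "SiblingOf", "Belle"),
    ("Spike", "Gender", "Male"),  # assumed edge
]


def build_index(triples):
    """Map (head, relation) to the list of tails reachable in one hop."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[(head, relation)].append(tail)
    return index


def follow_path(index, start, relations):
    """Return every entity sequence obtained by following `relations` in order."""
    paths = [[start]]
    for relation in relations:
        paths = [
            path + [tail]
            for path in paths
            for tail in index.get((path[-1], relation), [])
        ]
    return paths


index = build_index(TRIPLES)
one_hop = follow_path(index, "Snoopy", ["SiblingOf"])
two_hop = follow_path(index, "Snoopy", ["SiblingOf", "Gender"])
```

In this sketch the one-hop path reaches both siblings, while the two-hop path keeps only the sibling that has a `Gender` edge, which is one way a longer relation path can narrow the candidate set.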

### Detailed Analysis

* **Example Question 1**: "What is the name of Snoopy's brother?"

  * Answer: "Spike is Snoopy's brother."

  * Support:

    * z1: Characters -> Characters, p(A | z1) = 0.3 (red)

    * z2: SiblingOf -> Gender -> Male, p(A | z2) = 0.75 (green)

    * z3: SiblingOf, p(A | z3) = 0.5 (gray)

* **Example Question 2**: "What is the name of Snoopy's sister?"

  * z3: SiblingOf, p(A | z3) = 0.5 (gray)

* **Factual Reasoning Paths from KG**:

  * Spike -> SiblingOf -> Snoopy

  * Snoopy -> SiblingOf -> Belle

* **Reasoning Paths with Confidence Scores**:

  * Snoopy -> SiblingOf -> Spike [Confidence: 0.5]

  * Snoopy -> SiblingOf -> Belle [Confidence: 0.5]

* **LLM Reasoner Output**:

  * A: Spike [Confidence: 0.5] (Incorrect)

  * A: Belle [Confidence: 0.6] (Correct)
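
One possible reading of the partial abstention step is a confidence threshold applied to the reasoner's candidate answers. The sketch below uses the two outputs listed above; the `Candidate` type, the `partial_abstention` function, and the threshold value of 0.55 are all hypothetical, since the diagram does not state how the abstention decision is made.

```python
from typing import List, NamedTuple, Tuple


class Candidate(NamedTuple):
    answer: str
    confidence: float


def partial_abstention(
    candidates: List[Candidate], threshold: float
) -> Tuple[List[Candidate], List[Candidate]]:
    """Split candidates into those kept and those the model abstains on."""
    kept = [c for c in candidates if c.confidence >= threshold]
    abstained = [c for c in candidates if c.confidence < threshold]
    return kept, abstained


# Confidence values taken from the LLM reasoner output in the diagram.
candidates = [Candidate("Spike", 0.5), Candidate("Belle", 0.6)]

# ASSUMPTION: 0.55 is an arbitrary threshold chosen only for illustration.
kept, abstained = partial_abstention(candidates, threshold=0.55)
```

With this assumed threshold, Belle is kept and Spike is withheld, which agrees with the correct and incorrect labels shown in the diagram; a different threshold would change the split.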

### Key Observations

* The diagram illustrates a multi-stage process for answering questions using a knowledge graph.

* The process involves supervised fine-tuning, reinforcement learning, and an LM-based evidence generator.

* The knowledge graph contains entities and their relationships, which are used to generate reasoning paths.

* The confidence scores of the reasoning paths are calibrated using Bayesian methods.

* The LLM reasoner processes the evidence and provides an answer with a confidence score.
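
The diagram does not say which Bayesian method produces the calibrated scores. As one illustrative possibility only, a Beta-Bernoulli model treats p(A | z) as the posterior mean of the chance that a relation path z leads to a correct answer, given how often it did so in past examples. The function name `beta_posterior_mean`, the uniform Beta(1, 1) prior, and every count below are assumptions invented for this sketch; they are not taken from the diagram.

```python
def beta_posterior_mean(successes, failures, prior_alpha=1.0, prior_beta=1.0):
    """Posterior mean of a Bernoulli success rate under a Beta prior."""
    total = successes + failures + prior_alpha + prior_beta
    return (successes + prior_alpha) / total


# HYPOTHETICAL (correct, incorrect) counts per relation path, made up for illustration.
path_counts = {
    "SiblingOf -> Gender -> Male": (6, 2),
    "Characters -> Characters": (1, 5),
    "SiblingOf": (0, 0),  # no observations: the score falls back to the prior mean
}

calibrated = {
    path: beta_posterior_mean(correct, incorrect)
    for path, (correct, incorrect) in path_counts.items()
}
```

Under these assumptions, a path with no observations receives the prior mean of 0.5, and paths with more supporting evidence move away from 0.5 in proportion to their track record.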

### Interpretation

The diagram shows an approach to knowledge graph reasoning that combines several techniques to improve the accuracy and reliability of answers. Supervised fine-tuning trains the model on labeled signals (Q, z, p(A | z)), and reinforcement learning further optimizes it using rewards. The LM-based evidence generator supplies reasoning paths as evidence for the LLM reasoner. Bayesian calibration is meant to make each path's confidence score reflect how likely that path is to support a correct answer; its purpose is not to make the model more confident. The example questions and reasoning paths show how the process works in practice. For the question about Snoopy's sister, the final output marks Belle as correct with a confidence of 0.6 and Spike as incorrect with a confidence of 0.5, so the margin between the two answers is modest.