
## Diagram: Knowledge Graph Evidence-Guided Reasoning Process

### Overview

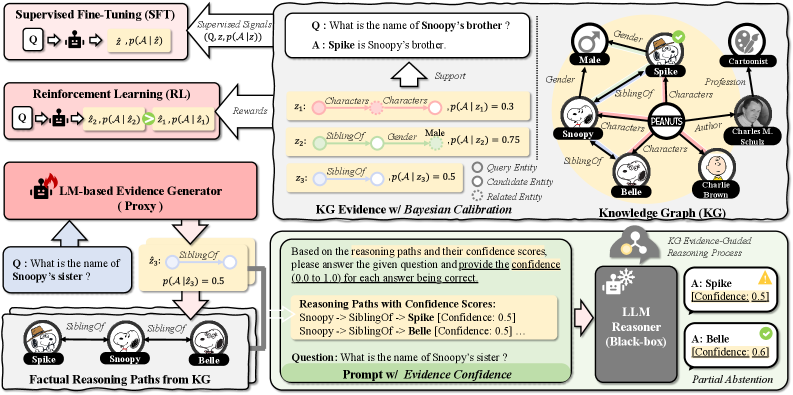

This diagram illustrates a knowledge graph (KG) evidence-guided reasoning process. It compares Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) as ways to train an LM-based evidence generator, and shows how KG evidence is used to calibrate reasoning paths and attach confidence scores to answers produced by a Large Language Model (LLM). The diagram is divided into five areas: SFT/RL comparison, KG Evidence w/ Bayesian Calibration, Knowledge Graph (KG), LLM Reasoner w/ Evidence Confidence, and Factual Reasoning Paths from KG.

### Components/Axes

The diagram contains several key components:

* **Supervised Fine-Tuning (SFT):** Input question (Q), reasoning path (z), probability of answer given reasoning path (p(A|z)), and supervised signals (Q, z, p(A|z)).

* **Reinforcement Learning (RL):** Input question (Q), reasoning path (z), probability of the answer given the reasoning path (p(A|z)), probability of the reasoning path given the answer (p(z|A)), and rewards.

* **LM-based Evidence Generator (Proxy):** Connects to both SFT and RL, and generates reasoning paths from questions.

* **KG Evidence w/ Bayesian Calibration:** Demonstrates how KG evidence is used to calculate probabilities of reasoning paths. Includes examples with probabilities p(A|z) = 0.3, 0.75, and 0.5.

* **Knowledge Graph (KG):** A visual representation of entities and relationships (e.g., Snoopy, Spike, Charlie Brown, siblings, gender, profession, author).

* **LLM Reasoner (Black-box):** Takes reasoning paths with confidence scores as input and outputs answers with confidence levels.

* **Prompt w/ Evidence Confidence:** Shows the input prompt to the LLM Reasoner and the resulting confidence scores for each answer.

### Detailed Analysis

**1. SFT/RL Comparison (Left Side):**

* **SFT:** The flow shows Q -> z -> p(A|z) with "Supervised Signals" feeding back into the process.

* **RL:** The flow shows Q -> z -> p(A|z) -> p(z|A) with "Rewards" feeding back into the process.

* **LM-based Evidence Generator (Proxy):** Receives questions like "What is the name of Snoopy's sister?" and outputs reasoning paths.

**2. KG Evidence w/ Bayesian Calibration (Top-Right):**

* **Question:** "What is the name of Snoopy's brother?"

* **Answer:** "Spike is Snoopy's brother."

* **Reasoning Path:** Characters -> Characters -> p(A|z) = 0.3

* **Reasoning Path:** Characters -> Gender -> p(A|z) = 0.75

* **Reasoning Path:** SiblingsOf -> Gender -> p(A|z) = 0.5
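The relationship between p(A|z) and p(z|A) can be made concrete with Bayes' rule. The following is a minimal sketch, not the method shown in the diagram: it assumes a uniform prior over the three listed paths (the diagram does not state a prior), and the function name `posterior_over_paths` is hypothetical.

```python
def posterior_over_paths(likelihoods, priors=None):
    """Apply Bayes' rule: p(z|A) is proportional to p(A|z) * p(z)."""
    if priors is None:
        # Assumption: uniform prior over reasoning paths.
        priors = {z: 1.0 / len(likelihoods) for z in likelihoods}
    joint = {z: likelihoods[z] * priors[z] for z in likelihoods}
    evidence = sum(joint.values())  # p(A), marginalised over the listed paths
    if evidence == 0:
        raise ValueError("all paths have zero probability")
    return {z: joint[z] / evidence for z in joint}


# p(A|z) values as listed for the three reasoning paths.
likelihoods = {
    "Characters -> Characters": 0.3,
    "Characters -> Gender": 0.75,
    "SiblingsOf -> Gender": 0.5,
}

posterior = posterior_over_paths(likelihoods)
for path, prob in posterior.items():
    print(f"{path}: p(z|A) = {prob:.3f}")
```

Under the uniform-prior assumption the posterior simply renormalises the listed values, so the path with the largest p(A|z) also receives the largest p(z|A).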

**3. Knowledge Graph (KG) (Center-Right):**

* Entities: Snoopy, Spike, Charlie Brown, Belle, Male, PEANUTS, Charles M. Schulz.

* Relationships: Gender, SiblingsOf, Cartoonist, Author, Profession, Characters.

* The graph visually connects these entities and relationships.
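A graph like this is commonly stored as (head, relation, tail) triples. The sketch below is illustrative only: the two `SiblingsOf` edges mirror reasoning paths named in this description, while the other edges, their directions, and the helper names `build_index` and `find_paths` are assumptions.

```python
from collections import defaultdict, deque

# Toy triples (head, relation, tail). Only the SiblingsOf edges are taken
# from the description; the remaining edges are assumed for illustration.
TRIPLES = [
    ("Snoopy", "SiblingsOf", "Spike"),
    ("Snoopy", "SiblingsOf", "Belle"),
    ("Spike", "Gender", "Male"),
    ("PEANUTS", "Characters", "Snoopy"),
    ("PEANUTS", "Author", "Charles M. Schulz"),
]


def build_index(triples):
    """Map each head entity to its outgoing (relation, tail) pairs."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[head].append((relation, tail))
    return index


def find_paths(index, start, goal, max_hops=2):
    """Breadth-first search for reasoning paths from start to goal."""
    paths = []
    queue = deque([(start, [start])])
    while queue:
        node, path = queue.popleft()
        hops = (len(path) - 1) // 2  # path alternates entity, relation, entity
        if node == goal and hops > 0:
            paths.append(" -> ".join(path))
            continue
        if hops == max_hops:
            continue
        for relation, tail in index.get(node, []):
            if tail not in path[::2]:  # skip entities already visited
                queue.append((tail, path + [relation, tail]))
    return paths


index = build_index(TRIPLES)
print(find_paths(index, "Snoopy", "Belle"))
print(find_paths(index, "PEANUTS", "Spike"))
```

The `max_hops` limit keeps the search bounded; a real KG would need a tighter budget or relation filtering, which this sketch does not attempt.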

**4. LLM Reasoner w/ Evidence Confidence (Bottom-Right):**

* **Prompt:** "What is the name of Snoopy's sister?"

* **Reasoning Paths with Confidence Scores:**

    * Snoopy -> SiblingsOf -> Spike [Confidence: 0.5]

    * Snoopy -> SiblingsOf -> Belle [Confidence: 0.6]

* **Answers with Confidence:**

    * A: Spike [Confidence: 0.5] (represented by a yellow triangle)

    * A: Belle [Confidence: 0.6] (represented by a yellow triangle)

* **Partial Abstention:** Indicates that the LLM may abstain from answering if confidence is low.
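One way to picture this stage is as prompt assembly followed by a confidence filter. The sketch below is an assumption-laden illustration: the prompt wording, the helper names `build_prompt` and `partial_abstention`, and the 0.55 threshold are all hypothetical, since the diagram gives neither a prompt template nor an abstention rule.

```python
def build_prompt(question, scored_paths):
    """Format a question plus reasoning paths annotated with confidence."""
    lines = [f"Question: {question}", "Evidence (reasoning path [confidence]):"]
    for path, confidence in scored_paths:
        lines.append(f"- {path} [Confidence: {confidence}]")
    lines.append("Answer with a confidence for each candidate.")
    return "\n".join(lines)


def partial_abstention(scored_answers, threshold):
    """Split candidate answers into kept and abstained by confidence."""
    kept = [(a, c) for a, c in scored_answers if c >= threshold]
    abstained = [(a, c) for a, c in scored_answers if c < threshold]
    return kept, abstained


# Paths and confidences as listed in this section.
scored_paths = [
    ("Snoopy -> SiblingsOf -> Spike", 0.5),
    ("Snoopy -> SiblingsOf -> Belle", 0.6),
]
prompt = build_prompt("What is the name of Snoopy's sister?", scored_paths)
print(prompt)

# Hypothetical threshold; the diagram does not specify one.
kept, abstained = partial_abstention([("Spike", 0.5), ("Belle", 0.6)], threshold=0.55)
print("kept:", kept)
print("abstained:", abstained)
```

With this threshold the reasoner would keep Belle and abstain on Spike; a different threshold changes that split, which is why the value has to be chosen per application.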

**5. Factual Reasoning Paths from KG (Bottom-Left):**

* **Question:** "What is the name of Snoopy's sister?"

* **Reasoning Path:** Spike -> SiblingsOf -> Snoopy with p(A|z) = 0.5

* **Reasoning Path:** Belle -> SiblingsOf -> Snoopy
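The diagram does not say how a value such as p(A|z) = 0.5 is obtained. One plausible reading, offered here only as an assumption, is the fraction of entities reached by a relation path that are correct answers. The helper names `follow` and `path_confidence` are hypothetical, and the edges are written from Snoopy outward for simplicity.

```python
from collections import defaultdict

# The two sibling edges named in this description, stored as triples.
TRIPLES = [
    ("Snoopy", "SiblingsOf", "Spike"),
    ("Snoopy", "SiblingsOf", "Belle"),
]


def follow(start, relations, triples):
    """Return the entities reached from start by following relations in order."""
    index = defaultdict(set)
    for head, relation, tail in triples:
        index[(head, relation)].add(tail)
    frontier = {start}
    for relation in relations:
        frontier = {t for h in frontier for t in index.get((h, relation), set())}
    return frontier


def path_confidence(start, relations, answers, triples):
    """Assumed estimate of p(A|z): share of reached entities that are answers."""
    reached = follow(start, relations, triples)
    if not reached:
        return 0.0
    return len(reached & set(answers)) / len(reached)


# SiblingsOf reaches both Spike and Belle; only Belle answers "sister".
confidence = path_confidence("Snoopy", ["SiblingsOf"], {"Belle"}, TRIPLES)
print(confidence)  # 0.5
```

The result happens to match the 0.5 shown for the sibling path, but that agreement should not be taken as confirmation of how the diagram's values were computed.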

### Key Observations

* The KG evidence provides probabilities for different reasoning paths, which are then used to calibrate the LLM's confidence in its answers.

* The LLM Reasoner outputs answers with associated confidence scores, allowing for partial abstention when confidence is low.

* The diagram highlights the difference between SFT and RL approaches, with SFT relying on supervised signals and RL relying on rewards.

* The probabilities p(A|z) range from 0.3 to 0.75 (0.3, 0.5, 0.75), indicating that the KG evidence supports some reasoning paths more strongly than others.

### Interpretation

The diagram depicts a pipeline for knowledge-grounded reasoning with LLMs. An LM-based evidence generator, trained with either SFT or RL, proposes reasoning paths; KG evidence with Bayesian calibration assigns each path a probability; and a black-box LLM reasoner receives the paths together with their confidence scores. The apparent aim is answers whose confidence reflects the supporting evidence. SFT and RL differ in their training signal: SFT uses supervised signals (Q, z, p(A|z)), while the RL flow additionally includes p(z|A) and is driven by rewards.

Partial abstention lets the reasoner withhold an answer when confidence is low rather than commit to a weakly supported one. Making the evidence and its uncertainty explicit in the prompt also makes the reasoning easier to inspect.

The diagram is a conceptual illustration. It contains no numerical data beyond the probabilities attached to reasoning paths, and it shows the reasoning *process* rather than quantitative results.