## Flowchart: Multi-Stage Question Answering System with Knowledge Graph Integration

### Overview

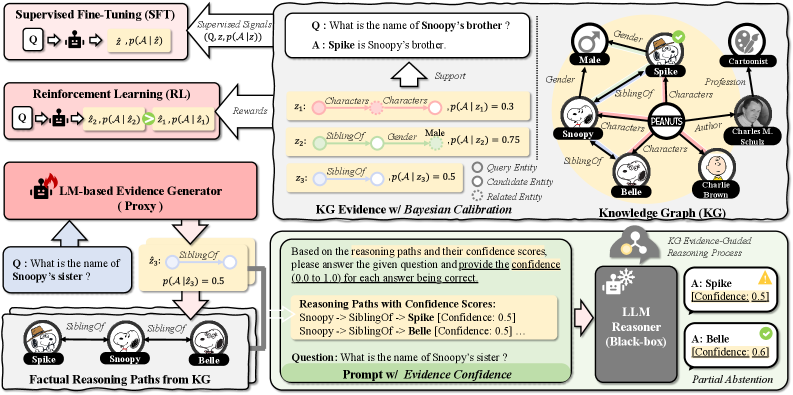

The diagram shows a multi-stage question-answering pipeline that combines supervised fine-tuning (SFT), reinforcement learning (RL), LM-based evidence generation, knowledge graph (KG) evidence with Bayesian calibration, and a black-box large language model (LLM) reasoner that outputs answers with confidence scores. It traces the data flow between these components.

### Components

1. **Supervised Fine-Tuning (SFT)**

- Input: Question (Q) + Context (z)

- Output: Probability distribution p(A|z)

- Visual: Pink box with "Q → z, p(A|z)" notation

2. **Reinforcement Learning (RL)**

- Input: Question (Q)

- Output: Reward signal + p(A|z)

- Visual: Blue box with "Q → Rewards → p(A|z)"

3. **LM-based Evidence Generator (Proxy)**

- Input: Question (Q)

- Output: Evidence path probabilities (p(A|z))

- Visual: Red box with "LM-based Evidence Generator (Proxy)" label

4. **KG Evidence with Bayesian Calibration**

- Contains:

  - Knowledge graph (KG) with character nodes and relationship edges

  - Bayesian calibration scores (p(A|z))

- Visual: Central yellow circle with KG nodes and probability annotations

5. **LLM Reasoner (Black-box)**

- Input: Question + Evidence paths

- Output: Final answer with confidence scores

- Visual: Gray box labeled "LLM Reasoner (Black-box)"

### Detailed Analysis

**Key Textual Elements:**

- **KG Nodes & Relationships:**

  - Entities: Spike, Snoopy, Belle, Peanuts

  - Relationships: SiblingOf, Gender, Profession

  - Example question: "Snoopy's sister", annotated with probability 0.5

- **Confidence Scores:**

  - Spike: 0.3 (Character), 0.5 (SiblingOf)

  - Snoopy: 0.75 (Gender)

  - Belle: 0.5 (SiblingOf)

  - Partial abstention threshold: 0.5

- **Process Flow:**

  1. SFT → RL → LM Evidence Generator

  2. KG Evidence → Bayesian Calibration

  3. Combined evidence → LLM Reasoner

  4. Confidence scoring → Partial Abstention decision
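
The confidence scores and the 0.5 threshold listed above can be turned into a small worked example. This is a minimal sketch, not the system's actual logic: the `ScoredEvidence` type, the `partial_abstain` helper, and the strict `>` comparison (so that a score of exactly 0.5 is abstained on) are assumptions that the diagram does not confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredEvidence:
    """One confidence annotation read off the diagram (hypothetical type)."""
    entity: str
    relation: str
    confidence: float


# Scores as listed under "Confidence Scores" above.
EVIDENCE = [
    ScoredEvidence("Spike", "Character", 0.3),
    ScoredEvidence("Spike", "SiblingOf", 0.5),
    ScoredEvidence("Snoopy", "Gender", 0.75),
    ScoredEvidence("Belle", "SiblingOf", 0.5),
]


def partial_abstain(evidence, threshold=0.5):
    """Split evidence into answered and abstained items.

    Assumption: an item is answered only if its confidence is strictly
    above the threshold, so a score of exactly 0.5 is abstained on.
    """
    answered = [e for e in evidence if e.confidence > threshold]
    abstained = [e for e in evidence if e.confidence <= threshold]
    return answered, abstained


answered, abstained = partial_abstain(EVIDENCE)
print([f"{e.entity}/{e.relation}" for e in answered])
# ['Snoopy/Gender']
print([f"{e.entity}/{e.relation}" for e in abstained])
# ['Spike/Character', 'Spike/SiblingOf', 'Belle/SiblingOf']
```

Under this reading, only the 0.75 score clears the threshold, which is what makes the abstention "partial": the system answers where it is confident and withholds the rest.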

**Spatial Grounding:**

- KG: top-right, visually central to the data flow

- SFT/RL in upper-left quadrant

- LM Evidence Generator in lower-left

- LLM Reasoner in bottom-right

- Confidence scores color-coded (green/yellow)

### Key Observations

1. **Confidence Thresholding:**

- A confidence score of 0.5 acts as the decision boundary

- Spike's confidence of 0.5 for the "SiblingOf" relationship sits at that boundary and triggers partial abstention

2. **Knowledge Graph Integration:**

- KG provides structured relationships (SiblingOf, Gender)

- Bayesian calibration refines probabilities from LM

3. **Multi-Stage Reasoning:**

- SFT provides initial probabilities

- RL optimizes reward-based paths

- LM Evidence Generator creates reasoning paths

- KG adds factual constraints

- LLM combines all evidence with confidence scoring
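
The diagram does not state the calibration formula, so the following is only a sketch of one way Bayesian calibration could refine an LM probability: treat the LM proxy's probability as a prior and update it with Bayes' rule depending on whether the KG supports the evidence path. The function name, the two likelihood parameters, and all numbers are hypothetical.

```python
def bayes_calibrate(prior, p_support_if_correct, p_support_if_wrong, kg_supports):
    """Posterior P(answer correct | KG observation) via Bayes' rule.

    prior                 -- LM proxy probability that the answer is correct
    p_support_if_correct  -- P(KG supports path | answer correct)
    p_support_if_wrong    -- P(KG supports path | answer wrong)
    kg_supports           -- whether the KG actually supports the path
    All parameters are illustrative assumptions, not values from the diagram.
    """
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must be a probability")
    if kg_supports:
        like_correct, like_wrong = p_support_if_correct, p_support_if_wrong
    else:
        like_correct = 1.0 - p_support_if_correct
        like_wrong = 1.0 - p_support_if_wrong
    # Total probability of the observation (the normalizing constant).
    evidence = like_correct * prior + like_wrong * (1.0 - prior)
    if evidence == 0.0:
        raise ValueError("observation has zero probability under the model")
    return like_correct * prior / evidence


# An uncertain prior of 0.5 moves up when the KG supports the path
# and down when it does not.
up = bayes_calibrate(0.5, 0.9, 0.3, kg_supports=True)
down = bayes_calibrate(0.5, 0.9, 0.3, kg_supports=False)
print(round(up, 3), round(down, 3))  # 0.75 0.125
```

The point of the sketch is the direction of the update: KG agreement raises the calibrated probability and KG disagreement lowers it, so the downstream threshold operates on evidence-adjusted scores rather than raw LM outputs.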

### Interpretation

This system demonstrates a hybrid approach to question answering that:

1. **Combines Multiple Evidence Sources:**

- Statistical patterns (SFT/RL)

- Factual knowledge (KG)

- Language model reasoning (LLM)

2. **Implements Confidence-Aware Decision Making:**

- The partial abstention mechanism withholds low-confidence answers

- The threshold is a confidence score of 0.5

3. **Uses KG for Factual Grounding:**

- Relationships like "SiblingOf" provide critical constraints

- Bayesian calibration improves probability estimates

4. **Applies Reinforcement Learning Optimization:**

- Reward signals refine answer selection

- Creates a feedback loop between the LM and the KG

The partial abstention at 0.5 confidence suggests the system prioritizes accuracy over completeness, at the cost of leaving some questions unanswered when confidence is insufficient. The KG's central position indicates its critical role in providing factual constraints to the otherwise probabilistic LM-based reasoning.
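
To make the accuracy-versus-completeness trade-off concrete, the sketch below computes coverage (the fraction of items the system would answer) at several thresholds, using the four confidence scores listed earlier. The `coverage` helper and the strict `>` comparison are assumptions, and the diagram gives no accuracy figures, so only the completeness side is shown.

```python
# Confidence scores from the diagram: Spike (Character), Spike (SiblingOf),
# Snoopy (Gender), Belle (SiblingOf).
SCORES = [0.3, 0.5, 0.75, 0.5]


def coverage(scores, threshold):
    """Fraction of items answered, assuming an item is answered only when
    its confidence is strictly above the threshold (an assumption)."""
    if not scores:
        return 0.0
    return sum(s > threshold for s in scores) / len(scores)


for t in (0.25, 0.5, 0.75):
    print(f"threshold={t:.2f} coverage={coverage(SCORES, t):.2f}")
# threshold=0.25 coverage=1.00
# threshold=0.50 coverage=0.25
# threshold=0.75 coverage=0.00
```

Raising the threshold from 0.25 to 0.5 drops coverage from all four items to one, which illustrates how quickly a stricter threshold trades answered questions for confidence.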